Semi-supervised GAN for Classification of Multispectral Imagery Acquired by UAVs

Unmanned aerial vehicles (UAVs) are used in precision agriculture (PA) to enable aerial monitoring of farmlands. Intelligent methods are required to pinpoint weed infestations and make an optimal choice of pesticide. A UAV can carry a multispectral camera and collect data. However, the classification of multispectral images using supervised machine learning algorithms such as convolutional neural networks (CNNs) requires a large amount of training data. This is a common drawback in deep learning, which we try to circumvent by using a semi-supervised generative adversarial network (GAN) that provides a pixel-wise classification for all the acquired multispectral images. Our algorithm consists of a generator network that provides photo-realistic images as extra training data to a multi-class classifier, which acts as a discriminator and is trained on small amounts of labeled data. The performance of the proposed method is evaluated on the weedNet dataset, which consists of multispectral crop and weed images collected by a micro aerial vehicle (MAV). The proposed semi-supervised GAN achieves high classification accuracy and demonstrates the potential of GAN-based methods for the challenging task of multispectral image classification.

💡 Research Summary

The paper addresses the challenge of classifying multispectral UAV imagery for precision agriculture when only a limited amount of pixel‑wise labeled data is available. Traditional supervised CNN approaches require large annotated datasets, which are costly and time‑consuming to acquire, especially for multispectral data that includes several spectral bands. To overcome this limitation, the authors propose a semi‑supervised Generative Adversarial Network (GAN) that simultaneously generates realistic multispectral images and learns a pixel‑wise multi‑class classifier.

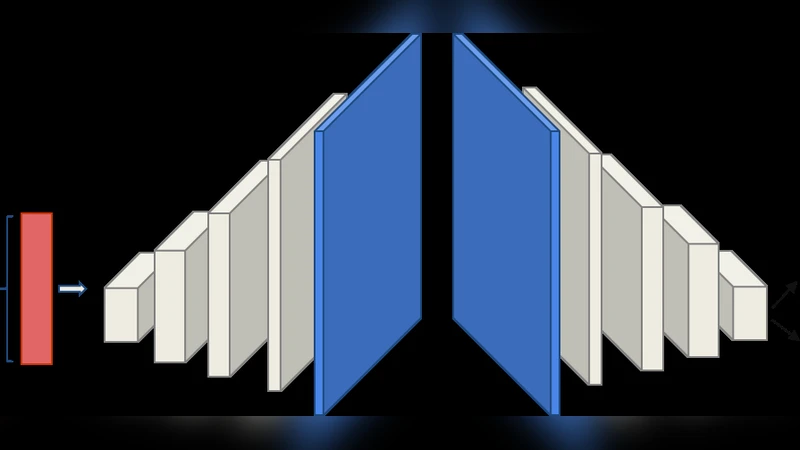

In the proposed architecture, the generator receives a 100‑dimensional noise vector drawn from a uniform distribution and passes it through four convolutional layers (with 256, 128, 64, and 32 feature maps) to synthesize a single‑channel (or three‑channel) image. The discriminator is a fully convolutional network derived from the DCGAN design, but instead of a binary sigmoid output it ends with a softmax layer that predicts probabilities for n real classes (here crop, weed, and background) plus one additional “fake” class. This modification turns the discriminator into a multi‑class pixel classifier while preserving the adversarial training dynamics. Both networks use batch normalization and are optimized with Adam (learning rate = 0.0002, β1 = 0.5); the discriminator uses LeakyReLU activations and the generator uses ReLU. No data augmentation or post‑processing is applied.
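To make the (n + 1)-class output concrete, the following is a minimal sketch of how one pixel's logits could be turned into a class prediction and a real-versus-fake probability. The class order, the helper names `softmax` and `classify_pixel`, and the example logits are illustrative assumptions, not taken from the paper.

```python
import math

# Assumed class order: three real classes followed by the extra "fake" class.
CLASSES = ["crop", "weed", "background", "fake"]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify_pixel(logits):
    """Return (most likely real class, probability that the pixel is real)."""
    probs = softmax(logits)
    p_real = 1.0 - probs[-1]      # everything that is not "fake"
    real_probs = probs[:-1]       # restrict the argmax to the real classes
    best = max(range(len(real_probs)), key=real_probs.__getitem__)
    return CLASSES[best], p_real


# Hypothetical logits for a single pixel.
label, p_real = classify_pixel([2.0, 0.5, 0.1, -1.0])
print(label, round(p_real, 3))
```

Reading the real-versus-fake decision off the same softmax is what lets a single network serve as both GAN discriminator and pixel classifier.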

Training leverages three data sources: (1) a small subset of the dataset with pixel‑wise annotations (30 %–50 % of the images), (2) unlabeled images, and (3) synthetic images produced by the generator. The loss function for the discriminator combines the standard GAN adversarial term with a supervised cross‑entropy term for the labeled pixels, encouraging the network to learn discriminative features from both real and synthetic data. The generator is trained to fool the discriminator while also producing images that resemble the true multispectral distribution.
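The summary does not spell out the exact loss, so the sketch below uses one common formulation for (n + 1)-class discriminators: cross-entropy on labeled pixels, a "not fake" term on unlabeled real pixels, and a "fake" term on generated pixels. The equal weighting of the three terms, the function names, and the example probability vectors are all assumptions made for illustration.

```python
import math

FAKE = -1  # the extra "fake" class is assumed to be the last softmax output


def supervised_loss(probs, label):
    """Cross-entropy for a labeled pixel: -log p(true class)."""
    return -math.log(probs[label])


def real_loss(probs):
    """Unlabeled real pixel: should NOT be assigned to the fake class."""
    return -math.log(1.0 - probs[FAKE])


def fake_loss(probs):
    """Generated pixel: should be assigned to the fake class."""
    return -math.log(probs[FAKE])


def discriminator_loss(labeled, unlabeled, generated):
    """Sum of the three mean terms, equally weighted (an assumption)."""
    sup = sum(supervised_loss(p, y) for p, y in labeled) / len(labeled)
    real = sum(real_loss(p) for p in unlabeled) / len(unlabeled)
    fake = sum(fake_loss(p) for p in generated) / len(generated)
    return sup + real + fake


def generator_loss(generated):
    """The generator wants its pixels to be judged as not fake."""
    return sum(real_loss(p) for p in generated) / len(generated)


# Hypothetical softmax outputs over (crop, weed, background, fake).
labeled = [([0.7, 0.2, 0.05, 0.05], 0)]   # (probabilities, true class index)
unlabeled = [[0.1, 0.6, 0.2, 0.1]]
generated = [[0.1, 0.1, 0.1, 0.7]]

d_loss = discriminator_loss(labeled, unlabeled, generated)
g_loss = generator_loss(generated)
print(round(d_loss, 4), round(g_loss, 4))
```

In this formulation the unlabeled and synthetic images contribute only through the real-versus-fake terms, while class labels enter solely through the supervised term.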

The method is evaluated on the WeedNet dataset, which consists of aerial multispectral captures of a sugar beet field taken with a 4‑band Sequoia camera. Only the Near‑Infrared (NIR, 790 nm) and Red (660 nm) bands are used, together with an NDVI layer derived from them. Experiments vary the input channels (Red, NIR, Red+NIR, Red+NIR+NDVI) and the proportion of labeled data (50 %, 40 %, 30 %). Using both the Red and NIR channels yields the highest F1 scores (≈ 0.85 with 50 % labeled data). Adding NDVI does not improve performance, likely because NDVI is computed entirely from those two bands, as the normalized difference (NIR − Red)/(NIR + Red), and therefore provides no independent information. Reducing the labeled portion to 30 % only modestly decreases the F1 score (to around 0.80), which suggests that the semi‑supervised approach is robust to a smaller labeled set.
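As a small illustration of the two quantities used in this evaluation, the sketch below computes NDVI from the standard definition (NIR − Red)/(NIR + Red) and an F1 score from true-positive, false-positive, and false-negative counts. The reflectance values and pixel counts are made up for the example and are not results from the paper; the `eps` guard is an added assumption to avoid division by zero.

```python
def ndvi(nir, red, eps=1e-8):
    """Normalized Difference Vegetation Index for one pixel."""
    return (nir - red) / (nir + red + eps)


def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall; 0.0 when undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical reflectances: vegetation reflects strongly in NIR, soil less so.
vegetation = ndvi(nir=0.5, red=0.1)
soil = ndvi(nir=0.3, red=0.25)

# Hypothetical per-class pixel counts for the "weed" class.
weed_f1 = f1_score(tp=80, fp=20, fn=10)
print(round(vegetation, 3), round(soil, 3), round(weed_f1, 3))
```

Because NDVI is a fixed function of the Red and NIR values, a network that already receives both bands can in principle learn the same feature on its own.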

The authors conclude that the semi‑supervised GAN can achieve competitive classification accuracy with substantially fewer labeled samples than fully supervised CNNs such as the encoder‑decoder cascade used in the original WeedNet paper. They suggest future work to (i) test the framework on datasets with more spectral bands (e.g., Red‑Edge, Green, Blue), (ii) improve the spectral fidelity of generated images, and (iii) integrate post‑processing techniques such as Conditional Random Fields to refine segmentation boundaries. Overall, the study showcases the potential of adversarial learning for cost‑effective, high‑precision weed detection in UAV‑based precision farming.
