Sherlock: A Deep Learning Approach to Semantic Data Type Detection

Correctly detecting the semantic type of data columns is crucial for data science tasks such as automated data cleaning, schema matching, and data discovery. Existing data preparation and analysis systems rely on dictionary lookups and regular expression matching to detect semantic types. However, these matching-based approaches often are not robust to dirty data and only detect a limited number of types. We introduce Sherlock, a multi-input deep neural network for detecting semantic types. We train Sherlock on $686,765$ data columns retrieved from the VizNet corpus by matching $78$ semantic types from DBpedia to column headers. We characterize each matched column with $1,588$ features describing the statistical properties, character distributions, word embeddings, and paragraph vectors of column values. Sherlock achieves a support-weighted F$_1$ score of $0.89$, exceeding that of machine learning baselines, dictionary and regular expression benchmarks, and the consensus of crowdsourced annotations.

💡 Research Summary

The paper introduces Sherlock, a deep learning system that automatically detects the semantic type of data columns, a task that underpins data‑science workflows such as automated cleaning, schema matching, and data discovery. Traditional data‑preparation tools rely on handcrafted regular expressions or dictionary look‑ups, which are brittle in the presence of dirty data and support only a limited set of types. Sherlock addresses these shortcomings by learning from a large corpus of real‑world tables and by representing each column with a rich feature vector drawn from several feature groups.

Data collection and labeling

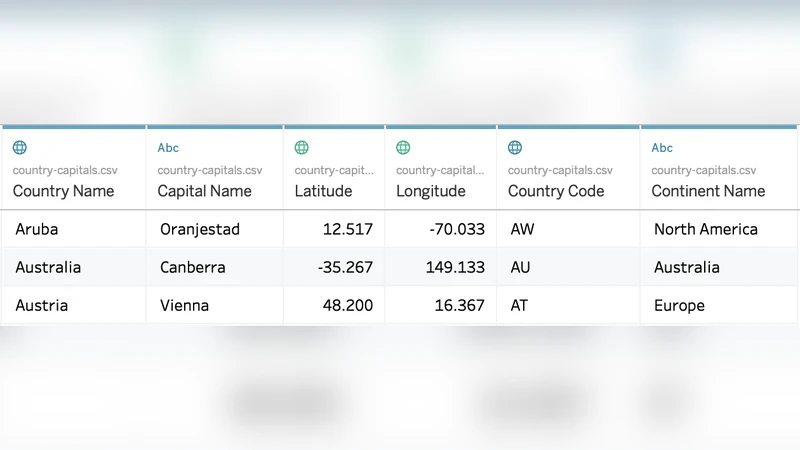

The authors start from the T2Dv2 Gold Standard, which maps 275 DBpedia properties to column headers. They collapse these into 78 high‑level semantic types that are common across public datasets. Using the VizNet repository (a large aggregation of tables harvested from web visualisation platforms and open data portals), they extract columns whose headers exactly match the type names, comparing case‑insensitively and treating multi‑word types as concatenated. This process yields 686,765 labeled columns. The authors manually verify that the matched columns are of high quality, which is the basis for treating the header as the ground‑truth label.
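The header‑matching rule described above can be sketched in a few lines. This is a minimal illustration, not the authors' pipeline: the type list is a small illustrative subset, and the normalisation (lowercase, whitespace removed) is an assumption about what "case‑insensitive, concatenated" means in practice.

```python
from typing import Optional

# Illustrative subset only; the full list of 78 types is not reproduced here.
SEMANTIC_TYPES = ["name", "country", "birth date", "address"]


def normalize(text: str) -> str:
    """Lowercase and drop all whitespace, so 'Birth Date' and 'birthdate' compare equal."""
    return "".join(text.lower().split())


# Map each normalised type name back to its canonical spelling.
TYPE_LOOKUP = {normalize(t): t for t in SEMANTIC_TYPES}


def label_from_header(header: str) -> Optional[str]:
    """Return the semantic type whose name exactly matches the header, else None."""
    return TYPE_LOOKUP.get(normalize(header))
```

Under this rule a header such as `BirthDate` is labeled `birth date`, while `country code` stays unlabeled because only exact matches after normalisation count.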

Feature engineering

Each column is transformed into a fixed‑length vector of 1,588 features grouped into four categories:

- Global statistics (27 features) – entropy, cardinality, fraction of numeric characters, mean length, etc., capturing high‑level distributional properties.

- Character‑level distributions (960 features) – counts of all 96 printable ASCII characters per value, aggregated with ten statistical functions (mean, variance, min, max, skewness, kurtosis, etc.). This mirrors the information used by regular‑expression based tools.

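- Pre‑trained word embeddings (201 features) – 50‑dimensional GloVe vectors for each token; column‑level aggregates (mean, median, mode, variance) are computed across all values, giving 200 features, plus one binary feature indicating whether any embeddings were found for the column. With this feature the four groups sum to the stated 1,588.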

- Self‑trained paragraph vectors (400 features) – using the Distributed Bag‑of‑Words version of Paragraph Vector (PV‑DBOW). Each column is treated as a “document” and its values as “words”, producing a dense representation of the column’s topical content.

These features provide both syntactic cues (character patterns) and semantic cues (word meanings, topic distributions).
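As a rough illustration of the first two feature groups, the sketch below computes per‑character count aggregates and a few global statistics with the Python standard library. It is a simplified stand‑in under stated assumptions: it uses the 95 printable ASCII code points (32 to 126) rather than the 96‑character alphabet mentioned above, whose exact composition is not given here, and it applies six aggregates instead of ten. The function names are hypothetical.

```python
import math
import statistics
from collections import Counter

# Assumption: printable ASCII code points 32..126 (95 characters).
ALPHABET = [chr(code) for code in range(32, 127)]

# Assumption: six of the aggregate functions; the summary lists ten.
AGGREGATES = (statistics.fmean, statistics.pvariance, min, max, statistics.median, sum)


def char_distribution_features(values):
    """Count each character in every value, then aggregate the counts per character.

    Output layout is aggregate-major: index = aggregate_index * 95 + character_index.
    Expects a non-empty list of strings.
    """
    per_char_counts = [[v.count(ch) for v in values] for ch in ALPHABET]
    return [float(agg(counts)) for agg in AGGREGATES for counts in per_char_counts]


def global_statistics(values):
    """A few column-level statistics: value entropy, cardinality, mean value length."""
    frequency = Counter(values)
    total = len(values)
    entropy = -sum((n / total) * math.log2(n / total) for n in frequency.values())
    return {
        "entropy": entropy,
        "cardinality": len(frequency),
        "mean_length": statistics.fmean([len(v) for v in values]),
    }
```

For a date‑like column, the hyphen count has a high mean and zero variance across values, which is the kind of syntactic signature a regular expression would otherwise encode by hand.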

Model architecture

Sherlock is a multi‑input neural network. Each feature group is fed into a dedicated sub‑network: dense layers for global statistics, convolutional‑plus‑pooling layers for character distributions, and fully‑connected layers for the embedding vectors. The sub‑network outputs are concatenated and passed through two fully‑connected layers with ReLU activations, batch normalization, and dropout (0.5). The final softmax layer produces a probability distribution over the 78 semantic types. Training uses 60 % of the data, with 20 % for validation and 20 % for testing. Optimization is performed with Adam (learning rate = 1e‑3) and early stopping based on validation loss.
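The multi‑input idea (one branch per feature group, concatenation, then a shared classifier with a softmax over 78 types) can be shown with a forward pass in plain Python. This is a shape‑level sketch only: the group sizes are toy values, the weights are random and untrained, every branch is a single dense layer, and batch normalization, dropout, and training are omitted. The class and helper names are hypothetical.

```python
import math
import random

# Toy input sizes for the four feature groups (not the real dimensions).
TOY_GROUP_SIZES = {"global": 4, "char": 12, "word": 6, "paragraph": 8}


def dense(x, weights, bias):
    """Fully connected layer: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    return [max(0.0, v) for v in x]


def softmax(x):
    peak = max(x)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in x]
    total = sum(exps)
    return [e / total for e in exps]


def init_layer(rng, n_in, n_out):
    """Random weights scaled by 1/sqrt(n_in), zero biases."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class MultiInputNet:
    def __init__(self, group_sizes, hidden=16, n_types=78, seed=0):
        rng = random.Random(seed)
        # One dedicated branch per feature group.
        self.branches = {name: init_layer(rng, size, hidden) for name, size in group_sizes.items()}
        # Shared layers operating on the concatenated branch outputs.
        self.merge = init_layer(rng, hidden * len(group_sizes), hidden)
        self.out = init_layer(rng, hidden, n_types)

    def forward(self, inputs):
        merged = []
        for name, (weights, bias) in self.branches.items():
            merged.extend(relu(dense(inputs[name], weights, bias)))
        hidden = relu(dense(merged, *self.merge))
        return softmax(dense(hidden, *self.out))
```

Calling `MultiInputNet(TOY_GROUP_SIZES).forward(...)` with one list per group returns 78 probabilities that sum to one; in practice a framework such as PyTorch or Keras would replace these hand‑written layers.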

Evaluation

Performance is measured with a support‑weighted F1 score and per‑class precision/recall. Baselines include (i) a decision‑tree classifier, (ii) a random‑forest classifier, (iii) two matching‑based systems that emulate commercial tools (regular‑expression and dictionary look‑ups), and (iv) the consensus of crowdsourced annotations. Sherlock achieves a weighted F1 of 0.89, surpassing all baselines. It excels on textual types such as “name”, “country”, and “date” (F1 > 0.92) while still performing well on numeric or code‑like types (F1 ≈ 0.85). Types with highly heterogeneous or sparse values (e.g., “topic”, “keyword”) see lower scores (~0.70), highlighting areas for future improvement.
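For readers unfamiliar with the metric, a support‑weighted F1 score averages the per‑class F1 scores, weighting each class by its share of the true labels. The sketch below is a minimal standard‑library implementation of that definition; the function name is hypothetical and it is not the authors' evaluation code.

```python
from collections import Counter


def support_weighted_f1(y_true, y_pred):
    """Average per-class F1, weighted by each class's number of true instances."""
    support = Counter(y_true)
    predicted = Counter(y_pred)
    true_positives = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    total = len(y_true)

    score = 0.0
    for label, n in support.items():
        precision = true_positives[label] / predicted[label] if predicted[label] else 0.0
        recall = true_positives[label] / n
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        score += (n / total) * f1
    return score
```

Because frequent types carry more weight, a model can reach a high weighted score while still doing poorly on rare types, which is why per‑class precision and recall are reported alongside it.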

Feature importance and error analysis

A decision‑tree analysis reveals that character‑distribution features (e.g., presence of hyphens, slashes) and global statistics (entropy, uniqueness) are the strongest predictors, confirming the intuition that many semantic types have distinctive syntactic signatures. Word‑embedding and paragraph‑vector features contribute primarily to semantic discrimination among types with overlapping character patterns. An error‑reject curve shows that deferring low‑confidence predictions to human review can raise overall system accuracy by 5–7 %, suggesting a practical hybrid workflow.
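One point on an error‑reject curve can be computed as follows: predictions below a confidence threshold are deferred, and accuracy is measured on the predictions that remain. This is a minimal sketch with a hypothetical function name; it assumes each prediction comes with a confidence (for example the top softmax probability) and a flag saying whether it was correct.

```python
def reject_curve_point(confidences, correct, threshold):
    """Return (reject_rate, accuracy_on_kept) for one confidence threshold.

    accuracy_on_kept is None when every prediction is rejected.
    """
    kept = [is_correct for conf, is_correct in zip(confidences, correct) if conf >= threshold]
    reject_rate = 1.0 - len(kept) / len(confidences)
    accuracy = sum(kept) / len(kept) if kept else None
    return reject_rate, accuracy
```

Sweeping the threshold from 0 to 1 traces the full curve; the deferred fraction is the workload handed to human reviewers in the hybrid workflow.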

Limitations and future work

The reliance on exact header matching for labeling means the training set may be biased toward well‑named columns; columns with missing or ambiguous headers could degrade performance. The 78 types are derived from DBpedia and may not cover domain‑specific vocabularies (e.g., medical, financial). The authors propose future directions: (a) automated assessment and cleaning of training labels, (b) enrichment of feature sets with richer language models (e.g., BERT), (c) incorporation of probabilistic column‑level predictions, and (d) establishment of shared benchmarks and open‑source evaluation suites.

Reproducibility

All data, code, and the pretrained Sherlock model are released at https://sherlock.media.mit.edu, enabling other researchers to replicate experiments, extend the feature pipeline, or integrate the model into existing data‑preparation platforms.

In summary, Sherlock demonstrates that a large‑scale, feature‑rich deep learning approach can substantially outperform traditional rule‑based and shallow‑learning methods for semantic column type detection, offering a scalable solution that can be incorporated into modern data‑engineering pipelines.
