Underwater Stereo using Refraction-free Image Synthesized from Light Field Camera

There is a strong demand on capturing underwater scenes without distortions caused by refraction. Since a light field camera can capture several light rays at each point of an image plane from various directions, if geometrically correct rays are cho…

Authors: Kazuto Ichimaru, Hiroshi Kawasaki

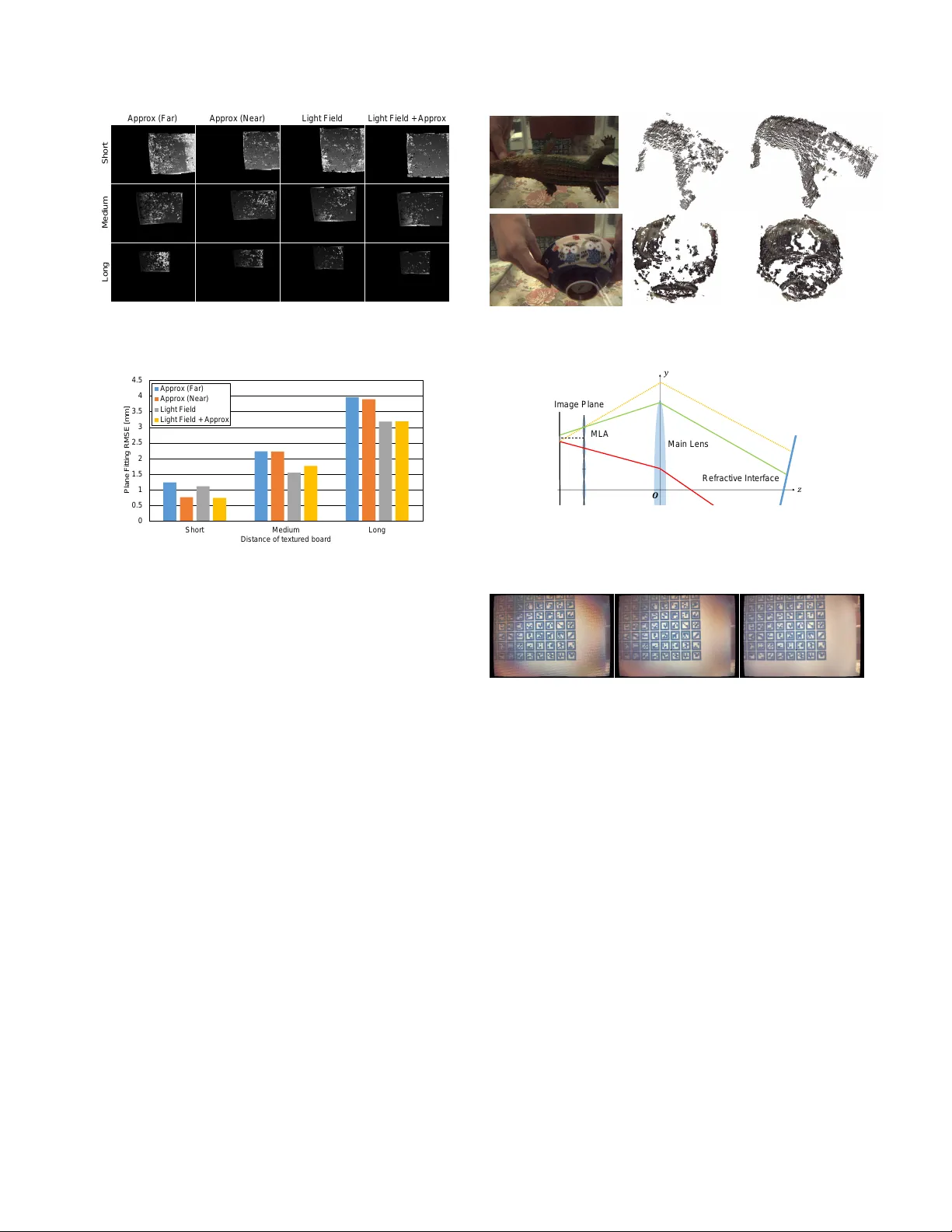

Affiliation: Kyushu University, Japan

ABSTRACT

There is a strong demand for capturing underwater scenes without the distortions caused by refraction. Since a light field camera can capture several light rays at each point of an image plane from various directions, it is possible to synthesize a refraction-free image if geometrically correct rays are chosen. In this paper, we propose a novel technique to efficiently select such rays and synthesize a refraction-free image from an underwater image captured by a light field camera. In addition, we propose a stereo technique to reconstruct 3D shapes using a pair of our refraction-free images, which are central projections. In the experiments, we captured several underwater scenes with two light field cameras, synthesized refraction-free images, and applied the stereo technique to reconstruct 3D shapes. The results are compared with previous approximation-based techniques, showing the strength of our method.

Index Terms: Stereo vision, Refraction, Light field, Underwater shape reconstruction

1. INTRODUCTION

Underwater scene acquisition is an important research topic in various areas, such as underwater construction, marine biology, and swimming analysis, to name a few. For those purposes, 3D shape reconstruction is the most important task, and thus many techniques have been researched and developed. For 3D reconstruction in the air, passive stereo using two RGB cameras is commonly used because of its simplicity and stability. Since ordinary cameras are usually perspective, i.e., central projection, most stereo techniques also assume central projection, which enables 1) efficient correspondence search using the epipolar constraint, 2) a linear solution for calibration, and 3) shape reconstruction by triangulation.
However, underwater images are not central projections because of refraction, and thus it is difficult to apply common stereo techniques to underwater scenes.

To overcome this problem, an analytical solution that estimates the refracted light path by solving high-order simultaneous equations has been proposed [1]. However, solving such equations is computationally expensive, and ambiguities remain. On the other hand, an approximation-based technique to synthesize a refraction-free image has been proposed [2]. Although the synthesized refraction-free image can be treated as a central projection at a predefined depth, the approximation error increases as the object depth moves away from that depth.

Recently, light field imaging has drawn wide attention for its potential in post-focusing, single-view stereo, and so on. Since light field data is a collection of multiple light rays from various directions, including non-central rays, at each point on the image plane, a refraction-free image can be created by choosing a certain light ray from the bundle of rays at each point.

In this paper, we propose a technique to find geometrically correct light rays in light field data in order to synthesize a refraction-free image. Our method is implemented as a pixel warping on a captured light field image, so its calculation time is comparable to that of the approximation-based approach. In addition, we propose a stereo technique that uses a pair of refraction-free images to reconstruct 3D shapes of underwater environments. In the experiments, we captured several underwater scenes with two light field cameras and applied the stereo technique to reconstruct 3D shapes. The results are compared with previous techniques based on an approximation model, successfully showing the strength of our method.

2. RELATED WORK

There are generally two approaches to handling refraction between water and air. One is the geometric approach [1, 3, 4] and the other is the approximation-based approach [2, 5, 6, 7].
The geometric approach uses a physical model of refraction to trace light rays, and applies forward / backward projection to render 2D images from 3D objects. In those methods, several parameters must be calibrated, such as the refractive index, the distance between the camera and the water, and the normal of the refractive interface. Agrawal et al. introduced a polynomial formulation of the model and an efficient initial value computation method, as well as analytical forward projection by a 4th-order equation [1]. Sedlazeck and Koch proposed underwater SfM to directly recover 3D shapes without explicit calibration, using an efficient energy function for bundle adjustment based on a virtual camera [3]. Kawahara et al. proposed a pixel-wise varifocal camera model [4]. Note that most geometric approaches introduce special models to represent refraction effects. These are not central projection models, and thus general stereo methods cannot be applied.

The approximation-based approach usually converts an original image into a central projection image. Ferreira et al. proposed an approximation-based technique that makes a refraction-free image by applying a lens distortion model, and showed some results on stereo vision [2]. Bleier and Nüchter [5], Kawasaki et al. [6], and Ichimaru et al. [7] applied active methods built on the approximation-based approach. Since those techniques assume a specific depth, severe distortion occurs if the actual depth is far from the predefined depth.

Fig. 1. Illustration of approximation-based algorithm.

Recently, light field imaging has drawn wide attention. In image processing tasks, Lu et al. used light field images with a CNN for depth map restoration to cope with the turbidity of water [8], and Li et al. used them for reflection removal [9]. Jeon et al. proposed an accurate depth map estimation method using a light field camera [10].
Zhang et al. proposed a plenoptic SfM technique [11]. Kutulakos and Steger achieved reconstruction of static transparent objects by light field imaging [12]. Wetzstein et al. used light field probes for normal and shape reconstruction of dynamic transparent objects [13]. Skinner and Johnson-Roberson used a light field camera for single-view underwater 3D reconstruction, but they ignored refraction [14]. Among the wide variety of light field research, synthesis of refraction-free images has not yet been studied.

3. REFRACTION REMOVAL ALGORITHM

In this section, we first introduce conventional ways to make a refraction-free image (Sec. 3.1), and then introduce the proposed method based on light field imaging (Sec. 3.2).

3.1. Virtual focus and lens distortion for approximation

Let the camera coordinate system be a pinhole model with focal length $f$ (Fig. 1). A planar refractive surface is placed in front of the camera at depth $d$ with normal $\mathbf{n}$. The two sides of the refractive surface are filled with transparent media with different refractive indices $\mu_a$ and $\mu_w$. When a light ray $\mathbf{r}_a$ is observed at $(x, y, f)$ on the image plane, the ray is backtracked to $\mathbf{P}$ on the refractive surface. As the ray is refracted at the refractive surface, the original direction $\mathbf{r}_w$ of the ray can be calculated based on Snell's law. If the original ray had not been refracted, it would intersect the optical axis at $\mathbf{P}_v = \mathbf{P} + t\,\mathbf{r}_w$ for an appropriate coefficient $t$. We can then define the virtual focal length $f_v = f + |P_{v,z}|$, where $P_{v,z}$ is the $z$ component of $\mathbf{P}_v$, so that the system becomes a central projection model. However, since $f_v$ depends on $\mathbf{r}_a = [x, y, f]^T$, it varies with location on the image (which is why [4] introduced the pixel-wise varifocal camera model). Several approximation-based approaches apply a lens distortion model to convert the original image into a central projection image [5, 6, 7] by using $f_v$ and distortion coefficients, which are estimated by minimizing the re-projection error.
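As a rough illustration of why $f_v$ changes across the image, the following sketch backtracks one pixel ray, refracts it with the vector form of Snell's law, and intersects the unrefracted extension with the optical axis. It is a minimal sketch under stated assumptions, not the authors' implementation: the interface normal is taken parallel to the optical axis, the function names `refract` and `virtual_focal_length` and the default indices are hypothetical, and units are arbitrary.

```python
import numpy as np

def refract(i, n, mu_in, mu_out):
    """Vector form of Snell's law for a unit travel direction i and interface normal n."""
    i = i / np.linalg.norm(i)
    n = n / np.linalg.norm(n)
    if np.dot(n, i) > 0:              # make the normal face the incoming ray
        n = -n
    eta = mu_in / mu_out
    cos_i = -np.dot(n, i)
    k = 1.0 - eta**2 * (1.0 - cos_i**2)
    if k < 0:
        raise ValueError("total internal reflection")
    return eta * i + (eta * cos_i - np.sqrt(k)) * n

def virtual_focal_length(x, y, f, d, mu_a=1.0, mu_w=1.333):
    """f_v = f + |P_v,z| for the pixel (x, y, f); assumes n = (0, 0, 1) (hypothetical setup)."""
    if x == 0 and y == 0:
        raise ValueError("the on-axis ray has no unique intersection with the axis")
    n = np.array([0.0, 0.0, 1.0])
    r_a = np.array([x, y, f], dtype=float)
    r_a /= np.linalg.norm(r_a)
    P = r_a * (d / r_a[2])            # intersection with the interface plane z = d
    r_w = refract(r_a, n, mu_a, mu_w) # ray direction inside the water
    # Extend the water ray backwards until its lateral offset vanishes: P_v = P + t * r_w.
    t = -np.dot(P[:2], r_w[:2]) / np.dot(r_w[:2], r_w[:2])
    P_v = P + t * r_w
    return f + abs(P_v[2])

if __name__ == "__main__":
    for x in (0.001, 0.25, 0.5):
        print(x, virtual_focal_length(x, 0.0, f=1.0, d=10.0))
```

With these assumed values, the virtual focal length near the image centre is close to $f + d(\mu_w/\mu_a - 1)$ and grows toward the image border, which is the position dependence that a single-depth lens distortion model can only approximate.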
Usually, radial and tangential distortion models are used to represent the lens distortion; however, they can only approximate refraction at a specific depth. Thus, the approximation error increases as the target depth moves away from the predefined depth [7]. In particular, when the refractive interface is slanted, the approximation error is biased in a specific direction, which leads to severe error.

Fig. 2. Illustration of proposed algorithm. Orange line represents necessary ray, and green line represents selected ray.

3.2. Ray selection from light field image

To overcome the limitations of conventional distortion-free image synthesis based on the approximation approach, we use a light field image. Let a light field camera consist of a single large lens (main lens), micro lenses aligned on a plane (micro lens array; MLA), and an image plane behind the MLA plane, as shown in Fig. 2. The main lens, the MLA, and the image plane are parallel, and all lenses are assumed to follow the thin lens model. In the figure, $f$ is the focal length of the main lens, $f_{mla}$ is that of the MLA, and $f'$ is the distance between the main lens and the MLA. Note that, unlike in the pinhole camera model, the focal length $f$ is not equal to the flange back $f'$; however, the distance between the MLA and the image plane is equal to $f_{mla}$. The system represents a physical light field camera model rather than a two-plane model.

When a light ray $\mathbf{r}_a$ is observed at $\mathbf{p}$ on the image plane, the ray is backtracked to $\mathbf{P}$ on the refractive surface. If there were no refraction, the ray would travel along a straight line; however, it is refracted and reaches another location $\hat{\mathbf{p}}$. Therefore, by moving the color at $\hat{\mathbf{p}}$ onto location $\mathbf{p}$, we can make a refraction-free image.

To calculate $\hat{\mathbf{p}}$, we use ray tracing with the thin lens model. We can analytically compute which micro lens and which pixel the ray reaches.
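The thin-lens tracing step can be pictured with a small paraxial sketch in the y-z plane. This is only an illustration under assumptions that are not in the paper: a ray is described by its height and slope at the main lens, the micro lenses are evenly spaced with a hypothetical `pitch` and one lens centred on the optical axis, and the name `trace_to_sensor` is made up for this example.

```python
import math

def trace_to_sensor(y0, u0, f_main, f_prime, f_mla, pitch):
    """Paraxial 2D trace: main lens -> MLA -> image plane.

    y0, u0  : ray height and slope (dy/dz) when it arrives at the main lens
    f_main  : focal length of the main lens
    f_prime : distance between main lens and MLA (flange back)
    f_mla   : focal length of the micro lenses (= MLA-to-sensor distance)
    pitch   : spacing of micro lens centres (assumed regular grid)
    Returns (micro lens index, height on the sensor, offset from the micro lens centre).
    """
    u1 = u0 - y0 / f_main                   # thin lens: slope changes by -y/f
    y_mla = y0 + u1 * f_prime               # free propagation to the MLA plane
    k = math.floor(y_mla / pitch + 0.5)     # nearest micro lens
    centre = k * pitch
    offset = y_mla - centre                 # zero only if the ray hits the lens centre
    u2 = u1 - offset / f_mla                # thin lens again, relative to that lens's axis
    y_sensor = y_mla + u2 * f_mla           # propagation to the image plane
    return k, y_sensor, offset

if __name__ == "__main__":
    print(trace_to_sensor(0.0, 0.01, f_main=50.0, f_prime=50.0, f_mla=0.5, pitch=0.02))
```

In this simplified model the sensor position reduces to `centre + u1 * f_mla`, so the pixel under a micro lens encodes the ray's direction and the micro lens index encodes its position; the returned `offset` shows how far the ray is from the micro lens centre.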
However, information about the ray is often lost because not every ray passes through the center of a micro lens. Thus, instead of using the computed $\hat{\mathbf{p}}$, we select the closest ray among the neighboring rays that pass through the center of a micro lens. The distance between the necessary ray and a selected ray is defined as

$$D = |\mathbf{P}' - \mathbf{P}| + \lambda \cos^{-1}(\hat{\mathbf{r}}'_a \cdot \hat{\mathbf{r}}_a), \tag{1}$$

where $\hat{\mathbf{r}}'_a$ is the direction of the selected ray, $\hat{\mathbf{r}}_a$ is the direction of the necessary ray, $\mathbf{P}'$ is the intersection between $\hat{\mathbf{r}}'_a$ and the refractive surface, and $\lambda$ is a weight. Once the ray that minimizes $D$ is found, $\mathbf{p}$ is assigned the color of the pixel that the ray reached.

In practice, since it is almost impossible to calibrate $f$, it is assumed to be equal to $f'$. Under this assumption, the second term of $D$ always becomes zero by a simple calculation, and thus only the distance between the intersections is minimized.

Fig. 3. Schematic of refraction-free stereo. Stages shown: capturing (calibration tool in air, calibration tool underwater, target object underwater), air calibration (flange back $f'$, lens distortion coefficients), bundle adjustment (refractive interface normal $\mathbf{n}$, depth $d$), refraction removal (refraction-free images), stereo calibration (relative transformation), rectification, stereo matching, and reconstruction.

4. IMPLEMENTATION

4.1. Refraction-free image synthesis

To achieve efficient computation, we divided the synthesis process into two parts, light path computation and pixel warping, because the light path computation is required only once for the entire process. In the light path computation part, we analytically compute the light path for each micro lens to find pixel-to-pixel correspondences, unless the necessary light path falls outside the aperture. We obtain the locations of all micro lenses manually. Once pixel-to-pixel correspondences are obtained, we apply weighted averaging and super-sampling for better image quality.
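Eq. (1) and the weighted averaging used in this section (several rays taken in ascending order of $D$ and blended with weights proportional to the reciprocal of $D$) can be sketched as follows. This is a hedged sketch, not the authors' code: the function names `ray_distance` and `blend_nearest_rays`, the default `lam`, and the small `eps` that guards against division by zero are all assumptions introduced for this example.

```python
import numpy as np

def ray_distance(P_sel, P_need, r_sel, r_need, lam=1.0):
    """Eq. (1): D = |P' - P| + lambda * arccos(r'_a . r_a) for unit directions."""
    r_sel = np.asarray(r_sel, dtype=float)
    r_need = np.asarray(r_need, dtype=float)
    r_sel = r_sel / np.linalg.norm(r_sel)
    r_need = r_need / np.linalg.norm(r_need)
    angle = np.arccos(np.clip(np.dot(r_sel, r_need), -1.0, 1.0))
    gap = np.linalg.norm(np.asarray(P_sel, dtype=float) - np.asarray(P_need, dtype=float))
    return float(gap + lam * angle)

def blend_nearest_rays(D, colors, k, eps=1e-12):
    """Average the colors of the k candidates with the smallest D, weighted by 1/D."""
    D = np.asarray(D, dtype=float)
    colors = np.asarray(colors, dtype=float)
    order = np.argsort(D)[:k]            # ascending order of D
    w = 1.0 / (D[order] + eps)           # reciprocal weighting (eps is an added safeguard)
    w /= w.sum()
    return w @ colors[order]

if __name__ == "__main__":
    D = [ray_distance([1, 0, 5], [0, 0, 5], [0, 0, 1], [0, 0, 1]),
         ray_distance([3, 0, 5], [0, 0, 5], [0, 0, 1], [0, 0, 1])]
    print(D, blend_nearest_rays(D, [10.0, 20.0], k=2))
```

Setting `k=1` reproduces plain nearest-ray selection; larger `k` trades sharpness for fewer holes, which matches the blur that the paper reports when more rays are averaged.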
For the weighted average, we extract several rays in ascending order of $D$ and average their color intensities with weights given by the reciprocal of each $D$. For super-sampling, we increase the number of pixel-to-pixel correspondences by computing subpixel rays. The number of rays used for averaging and the super-sampling ratio are adjustable parameters in our implementation. We finally obtain a pixel warping map that holds the averaging weights and super-sampled correspondences for each pixel. In the pixel warping part, we simply apply the pre-computed pixel warping map to the light field image, and the final image at the specified resolution is obtained by bilinear interpolation.

4.2. Underwater stereo using refraction-free image

Two light field cameras are used to conduct underwater stereo. An overview of the process is shown in Fig. 3. First, calibration tools (e.g., a chess-patterned board) are captured both in the air and in the liquid, and the 2D locations of feature points are obtained. Second, the real flange back $f'$ and the lens distortion coefficients of the cameras are calibrated with the images captured in the air. The cameras' real lens distortion is removed from all images at this stage. Calibration of the relative transformation between the cameras is not necessary here, so the air images can be captured separately. Third, $d$ and $\mathbf{n}$ of the refractive interface are calibrated by bundle adjustment using the method of [1]. Then, refraction-free images are synthesized using the proposed algorithm with the obtained parameters $f'$, $d$, and $\mathbf{n}$. Using this image set, the relative position and orientation between the camera pair are calibrated from the refraction-free images, and finally a stereo method is applied. As the stereo algorithm, OpenCV with an NCC-based matching cost is used.

Fig. 4. Appearance of the experimental setup.

Fig. 5. Examples of refraction-free images with our method.

5. EXPERIMENT

5.1. Evaluation using planar object

For the experiment, two light field cameras (Lytro Illum) and a water tank of 90 × 45 × 45 cm are used (Fig. 4). The two cameras are set outside the water tank, and the tank is filled with clear water. We intentionally slanted the cameras (so that, from the cameras' point of view, the refractive interface appears slanted) to produce strong distortion with approximation methods, which our technique is expected to resolve. We captured the calibration board at two distances, far from and near to the cameras, to examine the tolerance of the proposed algorithm to depth-dependent error. We applied both the proposed algorithm and the approximation-based algorithm [2] to synthesize distortion-free images.

For evaluation, we captured a textured planar board at far, medium, and near distances from the camera. We tested the following four conditions: (a) the approximation-based algorithm [2] with the far calibration board, (b) the approximation-based algorithm [2] with the near calibration board, (c) the proposed algorithm, and (d) the proposed algorithm with distortion removal based on [2]. We set the number of weighted averaging pixels to 32 and the super-sampling ratio to 2. Examples of refraction-free images are shown in Fig. 5. Image synthesis takes 53 milliseconds with our algorithm on an Intel Xeon E5640 CPU, which is a real-time process.

After synthesizing refraction-free images with each method, we rectified the images and applied NCC-based stereo. The resulting disparity maps are shown in Fig. 6. We can observe that the approximation-based algorithm [2] produces moderate results when the target depth is close to the depth of the calibration tool; however, the results get worse as the target depth moves away from the depth of the calibration tool.
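For readers unfamiliar with NCC-based matching, the cost used on the rectified pairs can be illustrated with a brute-force scanline search. This is a simplified stand-in and not the OpenCV pipeline used in the experiments: the zero-mean variant of NCC, the window size, the search range, and the function names `ncc` and `best_disparity` are assumptions made for this sketch.

```python
import numpy as np

def ncc(a, b, eps=1e-12):
    """Zero-mean normalized cross-correlation between two equally sized patches."""
    a = a.astype(np.float64) - a.mean()
    b = b.astype(np.float64) - b.mean()
    return float((a * b).sum() / (np.sqrt((a * a).sum() * (b * b).sum()) + eps))

def best_disparity(left, right, row, col, half=3, max_disp=16):
    """Search along the same row of a rectified pair for the disparity with highest NCC."""
    ref = left[row - half:row + half + 1, col - half:col + half + 1]
    scores = []
    for d in range(max_disp + 1):
        c = col - d                      # candidate column in the right image
        if c - half < 0:
            break                        # window would leave the image
        cand = right[row - half:row + half + 1, c - half:c + half + 1]
        scores.append(ncc(ref, cand))
    return int(np.argmax(scores))

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    left = rng.random((20, 64))
    right = np.roll(left, -5, axis=1)    # synthetic pair with a constant disparity of 5
    print(best_disparity(left, right, row=10, col=40))
```

Because the search only moves along one image row, it relies on the epipolar constraint of central projection, which is the property that refraction-free synthesis is meant to restore.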
On the other hand, the proposed algorithms produce better results regardless of the depth of the target and the calibration board. Fig. 7 shows quantitative evaluation results obtained by plane fitting on the reconstructed board after outlier removal, showing that the proposed algorithm outperforms the approximation-based techniques quantitatively.

Fig. 6. Results of stereo matching with four methods at long, medium, and short distances. (a) Approx (Far) and (b) Approx (Near): approximation-based algorithm [2]. (c) Light Field and (d) Light Field + Approx: proposed algorithm. Noise is decreased with our algorithm.

Fig. 7. Results of plane fitting on textured board. Horizontal axis: distance of textured board (short, medium, long). Vertical axis: plane fitting RMSE [mm]. Series: Approx (Far), Approx (Near), Light Field, Light Field + Approx.

5.2. Reconstruction of arbitrary shape objects

We tested our method on several objects with complicated texture, such as a crocodile figurine and a ceramic bowl. In this experiment, the calibration board is placed farther away than both target objects. Reconstruction results for qualitative evaluation are shown in Fig. 8. We also applied the conventional approximation-based algorithm [2] for comparison. We can confirm that the shapes reconstructed by the approximation-based algorithm are unstable because of the mismatch between the reconstruction depth and the calibration depth, whereas our technique achieved stable reconstruction for both objects.

6. LIMITATION

In the experiments, our algorithm achieved higher accuracy than conventional algorithms. However, in practice, the approximation-based algorithm can produce similar or sometimes even better quality than our technique if the refractive interface is precisely orthogonal to the camera axis.
We attribute this to the following: although our algorithm can produce a perfect refraction-free image in theory, image quality can be degraded by the limited aperture size. According to our calculation, the possible field-of-view for a perfect refraction-free image is 30 degrees, while the original field-of-view is about 60 degrees for the Lytro Illum. To compensate for this problem, we introduce a weighted average of nearby pixels in our method (Fig. 9), which introduces a defocus effect: blur in the final image increases with the number of averaged rays (Fig. 10). Using a lens with a wider field-of-view is one promising solution.

Fig. 8. Qualitative comparison on reconstruction. Left: Captured images. Center: Results of approximation-based algorithm [2]. Right: Results of proposed algorithm.

Fig. 9. Illustration of the limitation and compensation by weighted averaging. Since the red line ray is observed instead of the orange line ray, which goes outside the aperture, we collect neighboring green line rays to synthesize the orange line ray.

Fig. 10. Comparison of synthesized images with different numbers of averaged rays (1, 8, 64, from left to right).

7. CONCLUSION

In this paper, we proposed an algorithm to synthesize a refraction-free image using a light field camera. The proposed algorithm enables geometrically correct image synthesis for any kind of refraction and achieved better stereo-based 3D reconstruction than previous approximation-based methods. Moreover, the proposed method is capable of fast computation, i.e., real-time processing on a common PC. Although there is a limitation due to the limited aperture size, our experiments show that our technique is mostly better than previous methods, especially in cases of severe refraction.
As future work, we consider that underwater single-view depth computation is also possible using a light field camera, and we plan to apply the proposed method to active stereo techniques.

8. REFERENCES

[1] Amit Agrawal, Srikumar Ramalingam, Yuichi Taguchi, and Visesh Chari, "A theory of multi-layer flat refractive geometry," in IEEE Conference on Computer Vision and Pattern Recognition. 2012, IEEE.

[2] Ricardo Ferreira, João P. Costeira, and João A. Santos, "Stereo reconstruction of a submerged scene," in Pattern Recognition and Image Analysis. 2005, pp. 102–109, Springer.

[3] Anne Jordt-Sedlazeck and Reinhard Koch, "Refractive structure-from-motion on underwater images," in IEEE International Conference on Computer Vision. 2013, IEEE.

[4] Ryoichi Kawahara, Shohei Nobuhara, and Takashi Matsuyama, "A pixel-wise varifocal camera model for efficient forward projection and linear extrinsic calibration of underwater cameras with flat housings," in IEEE International Conference on Computer Vision Workshops. 2013, pp. 819–824, IEEE.

[5] Michael Bleier and Andreas Nüchter, "Low-cost 3d laser scanning in air or water using self-calibrating structured light," International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences, pp. 105–112, 2017.

[6] Hiroshi Kawasaki, Hideaki Nakai, Hirohisa Baba, Ryusuke Sagawa, and Ryo Furukawa, "Calibration technique for underwater active oneshot scanning system with static pattern projector and multiple cameras," in IEEE Winter Conference on Applications of Computer Vision. 2017, IEEE.

[7] Kazuto Ichimaru, Ryo Furukawa, and Hiroshi Kawasaki, "Multi-scale cnn stereo and pattern removal technique for underwater active stereo system," in International Conference on 3D Vision, 2018.

[8] Huimin Lu, Yujie Li, Hyoungseop Kim, and Seiichi Serikawa, Underwater Light Field Depth Map Restoration Using Deep Convolutional Neural Fields, vol. 752, pp. 305–312, Springer, 2018.

[9] Tingtian Li, Daniel P.K. Lun, Yuk-Hee Chan, and Budianto, "Robust reflection removal based on light field imaging," IEEE Transactions on Image Processing, pp. 1798–1812, 2018.

[10] Hae-Gon Jeon, Jaeik Park, Gyeongmin Choe, Jinsum Park, Yunsu Bok, Yu-Wing Tai, and In So Kweon, "Accurate depth map estimation from a lenslet light field camera," in IEEE Conference on Computer Vision and Pattern Recognition. 2015, IEEE.

[11] Yingling Zhang, Peihong Yu, Wei Yang, Yuanxi Ma, and Jingyi Yu, "Ray space features for plenoptic structure-from-motion," in IEEE International Conference on Computer Vision. 2017, IEEE.

[12] Kiriakos N. Kutulakos and Eron Steger, "A theory of refractive and specular 3d shape by light-path triangulation," International Journal of Computer Vision, vol. 76, pp. 13–29, 2008.

[13] Gordon Wetzstein, David Roodnick, Wolfgang Heidrich, and Ramesh Raskar, "Refractive shape from light field distortion," in IEEE International Conference on Computer Vision. 2011, IEEE.

[14] Katherine A. Skinner and Matthew Johnson-Roberson, "Towards real-time underwater 3d reconstruction with plenoptic cameras," in IEEE International Conference on Intelligent Robots and Systems. 2016, IEEE.
