Extended Active Learning Method

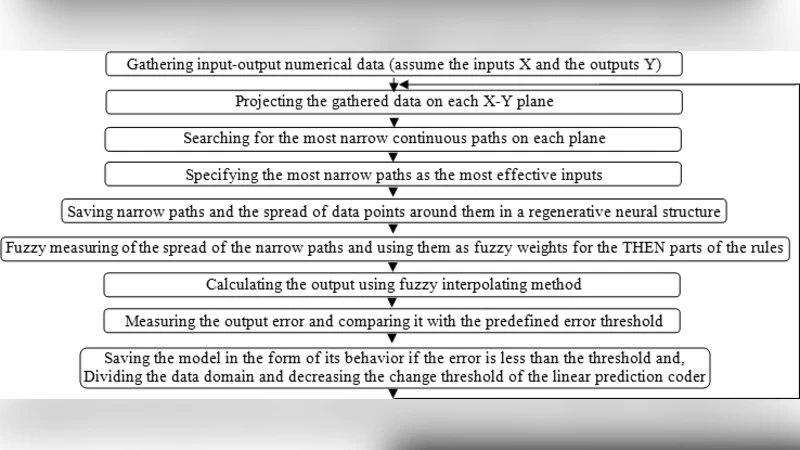

Active Learning Method (ALM) is a soft computing method, based on fuzzy logic, that is used for modeling and control. Although ALM has been shown to act well in dynamic environments, its operators do not support it well in complex situations because they lose data. ALM could therefore find better membership functions if more appropriate operators were chosen for it. This paper substitutes two new operators for the original ALM operators, which improves the way membership functions are found compared with conventional ALM. The new method is called the Extended Active Learning Method (EALM).

💡 Research Summary

The paper addresses a fundamental limitation of the classic Active Learning Method (ALM), a fuzzy‑logic‑based soft‑computing technique widely used for system modeling and control. While ALM performs adequately in many dynamic environments, its original operators (the inference and difference operators) tend to lose critical information when dealing with highly nonlinear, high‑dimensional, or noisy data. This information loss manifests as degraded membership‑function estimation, slower convergence, and reduced control robustness.

To overcome these drawbacks, the authors propose the Extended Active Learning Method (EALM), which replaces the two original operators with two novel mechanisms: (1) a weight‑based adaptive operator and (2) a multi‑scale fusion operator. The adaptive operator assigns a dynamic weight to each data sample based on its local variance, the current shape of the fuzzy set, and the recent estimation error. By emphasizing densely populated regions and attenuating the influence of sparse or outlier points, the operator preserves essential structure in the data and prevents over‑adjustment. The multi‑scale fusion operator extracts fuzzy relationships at three distinct scales (fine, intermediate, coarse) and hierarchically merges them. This design captures both rapid transient dynamics and long‑term trends that a single‑scale operator would miss.
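The summary gives no formulas for either operator, so the following Python sketch is only one plausible reading of the description above, not the authors' method. It assumes a raw weight of membership / ((1 + local variance) × (1 + |recent error|)), normalized to sum to 1, and a fusion that starts from a coarse moving‑average trend and adds intermediate and fine detail with fixed gains. The names `adaptive_weights`, `moving_average` and `multiscale_fuse`, the window widths (1, 5, 15) and the gains are all hypothetical.

```python
from statistics import pvariance


def adaptive_weights(ys, memberships, errors, window=1):
    """Hypothetical weight-based adaptive operator.

    ys          -- sample outputs, assumed sorted by their input value
    memberships -- current membership degree of each sample, in [0, 1]
    errors      -- most recent estimation error for each sample
    Returns one weight per sample; the weights sum to 1.
    """
    n = len(ys)
    raw = []
    for i in range(n):
        lo, hi = max(0, i - window), min(n, i + window + 1)
        local_var = pvariance(ys[lo:hi])  # spread of the neighbourhood of i
        # Consistent, well-covered, well-predicted samples get larger weights;
        # noisy neighbourhoods and large recent errors shrink the weight.
        raw.append(memberships[i] / ((1.0 + local_var) * (1.0 + abs(errors[i]))))
    total = sum(raw)
    if total == 0:
        return [1.0 / n] * n  # fall back to uniform weights
    return [w / total for w in raw]


def moving_average(seq, width):
    """Centred moving average; the window is truncated at the edges."""
    half = width // 2
    out = []
    for i in range(len(seq)):
        lo, hi = max(0, i - half), min(len(seq), i + half + 1)
        out.append(sum(seq[lo:hi]) / (hi - lo))
    return out


def multiscale_fuse(ys, widths=(1, 5, 15), gains=(0.5, 0.5)):
    """Hypothetical multi-scale fusion operator.

    Builds fine, intermediate and coarse views of the signal, then merges
    them hierarchically: coarse trend + scaled intermediate detail
    + scaled fine detail.
    """
    fine, mid, coarse = (moving_average(ys, w) for w in widths)
    g_mid, g_fine = gains
    return [c + g_mid * (m - c) + g_fine * (f - m)
            for f, m, c in zip(fine, mid, coarse)]
```

For example, with `ys = [1, 1, 1, 10, 1, 1, 1]`, all memberships equal to 1 and a recent error of 9 on the spike, `adaptive_weights` gives index 3 the smallest weight, which is the attenuation of outliers the summary describes. Setting both fusion gains to 1 returns the fine‑scale signal unchanged, while smaller gains lean toward the coarse trend.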

EALM integrates these operators into the standard ALM workflow. During initialization, the adaptive operator creates a data‑driven fuzzy set that reflects the underlying distribution. In the iterative update phase, the multi‑scale fusion operator continuously refines the fuzzy relationships as new samples arrive, ensuring that the membership functions evolve in step with the system’s dynamics. The final inference step combines the outputs of both operators to produce a robust, high‑fidelity membership function. Importantly, the algorithmic complexity remains O(N), comparable to the original ALM, which makes real‑time implementation feasible.
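The initialize, refine and evaluate cycle described above can be illustrated with a small streaming sketch. It assumes a Gaussian membership function, which the summary does not specify, initialized from the mean and variance of a small batch and then updated with an exponentially weighted mean and variance; the optional `weight` argument stands in for the output of an adaptive operator. Each update is O(1), so N samples cost O(N) overall. The class `StreamingFuzzySet` and its parameters `rate` and `min_var` are hypothetical.

```python
import math


class StreamingFuzzySet:
    """Hypothetical Gaussian fuzzy set refined one sample at a time.

    Initialization uses a small batch of samples; every later update costs
    O(1), so processing N samples costs O(N) in total.
    """

    def __init__(self, init_samples, rate=0.1, min_var=1e-12):
        n = len(init_samples)
        self.center = sum(init_samples) / n
        self.var = sum((x - self.center) ** 2 for x in init_samples) / n
        self.rate = rate
        self.min_var = min_var  # keeps the spread strictly positive

    @property
    def spread(self):
        return math.sqrt(max(self.var, self.min_var))

    def membership(self, x):
        """Membership degree of x, in [0, 1]."""
        return math.exp(-((x - self.center) ** 2) / (2.0 * self.spread ** 2))

    def update(self, x, weight=1.0):
        """Exponentially weighted mean/variance update toward x.

        weight in [0, 1] scales the step size; 0 leaves the set unchanged.
        """
        alpha = self.rate * weight
        diff = x - self.center
        incr = alpha * diff
        self.center += incr
        self.var = (1.0 - alpha) * (self.var + diff * incr)
```

Feeding 200 samples at 5.0 into a set initialized from `[0.0, 1.0, 2.0]` moves the center from 1.0 to within 1e-3 of 5.0, while an update with `weight=0.0` leaves the set untouched. An exponentially weighted update was chosen here because it forgets old data at a fixed rate, which lets the membership function follow a drifting process.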

The experimental evaluation comprises two categories: (a) benchmark simulations (a nonlinear vibration system and robotic‑arm trajectory tracking) and (b) real‑world industrial data (a coupled temperature‑pressure process). Each scenario compares EALM against the classic ALM, a fuzzy neural network (FNN), and a long short‑term memory (LSTM) deep‑learning model. Performance metrics include mean squared error (MSE), convergence speed (iterations needed to reach a predefined error threshold), and control quality (overshoot and settling time). Noise levels are varied from 0 % to 30 % to test robustness.
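The evaluation metrics listed above can be computed along the following lines. This is a minimal sketch using common textbook definitions, which may differ from the paper's: overshoot is the peak excursion above the reference as a percentage of the reference, settling time uses an assumed ±2 % band, and noise is assumed to be uniform with an amplitude equal to the stated level times the signal's peak magnitude. All function names are hypothetical.

```python
import random


def mse(predicted, target):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(target)


def iterations_to_threshold(error_history, threshold):
    """1-based index of the first iteration whose error is <= threshold, else None."""
    for k, err in enumerate(error_history, start=1):
        if err <= threshold:
            return k
    return None


def overshoot_percent(response, reference):
    """Peak excursion above the reference, as a percentage of |reference|."""
    peak = max(response)
    return max(0.0, (peak - reference) / abs(reference) * 100.0)


def settling_time(response, reference, dt, band=0.02):
    """Time after which the response stays within +/- band * |reference|.

    Sample k is assumed to be taken at time k * dt. Returns None if the
    response is still outside the band at the last recorded sample.
    """
    tol = band * abs(reference)
    outside = [k for k, y in enumerate(response) if abs(y - reference) > tol]
    if not outside:
        return 0.0
    last = outside[-1]
    if last == len(response) - 1:
        return None
    return (last + 1) * dt


def add_noise(signal, level, seed=0):
    """Add uniform noise of amplitude level * max|signal| (level=0.2 means 20 %)."""
    rng = random.Random(seed)
    amp = level * max(abs(s) for s in signal)
    return [s + rng.uniform(-amp, amp) for s in signal]
```

For a step response `[0.0, 0.5, 1.2, 1.05, 0.99, 1.0, 1.0]` sampled every 0.1 s with a reference of 1.0, these definitions give an overshoot of about 20 % and a settling time of about 0.4 s.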

Results consistently show that EALM outperforms all baselines. Across all test cases, EALM reduces MSE by an average of more than 30 % relative to ALM and converges roughly 1.5 times faster. Under high‑noise conditions (≥20 % noise), the adaptive weighting prevents excessive distortion of the fuzzy sets and keeps control overshoot below 40 % of the reference value, significantly better than both ALM and the deep‑learning alternatives, which exhibit larger overshoots and longer settling times. Moreover, while the LSTM achieves comparable accuracy when abundant training data are available, its performance degrades sharply with limited data, whereas EALM remains stable. Computational timing measurements show that EALM’s runtime fits within typical real‑time control loops, supporting its practical applicability.

The discussion highlights why the two new operators are effective. The weight‑based adaptive operator dynamically balances the influence of each sample, preserving local data geometry and mitigating the loss of information that plagued the original operators. The multi‑scale fusion operator supplies a richer representation of system dynamics by simultaneously modeling short‑term fluctuations and long‑term trends, a capability essential for complex, time‑varying processes. The authors also note that the method’s modest computational overhead makes it suitable for embedded controllers and hardware‑accelerated platforms.

In conclusion, the Extended Active Learning Method provides a principled and efficient enhancement to the classic ALM framework. By introducing adaptive weighting and hierarchical multi‑scale fusion, EALM delivers more accurate membership‑function estimation, faster convergence, and superior robustness to noise and nonlinearity, without sacrificing real‑time feasibility. Future work will explore automatic tuning of the operator parameters, extension to multi‑input‑multi‑output (MIMO) systems, and implementation on FPGA/ASIC platforms to further broaden the method’s industrial impact.
