Multi-agent Attentional Activity Recognition

Multi-modality is an important feature of sensor based activity recognition. In this work, we consider two inherent characteristics of human activities, the spatially-temporally varying salience of features and the relations between activities and co…

Authors: Kaixuan Chen, Lina Yao, Dalin Zhang

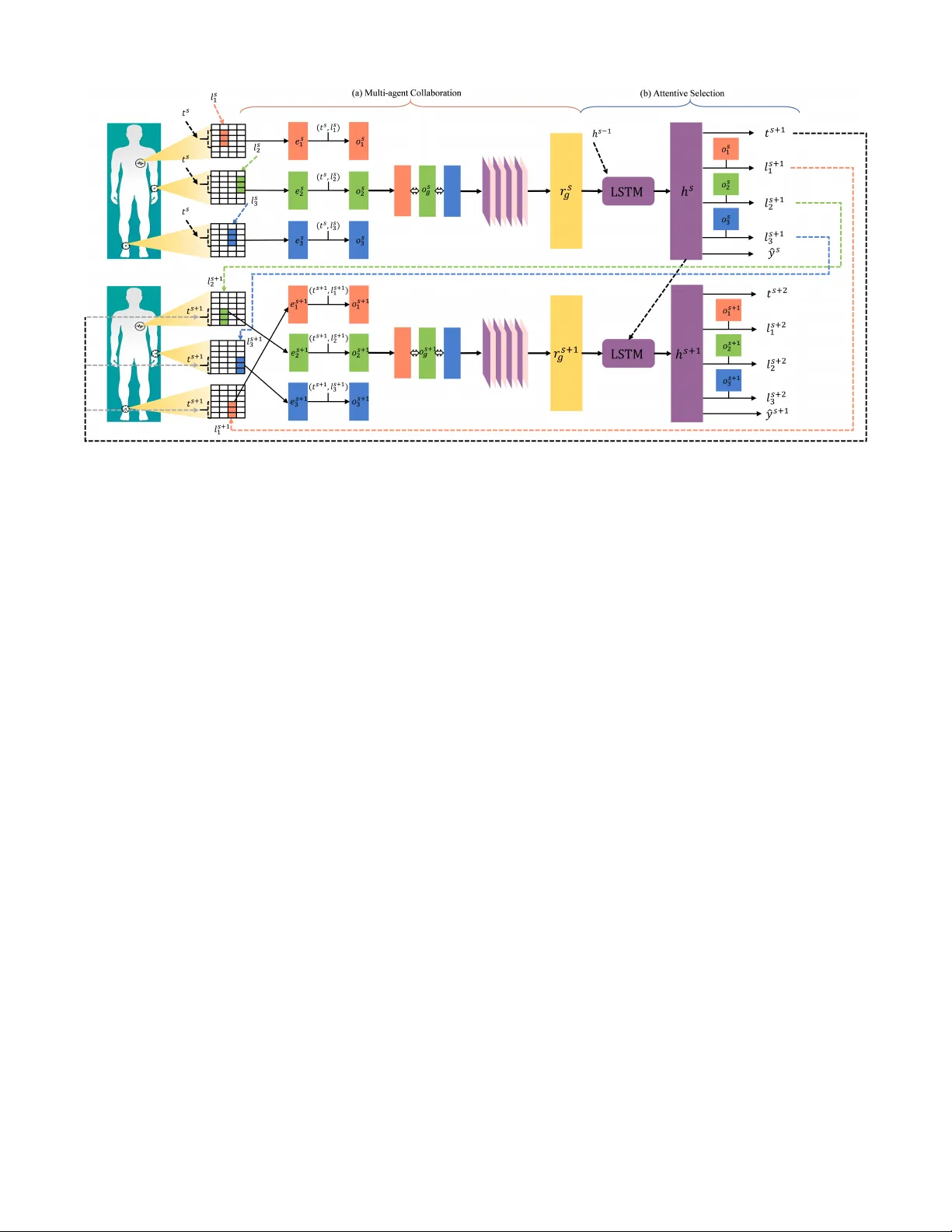

Kaixuan Chen¹, Lina Yao¹, Dalin Zhang¹, Bin Guo² and Zhiwen Yu²
¹University of New South Wales, ²Northwestern Polytechnical University
{kaixuan.chen@student., lina.yao@}unsw.edu.au

Abstract

Multi-modality is an important feature of sensor-based activity recognition. In this work, we consider two inherent characteristics of human activities: the spatially-temporally varying salience of features and the relations between activities and corresponding body part motions. Based on these, we propose a multi-agent spatial-temporal attention model. The spatial-temporal attention mechanism helps intelligently select informative modalities and their active periods. The multiple agents in the proposed model represent activities with collective motions across body parts by independently selecting modalities associated with single motions. With a joint recognition goal, the agents share gained information and coordinate their selection policies to learn the optimal recognition model. The experimental results on four real-world datasets demonstrate that the proposed model outperforms the state-of-the-art methods.

1 Introduction

The ability to identify human activities via on-body sensors has been of interest to the healthcare [Anguita et al., 2013], entertainment [Freedman and Zilberstein, 2018] and fitness [Guo et al., 2017] communities. Some works on Human Activity Recognition (HAR) are based on hand-crafted features for statistical machine learning models [Lara and Labrador, 2013]. More recently, deep learning has experienced massive success in modeling high-level abstractions from complex data [Pouyanfar et al., 2018], and there is a growing interest in developing deep learning for HAR [Hammerla et al., 2016]. Despite this, these methods still lack sufficient justification when applied to HAR.
In this work, we consider two inherent characteristics of human activities and exploit them to improve the recognition performance.

The first characteristic of human activities is the spatially-temporally varying salience of features. Human activities can be represented as a sequence of multi-modal sensory data. The modalities include acceleration, angular velocity and magnetism from different positions of testers' bodies, such as chests, arms and ankles. However, only some of the modalities from specific positions are informative for recognizing certain activities [Wang and Wang, 2017]. Irrelevant modalities often influence the recognition and undermine the performance. For instance, identifying lying mainly relies on people's orientations (magnetism), and going upstairs can be easily distinguished by upward acceleration from arms and ankles. In addition, the significance of modalities changes over time. Intuitively, the modalities are only important when the body parts are actively participating in the activities. Therefore, we propose a spatial-temporal attention method to select salient modalities and their active periods that are indicative of the true activity.

Attention has been proposed as a sequential decision task in earlier works [Denil et al., 2012; Mnih et al., 2014]. This mechanism has been applied to sensor-based HAR in recent years. [Chen et al., 2018] and [Zhang et al., 2018] transform the sensory sequences into 3-D activity data by replicating and permuting the input data, and they propose to attentionally keep a focal zone for classification. However, these methods rely heavily on data pre-processing, and the replication increases the computational complexity unnecessarily. Also, these methods do not take the temporally-varying salience of modalities into account.
In contrast, the proposed spatial-temporal attention approach directly selects, from raw data, the informative modalities and their active times that are relevant to classification. The experiment results show that our model makes HAR more explainable.

The second characteristic of human activities considered in this paper is that activities are portrayed by motions on several body parts collectively. For instance, running can be seen as a combination of arm and ankle motions. Some works such as [Radu et al., 2018; Yang et al., 2015] are committed to fusing multi-modal sensory data for time-series HAR, but they only fuse the information of local modalities from the same positions. These methods, as well as the existing attention-based methods [Chen et al., 2018; Zhang et al., 2018], are limited in capturing the global interconnections across different body parts. To fill this gap, we propose a multi-agent reinforcement learning approach. We simplify activity recognition by dividing the activities into sub-motions, each of which is associated with an independent intelligent agent, and by coordinating the agents' actions. These agents select informative modalities independently based on both their local observations and the information shared by each other. Each agent can individually learn an efficient selection policy by trial and error. After a sequence of selections and information exchanges, a joint decision on recognition is made. The selection policies are incrementally coordinated during training since the agents share a common goal, which is to minimize the loss caused by false recognition.

Figure 1: The overview of the proposed model. At each step $s$, three agents $a_1, a_2, a_3$ individually select modalities and obtain observations $o_1^s, o_2^s, o_3^s$ from the input $x$ at $(t^s, l_1^s)$, $(t^s, l_2^s)$ and $(t^s, l_3^s)$. The agents then exchange and process the gained information to get the representation $r_g^s$ of the shared observation, and they decide the next locations again. Based on a sequence of observations after an episode, the agents jointly make the classification. Red, green and blue denote the workflows that are associated with $a_1, a_2, a_3$, respectively. Other colors denote the shared information and its representations.

The key contributions of this research are as follows:

• We propose a spatial-temporal attention method for temporal sensory data, which considers the spatially-temporally varying salience of features and allows the model to focus on the informative modalities that are only collected in their active periods.

• We propose a multi-agent collaboration method. The agents represent activities with collective motions by independently selecting modalities associated with single motions and sharing observations. The whole model can be optimized by coordinating the agents' selection policies with the joint recognition goal.

• We evaluate the proposed model on four datasets. The comprehensive experiment results demonstrate the superiority of our model to the state-of-the-art approaches.

2 The Proposed Method

2.1 Problem Statement

We now detail the human activity recognition problem on multi-modal sensory data. Each input sample $(x, y)$ consists of a 2-d vector $x$ and an activity label $y$. Let $x = [x_0, x_1, \ldots, x_K]$, where $K$ denotes the time window length and $x_i$ denotes the sensory vector collected at time point $i$. $x_i$ is the combination of multi-modal sensory data collected from testers' different body positions such as chests, arms and ankles. Suppose that $x_i = (m_1^i, m_2^i, \ldots, m_N^i) = (x_0^i, x_1^i, \ldots, x_P^i)$, where $m$ denotes data collected from each position, $N$ denotes the number of positions (in our datasets, $N = 3$), and $P$ denotes the number of values per vector. Therefore, $x \in \mathbb{R}^{K \times P}$ and $y \in [1, \ldots, C]$.
$C$ represents the number of activity classes. The goal of the proposed model is to predict the activity $y$.

2.2 Model Structure

The overview of the model structure is shown in Figure 1. At each step $s$, the agents select an active period together and individually select informative modalities from the input $x$. These agents share their information and independently decide where to "look" at the next step. The locations are determined spatially and temporally in terms of modalities and time. After several steps, the final classification is jointly conducted by the agents based on a sequence of the observations. Each agent can incrementally learn an efficient decision policy over episodes. Because they have the same goal, which is to jointly minimize the recognition loss, they collaborate with each other and learn to align their behaviors so as to achieve their common goal.

Multi-agent Collaboration. In this work we simplify activity recognition by dividing the activities into sub-motions and requiring each agent to select informative modalities that are associated with one motion. Suppose that we employ $H$ agents $a_1, a_2, \ldots, a_H$ (we assume $H = 3$ in this paper for simplicity). The workflows of $a_1, a_2, a_3$ are shown in red, green and blue in Figure 1. At each step $s$, each agent locally observes a small patch of $x$, which includes information of a specific modality from a motion in its active period. Let the observations be $e_1^s, e_2^s, e_3^s$ as Figure 1 shows. They are extracted from $x$ at the locations $(t^s, l_1^s)$, $(t^s, l_2^s)$ and $(t^s, l_3^s)$, respectively, where $t$ denotes the selected active period and $l$ denotes the location of a modality in the input $x$. The model encodes the region around $(t^s, l_i^s)$ ($i \in \{1, 2, 3\}$) with high resolution but uses a progressively lower resolution for points further from $(t^s, l_i^s)$ in order to remove noise and avoid information loss, as in [Zontak et al., 2013].
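The multi-resolution encoding described above can be sketched as follows. This is only a rough illustration in plain Python, not the authors' implementation: the function name `extract_glimpse`, the number of scales, the doubling of the patch size per scale, the average pooling and the zero padding at the borders are all assumptions, since the text does not specify them.

```python
def extract_glimpse(x, t, l, patch_h, patch_w, n_scales=2):
    """Return n_scales patches of size patch_h x patch_w centred near (t, l).

    Scale 0 copies the cells around (t, l) at full resolution. Each further
    scale covers a region twice as large and average-pools it back down to
    patch_h x patch_w, so distant cells are kept at a coarser resolution.
    All of these choices are illustrative assumptions.
    """
    n_rows, n_cols = len(x), len(x[0])
    glimpse = []
    for k in range(n_scales):
        f = 2 ** k                          # pooling factor for this scale
        top = t - (patch_h * f) // 2
        left = l - (patch_w * f) // 2
        patch = []
        for i in range(patch_h):
            row = []
            for j in range(patch_w):
                total = 0.0
                for di in range(f):
                    for dj in range(f):
                        r = top + i * f + di
                        c = left + j * f + dj
                        # Cells outside the input contribute zero (padding).
                        if 0 <= r < n_rows and 0 <= c < n_cols:
                            total += x[r][c]
                row.append(total / (f * f))
            patch.append(row)
        glimpse.append(patch)
    return glimpse


# A 20 x 23 input of ones, matching the window size used for MHEALTH.
x = [[1.0] * 23 for _ in range(20)]
centre = extract_glimpse(x, t=10, l=11, patch_h=2, patch_w=2)
corner = extract_glimpse(x, t=0, l=0, patch_h=2, patch_w=2)
```

A glimpse taken in the middle of the window sees only real data, whereas one taken at the corner is partly filled with padding, which is one reason a learned policy may prefer interior locations.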
We then further encode the observations into higher-level representations. For each agent $a_i$ ($i \in \{1, 2, 3\}$), the observation $e_i^s$ and the location $(t^s, l_i^s)$ are linearly transformed independently, parameterized by $\theta_e$ and $\theta_{tl}$, respectively. Next, the summation of these two parts is further transformed with another linear layer parameterized by $\theta_o$. The whole process can be summarized as the following equation:

$$o_i^s = f_o(e_i^s, t^s, l_i^s; \theta_e, \theta_{tl}, \theta_o) = L\big(L(e_i^s) + L(\mathrm{concat}(t^s, l_i^s))\big), \quad i \in \{1, 2, 3\}, \tag{1}$$

where $L(\cdot)$ denotes a linear transformation and $\mathrm{concat}(t^s, l_i^s)$ represents the concatenation of $t^s$ and $l_i^s$. Each linear layer is followed by a rectified linear unit (ReLU) activation. Therefore, $o_i^s$ contains information about "what" ($e_i^s$), "where" ($l_i^s$) and "when" ($t^s$).

Making multiple observations not only avoids processing the whole data at once but also reduces the information loss that would result from selecting only one region of data. Furthermore, multiple agents make observations individually so that they can represent activities with the collective modalities from different motions. The model can explore various combinations of modalities to recognize activities during learning.

We are then interested in the collaborative setting where the agents communicate with each other and share the observations they make. We obtain the shared observation $o_g^s$ by concatenating $o_1^s, o_2^s, o_3^s$:

$$o_g^s = \mathrm{concat}(o_1^s, o_2^s, o_3^s), \tag{2}$$

so that $o_g^s$ contains all the information observed by the three agents. A convolutional network is further applied to process $o_g^s$ and extract the informative spatial relations. The output is then reshaped to be the representation $r_g^s$.
$$r_g^s = f_c(o_g^s; \theta_c) = \mathrm{reshape}(\mathrm{Conv}(o_g^s)) \tag{3}$$

$r_g^s$ represents the activity to be identified with multiple modalities selected from motions on different body positions.

Attentive Selection. In this section, we detail how the modalities and the active period are selected attentively.

We first introduce the episodes in this work. The agents incrementally learn the attentive selection policies over episodes. In each episode, following the bottom-up processes, the model attentively selects data regions and integrates the observations over time to generate dynamic representations, in order to determine effective selections and maximize the rewards, i.e., minimize the loss. Based on this, an LSTM is appropriate for building an episode, as it incrementally combines information from time steps to obtain final results. As can be seen in Figure 1, at each step $s$, the LSTM module receives the representation $r_g^s$ and the previous hidden state $h^{s-1}$ as inputs. Parameterized by $\theta_h$, it outputs the current hidden state $h^s$:

$$h^s = f_h(r_g^s, h^{s-1}; \theta_h) \tag{4}$$

Now we introduce the selection module. The agents are supposed to select salient modalities and an active period at each step. To be specific, they need to select the locations where they make their next observations. The three agents control $l_1^{s+1}, l_2^{s+1}, l_3^{s+1}$ independently based on both the hidden state $h^s$ and their individual observations $o_1^s, o_2^s, o_3^s$, so that the individual decisions are made from the overall observation as well. $t^{s+1}$ is jointly decided based on $h^s$ only, since it is a common selection.
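As a rough illustration of the observation encoding in Eq. 1 and the sharing step in Eq. 2, the sketch below builds the three per-agent observations and concatenates them. It is a plain-Python sketch under several assumptions: the helper names, the random initialisation, the flattened patch length of 4, and the choice to reuse the same $\theta_e$, $\theta_{tl}$, $\theta_o$ for all agents and to concatenate into one flat vector are not confirmed by the text. The layer widths 128, 128 and 220 follow the sizes reported in the experiment settings.

```python
import random


def init_linear(n_in, n_out, rng):
    """Randomly initialised weights and zero biases for one linear layer."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def linear_relu(layer, v):
    """Apply a linear layer followed by a ReLU activation."""
    weights, bias = layer
    return [max(0.0, sum(w * a for w, a in zip(row, v)) + b)
            for row, b in zip(weights, bias)]


def encode_observation(e, t, l, params):
    """Eq. 1: o = L(L(e) + L(concat(t, l))), each L followed by ReLU."""
    h_e = linear_relu(params["e"], e)          # "what"
    h_tl = linear_relu(params["tl"], [t, l])   # "when" and "where"
    summed = [a + b for a, b in zip(h_e, h_tl)]
    return linear_relu(params["o"], summed)


def share_observations(observations):
    """Eq. 2: concatenate the agents' observations into o_g."""
    return [value for o in observations for value in o]


rng = random.Random(0)
patch_len = 4  # hypothetical length of a flattened patch e
params = {
    "e": init_linear(patch_len, 128, rng),
    "tl": init_linear(2, 128, rng),
    "o": init_linear(128, 220, rng),
}
patches = [[rng.random() for _ in range(patch_len)] for _ in range(3)]
locations = [(0.5, 0.1), (0.5, 0.4), (0.5, 0.9)]  # shared t, per-agent l
o_g = share_observations(
    [encode_observation(e, t, l, params) for e, (t, l) in zip(patches, locations)]
)
```

With three agents and a 220-dimensional observation each, the shared observation has 660 entries before the convolutional step of Eq. 3 is applied.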
The decisions are made by the agents' selection policies, which are defined as stochastic processes following Gaussian distributions:

$$l_i^{s+1} \sim P(\cdot \mid f_l(h^s, o_i^s; \theta_{l_i})), \quad i \in \{1, 2, 3\}, \tag{5}$$

and

$$t^{s+1} \sim P(\cdot \mid f_t(h^s; \theta_t)) \tag{6}$$

The purpose of stochastic selections is to explore more kinds of selection combinations such that the model can learn the best selections during training.

To align the agents' selection policies, we assign the agents a common goal: correctly recognizing activities after a sequence of observations and selections. They together receive a positive reward if the recognition is correct. Therefore, at each step $s$, a prediction $\hat{y}^s$ is made by:

$$\hat{y}^s = f_y(h^s; \theta_y) = \mathrm{softmax}(L(h^s)) \tag{7}$$

Usually, agents receive a reward $r$ after each step. In our case, however, since only the classification in the last step $S$ is representative, the agents receive a delayed reward $R$ after each episode:

$$R = \begin{cases} 1 & \text{if } \hat{y}^S = y \\ 0 & \text{if } \hat{y}^S \neq y \end{cases} \tag{8}$$

The target of optimization is to coordinate all the selection policies by maximizing the expected value of the reward $\bar{R}$ after several episodes.

2.3 Training and Optimization

This model involves parameters that define the multi-agent collaboration and the attentive selection: $\Theta = \{\theta_e, \theta_{tl}, \theta_o, \theta_c, \theta_h, \theta_{l_i}, \theta_t, \theta_y\}$ ($i \in \{1, 2, 3\}$). The parameters for classification can be optimized by minimizing the cross-entropy loss:

$$L_c = -\frac{1}{N} \sum_{n=1}^{N} \sum_{c=1}^{C} y_n(c) \log F_y(x), \tag{9}$$

where $F_y$ is the overall function that outputs $\hat{y}$ given $x$, $C$ is the number of activity classes, and $y_n(c) = 1$ if the $n$-th sample belongs to the $c$-th class and 0 otherwise.

However, the selection policies, which are mainly defined by $\theta_{l_i}$ ($i \in \{1, 2, 3\}$) and $\theta_t$, are expected to select a sequence of locations. Because the locations are sampled, the classification loss is not differentiable with respect to these parameters. In this view, we formulate the optimization problem as a Partially Observable Markov Decision Process (POMDP) [Cai et al., 2009]. Suppose $e^s = (e_1^s, e_2^s, e_3^s)$ and $lt^s = (l_1^s, l_2^s, l_3^s, t^s)$. We consider each episode as a trajectory $\tau = \{e^1, lt^1, y^1; e^2, lt^2, y^2; \ldots; e^S, lt^S, y^S\}$. Each trajectory represents one order of the observations, the locations and the predictions our agents make. After the agents repeat $N$ episodes, we obtain $\{\tau_1, \tau_2, \ldots, \tau_N\}$, and each $\tau$ has a probability $p(\tau; \Theta)$ of being obtained. The probability depends on the selection policy $\Pi = (\pi_1, \pi_2, \pi_3)$ of the agents.

Our goal is to learn the best selection policy $\Pi$ that maximizes $\bar{R}$. Specifically, $\Pi$ is decided by $\Theta$. Thus we need to find the optimal $\Theta^* = \arg\max_\Theta [\bar{R}]$. One common way is gradient ascent. Generally, given a sample $x$ with reward $f(x)$ and probability $p(x)$, the gradient can be calculated as follows:

$$\begin{aligned} \nabla_\theta E_x[f(x)] &= \nabla_\theta \sum_x p(x) f(x) \\ &= \sum_x p(x) \frac{\nabla_\theta p(x)}{p(x)} f(x) \\ &= \sum_x p(x) \nabla_\theta \log p(x) f(x) \\ &= E_x[f(x) \nabla_\theta \log p(x)] \end{aligned} \tag{10}$$

In our case, a trajectory $\tau$ can be seen as a sample, the probability of each sample is $p(\tau; \Theta)$, and the reward function is $\bar{R} = E_{p(\tau; \Theta)}[R]$. We have the gradient:

$$\nabla_\Theta \bar{R} = E_{p(\tau; \Theta)}[R \nabla_\Theta \log p(\tau; \Theta)] \tag{11}$$

By considering the training problem as a POMDP and following the REINFORCE rule [Williams, 1992], we obtain:

$$\nabla_\Theta \bar{R} = E_{p(\tau; \Theta)}\Big[R \sum_{s=1}^{S} \nabla_\Theta \log \Pi(y \mid \tau_{1:s}; \Theta)\Big] \tag{12}$$

Since we need several samples $\tau$ for one input $x$ to learn the best policy combination, we adopt Monte Carlo sampling, which utilizes randomness to yield results that might be theoretically deterministic. Supposing $M$ is the number of Monte Carlo sampling copies, we duplicate the same input $M$ times and average the prediction results.
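The identity in Eq. 10 can be checked numerically on a toy problem. The sketch below is illustrative only and is not the paper's training code: it assumes a one-dimensional Gaussian policy with mean `mu` and the variance 0.22 mentioned in the experiment settings, and a hypothetical 0/1 reward that is 1 when the sample falls in the interval [0.5, 1.5]. For this toy case the gradient of the expected reward with respect to `mu` has a closed form, so the Monte Carlo estimate can be compared against it.

```python
import math
import random


def score_function_gradient(mu, sigma, reward, n_samples, rng):
    """Monte Carlo estimate of d/dmu E[reward(x)] for x ~ N(mu, sigma^2).

    Uses E[f(x) * d/dmu log p(x)], where for a Gaussian with fixed sigma
    d/dmu log p(x) = (x - mu) / sigma^2.
    """
    total = 0.0
    for _ in range(n_samples):
        x = rng.gauss(mu, sigma)
        grad_log_p = (x - mu) / sigma ** 2
        total += reward(x) * grad_log_p
    return total / n_samples


def toy_reward(x):
    """Hypothetical delayed reward: 1 if the sample lands in [0.5, 1.5]."""
    return 1.0 if 0.5 <= x <= 1.5 else 0.0


def analytic_gradient(mu, sigma):
    """Closed-form d/dmu of P(0.5 <= x <= 1.5) for x ~ N(mu, sigma^2)."""
    def pdf(z):
        return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (pdf((0.5 - mu) / sigma) - pdf((1.5 - mu) / sigma)) / sigma


sigma = math.sqrt(0.22)
estimate = score_function_gradient(0.0, sigma, toy_reward, 20000, random.Random(0))
exact = analytic_gradient(0.0, sigma)
```

The estimate is positive, meaning that gradient ascent moves the policy mean towards the rewarded interval even though the reward itself is a non-differentiable step function.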
The $M$ copies generate $M$ subtly different results owing to the stochasticity, so we have:

$$\nabla_\Theta \bar{R} \approx \frac{1}{M} \sum_{i=1}^{M} R^{(i)} \sum_{s=1}^{S} \nabla_\Theta \log \Pi(y^{(i)} \mid \tau_{1:s}^{(i)}; \Theta), \tag{13}$$

where $M$ denotes the number of Monte Carlo samples, $i$ denotes the $i$-th duplicated sample, and $y^{(i)}$ is the correct label for the $i$-th sample. Therefore, the overall optimization can be summarized as maximizing $\bar{R}$ and minimizing Eq. 9. The detailed procedure is shown in Algorithm 1.

Algorithm 1 Training and Optimization

Require: sensory matrix $x$, label $y$, the length of episodes $S$, the number of Monte Carlo samples $M$.
Ensure: parameters $\Theta$.

1. $\Theta$ = RandomInitialize()
2. while training do
3. duplicate $x$ $M$ times
4. for $i$ from 1 to $M$ do
5. $l_1^{1(i)}, l_2^{1(i)}, l_3^{1(i)}, t^{1(i)}$ = RandomInitialize()
6. for $s$ from 1 to $S$ do
7. extract $e_1^{s(i)}, e_2^{s(i)}, e_3^{s(i)}$
8. $o_1^{s(i)}, o_2^{s(i)}, o_3^{s(i)}$ ← Eq. 1
9. $o_g^{s(i)}, r_g^{s(i)}, h^{s(i)}$ ← Eq. 2, Eq. 3, Eq. 4
10. $l_1^{s+1(i)}, l_2^{s+1(i)}, l_3^{s+1(i)}, t^{s+1(i)}$ ← Eq. 5, Eq. 6
11. $\hat{y}^{s(i)}$ ← Eq. 7
12. record $\tau_{1:s}^{(i)}$
13. end for
14. $R^{(i)}$ ← Eq. 8
15. end for
16. $\hat{y} = \frac{1}{M} \sum_{i=1}^{M} \hat{y}^{S(i)}$
17. $L_c, \nabla_\Theta \bar{R}$ ← Eq. 9, Eq. 13
18. $\Theta \leftarrow \Theta - \nabla_\Theta L_c + \nabla_\Theta \bar{R}$
19. end while
20. return $\Theta$

3 Experiments

3.1 Experiment Setting

We now introduce the settings in our experiments. The time window of inputs is 20 with 50% overlap. The size of each observation patch is set to $\frac{K}{8} \times \frac{P}{8}$, where $K \times P$ is the size of the inputs. In the partial observation part, the sizes of $\theta_e, \theta_{tl}, \theta_o$ are 128, 128 and 220, respectively. The filter size of the convolutional layer in the shared observation module is $1 \times M$ and the number of feature maps is 40, where $M$ denotes the width of $o_g^s$. The size of the LSTM cells is 220, and the length of episodes is 40. The Gaussian distribution that defines the selection policies has a variance of 0.22.
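The windowing described in the settings (windows of length 20 with 50% overlap) can be sketched as below. This is a minimal plain-Python sketch with a hypothetical function name; how the authors handle an incomplete trailing window or assign a label to each window is not stated, so dropping the incomplete tail here is an assumption.

```python
def sliding_windows(samples, window=20, overlap=0.5):
    """Cut a sequence of per-timestep samples into overlapping windows.

    With window=20 and overlap=0.5 the step between window starts is 10.
    An incomplete window at the end of the sequence is dropped (assumption).
    """
    step = max(1, int(window * (1.0 - overlap)))
    last_start = len(samples) - window
    return [samples[start:start + window]
            for start in range(0, last_start + 1, step)]


# 50 timesteps give windows starting at 0, 10, 20 and 30.
stream = list(range(50))
windows = sliding_windows(stream)
```

Each element of `stream` would in practice be one sensory vector $x_i$ with $P$ values, so every window forms a $K \times P$ input with $K = 20$.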
To ensure rigor, the experiments are performed with Leave-One-Subject-Out (LOSO) evaluation on four datasets: MHEALTH [Banos et al., 2014], PAMAP2 [Reiss and Stricker, 2012], UCI HAR [Anguita et al., 2013] and MARS. They contain data from 10, 9, 30 and 8 subjects, respectively.

3.2 Comparison with State-of-the-Art

To verify the overall performance of the proposed model, we first compare our model with other state-of-the-art methods. The compared methods include a convolutional model on multichannel time series for HAR (MC-CNN) [Yang et al., 2015], a CNN-based multi-modal fusion model (C-Fusion) [Radu et al., 2018], a deep multimodal HAR model with classifier ensemble (MARCEL) [Guo et al., 2016], an ensemble of deep LSTM learners for activity recognition (E-LSTM) [Guan and Plötz, 2017], a parallel recurrent model with convolutional attentions (PRCA) [Chen et al., 2018] and a weighted average spatial LSTM with selective attention (WAS-LSTM) [Zhang et al., 2018].

Table 1: The prediction performance (%) of the proposed approach and other state-of-the-art methods. * denotes attention-based state-of-the-art. The best performance is indicated in bold. MH, PMP and HAR denote MHEALTH, PAMAP2 and UCI HAR, respectively.

| Dataset | Metric | MC-CNN | C-Fusion | MARCEL | E-LSTM | PRCA* | WAS-LSTM* | Ours* |
|---|---|---|---|---|---|---|---|---|
| MH | Accuracy | 87.19 ± 0.77 | 88.66 ± 0.62 | 92.35 ± 0.46 | 91.58 ± 0.38 | 93.32 ± 0.75 | 91.42 ± 1.25 | **96.12 ± 0.37** |
| MH | Precision | 86.50 ± 0.61 | 86.36 ± 0.72 | 93.17 ± 0.84 | 90.50 ± 0.68 | 92.11 ± 0.96 | 91.35 ± 0.70 | **95.46 ± 0.33** |
| MH | Recall | 87.29 ± 0.44 | 89.68 ± 0.72 | 92.81 ± 0.44 | 91.58 ± 0.59 | 92.25 ± 0.94 | 91.99 ± 1.04 | **96.76 ± 0.30** |
| MH | F1 | 86.89 ± 0.66 | 87.98 ± 0.79 | 92.98 ± 0.74 | 91.03 ± 0.68 | 92.17 ± 1.06 | 91.66 ± 1.21 | **96.10 ± 0.47** |
| PMP | Accuracy | 81.16 ± 1.32 | 81.86 ± 0.74 | 82.87 ± 0.81 | 83.21 ± 0.68 | 82.39 ± 1.04 | 84.89 ± 2.18 | **90.33 ± 0.62** |
| PMP | Precision | 81.57 ± 0.89 | 81.63 ± 0.53 | 83.51 ± 0.71 | 84.01 ± 0.54 | 82.44 ± 0.99 | 84.44 ± 1.54 | **89.25 ± 0.78** |
| PMP | Recall | 81.43 ± 0.64 | 81.96 ± 0.89 | 81.12 ± 0.79 | 83.88 ± 0.74 | 82.86 ± 0.90 | 84.20 ± 1.83 | **90.49 ± 0.94** |
| PMP | F1 | 81.50 ± 0.72 | 81.79 ± 0.71 | 82.29 ± 0.76 | 83.94 ± 0.95 | 82.64 ± 1.19 | 84.81 ± 1.06 | **89.86 ± 0.81** |
| HAR | Accuracy | 75.86 ± 0.59 | 74.64 ± 0.78 | 80.16 ± 0.72 | 80.78 ± 0.94 | 81.29 ± 1.22 | 71.29 ± 1.08 | **85.72 ± 0.83** |
| HAR | Precision | 76.93 ± 0.78 | 73.30 ± 0.75 | 81.63 ± 0.50 | 81.34 ± 0.43 | 80.55 ± 1.26 | 70.76 ± 0.93 | **85.61 ± 0.53** |
| HAR | Recall | 75.81 ± 0.39 | 74.07 ± 0.48 | 80.81 ± 0.64 | 80.63 ± 0.54 | 81.66 ± 1.03 | 71.10 ± 1.37 | **85.08 ± 0.72** |
| HAR | F1 | 76.36 ± 1.11 | 73.68 ± 0.79 | 81.21 ± 0.85 | 80.98 ± 0.64 | 81.11 ± 1.02 | 70.92 ± 1.16 | **85.34 ± 0.58** |
| MARS | Accuracy | 81.34 ± 0.59 | 81.48 ± 0.56 | 81.68 ± 0.87 | 81.59 ± 0.77 | 85.38 ± 0.82 | 74.82 ± 1.42 | **88.29 ± 0.87** |
| MARS | Precision | 81.68 ± 0.62 | 81.84 ± 0.68 | 81.23 ± 0.84 | 81.79 ± 0.85 | 85.99 ± 1.07 | 75.89 ± 1.54 | **88.75 ± 0.81** |
| MARS | Recall | 81.06 ± 0.90 | 82.15 ± 0.82 | 82.44 ± 0.54 | 81.65 ± 0.93 | 84.95 ± 0.95 | 74.80 ± 1.63 | **87.20 ± 0.67** |
| MARS | F1 | 81.32 ± 0.42 | 81.99 ± 0.64 | 81.85 ± 0.97 | 81.71 ± 0.81 | 85.46 ± 1.02 | 75.34 ± 1.27 | **87.96 ± 0.74** |

Table 2: Ablation study. S1 ∼ S6 are six structures obtained by systematically removing five components from the proposed model. The considered components are: (a) the selection module, (b) the partial observation processing from $e_i^s$ to $o_i^s$ ($i \in \{1, 2, 3\}$), (c) the convolutional merge of shared observations, (d) the temporal attentive selection, (e) the multiple agents for selection.

| Ablation | MHEALTH Accuracy | MHEALTH Precision | MHEALTH Recall | MHEALTH F1 | PAMAP2 Accuracy | PAMAP2 Precision | PAMAP2 Recall | PAMAP2 F1 |
|---|---|---|---|---|---|---|---|---|
| S1 | 85.75 ± 0.70 | 84.67 ± 0.92 | 85.83 ± 0.78 | 84.34 ± 0.89 | 79.60 ± 0.56 | 79.86 ± 0.51 | 79.68 ± 0.49 | 79.57 ± 0.83 |
| S2 | 80.59 ± 1.54 | 80.37 ± 0.95 | 80.95 ± 1.44 | 80.00 ± 1.12 | 71.49 ± 1.55 | 71.62 ± 1.18 | 71.38 ± 1.36 | 71.49 ± 1.42 |
| S3 | 85.49 ± 0.88 | 85.67 ± 0.35 | 84.62 ± 0.71 | 85.14 ± 0.86 | 77.68 ± 0.73 | 77.35 ± 0.52 | 77.74 ± 0.82 | 77.04 ± 0.59 |
| S4 | 88.32 ± 0.75 | 87.11 ± 0.96 | 87.25 ± 0.94 | 87.17 ± 1.06 | 78.39 ± 1.04 | 78.44 ± 0.99 | 78.86 ± 0.90 | 78.64 ± 1.19 |
| S5 | 91.93 ± 0.94 | 90.85 ± 0.85 | 91.35 ± 0.73 | 91.88 ± 0.81 | 83.53 ± 0.95 | 83.66 ± 0.85 | 83.38 ± 0.61 | 83.51 ± 0.74 |
| S6 | 96.12 ± 0.37 | 95.46 ± 0.33 | 96.76 ± 0.30 | 96.10 ± 0.47 | 90.33 ± 0.62 | 89.25 ± 0.78 | 90.49 ± 0.94 | 89.86 ± 0.81 |

| Ablation | UCI HAR Accuracy | UCI HAR Precision | UCI HAR Recall | UCI HAR F1 | MARS Accuracy | MARS Precision | MARS Recall | MARS F1 |
|---|---|---|---|---|---|---|---|---|
| S1 | 73.68 ± 0.73 | 73.79 ± 0.54 | 73.98 ± 0.59 | 73.38 ± 0.42 | 79.78 ± 0.89 | 79.37 ± 0.82 | 79.49 ± 0.42 | 79.31 ± 0.64 |
| S2 | 68.95 ± 2.85 | 67.41 ± 2.97 | 67.92 ± 2.88 | 67.66 ± 2.81 | 71.94 ± 2.18 | 71.34 ± 2.52 | 71.99 ± 2.67 | 71.15 ± 2.71 |
| S3 | 75.45 ± 0.77 | 75.54 ± 1.27 | 75.49 ± 0.88 | 75.88 ± 0.94 | 78.34 ± 0.84 | 78.42 ± 0.83 | 78.48 ± 0.98 | 78.40 ± 0.99 |
| S4 | 76.29 ± 1.22 | 76.55 ± 1.26 | 77.66 ± 1.03 | 77.11 ± 1.02 | 81.38 ± 0.82 | 81.99 ± 1.07 | 81.95 ± 0.95 | 81.46 ± 1.02 |
| S5 | 80.71 ± 0.94 | 80.95 ± 1.82 | 80.44 ± 0.74 | 80.11 ± 0.52 | 85.51 ± 0.86 | 84.94 ± 0.73 | 85.73 ± 0.66 | 84.81 ± 0.98 |
| S6 | 85.72 ± 0.83 | 85.61 ± 0.53 | 85.08 ± 0.72 | 85.34 ± 0.58 | 88.29 ± 0.87 | 88.75 ± 0.81 | 87.20 ± 0.67 | 87.96 ± 0.74 |

As can be observed in Table 1, MARCEL, E-LSTM, PRCA, WAS-LSTM and the proposed model perform better than MC-CNN and C-Fusion on MHEALTH and PAMAP2, as these models have higher variance, i.e., higher capacity. They fit well when data contain numerous features and complex patterns.
On the other hand, data in UCI HAR and MARS have fewer features, but MARCEL, E-LSTM, PRCA and our model still perform well while the performance of WAS-LSTM deteriorates. The reason is that WAS-LSTM is based on a complex structure and requires more features as input. In contrast, MARCEL and E-LSTM adopt rather simple models such as DNNs and LSTMs. Despite the ensembles, they are still suitable for fewer features. PRCA and the proposed model select salient features directly with intuitive rewards, so they do not necessarily need a large number of features either. In addition, the attention-based methods, PRCA and WAS-LSTM, are more unstable than the other methods, since the selection is stochastic and they cannot guarantee the effectiveness of all the selected features. Overall, our model outperforms the compared state-of-the-art methods and eliminates the instability of regular selective attention.

3.3 Ablation Study

We perform a detailed ablation study to examine the contributions of the proposed model components to the prediction performance; the results are reported in Table 2. There are five removable components in this model: (a) the modality selection module, (b) the transformation from $e_i^s$ to $o_i^s$ ($i \in \{1, 2, 3\}$), (c) the convolutional network for higher-level representations, (d) the temporal attentive selection, (e) the multiple agents. We consider six structures:

S1: We first remove the selection module, including the observations, episodes, selections and rewards. For comparison, we set S1 to be a regular CNN as a baseline.

S2: We employ one agent but remove (b), (c), (d) and (e), so the workflow is: inputs → $e_i^s$ → LSTM → selections and rewards. The performance decreases and is more unstable than that of the other structures. Although S2 includes attention, the model does not include the previous selections in its observations, which influences the next decisions significantly.

S3: Based on S2, we add (b) to (a). (b) contributes considerably, since the prediction results are improved by 5% to 7%, because it feeds the selection history back to the agents for learning.

S4: We further consider (c) in the model. It can be observed that this setting also achieves better performance than S3, since it convolutionally merges the partial observations.

S5: (d) is added. The workflow is the same as S3, but the agents take an additional action, selecting $t^s$, which leads to another attention mechanism at the time level. The performance is improved by 3% to 5%.

S6: The proposed model. When combining all these benefits, our model achieves the best performance, higher than S5 by 5% to 7%.

3.4 Visualization and Explainability

The proposed method decomposes the activities into participating motions, from each of which the agents decide the most salient modalities individually, which makes the model explainable. We present the visualized process of recognizing standing, going upstairs, running and lying on MHEALTH. The available features include three 3-axis acceleration vectors from chests, arms and ankles, two ECG signals, two 3-axis angular velocity vectors and two 3-axis magnetism vectors from arms and ankles. Figure 2 shows the modality heatmaps of all agents.

Figure 2: Visualization of the selected modalities and time on MHEALTH. Panels (a)-(c) show $l_1$, $l_2$, $l_3$ for standing, (d)-(f) for going upstairs, (g)-(i) for running and (j)-(l) for lying. The input matrices' size is 20 × 23, where 20 is the length of the time window and 23 is the number of modalities. Thus each grid cell denotes an input feature, and the values in the cells represent the frequency with which this feature is selected. Lighter colors denote higher frequency. For clarity, a detailed illustration is provided in Table 3.
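A frequency map of the kind shown in Figure 2 could be accumulated along the following lines. This is a hedged plain-Python sketch rather than the authors' plotting code: the function name, the 2 × 2 patch size, the convention that a selection `(t, l)` marks the top-left cell of the patch, and the normalisation by the number of selections are all assumptions made for illustration.

```python
def selection_heatmap(selections, n_steps=20, n_modalities=23, patch_h=2, patch_w=2):
    """Fraction of selections that cover each (time, modality) cell.

    `selections` is a list of (t, l) pairs giving the top-left cell of each
    selected patch; patches are clipped at the borders of the input.
    """
    counts = [[0] * n_modalities for _ in range(n_steps)]
    for t, l in selections:
        for r in range(t, min(t + patch_h, n_steps)):
            for c in range(l, min(l + patch_w, n_modalities)):
                counts[r][c] += 1
    n = max(1, len(selections))
    return [[value / n for value in row] for row in counts]


# Two selections at the top-left corner and one at the bottom-right corner.
heat = selection_heatmap([(0, 0), (0, 0), (19, 22)])
```

Building one such map per agent and per activity gives a 20 × 23 grid for each of the twelve panels, with larger values corresponding to lighter cells.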
We observe that each agent does focus on only some of the modalities in a time period during recognition. Table 3 lists the most frequently selected modalities. We can observe that magnetism (orientation) is selected as one of the most active features for standing and lying, owing to the fact that it is easy to distinguish between standing and lying by people's orientation. Another example is that the most distinguishing characteristic of going upstairs is "up". Therefore, Z-axis acceleration is specifically selected by the agents for going upstairs. Also, identifying running involves acceleration, ECG and arm swing, which conforms to the experimental evidence as well. The agents also select several other features with lower frequencies, which avoids losing effective information.

Table 3: The active modalities for activities selected by the agents. X, Y, Z denote the axes of the data. Acc, Ang and Magn denote acceleration, angular velocity and magnetism, respectively. The most frequently selected locations are indicated in bold.

| Activity | Agent | Location |
|---|---|---|
| Standing | 1 | Y,Z-Magn-Ankle, X,Y-Acc-Arm |
| Standing | 2 | Y-Acc-Ankle, ECG1,2-Chest |
| Standing | 3 | X,Y-Ang-Ankle |
| Going Upstairs | 1 | Y,Z-Acc-Ankle, X,Y-Ang-Ankle |
| Going Upstairs | 2 | X,Y,Z-Acc-Ankle, X,Y-Ang-Ankle |
| Going Upstairs | 3 | Y,Z-Acc-Arm, X,Y,Z-Ang-Arm |
| Running | 1 | Y,Z-Acc-Chest, ECG1,2-Chest, X,Y,Z-Acc-Ankle |
| Running | 2 | X,Y,Z-Acc-Arm, X,Y-Ang-Arm |
| Running | 3 | Y,Z-Acc-Arm, X,Y,Z-Ang-Arm |
| Lying | 1 | Y,Z-Acc-Chest, ECG1,2-Chest |
| Lying | 2 | Y,Z-Magn-Ankle |
| Lying | 3 | X,Y,Z-Magn-Arm |

4 Conclusion

In this work, we first propose a selective attention method for the spatially-temporally varying salience of features. Then, a multi-agent approach is proposed to represent activities with collective motions. The agents cooperate by aligning their actions to achieve their common recognition target. We experimentally evaluate our model on four real-world datasets, and the results validate the contributions of the proposed model.

References

[Anguita et al., 2013] Davide Anguita, Alessandro Ghio, Luca Oneto, Xavier Parra, and Jorge Luis Reyes-Ortiz. A public domain dataset for human activity recognition using smartphones. In ESANN, 2013.

[Banos et al., 2014] Oresti Banos, Rafael Garcia, Juan A Holgado-Terriza, Miguel Damas, Hector Pomares, Ignacio Rojas, Alejandro Saez, and Claudia Villalonga. mHealthDroid: a novel framework for agile development of mobile health applications. In International Workshop on Ambient Assisted Living, pages 91–98. Springer, 2014.

[Cai et al., 2009] Chenghui Cai, Xuejun Liao, and Lawrence Carin. Learning to explore and exploit in POMDPs. In Advances in Neural Information Processing Systems (NIPS), pages 198–206, 2009.

[Chen et al., 2018] Kaixuan Chen, Lina Yao, Xianzhi Wang, Dalin Zhang, Tao Gu, Zhiwen Yu, and Zheng Yang. Interpretable parallel recurrent neural networks with convolutional attentions for multi-modality activity modeling. In Neural Networks (IJCNN), 2018 International Joint Conference on, pages 3016–3021. IEEE, 2018.

[Denil et al., 2012] Misha Denil, Loris Bazzani, Hugo Larochelle, and Nando de Freitas. Learning where to attend with deep architectures for image tracking. Neural Computation, 24(8):2151–2184, 2012.

[Freedman and Zilberstein, 2018] Richard Gabriel Freedman and Shlomo Zilberstein. Roles that plan, activity, and intent recognition with planning can play in games. In Workshops at the Thirty-Second AAAI Conference on Artificial Intelligence, 2018.

[Guan and Plötz, 2017] Yu Guan and Thomas Plötz. Ensembles of deep LSTM learners for activity recognition using wearables. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, IMWUT, 1(2):11, 2017.

[Guo et al., 2016] Haodong Guo, Ling Chen, Liangying Peng, and Gencai Chen. Wearable sensor based multimodal human activity recognition exploiting the diversity of classifier ensemble. In Proceedings of the 2016 ACM International Joint Conference on Pervasive and Ubiquitous Computing, UbiComp 2016, Heidelberg, Germany, September 12-16, 2016, pages 1112–1123, 2016.

[Guo et al., 2017] Xiaonan Guo, Jian Liu, and Yingying Chen. FitCoach: Virtual fitness coach empowered by wearable mobile devices. In 2017 IEEE Conference on Computer Communications, INFOCOM 2017, Atlanta, GA, USA, May 1-4, 2017, pages 1–9, 2017.

[Hammerla et al., 2016] Nils Y. Hammerla, Shane Halloran, and Thomas Plötz. Deep, convolutional, and recurrent models for human activity recognition using wearables. In Proceedings of the Twenty-Fifth International Joint Conference on Artificial Intelligence, IJCAI 2016, New York, NY, USA, 9-15 July 2016, pages 1533–1540, 2016.

[Lara and Labrador, 2013] Oscar D Lara and Miguel A Labrador. A survey on human activity recognition using wearable sensors. IEEE Communications Surveys & Tutorials, 15(3):1192–1209, 2013.

[Mnih et al., 2014] Volodymyr Mnih, Nicolas Heess, Alex Graves, et al. Recurrent models of visual attention. In Advances in Neural Information Processing Systems, pages 2204–2212, 2014.

[Pouyanfar et al., 2018] Samira Pouyanfar, Saad Sadiq, Yilin Yan, Haiman Tian, Yudong Tao, Maria Presa Reyes, Mei-Ling Shyu, Shu-Ching Chen, and SS Iyengar. A survey on deep learning: Algorithms, techniques, and applications. ACM Computing Surveys (CSUR), 51(5):92, 2018.

[Radu et al., 2018] Valentin Radu, Catherine Tong, Sourav Bhattacharya, Nicholas D Lane, Cecilia Mascolo, Mahesh K Marina, and Fahim Kawsar. Multimodal deep learning for activity and context recognition. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, IMWUT, 1(4):157, 2018.

[Reiss and Stricker, 2012] Attila Reiss and Didier Stricker. Introducing a new benchmarked dataset for activity monitoring. In Wearable Computers (ISWC), 2012 16th International Symposium on, pages 108–109. IEEE, 2012.

[Wang and Wang, 2017] Hongsong Wang and Liang Wang. Modeling temporal dynamics and spatial configurations of actions using two-stream recurrent neural networks. The Conference on Computer Vision and Pattern Recognition (CVPR), 2017.

[Williams, 1992] Ronald J Williams. Simple statistical gradient-following algorithms for connectionist reinforcement learning. Machine Learning, 8(3-4):229–256, 1992.

[Yang et al., 2015] Jianbo Yang, Minh Nhut Nguyen, Phyo Phyo San, Xiaoli Li, and Shonali Krishnaswamy. Deep convolutional neural networks on multichannel time series for human activity recognition. In IJCAI, pages 3995–4001, 2015.

[Zhang et al., 2018] Xiang Zhang, Lina Yao, Chaoran Huang, Sen Wang, Mingkui Tan, Guodong Long, and Can Wang. Multi-modality sensor data classification with selective attention. In Proceedings of the Twenty-Seventh International Joint Conference on Artificial Intelligence, IJCAI 2018, July 13-19, 2018, Stockholm, Sweden, pages 3111–3117, 2018.

[Zontak et al., 2013] Maria Zontak, Inbar Mosseri, and Michal Irani. Separating signal from noise using patch recurrence across scales. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 1195–1202, 2013.
