Evolving neural networks to follow trajectories of arbitrary complexity

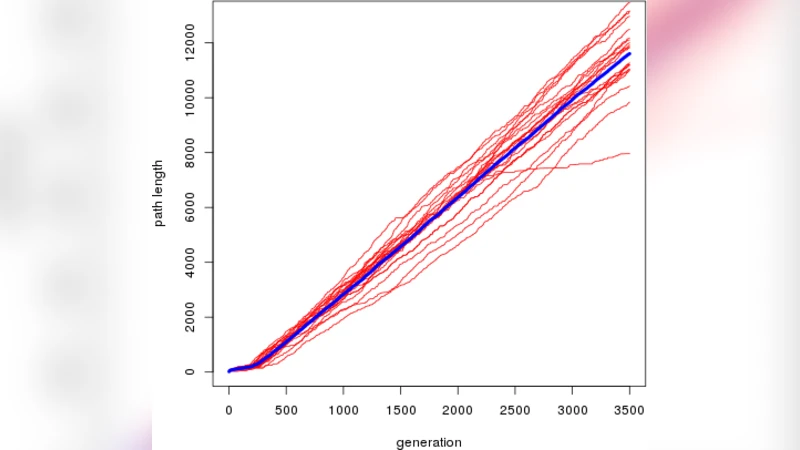

Many experiments have used evolutionary algorithms to learn the topology and connection weights of a neural network that controls a robot or virtual agent. These experiments are performed not only to better understand basic biological principles, but also in the hope that, as the methods progress, they will become competitive for automatically creating robot behaviors of interest. However, current methods are limited with respect to the (Kolmogorov) complexity of the evolved behavior. Using the evolution of robot trajectories as an example, we show that by adding four features to standard methods for the evolution of neural networks, namely (1) freezing of previously evolved structure, (2) temporal scaffolding, (3) a homogeneous transfer function for output nodes, and (4) mutations that create new pathways to outputs, we can achieve an approximately linear growth of behavioral complexity over thousands of generations. Overall, evolved complexity in the experiments reported here is up to two orders of magnitude higher than that achieved by standard methods, and the major factor limiting further growth is the available run time. Thus, the set of methods proposed here promises to be a useful addition to various current neuroevolution methods.

💡 Research Summary

The paper addresses a fundamental limitation in neuroevolutionary robotics: the rapid saturation of behavioral complexity when evolving neural network controllers. Standard approaches such as NEAT (NeuroEvolution of Augmenting Topologies) allow the network topology to grow, but they apply mutations uniformly across the whole genome and keep mutation rates fixed. Consequently, after a few hundred generations the evolution plateaus, producing only modestly complex behaviors.

To overcome this, the authors introduce four complementary mechanisms that together enable near‑linear growth of behavioral complexity over thousands of generations.

- Freezing of Previously Evolved Structure – Genes (nodes and connections) that have survived a predefined number of generations become immutable. Only recently added genes are eligible for mutation. This mimics biological regions with reduced mutation rates and protects useful sub‑structures from being destroyed.

- Temporal Scaffolding – The trajectory to be followed is presented incrementally. The agent first has to master a short segment; once it succeeds, a new segment is appended. This staged increase of task difficulty keeps selective pressure constant while allowing the controller to gradually acquire more sophisticated temporal dynamics.

- Homogeneous Transfer Function for Output Nodes – Instead of the usual tanh or linear averaging, the authors use a sine function, sin(πx), as the activation of output neurons. Because the sine is periodic, any new connection can drive the output to any value in the full range [−1, 1].
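The freezing rule can be illustrated with a minimal sketch. It assumes a hypothetical genome made of `Gene` records that store a connection weight and the generation in which the gene was added; `freeze_age` stands in for the paper's "predefined number of generations", and its value here is arbitrary. Only weight mutation is modeled; structural mutations would be restricted to young genes in the same way.

```python
import random
from dataclasses import dataclass


@dataclass
class Gene:
    """Hypothetical gene record: one connection weight plus its birth generation."""
    weight: float
    born: int


def mutable_genes(genome, generation, freeze_age):
    """Genes younger than freeze_age generations; all older genes are frozen."""
    return [g for g in genome if generation - g.born < freeze_age]


def mutate(genome, generation, freeze_age, sigma=0.1, rng=random):
    """Gaussian weight perturbation applied only to unfrozen genes."""
    for g in mutable_genes(genome, generation, freeze_age):
        g.weight += rng.gauss(0.0, sigma)
    return genome


# Usage: at generation 100 with freeze_age=20, only the two young genes can change.
genome = [Gene(0.5, born=0), Gene(-0.2, born=90), Gene(0.1, born=98)]
mutate(genome, generation=100, freeze_age=20)
print([round(g.weight, 3) for g in genome])  # first weight stays 0.5
```

Filtering by age at mutation time, rather than flagging genes permanently, keeps the sketch stateless; a real implementation might instead mark genes as frozen once and skip them thereafter.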
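Temporal scaffolding can be sketched as a stage counter that controls how much of the trajectory the agent must follow. This is an illustration under assumptions, not the authors' procedure: the trajectory is a hypothetical 1‑D list of target points, a "segment" is a fixed number of points (`segment_len`), and "mastering" a segment is taken to mean a mean absolute tracking error at or below a hypothetical `tolerance`.

```python
def scaffolded_target(trajectory, stage, segment_len):
    """Prefix of the trajectory that the agent must follow at the current stage."""
    return trajectory[: min(len(trajectory), stage * segment_len)]


def tracking_error(outputs, target):
    """Mean absolute deviation between controller outputs and the target prefix."""
    if len(outputs) < len(target):
        raise ValueError("controller produced fewer outputs than target points")
    return sum(abs(o - t) for o, t in zip(outputs, target)) / len(target)


def next_stage(stage, error, tolerance):
    """Append one more segment (advance a stage) once the current task is mastered."""
    return stage + 1 if error <= tolerance else stage


# Usage with a toy 1-D trajectory split into segments of two points.
trajectory = [0.0, 0.5, 1.0, 0.5, 0.0, -0.5]
stage = 1
target = scaffolded_target(trajectory, stage, segment_len=2)  # [0.0, 0.5]
error = tracking_error([0.0, 0.45], target)                   # about 0.025
stage = next_stage(stage, error, tolerance=0.05)              # advances to 2
```

Because the target only grows after success, the population always faces a task just beyond what it has already solved, which is the intent of the staged difficulty described above.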
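The advantage of the periodic output function can be seen by comparing it with tanh when the output node's net input is already large. In this sketch the existing net input (3.0) and the contribution of one newly added connection (0.5) are hypothetical values chosen for illustration.

```python
import math


def sine_output(net_input):
    """Periodic output transfer function sin(pi * x), with range [-1, 1]."""
    return math.sin(math.pi * net_input)


def tanh_output(net_input):
    """Conventional sigmoid-shaped transfer function, for comparison."""
    return math.tanh(net_input)


existing = 3.0      # hypothetical net input from previously evolved connections
new_contrib = 0.5   # hypothetical contribution of one newly added connection

for f in (tanh_output, sine_output):
    before, after = f(existing), f(existing + new_contrib)
    print(f"{f.__name__}: {before:+.3f} -> {after:+.3f}")
```

With tanh the output is saturated and barely moves (about 0.995 to 0.998), whereas the sine output swings from about 0 to −1. Since the sine behaves the same way everywhere along its input axis, a new connection's effect does not depend on how large the existing net input has become.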
