Bayesian Pitch Tracking Based on the Harmonic Model

Fundamental frequency is one of the most important characteristics of speech and audio signals. Harmonic model-based fundamental frequency estimators offer a higher estimation accuracy and robustness against noise than the widely used autocorrelation…

Authors: Liming Shi, Jesper Kjaer Nielsen, Jesper Rindom Jensen

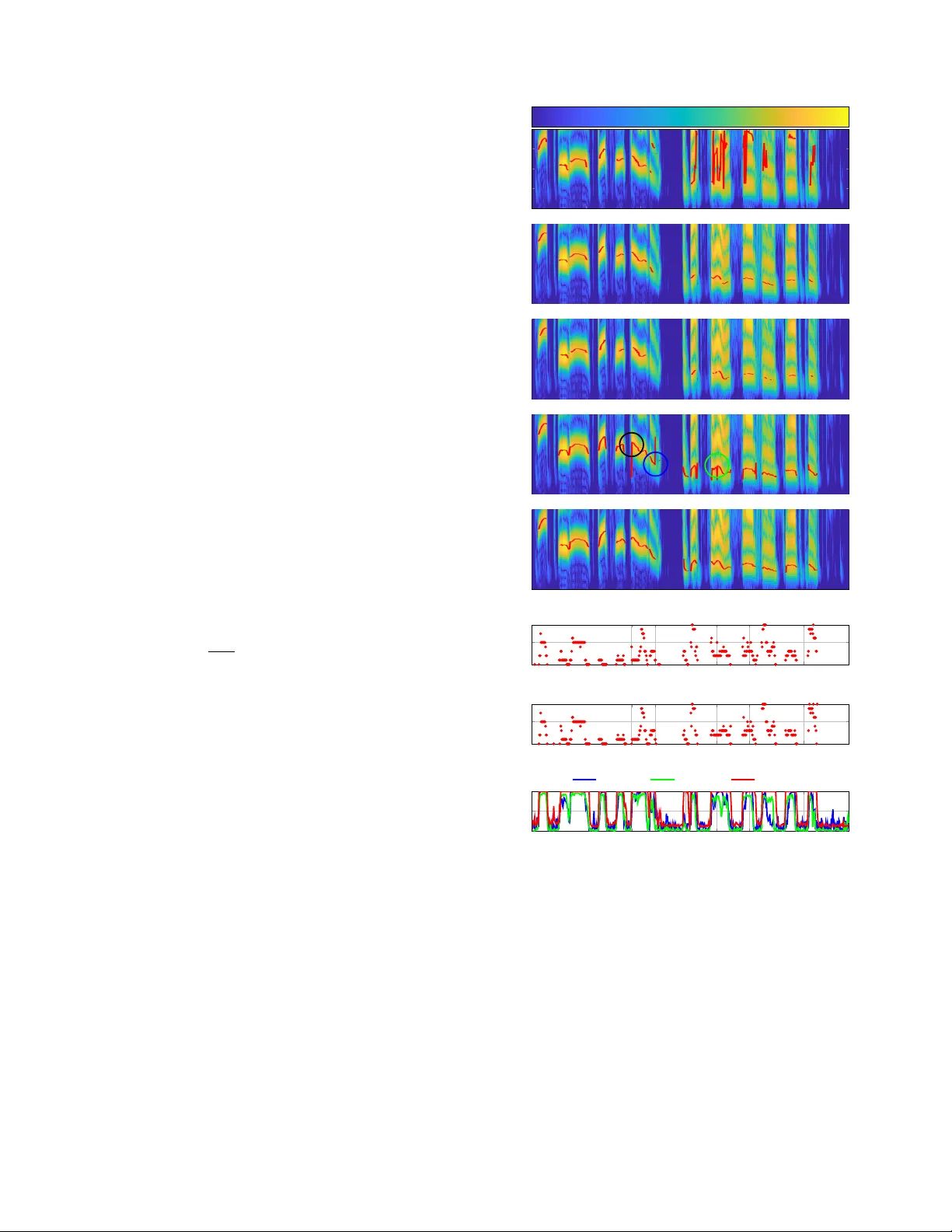

Preprint 1 Bayesian Pitch T racking Based on the Harmonic Model Liming Shi, Student Member , IEEE, Jesper Kjær Nielsen, Member , IEEE, Jesper Rindom Jensen, Member , IEEE, Max A. Little, and Mads Græsbøll Christensen, Senior Member , IEEE Abstract —Fundamental frequency is one of the most important characteristics of speech and audio signals. Harmonic model- based fundamental frequency estimators offer a higher esti- mation accuracy and rob ustness against noise than the widely used autocorr elation-based methods. Howev er , the traditional harmonic model-based estimators do not take the temporal smoothness of the fundamental frequency , the model order , and the voicing into account as they process each data segment independently . In this paper , a fully Bayesian fundamental frequency tracking algorithm based on the harmonic model and a first-order Markov process model is proposed. Smoothness priors are imposed on the fundamental frequencies, model orders, and voicing using first-order Mark ov process models. Using these Markov models, fundamental frequency estimation and voicing detection err ors can be reduced. Using the harmonic model, the proposed fundamental fr equency tracker has an improv ed rob ustness to noise. An analytical form of the likelihood function, which can be computed efficiently , is derived. Compared to the state-of-the-art neural network and non-parametric approaches, the proposed fundamental frequency tracking algorithm reduces the mean absolute errors and gross errors by 15% and 20% on the Keele pitch database and 36% and 26% on sustained /a/ sounds from a database of Parkinson’ s disease voices under 0 dB white Gaussian noise. A MA TLAB version of the proposed algorithm is made fr eely a vailable for r eproduction of the results 1 . Index T erms —Fundamental frequency or pitch tracking, har - monic model, Markov process, harmonic order , voiced-un voiced detection I . I N T RO D U C T I O N T HE problem of estimating the fundamental frequency or pitch information from noisy sound signals occurs in many applications, such as speech synthesis [1], voice disorder detection [2], and automatic speech recognition [3]. Funda- mental frequenc y is a physical feature defined as the lowest frequency component of a periodic signal, while pitch is a per- ceptual feature, related to human listening [4]. Our objective is to estimate fundamental frequency . But, following [5], [6], we do not distinguish between fundamental frequency and pitch and use them interchangeably . Pitch is usually estimated using a segment of sound signals (a.k.a., frame) with a fixed segment This work was funded by the Danish Council for Independent Research, grant ID: DFF 4184-00056. L. Shi, J. K. Nielsen, J. R. Jensen and M. G. Christensen are with the Audio Analysis Lab, CREA TE, Aalborg Univ ersity , DK-9000 Aalborg, Denmark, e- mail: {ls, jrj, jkn,mgc}@create.aau.dk M. A. Little is with the Engineering and Applied Science, Aston Uni- versity and Media Lab, Massachusetts Institute of T echnology , e-mail: max.little@aston.ac.uk 1 An implementation of the proposed algorithm using MA TLAB may be found in https://tinyurl.com/yxn4a543 length (e.g., 15-35 ms for speech signals [7]). Numerous pitch estimation algorithms have been proposed in the last fifty years, which can be categorized as unsupervised and su- pervised approaches. Unsupervised pitch estimation methods can be further categorized as non-parametric and parametric approaches. Examples of non-parametric approaches include the YIN [8], RAPT [9], SWIPE [10] and PEF A C [5] methods. YIN and RAPT compute autocorrelation functions from short frames of sound signals in the time domain. Howe v er , they are not robust against noise [11] and suffer from pitch octave errors (that is, a rational multiple of the true pitch) [12]. T o reduce the pitch octave errors, SWIPE uses the cross- correlation function against a sawtooth signal combined with the spectrum of the signal, and exploits only the first and prime harmonics of the signal. PEF A C estimates the pitch in the log- frequency domain by con volving each frame’ s po wer spectrum with a filter that sums the energy of the pitch harmonics. Dynamic programming is used to obtain a smooth estimate of the pitch track. Due to the filtering and built-in spectral normalization methods, PEF A C is claimed to work in high lev els of noise. Howe ver , a long frame length (e.g., 90.5 ms in PEF A C by default) is required to obtain good pitch estimation accuracy which is not practical in man y real-time applications. More recently , a single frequency filtering approach based pitch estimation algorithm is proposed, which exploits the high SNR frequency component to overcome the effects of degradations in speech signal [13]. By contrast, parametric methods (e.g., harmonic model- based pitch estimators [6], [14], [15]) ha ve also been pro- posed for pitch estimation. Compared with non-parametric approaches, harmonic model-based pitch estimators work with a short frame length (e.g., 20 ms), and show higher rob ust- ness to additive noise, fewer octav e errors, and better time- frequency resolution [16], [17]. Recently , a computationally efficient pitch estimator based on a harmonic model has been proposed, which is referred to as the fast NLS [11]. Ho wev er , one problem with most of the harmonic model based pitch estimators is that they do not take the temporal smoothness of the pitch, the harmonic order , and voicing into account as they process each frame independently . As a result, outliers, due to octav e errors or voicing detection errors, occur . A sample-by- sample Kalman filtering-based pitch tracking algorithm using a time-varying harmonic model is proposed in [18] by assuming that the pitch and weights follow first-order Markov chains. A particle filtering-based pitch tracking algorithm based on the source-filter speech model combining with the harmonic modelling of input source is introduced in [19]. Ho wev er , the Preprint 2 good performance of the algorithms in [18] and [19] requires careful initializations. Moreov er , it is difficult to integrate the time-varying model order into these algorithms, see [20] as an example of combing discrete and continuous state spaces. W ith either a known or estimated model order , a maximum a posteriori (MAP) pitch estimator based on the harmonic model has been de veloped to exploit the temporal dynamics of the pitch [21]. The model weights and observ ation noise variance are estimated by maximizing the maximum likeli- hood function (i.e., a frequentist perspectiv e). Smooth pitch estimates are obtained, and thus the pitch octave errors are reduced. An additional v oicing state is further considered in [22] for estimating the pitch and obtaining the voiced-un voiced decision jointly . Howe ver , the pitch tracking approach in [21] and [22] has many drawbacks. First, the assumption of a fixed harmonic order for multiple frames is not valid. In fact, in audio signals, the harmonic order often changes from frame to frame [23]. Second, matrix inv ersions are required to be stored for each candidate pitch to reduce the computational complexity . Third, errors can be found in transition frames where the v oicing changes, because the past pitch information is not exploited when an unv oiced frame occurs. Finally , it is well-known that estimating parameters from a frequentist’ s perspectiv e leads to ov er-fitting [24]. More recently , neural network based supervised pitch track- ing algorithms were proposed [25]–[27], which show robust- ness against noise. The method proposed in [26] uses deep stacking network for joint speech separation and pitch esti- mation. The CREPE [27] discretises the pitch in logarithmic scale and uses a deep conv olutional neural network to produce a pitch estimate. Howe ver , the unv oiced/silent state is not considered in the model. The maximum v alue of the output of the neural netw ork is used as a heuristic estimate of the voicing probability . Moreov er , to satisfy user’ s demand for different frequency resolution or frame length, the whole system is required to be retrained, which is usually time-consuming. In this paper , we propose a fully Bayesian harmonic model- based pitch tracking approach. By using the harmonic model, as opposed to non-parametric methods, improved robustness against background noise and octav e errors can be obtained. First-order Markov processes are used to capture the temporal dynamics of pitch, harmonic order , and voicing. By using information from pre vious frames, the rate of octav e errors and the voicing detection errors can be further reduced. Compared to [21] and [22], we not only consider the temporal dynamics of pitch and voicing, but also of the harmonic order , which enables us to detect if any pitch is present, and estimate the pitch and harmonic order jointly and accurately . Moreover , past information on pitch is exploited to improve robustness against temporal voicing changes. Furthermore, by adopting a fully Bayesian approach to model weights and observation noise v ariances, the overfitting can be av oided. By assigning a proper transition pdf for the weights, fast NLS [11] can be easily incorporated into the proposed algorithm, leading to low computational and storage complexities. The rest of the paper is organized as follows. In Section II, we briefly revie w general Bayesian tracking theory . In Section III and Section IV, we present the proposed harmonic obser- vation and state ev olution models, respectiv ely . In Section V, the proposed pitch tracking algorithm is deriv ed based on the harmonic observation and state e volution models. In Section VI, we briefly revie w the prewhitening step for dealing with non-Gaussian noise. Simulation results are giv en in Section VII, and the conclusions given in Section VIII. Notation: Boldface symbols in lowercase and uppercase letters denote column vectors and matrices, respectively . I I . B AY E S I A N T R A C K I N G In this section, we briefly revie w Bayesian tracking in gen- eral, which forms the fundamental structure of the proposed pitch tracking algorithm. Consider the problem of estimating the state sequence { x n } , 1 ≤ n ≤ N from noisy observations { y n } , 1 ≤ n ≤ N , related by y n = h ( x n , v n ) , (1) where h ( · ) denotes a mapping function between the state and observation v ectors, v n denotes an i.i.d. observation noise sequence, and n denotes the time inde x. The state sequence follows a first-order Markov process: x n = f ( x n − 1 , m n ) , (2) where f ( · ) denotes a mapping function between the current and previous states, and m n denotes an i.i.d. state noise sequence. The elements in the state vector x n can either be continuous or discrete. Assume that the posterior pdf p ( x n − 1 | Y n − 1 ) is av ailable with the initial pdf being defined as p ( x 0 ) , where Y n − 1 denotes a collection of observ ation vectors from the first observ ation vector up to the ( n − 1) th observation vector , i.e., Y n − 1 = [ y 1 , · · · , y n − 1 ] . The objectiv e of Bayesian tracking is to obtain a posterior distribution ov er the state vector x n based on the current and previous observations recursiv ely , i.e., p ( x n | Y n ) . The posterior p ( x n | Y n ) can be obtained in two stages: predict and update. In the prediction stage, we obtain the prediction pdf p ( x n | Y n − 1 ) by using the transition pdf p ( x n | x n − 1 ) from (2), i.e., p ( x n | Y n − 1 ) = ˆ p ( x n | x n − 1 ) p ( x n − 1 | Y n − 1 ) d x n − 1 , 2 ≤ n ≤ N , p ( x 1 ) = ˆ p ( x 1 | x 0 ) p ( x 0 ) d x 0 , n = 1 , (3) which is known as the Chapman-K olmogorov equation. Note that if the elements in x n are all discrete v ariables, the integration operator should be replaced with the summation operator . In the update stage, combining (1) and the prediction pdf from the prediction stage, Bayes’ rule can be applied to obtain the posterior, i.e., p ( x n | Y n ) = p ( y n | x n , Y n − 1 ) p ( x n | Y n − 1 ) p ( y n | Y n − 1 ) , 2 ≤ n ≤ N , p ( x 1 | Y 1 ) = p ( y 1 | x 1 ) p ( x 1 ) p ( y 1 ) , n = 1 , (4) Preprint 3 where p ( y n | x n , Y n − 1 ) and p ( y 1 | x 1 ) are the likelihood func- tions and p ( y n | Y n − 1 ) and p ( y 1 ) are the normalization factors, respectiv ely . Closed form solutions can be obtained for (3) and (4) in at least two cases. In the first case, when both v n and m n are drawn from Gaussian distributions with known parameters, and both h ( x n , v n ) and f ( x n − 1 , m n ) are linear functions ov er the v ariables, (3) and (4) reduce to the well- known Kalman-filter [24]. In the second case, when the state space is discrete and has a limited number of states, (3) and (4) reduce to the forward step of the forward-backward algorithm for hidden Markov model (HMM) inference [24]. In other cases, the inference of the posterior p ( x n | Y n ) can be approximated using Monte Carlo approaches, such as particle filtering [28]. Next, we define the mapping function h ( · ) and formulate the observation equation (1) based on the harmonic model in Section III, and then explain the state ev olution model (2) for the proposed pitch tracking algorithm in Section IV. I I I . H A R M O N I C O B S E RV A T I O N M O D E L A. The harmonic observation model Consider the general signal observation model giv en by y n = s n + v n , (5) where the observation vector y n is a collection of M samples from the n th frame defined as y n = [ y n, 1 , · · · , y n,M ] T , the clean signal vector s n and noise vector v n are defined similarly to y n , M is the frame length in samples and n is the frame index. W e assume that v n is a multi variate white noise processes with zero mean and diagonal covariance matrix σ 2 n I , σ 2 n is the noise variance, I is the identity matrix. When voiced speech or music is present, we assume that the pitch, model weights and model order are constant over a short frame (typically 15 to 35 ms for speech signals and longer for music signals) and s n,m (i.e., the m th element of s n ) follows the harmonic model, i.e., H 1 : s n,m = K n X k =1 [ α k,n cos ( k ω n m ) + β k,n sin ( k ω n m )] , (6) where α k,n and β k,n are the linear weights of the k th harmonic, ω n = 2 π f n /f s is the normalized digital radian frequency , f s is the sampling rate, and K n is the number of harmonics. When voiced speech/music is absent (unv oiced or silent), a null model is used, i.e., H 0 : y n = v n . (7) Note that, based on the source-filtering model of speech generation, the unv oiced speech can be modelled as a coloured Gaussian process [29]. The observ ation noise in practice may hav e non-stationary and non-Gaussian properties, such as babble noise. Ho wev er , we can deal with this by prewhitening the observ ation signals [23], which will be described in Section VI. Writing (6) in matrix form and combining (5) and (6) yields H 1 : y n = Z ( ω n , K n ) a K n + v n , (8) where Z ( ω 0 , K n ) = [ c ( ω n ) , · · · , c ( K n ω n ) , d ( ω n ) , · · · , d ( K n ω n )] , c ( ω n ) = [ cos ( ω n 1) , · · · , cos ( ω n M )] T , d ( ω n ) = [ sin ( ω n 1) , · · · , sin ( ω n M )] T , a K n = [ α 1 ,n , · · · , α K n ,n , β 1 ,n , · · · , β K n ,n ] T . W e can further write (7) and (8) together by introducing a binary voicing indicator variable u n , i.e., y n = u n Z ( ω n , K n ) a K n + v n , (9) where u n ∈ { 0 , 1 } . When u n = 0 and u n = 1 , (9) reduces to the unv oiced and voiced models (7) and (8), respectiv ely . W e collect all the unknown variables into the state vector x n = [ a K n , σ 2 n , ω n , K n , u n ] T . Comparing (9) and (1), we can conclude that the mapping function h ( · ) is a nonlinear function w .r .t. the state vector x n . Moreover , the state vector x n contains continuous variables a K n , σ 2 n , ω n and discrete variables K n and u n . Howe ver , due to the non-linear char- acteristics of (9) w .r .t. ω n , uniform discretisation over the pitch ω n is commonly used [11]. An off-grid estimate of ω n can be obtained by pitch refinement algorithms, such as gradient descent [30]. Our target is to obtain estimates of the fundamental frequency ω n , the harmonic order K n , and the voicing indicator u n , that is a subset of x n defined as ¨ x n = [ ω n , K n , u n ] T , from the noisy observation y n . I V . T H E S TA T E E V O L U T I O N M O D E L In this section, we deri ve the state ev olution model (2) or more generally the transition probability density/mass function (pdf/pmf) p ( x n | x n − 1 , Y n − 1 ) for continuous/discrete states of the proposed model. Following the fast NLS pitch es- timation approach [11], we uniformly discretize the pitch ω n ∈ { ω f , 1 ≤ f ≤ F } over the range [ ω min , ω max ] , where ω min and ω max denote the lowest and highest pitches in the searching space, respectively . Prior information can be used to set ω min and ω max . For example, pitch is usually between 70 to 400 Hz for speech signals. The grid size is set to j F ω max 2 π k − l F ω min 2 π m + 1 , where F denotes the DFT size for computing the likelihood function (see Section V and [11] for further details), b·c and d·e denote the flooring and ceiling operators, respecti vely . It is also sho wn that the optimal choice of F depends on the frame length and the harmonic order [11]. Howe ver , for simplicity and fast implementation, in this paper , we set F = 2 14 . The state space for the discrete variables can be expressed as {M ( n ) : [ ω n = ω f , K n = k , u n = 1] T , 1 ≤ f ≤ F , 1 ≤ k ≤ K max } ∪ {M 0 ( n ) : u n = 0 } . The prediction pdf p ( x n | Y n − 1 ) defined in (3) can be factorized as p ( x n | Y n − 1 ) = p ( a K n | σ 2 n , ¨ x n , Y n − 1 ) × p ( σ 2 n | ¨ x n , Y n − 1 ) p ( ¨ x n | Y n − 1 ) . (10) Preprint 4 W e first explain the transition pdfs for the continuous vari- ables σ 2 n and a K n , and then discuss the transition pmfs for the discrete variables ω n , K n and u n . The selection of a state ev olution model is a trade-off between being physically accurate and ending up with a practical solution. A. T ransition pdfs for the noise variance and weights T o obtain the prediction pdf for the noise variance p ( σ 2 n | ¨ x n , Y n − 1 ) , the transition pdf for the noise variance p ( σ 2 n | σ 2 n − 1 , ¨ x n , Y n − 1 ) should be defined. A reasonable as- sumption for the noise v ariance is that it changes slo wly from frame to frame. For example, the unknown parameter σ 2 n can be assumed to ev olve according to an in verse Gamma distribution [31], i.e. p ( σ 2 n | σ 2 n − 1 ) = I G ( σ 2 n | c, dσ 2 n − 1 ) . (11) where I G ( x | α, β ) = β α Γ( α ) x − α − 1 exp( − β x ) and Γ( · ) denotes the gamma function. With this transition pdf, an analytical form of the posterior distribution on x n cannot be deriv ed. A sequential Monte Carlo approach can be used to ap- proximate the posterior numerically [32]. Howe ver , the ma- jor drawback of any Monte Carlo filtering strategy is that sampling in high-dimensional spaces can be inef ficient [33]. A Rao-blackwellized particle filtering approach [34], which marginalises out some of the variables for statistical variance reduction, can be used to deal with this problem. Howe ver , we do not pursue this approach any further in this paper, and leav e it for future work. Instead, for simplicity , we assume independence between σ 2 n and σ 2 n − 1 , and use the Jeffery’ s prior , i.e., p ( σ 2 n | σ 2 n − 1 , ¨ x n , Y n − 1 ) ∝ 1 /σ 2 n , σ 2 n > 0 . (12) As can be seen, the Jeffery’ s prior (12) is a limiting case of (11) with c → 0 and d → 0 . Similarly , we define the transition pdf for the weights as p ( a K n | a K n − 1 , σ 2 n , ¨ x n , Y n − 1 ) . Imposing smoothness depen- dency on the weight time e volution can reduce pitch octa ve errors [35]. Howe ver , in order to use the fast algorithm [11], we assume that the model weights between consecutive frames are conditionally independent giv en pre vious observ ations and the rest of unknown variables. Follo wing [36], we use the hierarchical prior p ( a K n | a K n − 1 , σ 2 n , ¨ x n , Y n − 1 , g n ) = N ( a K n | 0 , g n σ 2 n ( Z ( ω n , K n ) T Z ( ω n , K n ) − 1 ) , (13) p ( g n | δ ) = δ − 2 2 (1 + g n ) − δ / 2 , g > 0 , (14) where N ( x | µ , Σ ) denotes that the vector x has the multiv ari- ate normal distribution with mean µ and covariance matrix Σ . The prior distribution for the weights (13) is known as Zellner’ s g-prior [37]. As can be seen from (13), giv en ω n and K n , the prior cov ariance matrix is a scaled version of the Fisher information matrix. W ith Zellner’ s g-prior, a closed- form calculation of the marginal likelihood can be obtained [38]. Moreover , the fast algorithm in [11] for computing the marginal likelihood can be applied (see Section V for detail). ¨ x n − 1 ¨ x n ¨ x n +1 a K n − 1 a K n a K n +1 y n − 1 y n y n +1 g n − 1 g n g n +1 σ 2 n − 1 σ 2 n σ 2 n +1 Fig. 1. A graphical model of the proposed method with shaded nodes indicating observed variables. The graphical model for the proposed method is sho wn in Fig. 1. Note that, instead of obtaining point estimates of the noise variance and weight parameters using maximum likelihood [21], a Bayesian approach is used to represent the full uncertainty over these parameters. B. T ransition pmfs for ω n , K n and u n In [21], to reduce octav e errors, a first-order Markov model is used for the pitch ev olution provided that the harmonic order is fix ed and known/estimated for multiple frames. Another voicing e volution model is further considered in [22] by imposing the so-called "hang-over" scheme [39]. Although in some cases, the harmonic order may not be of interest, it is still necessary to estimate it to obtain correct pitch estimates [40]. In fact, considering the temporal dynamics of the model order helps reducing the octav e errors, which will be verified by the simulation results. Moreov er , using priors for the model order is also necessary for model comparison [36]. In this paper , we propose to track the pitch ω n , the harmonic order K n and the voicing indicator u n jointly . More specifically , we impose smoothness constraints on ω n and K n , and hang- ov er on voicing state using first-order Markov processes. The transition probability for the n th frame to be voiced with pitch ω n and harmonic order K n when the previous frame is also voiced with ω n − 1 and K n − 1 can be expressed as p ( M ( n ) |M ( n − 1)) = p ( ω n , K n | ω n − 1 , K n − 1 , u n − 1 = 1 , u n = 1) × p ( u n = 1 | u n − 1 = 1) . (15) W e assume that the pitch ω n and harmonic order K n ev olv e according to their own, independent dynamics given u n = 1 and u n − 1 = 1 , i.e., p ( ω n , K n | ω n − 1 , K n − 1 , u n = 1 , u n − 1 = 1) = p ( ω n | ω n − 1 , u n = 1 , u n − 1 = 1) × p ( K n | K n − 1 , u n = 1 , u n − 1 = 1) , (16) which means when both time frame n − 1 and n are voiced, the pitch and harmonic order only depend on their pre vious Preprint 5 ω n − 1 ω n ω n +1 u n − 1 u n u n +1 K n − 1 K n K n +1 Fig. 2. A graphical model specifying conditionally independence relations for the discrete variables. states. In fact, this assumption is only true when the product of the maximum allowed harmonic order and the pitch is less than half of the sampling frequency . Howe ver , by using a Bayesian approach, a model with a larger harmonic order is more penalized than with a smaller one. Even if a large value is used for the maximum allowed harmonic order in the proposed approach, the posterior model probability with a large harmonic order can be small [41]. In [42], an infinite number of harmonics is used, and the non-parametric prior distribution is used to penalize the models with large harmonic orders. By assuming the pitch and harmonic order are conditionally independent given u n = 1 and u n − 1 = 1 , the Bayesian inference of the model posterior , sho wn in Section V, can be simplified. The transition probability for the n th frame to be voiced with pitch ω n and harmonic order K n when the previous frame is un voiced/silent can be expressed as p ( M ( n ) |M 0 ( n − 1)) = p ( ω n , K n | u n = 1 , u n − 1 = 0) p ( u n = 1 | u n − 1 = 0) . (17) The priors from an un voiced frame to a voiced frame p ( ω n , K n | u n = 1 , u n − 1 = 0) are set to p ( ω m , K m | Y m , u m = 1) , which can be calculated as p ( ω m , K m | Y m , u m = 1) = p ( ω m , K m , u m = 1 | Y m ) 1 − p ( u m = 0 | Y m ) , (18) where m is the closest frame inde x to n that satisfies the constraint p ( u m = 0 | Y m ) < 0 . 5 ( m th frame is voiced). In fact, if the previous frame is not voiced, we exploit the information from the latest frame that is voiced as the prior for the pitches and harmonic orders. The motiv ation for this choice is that the pitch and harmonic order usually do not change abruptly after a short segment of un voiced/silent frames. Using the past information as the pri or , robustness against the voicing state changes can be improved. The graphical model for the ev olution of ¨ x ( n ) is shown in Fig. 2. Assuming the Markov processes are time-in v ariant, we can express the transition matrices for the pitch, harmonic order and voicing as A ω , A K and A u , respectively . V . P I T C H T R AC K I N G In this section, a joint pitch and harmonic order tracking, and voicing detection algorithm is derived based on the Bayesian tracking formulas (3) and (4). First, note that, by assuming that σ 2 n and σ 2 n − 1 are conditionally independent giv en ¨ x n and Y n − 1 , and a K n and a K n − 1 are conditionally independent giv en σ 2 n , ¨ x n and Y n − 1 , the prediction pdfs are equal to the transition pdfs, i.e., p ( σ 2 n | ¨ x n , Y n − 1 ) = p ( σ 2 n | σ 2 n − 1 , ¨ x n , Y n − 1 ) , (19) p ( a K n | σ 2 n , ¨ x n , Y n − 1 ) = ˆ p ( a K n | a K n − 1 , σ 2 n , ¨ x n , Y n − 1 , g n ) p ( g n ; δ ) dg n . (20) Based on (3), prediction pmfs for discrete variables p ( ¨ x ( n ) | Y n − 1 ) can be expressed as p ( M ( n ) | Y n − 1 ) = X M ( n − 1) p ( M ( n ) |M ( n − 1)) p ( M ( n − 1) | Y n − 1 )+ p ( M ( n ) |M 0 ( n − 1)) p ( M 0 ( n − 1) | Y n − 1 ) , (21) p ( M 0 ( n ) | Y n − 1 ) = 1 X h =0 p ( u n = 0 | u n − 1 = h ) p ( u n − 1 = h | Y n − 1 ) = p ( u n = 0 | u n − 1 = 0) p ( M 0 ( n − 1) | Y n − 1 )+ p ( u n = 0 | u n − 1 = 1)(1 − p ( M 0 ( n − 1) | Y n − 1 )) . (22) W ith the prediction pdfs and pmfs in hand, we can obtain the update equation based on (4). In order to obtain the posteriors for the pitch, harmonic order and voicing indicators, the weights and noise variance can be integrated out from the update equation, i.e., p ( ¨ x n | Y n ) ∝ ˆ p ( y n | x n , Y n − 1 ) p ( x n | Y n − 1 ) d a K n dσ 2 n = p ( y n | ¨ x n , Y n − 1 ) p ( ¨ x n | Y n − 1 ) , (23) where p ( y n | ¨ x n , Y n − 1 ) denotes a marginal likelihood, defined as p ( y n | ¨ x n , Y n − 1 ) = ˆ p ( y n | x n ) p ( a K n | σ 2 n , ¨ x n , Y n − 1 ) × p ( σ 2 n | ¨ x n , Y n − 1 ) p ( g n ; δ ) d a K n dσ 2 n dg n . (24) Using (9), (12), (13), (14), (19) and (20), a closed-form marginal likelihood can be obtained, i.e., p ( y n | ¨ x n , Y n − 1 ) = ( δ − 2) 2 K n + δ − 2 2 F 1 M 2 , 1; 2 K n + δ 2 ; R 2 ( ω n , K n ) u n × m M ( y n ) , (25) Preprint 6 where m M ( y n ) = Γ( M 2 ) ( π || y n || 2 2 ) M 2 , (26) R 2 ( ω n , K n ) = y T n Z ( ω n , K n ) ˆ a K n y T n y n , (27) ˆ a K n = ( Z ( ω n , K n ) T Z ( ω n , K n )) − 1 Z ( ω n , K n ) y n , (28) m M ( y n ) denotes the null model likelihood (i.e., p ( y n | u n = 0) ) and 2 F 1 denotes the Gaussian hypergeometric function [43]. T o compute R 2 ( ω n , K n ) for all the candidate pitches and harmonic orders, the fast algorithm [11] can be applied. Moreov er , from a computational point of view , a Laplace approximation of (24) can be derived as an alternativ e instead of marginalizing w .r .t. g n analytically [36]. Note that, for the discrete vector ¨ x n , it should satisfy the normalisation constraint, 1 = X ¨ x n p ( ¨ x n | Y n ) = p ( M 0 ( n ) | Y n ) + X M ( n ) p ( M ( n ) | Y n ) . (29) Finally , estimates of the pitch and harmonic order and the voiced/un v oiced state can be jointly obtained using the maximum a posterior (MAP) estimator . More specifically , the n th frame is labeled as v oiced if p ( u n = 0 | Y n ) < 0 . 5 , and the pitch and harmonic order are obtained as ( ˆ ω n , ˆ K n ) = max ω n ,K n p ( ω n , K n , u n = 1 | Y n ) . (30) The proposed Bayesian pitch tracking algorithm is shown in Algorithm 1. T o make inferences, we need to specify the transition matrices for the pitch p ( ω n | ω n − 1 , u n = 1 , u n − 1 = 1) , the harmonic order p ( K n | K n − 1 , u n = 1 , u n − 1 = 1) and p ( u n | u n − 1 ) . Following [21], we set p ( ω n | ω n − 1 , u n = 1 , u n − 1 = 1) = N ( ω n | ω n − 1 , σ 2 ω ) . The transition probability for the model order is chosen as p ( K n | K n − 1 , u n = 1 , u n − 1 = 1) = N ( K n | K n − 1 , σ 2 K ) . Smaller σ 2 ω and σ 2 K lead to smoother estimates of the pitch and harmonic order while larger values make the inference less dependent on the previous estimates. The matrix A u is controlled by p ( u n = 1 | u n − 1 = 0) and p ( u n = 0 | u n − 1 = 1) . In order to reduce the false negati ve (wrongly classified as unv oiced when a frame is voiced) rate, we set p ( u n = 1 | u n − 1 = 0) = 0 . 4 , p ( u n = 0 | u n − 1 = 1) = 0 . 3 , respectiv ely , that is, the transition probability from un v oiced to voiced is higher than from voiced to un voiced. Note that each row of A ω , A K , and A u is normalised to ensure they are proper pmfs. By setting σ 2 ω → ∞ , σ 2 K → ∞ , p ( u n = 1 | u n − 1 = 0) = 0 . 5 and p ( u n = 0 | u n − 1 = 1) = 0 . 5 , the proposed algorithm reduces to the fast NLS algorithm [11]. V I . P R E W H I T E N I N G The fast NLS and proposed pitch tracking algorithm are de- riv ed under the assumption of white Gaussian noise. Howe v er , this assumption is usually violated in practice, for example, babble noise in a conference hall. Therefore, a prewhitening step is required to deal with the inconsistency between the white Gaussian noise model assumption and real life noise Algorithm 1 The proposed Bayesian pitch tracking 1: Initiate the harmonic order K max , transition matrices A ω , A K and A u , and the initial probability p ( u 0 | y 0 ) and p ( ω 0 , K 0 , u 0 = 1 | y 0 ) satisfying the constraint p ( u 0 = 0 | y 0 ) + P ω 0 ,K 0 p ( ω 0 , K 0 , u 0 = 1 | y 0 ) = 1 2: for n = 1 , 2 , · · · do 3: Pr ediction step: 4: Obtain p ( M ( n ) | Y n − 1 ) based on (21), (15) and (17). 5: Obtain p ( M 0 ( n ) | Y n − 1 ) based on (22). 6: Update step: 7: Calculate p ( y n | ¨ x n , Y n − 1 ) using the fast weight esti- mation algorithm [11] and (25). 8: Calculate the unnormalised posteriors p ( M ( n ) | Y n ) and p ( M 0 ( n ) | Y n ) based on (23). 9: Normalise the posteriors based on the constraint (29). 10: MAP estimation: 11: if p ( M 0 ( n ) | Y n ) > 0 . 5 then 12: The n th frame is labeled as unv oiced/silent. 13: else 14: The n th frame is labeled as voiced. 15: Estimating ˆ ω n and ˆ K n based on (30). 16: Update p ( ω m , K m | Y m , u m = 1) based on (18). 17: end if 18: end for model. A linear prediction (LP) based prewhitening step is applied to each frame to deal with the non-white Gaussian noise (see [23], [44] for detail). The power spectral density (PSD) of the noise giv en noisy signals is estimated using the minimum mean-square error (MMSE) estimator [45]. W e refer to the fast NLS and proposed algorithm with pre whitening step as Prew-Fast NLS and Prew-Proposed, respectiv ely . V I I . S I M U L A T I O N In this section, we test the performance of the proposed harmonic model-based pitch tracking algorithm on real speech signals. A. Databases The databases used for e valuating the performance of dif- ferent algorithms are as follows: K eele pitch database : containing 10 spoken sentences from fiv e male and fiv e female speakers at a sampling rate of 20 kHz [46]. the "ground truth" pitch estimates are extracted from electroglottography with 10 ms time frame increment and 25.6 ms frame length. In fact, there are many spikes and wrong estimates in the "ground truth" pitch values, especially in the transient frames. Howe ver , we present the results for the Keele database to facilitate comparison with other pitch estimation algorithms that use this database. P arkinson’ s disease database : containing 130 sustained /a/ phonations from patients with Parkinson’ s disease [47] at a sampling rate of 44.1 kHz. Each of the phonations is in one second length. The estimated "ground truth" pitches in 10 ms time frame increment are extracted from electroglottography (EGG). Preprint 7 B. P erformance measur es Three performance measures are considered: T otal err or ratio (TER) [22]: voicing detection performance measure. It is calculated based on the ratio between the number of incorrect v oicing detection (false positiv e and true negati v e) estimates and the number of total estimates. Gr oss err or ratio (GER) [10]: accuracy measure of pitch estimates. It is computed based on the ratio between the number of pitch estimates that differ by more than 20 percents from the ground truth and the number of total estimates. The un v oiced frames from the ground truth are excluded and the pitch value of the voiced frame that is wrongly labeled as un v oiced frames by different pitch estimation algorithms is set to 0. Mean absolute error (MAE) [47]: accuracy measure of pitch estimates. It is computed based on mean of the absolute errors between the ground truth and estimates. The un voiced frames from the ground truth are excluded and the oracle voicing detector is used for all the algorithms. C. Results First, the proposed approach is tested on concatenated speech signals uttered by a female speaker first, male speaker second, sampled at 16 kHz. The spectrogram of the clean speech signals, pitch estimates, order estimates and the voicing detection results for PEF A C, CREPE, YIN, fast NLS and the proposed algorithm are sho wn in Fig. 3. The time frames of the spectrograms without red lines on top are un v oiced or silent frames. The v ariances for the transition matrices σ 2 ω and σ 2 K are set to 16 π 2 f 2 s and 1 , respectiv ely . The SNR for white Gaussian noise is set to 0 dB. The candidate pitch ω 0 is constrained to the range 2 π [70 400] /f s for PEF A C, YIN, fast NLS and the proposed algorithm. Howe v er , the results for the neural network based approach CREPE is based on the model with the pitch range 2 π [32 . 7 1975 . 5] /f s provided by the authors [27]. T o change the settings for CREPE, re-training of the neural network model is required. The maximum allowed harmonic order for the proposed and fast NLS is set to 10. The frame length is M = 400 samples (25 ms) with 60% overlap. As can be seen from Fig. 3, the voicing detection results of both the fast NLS and the proposed algorithm are better than those of YIN, PEF AC and CREPE. For example, the frames around 2.8 s are correctly classified as voiced by the fast NLS and the proposed, b ut wrongly labeled as unv oiced by YIN, PEF A C and CREPE. Fast NLS suffers from octav e errors, and has outliers particularly in the transition frames where voicing decisions change. In the transition frame around 1.8 s, the pitch and number of harmonics are wrongly estimated to 84.8 Hz and five, respectively , by the fast NLS. In contrast, they are estimated to 248.8 Hz and one, respectiv ely , by the proposed. Clearly , the estimates of the proposed fit better to the spectrogram than the estimates of the fast NLS. The reason for the robustness against transient frames of the proposed algorithm is that the pitch and harmonic order information of the latest voiced frame is used as the prior , i.e. (18). The harmonic order of the frame in 2.2 s is estimated to two by both the fast NLS and the proposed. Howe ver , the 0 0.1 0.2 0.3 0.4 [dB] 20 10 0 − 10 − 20 − 30 0 0.1 0.2 0.3 0.4 0 0.1 0.2 0.3 0.4 F requency [kHz] 0 0.1 0.2 0.3 0.4 0 0.1 0.2 0.3 0.4 1 6 10 Order estimates of the fast-NLS 1 6 10 Order estimates of the prop osed 0 1.8 2.2 2.8 3.4 4 5 0 0.5 1.0 Time [s] V oicing probability PEF AC CREPE Proposed Fig. 3. Pitch estimates from PEF A C, CREPE, YIN, fast NLS and the proposed, the order estimates of the fast NLS and the proposed, and the voicing probabilities for real speech signals in 0 dB white Gaussian noise (from top to bottom). pitch is wrongly estimated to 288.8 Hz by the fast NLS, but correctly estimated to 150.4 Hz by the proposed. By imposing temporal smoothness prior on the pitch using the Markov process model p ( ω n | ω n − 1 , u n = 1 , u n − 1 = 1) , smoother estimates of the pitches are obtained. An octave error is produced by the fast NLS in the frame around 3.4 s. The pitch and harmonic order are estimated to 72 and six, respectively , by the fast NLS, but 143.2 and three, respecti vely , by the proposed. In fact, harmonic orders are estimated to three in the Preprint 8 0 0.5 1.0 1.5 [dB] 20 10 0 − 10 − 20 − 30 1 2 3 4 5 6 7 8 0 0.5 1.0 1.5 Time [s] F requency [kHz] Fig. 4. Pitch estimates of fast NLS and the proposed algorithm for musical sounds in -5 dB white Gaussian noise (from top to bottom). surrounding frames by both the fast NLS and the proposed. By using Bayesian tracking for the pitches and harmonic orders, smoother estimates of the pitches and harmonic orders are obtained. In conclusion, the proposed Bayesian pitch tracking algorithm obtains smooth estimates of the pitch and harmonic orders, and good voicing detection results by exploiting the past information. The second experiment tests the performance of the pro- posed algorithm on musical instrument sounds (flute) from the Univ ersity of Iowa Musical Instrument Samples database, decreasing from note B5 to C5. The spectrogram of the clean signals and the pitch estimates from fast NLS and the proposed algorithm are shown in Fig. 4. The music signal is downsampled to 16 kHz. The SNR for Gaussian white noise is set to -5 dB. The pitch ω 0 is constrained to the range 2 π [100 1500] /f s . The other parameters are set to the same as for Fig. 3. As can be seen, the proposed algorithm not only has smoother estimates of the pitch than fast NLS but also better voicing detection results. In the third e xperiment, we test the performance of the proposed algorithm on the Keele pitch database with white Gaussian noise. A v erages ov er 20 independent Monte Carlo experiments are used to obtain the experimental results. TER, GER and MAE for different SNRs for PEF A C, SWIPE, YIN, CREPE, fast NLS and the proposed algorithm are shown in Fig. 5, Fig. 6 and Fig. 7, respecti vely . For YIN, fast NLS and the proposed algorithm, the frame length is set to the same as the reference, i.e., 25.6 ms. Frame lengths 25.6 ms and 90.5 ms (default value) are used for PEF AC. The other parameters are set to the same as for Fig. 3. As can be seen from Fig. 5 and Fig. 6, PEF A C has better performance in terms of both GER and TER than CREPE at -10 dB SNR. Moreover , using a longer frame length (90.5 ms) for PEF A C leads to a lower GER but a higher TER compared with a shorter frame length (25.6 ms). SWIPE and YIN hav e similar performance in terms of T ABLE I T OT A L E R RO R R A T IO I N C O LO R E D N O IS E SNR -5.00 0.00 5.00 10.00 PEF AC (90.5 ms) Babble 0.42 0.38 0.34 0.29 Factory 0.34 0.30 0.27 0.25 PEF AC (25.6 ms) Babble 0.41 0.35 0.29 0.24 Factory 0.30 0.25 0.22 0.21 SWIPE Babble 0.50 0.42 0.29 0.19 Factory 0.52 0.49 0.40 0.28 CREPE Babble 0.40 0.29 0.21 0.16 Factory 0.39 0.28 0.20 0.15 YIN Babble 0.50 0.43 0.32 0.22 Factory 0.50 0.43 0.32 0.22 Prew-F ast NLS Babble 0.35 0.27 0.18 0.12 Factory 0.28 0.20 0.14 0.11 Prew-Proposed Babble 0.34 0.25 0.17 0.12 Factory 0.28 0.20 0.15 0.12 T ABLE II G RO S S E R RO R R A T I O I N C O LO R E D N O IS E SNR -5.00 0.00 5.00 10.00 PEF AC (90.5 ms) Babble 0.62 0.51 0.44 0.39 Factory 0.56 0.47 0.41 0.38 PEF AC (25.6 ms) Babble 0.72 0.65 0.60 0.57 Factory 0.68 0.61 0.57 0.54 SWIPE Babble 0.96 0.81 0.55 0.36 Factory 1.00 0.94 0.76 0.54 CREPE Babble 0.73 0.50 0.34 0.24 Factory 0.75 0.53 0.36 0.26 YIN Babble 0.95 0.83 0.61 0.42 Factory 0.96 0.83 0.61 0.42 Prew-F ast NLS Babble 0.57 0.41 0.30 0.24 Factory 0.55 0.42 0.33 0.28 Prew-Proposed Babble 0.53 0.36 0.27 0.24 Factory 0.51 0.37 0.29 0.25 T ABLE III M E AN A B S O LU T E V A LU E [ H Z ] I N C O L OR E D N O IS E W I T H O R AC L E V OI C I N G D E TE C T OR SNR -5.00 0.00 5.00 10.00 PEF AC (90.5 ms) Babble 49.81 39.15 31.73 27.96 Factory 36.20 31.24 27.97 26.69 PEF AC (25.6 ms) Babble 81.49 72.65 65.71 60.54 Factory 72.61 64.93 57.93 54.20 SWIPE Babble 31.73 17.94 10.95 8.04 Factory 43.91 27.02 16.02 10.51 CREPE Babble 68.95 44.93 30.57 21.89 Factory 79.00 52.41 34.51 24.70 YIN Babble 56.25 39.05 23.86 14.96 Factory 57.37 38.53 23.41 14.97 Prew-F ast NLS Babble 64.81 45.79 31.45 23.79 Factory 74.58 57.88 44.93 36.50 Prew-Proposed Babble 33.33 17.91 12.22 10.81 Factory 19.32 13.20 11.23 10.48 Preprint 9 − 10 − 5 0 5 10 15 20 0 10 20 30 40 50 SNR [dB] T otal error ratio [%] PEF AC (90.5 ms) PEF AC (25.6 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 5. T otal error ratio in different SNRs for the Keele pitch database − 10 − 5 0 5 10 15 20 0 20 40 60 80 SNR [dB] GER [%] PEF AC (90.5 ms) PEF AC (25.6 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 6. Gross error ratio in dif ferent SNRs for the Keele pitch database TER and GER. The fast NLS method outperforms the PEF A C, SWIPE, YIN and CREPE. By imposing a smoothing prior on the pitches, harmonic orders and the voicing and using the harmonic model combined, the proposed algorithm achiev es lower GER and TER than the fast-NLS. As can be seen from Fig. 7, when the oracle voicing detector is used, the SWIPE has the lowest MAE from 5 to 20 dB while the proposed algorithm achieves the best performance from -10 to 0 dB. In the fourth experiment, the performance of the proposed algorithm with prewhitening is tested on the Keele pitch database in colored noise, i.e., babble noise and factory noise. The TER, GER and MAE results for Prew-proposed, Prew- fast NLS, PEF A C, Y in and SWIPE are sho wn in I, II and III, respectiv ely . The linear prediction order for the prewhitening is set to 30. The maximum allowed harmonic order for the − 10 − 5 0 5 10 15 20 0 10 30 50 SNR [dB] MAE [Hz] PEF AC (90.5 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 7. Mean absolute error in different SNRs for the Keele pitch database with oracle voicing detector proposed and fast NLS is set to 30. The other parameters are set to the same as for Fig. 5. As can be seen from T ABLE I and II, PEF A C with 90.5 ms and 25.6 ms hav e a lower TER and GER than YIN and SWIPE in -5 and 0 SNR conditions. The Prew-Proposed and Prew-Fast NLS hav e lower voicing detection errors and Gross errors than YIN, PEF AC and SWIPE in both babble and factory noise conditions. Although similar performance in term of TER can be seen for Pre w-Proposed and Prew-Fast NLS, the Pre w- Proposed has a lower GER than Pre w-Fast NLS. As can be seen from T ABLE III, when the oracle v oicing detector is used, the SWIPE achie ves the lo west MAE in babble noise. The Prew-proposed has a comparable performance with the SWIPE in babble noise and has the best performance in f actory noise. In the fifth experiment, we inv estigate the effect of re ver - beration on the performance of different pitch estimation algo- rithms. Rev erberation is the process of multi-path propagation and occurs when the speech or audio signals are recorded in an acoustically enclosed space. A commonly used metric to measure the rev erberation is the rev erberation time (R T60) [48]. The re verberated signals used for testing are generated by filtering the signal by synthetic room impulse responses (RIRs) with R T60 varying from 0.2 to 1 s in 0.1 s step. The dimension of the room is set to 10 × 6 × 4 m. The distance between the source and microphone is set to 1 m. The RIRs are generated using the image method [49] and implemented using the RIR Generator toolbox [50]. The position of the receiv er is fixed while the position of the source is v aried randomly from 60 degrees left of the receiv er to 60 degrees right of the receiver for each Monte Carlo experiment. The TER, GER and MAE results on the Keele pitch database for the proposed, fast NLS, PEF A C, Y in and SWIPE are sho wn in Fig. 8, Fig. 9 and Fig. 10, respectiv ely , where the parameters are set to the same as for Fig. 5. As can be seen from Fig. 8, the PEF A C (90.5 ms) has the lowest v oicing detection errors in Prepri n t 10 0 . 2 0 . 3 0 . 4 0 . 5 0 . 6 0 . 7 0 . 8 0 . 9 1 0 10 20 30 40 R T60 [s] T otal error ratio [%] PEF AC (90.5 ms) PEF AC (25.6 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 8. T otal error ratio in different rev erberation time for the Keele pitch database 0 . 2 0 . 3 0 . 4 0 . 5 0 . 6 0 . 7 0 . 8 0 . 9 1 0 20 40 60 R T60 [s] GER [%] PEF AC (90.5 ms) PEF AC (25.6 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 9. Gross error ratio in different reverberation time for the Keele pitch database more reverberated conditions (R T60 from 0.5 to 1 s) while the proposed algorithm has a better v oicing detection performance in less reverberated conditions. The proposed and the fast NLS has similar performance in terms of TER. Howe ver , as can be seen from Fig. 9, the proposed outperforms the PEF AC, SWIPE, CREPE, YIN and fast NLS in terms of GER. From Fig. 10, we can conclude that SWIPE has the best performance while the proposed is the second best one in terms of MAE. In the final experiment, the performance of the proposed algorithm is tested on sustained /a/ signals (voiced) from the Parkinson’ s disease database. The signals are do wnsampled to 16 kHz. TER, GER and MAE for different SNRs are shown in Fig. 11, Fig. 12 and Fig. 13, respectively . The parameters 0 . 2 0 . 3 0 . 4 0 . 5 0 . 6 0 . 7 0 . 8 0 . 9 1 0 10 20 30 R T60 [s] MAE [Hz] PEF AC (90.5 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 10. Mean absolute error in different rev erberation time for the Keele pitch database with oracle voicing detector − 15 − 10 − 5 0 5 10 15 20 0 20 40 60 80 100 SNR [dB] T otal error ratio [%] PEF AC (90.5 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 11. T otal error ratio in different SNRs for the Parkinson’ s disease database are set to the same as for Fig. 5. Similar conclusions to Fig. 5 and Fig. 6 can be drawn from Fig. 11 and Fig. 12. The proposed algorithm has the best performance in terms of the TER and GER. Moreover , the proposed algorithm presents the lowest MAE, especially from -15 to 0 dB. The spectrogram of one of the sustained /a/ sounds from the P arkinson’ s disease database, pitch estimates of the PEF A C (oracle), YIN (oracle) and the proposed algorithm in 0 dB white Gaussian noise are shown in Fig. 14. The oracle voicing detector from the ground truth (all v oiced) is used for both PEF AC and YIN. As can be seen from Fig. 14, the proposed algorithm outperforms the PEF A C (oracle) and YIN (oracle). Based on the above experiments, PEF A C obtains a better pitch estimation and v oicing detection performance than the neural network-based CREPE in low SNR scenarios. The Prepri nt 11 − 15 − 10 − 5 0 5 10 15 20 0 20 40 60 80 100 SNR [dB] GER [%] PEF AC (90.5 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 12. Gross error ratio in different SNRs for the Parkinson’ s disease database − 15 − 10 − 5 0 5 10 15 20 0 10 30 50 70 SNR [dB] MAE [Hz] PEF AC (90.5 ms) SWIPE CREPE YIN F ast NLS Proposed Fig. 13. Mean absolute error in different SNRs for the Parkinson’ s disease database with oracle voicing detector SWIPE offers good performance in terms of MAE in high SNRs. The proposed algorithm obtains superior performance in terms of GER, TER and MAE compared to PEF A C, SWIPE,YIN, CREPE, and the fast NLS in lo w SNR scenarios for the K eele pitch database and Parkinson’ s disease database. V I I I . C O N C L U S I O N S In this paper, a fully Bayesian harmonic model-based pitch tracking algorithm is proposed. Using a parametric harmonic model, the proposed algorithm shows good robustness against noise. The non-stationary ev olution of the pitch, harmonic order and voicing state are modelled using first-order Markov chains. A fully Bayesian approach is applied for the noise v ari- ance and weights to av oid ov er-fitting. Using the hierarchical g-prior for the weights, the likelihood function can be easily 0 100 200 300 400 [dB] 20 10 0 − 10 − 20 − 30 0 100 200 300 400 F requency [Hz] 0 0 . 5 1 0 100 200 300 400 Time [s] Fig. 14. Pitch estimates from PEF A C (oracle), YIN (oracle), and the proposed for sustained /a/ sounds from a database of Parkinson’ s disease voices in 0 dB white Gaussian noise. ev aluated using the fast NLS. The computational complexity of the recursiv e calculation of the predicted and posterior distributions is reduced by exploiting conditional indepen- dence between the pitch and harmonic order giv en the voicing indicators. Simulation results show that the proposed algorithm has good robustness against voicing state changes by carrying past information on pitch ov er the unv oiced/silent segments. The results of the pitch estimates and voicing detection for spoken sentences and sustained vowels are compared against ground truth estimates in the Keele and Parkinson’ s disease databases, showing that the proposed algorithm presents good pitch estimation and voicing detection accuracy ev en in very noisy conditions (e.g., -15 dB). R E F E R E N C E S [1] D. Erro, I. Sainz, E. Na vas, and I. Hernaez, “Harmonics plus noise model based vocoder for statistical parametric speech synthesis, ” IEEE J . Sel. T opics Signal Pr ocess. , v ol. 8, no. 2, pp. 184–194, April 2014. [2] A. Tsanas, M. A. Little, P . E. McSharry , and L. O. Ramig, “ Accurate telemonitoring of parkinson’ s disease progression by noninv asiv e speech tests, ” IEEE T rans. Biomed. Eng. , vol. 57, no. 4, pp. 884–893, 2010. [3] P . Ghahremani, B. BabaAli, D. Povey , K. Riedhammer , J. Trmal, and S. Khudanpur, “ A pitch extraction algorithm tuned for automatic speech recognition, ” in Proc. IEEE Int. Conf. Acoust., Speech, Signal Pr ocess. IEEE, 2014, pp. 2494–2498. [4] D. Gerhard, Pitch extr action and fundamental frequency: History and curr ent techniques . Department of Computer Science, University of Regina Regina, Canada, 2003. [5] S. Gonzalez and M. Brookes, “PEF AC-a pitch estimation algorithm robust to high lev els of noise, ” IEEE/ACM T rans. Audio, Speech, and Lang. Pr ocess. , vol. 22, no. 2, pp. 518–530, 2014. Preprint 12 [6] M. G. Christensen and A. Jakobsson, “Multi-pitch estimation, ” Synthesis Lectur es on Speech & Audio Pr ocessing , vol. 5, no. 1, pp. 1–160, 2009. [7] K. K. Paliw al, J. G. Lyons, and K. K. Wójcicki, “Preference for 20-40 ms window duration in speech analysis, ” in 4th International Conference on Signal Processing and Communication Systems . IEEE, 2010, pp. 1–4. [8] A. De Cheveigné and H. Kawahara, “YIN, a fundamental frequency estimator for speech and music, ” J . Acoust. Soc. Am. , vol. 111, no. 4, pp. 1917–1930, 2002. [9] D. T alkin, “ A robust algorithm for pitch tracking (RAPT), ” Speech coding and synthesis , vol. 495, p. 518, 1995. [10] A. Camacho and J. G. Harris, “ A sawtooth waveform inspired pitch estimator for speech and music, ” J . Acoust. Soc. Am. , vol. 124, no. 3, pp. 1638–1652, 2008. [11] J. K. Nielsen, T . L. Jensen, J. R. Jensen, M. G. Christensen, and S. H. Jensen, “Fast fundamental frequency estimation: Making a statistically efficient estimator computationally ef ficient, ” Signal Process. , vol. 135, pp. 188–197, 2017. [12] P . Ghahremani, B. BabaAli, D. Povey , K. Riedhammer , J. Trmal, and S. Khudanpur, “ A pitch extraction algorithm tuned for automatic speech recognition, ” in Proc. IEEE Int. Conf. Acoust., Speech, Signal Pr ocess. IEEE, 2014, pp. 2494–2498. [13] G. Aneeja and B. Y e gnanarayana, “Extraction of fundamental frequency from degraded speech using temporal en velopes at high snr frequencies, ” IEEE/ACM T rans. Audio, Speech, and Lang. Process. , vol. 25, no. 4, pp. 829–838, 2017. [14] B. Quinn and P . Thomson, “Estimating the frequency of a periodic function, ” Biometrika , vol. 78, no. 1, pp. 65–74, 1991. [15] J. Sward, H. Li, and A. Jakobsson, “Off-grid fundamental frequency estimation, ” IEEE/ACM T rans. Audio, Speech and Lang. Pr oc. , vol. 26, no. 2, pp. 296–303, Feb . 2018. [16] M. G. Christensen, A. Jakobsson, and S. H. Jensen, “Joint high- resolution fundamental frequency and order estimation, ” IEEE T rans. Audio, Speech, and Lang. Pr ocess. , vol. 15, no. 5, pp. 1635–1644, 2007. [17] M. G. Christensen, “ Accurate estimation of low fundamental frequencies from real-valued measurements, ” IEEE Tr ans. Audio, Speech, and Lang. Pr ocess. , vol. 21, no. 10, pp. 2042–2056, Oct 2013. [18] L. Shi, J. K. Nielsen, J. R. Jensen, M. A. Little, and M. G. Christensen, “ A kalman-based fundamental frequency estimation algorithm, ” in Pr oc. IEEE W orkshop Appl. of Signal Process. to Aud. and Acoust. IEEE Press, 2017, pp. 314–318. [19] G. Zhang and S. Godsill, “Fundamental frequency estimation in speech signals with variable rate particle filters, ” IEEE/ACM T rans. Audio, Speech and Lang. Pr oc. , vol. 24, no. 5, pp. 890–900, 2016. [20] C. Andrieu, M. Davy , and A. Doucet, “Efficient particle filtering for jump markov systems. application to time-varying autoregressions, ” IEEE T rans. Signal Pr ocess. , vol. 51, no. 7, pp. 1762–1770, 2003. [21] J. T abrikian, S. Dubnov , and Y . Dickalov , “Maximum a-posteriori probability pitch tracking in noisy environments using harmonic model, ” IEEE T rans. Speech Audio Process. , vol. 12, no. 1, pp. 76–87, 2004. [22] E. Fisher, J. T abrikian, and S. Dubnov , “Generalized likelihood ratio test for voiced-un voiced decision in noisy speech using the harmonic model, ” IEEE T rans. Audio, Speech, and Lang. Pr ocess. , vol. 14, no. 2, pp. 502–510, 2006. [23] S. M. Nørholm, J. R. Jensen, and M. G. Christensen, “Instantaneous fundamental frequency estimation with optimal segmentation for non- stationary voiced speech, ” IEEE/ACM T r ans. Audio, Speech, and Lang. Pr ocess. , vol. 24, no. 12, pp. 2354–2367, 2016. [24] C. M. Bishop, P attern reco gnition and machine learning . springer , 2006. [25] K. Han and D. W ang, “Neural network based pitch tracking in very noisy speech, ” IEEE/ACM T rans. Audio, Speech, and Lang. Process. , vol. 22, no. 12, pp. 2158–2168, 2014. [26] X. Zhang, H. Zhang, S. Nie, G. Gao, and W . Liu, “ A pairwise algorithm using the deep stacking network for speech separation and pitch estimation, ” IEEE/ACM T rans. Audio, Speech, and Lang. Pr ocess. , vol. 24, no. 6, pp. 1066–1078, 2016. [27] J. W . Kim, J. Salamon, P . Li, and J. P . Bello, “Crepe: A con volutional representation for pitch estimation, ” in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. , April 2018, pp. 161–165. [28] M. S. Arulampalam, S. Maskell, N. Gordon, and T . Clapp, “ A tutorial on particle filters for online nonlinear/non-gaussian bayesian tracking, ” IEEE T rans. Signal Pr ocess. , vol. 50, no. 2, pp. 174–188, 2002. [29] J. Makhoul, “Linear prediction: A tutorial revie w , ” Pr oc. IEEE , vol. 63, no. 4, pp. 561–580, 1975. [30] M. G. Christensen, P . Stoica, A. Jakobsson, and S. H. Jensen, “Multi- pitch estimation, ” Signal Pr ocess. , vol. 88, no. 4, pp. 972–983, 2008. [31] C. Févotte, N. Bertin, and J.-L. Durrieu, “Nonnegati ve matrix factor- ization with the itakura-saito div ergence: W ith application to music analysis, ” Neural computation , vol. 21, no. 3, pp. 793–830, 2009. [32] O. Cappé, S. J. Godsill, and E. Moulines, “ An overvie w of existing methods and recent advances in sequential monte carlo, ” Proc. IEEE , vol. 95, no. 5, pp. 899–924, 2007. [33] W . F ong, S. J. Godsill, A. Doucet, and M. W est, “Monte carlo smoothing with application to audio signal enhancement, ” IEEE T rans. Signal Pr ocess. , vol. 50, no. 2, pp. 438–449, 2002. [34] A. Doucet, N. De Freitas, K. Murphy , and S. Russell, “Rao- blackwellised particle filtering for dynamic bayesian networks, ” in Pr oceedings of the Sixteenth confer ence on Uncertainty in artificial intelligence . Morgan Kaufmann Publishers Inc., 2000, pp. 176–183. [35] S. I. Adalbjörnsson, A. Jakobsson, and M. G. Christensen, “Multi-pitch estimation exploiting block sparsity , ” Signal Process. , vol. 109, pp. 236– 247, 2015. [36] J. K. Nielsen, M. G. Christensen, A. T . Cemgil, and S. H. Jensen, “Bayesian model comparison with the g-prior , ” IEEE T rans. Signal Pr ocess. , vol. 62, no. 1, pp. 225–238, 2014. [37] A. Zellner, “On assessing prior distributions and bayesian regression analysis with g-prior distributions, ” Bayesian inference and decision techniques , 1986. [38] F . Liang, R. Paulo, G. Molina, M. A. Clyde, and J. O. Berger , “Mixtures of g priors for bayesian variable selection, ” J. Amer . Stat. Assoc. , vol. 103, no. 481, pp. 410–423, 2008. [39] J. Sohn, N. S. Kim, and W . Sung, “ A statistical model-based voice activity detection, ” IEEE Signal Process. Lett. , vol. 6, no. 1, pp. 1–3, 1999. [40] M. G. Christensen, A. Jakobsson, and S. H. Jensen, “Joint high- resolution fundamental frequency and order estimation, ” IEEE T rans. Audio, Speech, and Lang. Pr ocess. , vol. 15, no. 5, pp. 1635–1644, 2007. [41] P . Stoica and Y . Selen, “Model-order selection: a review of information criterion rules, ” IEEE Signal Pr ocess. Mag. , vol. 21, no. 4, pp. 36–47, 2004. [42] K. Y oshii and M. Goto, “ A nonparametric bayesian multipitch analyzer based on infinite latent harmonic allocation, ” IEEE T rans. A udio, Speech, and Lang. Process. , vol. 20, no. 3, pp. 717–730, 2012. [43] I. S. Gradshteyn and I. M. Ryzhik, T able of integr als, series, and pr oducts . Academic press, 2014. [44] A. E. Jaramillo, J. K. Nielsen, and M. G. Christensen, “ A study on how pre-whitening influences fundamental frequency estimation, ” in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. , May 2019, pp. 6495– 6499. [45] T . Gerkmann and R. C. Hendriks, “Unbiased mmse-based noise power estimation with low complexity and low tracking delay , ” IEEE T rans. Audio, Speech, and Lang. Pr ocess. , vol. 20, no. 4, pp. 1383–1393, 2012. [46] F . Plante, G. F . Meyer , and W . A. Ainsworth, “ A pitch extraction reference database, ” in F ourth European Conference on Speech Com- munication and T echnology , 1995. [47] A. Tsanas, M. Zañartu, M. A. Little, C. Fox, L. O. Ramig, and G. D. Clifford, “Rob ust fundamental frequency estimation in sustained vowels: detailed algorithmic comparisons and information fusion with adaptive kalman filtering, ” J. Acoust. Soc. Am. , vol. 135, no. 5, pp. 2885–2901, 2014. [48] P . A. Naylor and N. D. Gaubitch, Speech dere verberation . Springer Science & Business Media, 2010. [49] J. B. Allen and D. A. Berkley , “Image method for efficiently simulating small-room acoustics, ” J. Acoust. Soc. Am. , vol. 65, no. 4, pp. 943–950, 1979. [50] E. A. Habets, “Room impulse response generator, ” T echnische Univer - siteit Eindhoven, T ech. Rep , v ol. 2, no. 2.4, p. 1, 2006.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment