An Optical Frontend for a Convolutional Neural Network

The parallelism of optics and the miniaturization of optical components using nanophotonic structures, such as metasurfaces, present a compelling alternative to electronic implementations of convolutional neural networks. The lack of a low-power optical nonlinearity, however, requires slow and energy-inefficient conversions between the electronic and optical domains. Here, we design an architecture that uses a single electrical-to-optical conversion: a free-space optical frontend unit implements the linear operations of the first layer, and the subsequent layers are realized electronically. Speed and power analysis indicates that the hybrid photonic-electronic architecture outperforms a purely electronic architecture for large image sizes and kernels. Benchmarked on a modified version of AlexNet, the photonic-electronic architecture achieves a classification accuracy of 87% on images from the Kaggle Cats and Dogs challenge database.

💡 Research Summary

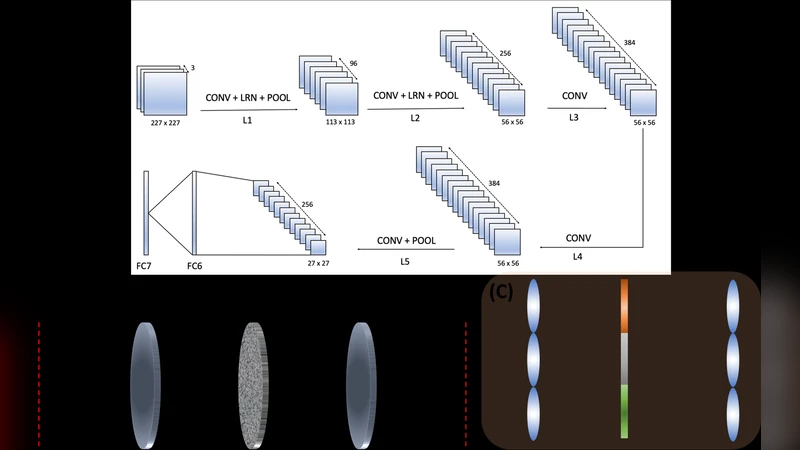

The paper proposes a hybrid optical-electronic architecture for convolutional neural networks (CNNs) that implements only the first convolutional layer in free-space optics while keeping all subsequent layers in conventional electronic hardware. The optical frontend is built around a 4f correlator: two lenses are separated by twice their focal length, so the first lens produces the Fourier transform of the input in the shared focal plane and the second lens transforms the field back to image space. A complex-valued mask placed in that Fourier plane multiplies the spectrum and thereby encodes the convolution kernel. To realize arbitrary complex masks, the authors employ metasurfaces with sub-wavelength antenna arrays that can modulate both phase and amplitude. By using a checkerboard arrangement of sub-pixels with two phase states, any point inside the unit circle of the complex plane can be approximated, allowing the implementation of the full set of AlexNet first-layer kernels.
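The principle behind the 4f correlator is the convolution theorem: multiplying the spectrum by a mask equals convolving in image space. The following is a minimal numerical sketch of that idea, not the authors' simulation code. It assumes an idealized system (no apertures, no physical scaling, a discrete FFT standing in for each lens, and an inverse FFT in place of the second lens, which in a real system yields a coordinate-inverted image). The function names, the 32 × 32 test image, and the random kernel are hypothetical; the 11 × 11 kernel size matches the standard AlexNet first layer.

```python
import numpy as np

def fourier_mask_from_kernel(kernel, shape):
    """Zero-pad a spatial kernel to `shape` and return its 2-D spectrum.

    In the optical system this spectrum is what the Fourier-plane mask
    would have to encode (up to scaling, which is ignored here).
    """
    padded = np.zeros(shape, dtype=complex)
    kh, kw = kernel.shape
    padded[:kh, :kw] = kernel
    return np.fft.fft2(padded)

def correlator_4f(field, mask):
    """Idealized 4f system: lens 1 -> FFT, mask multiply, lens 2 -> inverse FFT."""
    spectrum = np.fft.fft2(field)
    return np.fft.ifft2(spectrum * mask)

def circular_convolve_direct(image, kernel):
    """Reference: direct circular convolution, summed shift by shift."""
    out = np.zeros(image.shape, dtype=float)
    for a in range(kernel.shape[0]):
        for b in range(kernel.shape[1]):
            # np.roll shifts the image by (a, b) with wrap-around.
            out += kernel[a, b] * np.roll(image, (a, b), axis=(0, 1))
    return out

rng = np.random.default_rng(0)
image = rng.random((32, 32))             # hypothetical input image
kernel = rng.standard_normal((11, 11))   # hypothetical 11 x 11 kernel

mask = fourier_mask_from_kernel(kernel, image.shape)
optical_out = correlator_4f(image, mask).real
reference = circular_convolve_direct(image, kernel)
print(np.allclose(optical_out, reference))
```

The FFT-based result matches the direct sum to numerical precision. Note that the discrete transform gives a circular convolution; padding the input would be needed to reproduce a linear one.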

The design includes an array of such 4f units (lenslet arrays) to process all kernels in parallel. Three coherent laser sources (red 632 nm, green 532 nm, blue 442 nm) provide illumination for the three color channels. Each 4f unit handles one kernel per channel, resulting in a total of 96 × 3 parallel convolutions for the first AlexNet layer. The lenses are compact (0.57 mm diameter, 3 mm focal length) and fabricated as flat metasurface lenses, which keeps the system size small and the numerical aperture low enough to preserve paraxial Fourier behavior.
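As a quick check on the figures quoted above, the lens geometry and kernel count can be worked through in a few lines. This is a back-of-the-envelope sketch that assumes the lens sits in air (refractive index 1) and that the full 0.57 mm diameter is the clear aperture; the variable names are illustrative.

```python
import math

lens_diameter_mm = 0.57   # from the text
focal_length_mm = 3.0     # from the text
num_kernels = 96          # AlexNet first-layer kernels, from the text
num_channels = 3          # red, green, blue

# Half-angle of the cone of light accepted by the lens (air assumed, n = 1).
half_angle = math.atan((lens_diameter_mm / 2) / focal_length_mm)
numerical_aperture = math.sin(half_angle)
f_number = focal_length_mm / lens_diameter_mm

parallel_convolutions = num_kernels * num_channels

print(f"NA ~ {numerical_aperture:.3f}, f/# ~ {f_number:.2f}")
print(f"parallel convolutions: {parallel_convolutions}")
```

Under these assumptions the numerical aperture comes out a little below 0.1, which is consistent with the paraxial regime the text refers to.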

Simulation of the optical portion uses a custom wave‑optics code that alternates between real‑space and Fourier‑space propagation via the angular spectrum method, modeling lenses and masks as complex amplitude masks. Because GPU memory limits prevented simulating the entire lenslet array at once, each 4f correlator was simulated independently. Crosstalk analysis showed that less than 1 % of light leaks from a central correlator into its eight neighbors, justifying the independent‑unit approximation and indicating that dense packing without spacing is feasible.
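The angular spectrum method mentioned above can be sketched as follows. This is a generic textbook implementation, not the authors' code: the grid size, the 1 µm pixel pitch, and the Gaussian test field are assumptions chosen for illustration, while the 532 nm wavelength and 3 mm focal length are taken from the text. The lens is modeled as a thin paraxial phase mask.

```python
import numpy as np

def angular_spectrum_propagate(field, wavelength, pixel_pitch, distance):
    """Propagate a sampled complex field over `distance` in free space."""
    ny, nx = field.shape
    fx = np.fft.fftfreq(nx, d=pixel_pitch)
    fy = np.fft.fftfreq(ny, d=pixel_pitch)
    FX, FY = np.meshgrid(fx, fy)
    # Squared longitudinal spatial frequency; negative values are evanescent.
    arg = 1.0 / wavelength**2 - FX**2 - FY**2
    kz = 2 * np.pi * np.sqrt(np.maximum(arg, 0.0))
    # Unit-modulus transfer function for propagating waves, zero otherwise.
    transfer = np.where(arg > 0, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

def thin_lens_phase(shape, wavelength, pixel_pitch, focal_length):
    """Complex amplitude mask of an ideal thin lens (paraxial quadratic phase)."""
    ny, nx = shape
    y = (np.arange(ny) - ny / 2) * pixel_pitch
    x = (np.arange(nx) - nx / 2) * pixel_pitch
    X, Y = np.meshgrid(x, y)
    return np.exp(-1j * np.pi * (X**2 + Y**2) / (wavelength * focal_length))

wavelength = 532e-9      # green source, from the text
focal_length = 3e-3      # from the text
pixel_pitch = 1e-6       # assumed sampling pitch
n = 64                   # assumed grid size

# Hypothetical Gaussian input field centred on the grid.
coords = (np.arange(n) - n / 2) * pixel_pitch
X, Y = np.meshgrid(coords, coords)
field_in = np.exp(-(X**2 + Y**2) / (10e-6) ** 2).astype(complex)

# Alternate real-space masks with Fourier-space propagation steps.
field = angular_spectrum_propagate(field_in, wavelength, pixel_pitch, focal_length)
field = field * thin_lens_phase(field.shape, wavelength, pixel_pitch, focal_length)
field_out = angular_spectrum_propagate(field, wavelength, pixel_pitch, focal_length)

energy_in = np.sum(np.abs(field_in) ** 2)
energy_out = np.sum(np.abs(field_out) ** 2)
```

Because the lens is a pure phase mask and every sampled frequency is propagating at this pitch, the total energy is conserved, which is a useful sanity check before adding amplitude masks or apertures. A grid this small is far too coarse to resolve a full 0.57 mm lenslet; it only illustrates the alternation between real-space and Fourier-space steps.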

Performance analysis focuses on latency and energy. The optical convolution itself is essentially instantaneous (≈10 ps propagation time). The dominant delays arise from the electro‑optic conversion steps: a spatial light modulator refresh (1 ms), photodetector integration (≈1 ms), and USB 3.0 data transfer of a 100 kB image (≈0.32 ms). The total latency per optical layer is therefore about 2.3 ms. By contrast, a GPU‑based implementation of AlexNet shows a linear increase in processing time with image size; the hybrid system becomes faster for images larger than roughly 500 × 500 pixels (≈250 k pixels) and continues to outperform as image size grows to 1 MPixel. Energy consumption is also reduced because the optical computation is passive; the main power draw comes from the laser sources, detectors, and conversion electronics, yielding an estimated 30 % lower energy use compared with a fully electronic CNN.
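The latency budget quoted above is a simple sum, reproduced here for reference. The only assumption beyond the stated figures is that 100 kB means 100,000 bytes, which is used to back out the transfer rate implied by the 0.32 ms figure.

```python
# Latency contributions per optical layer, in milliseconds (from the text).
latency_ms = {
    "slm_refresh": 1.0,
    "photodetector_integration": 1.0,
    "usb3_transfer_100kB": 0.32,
    "optical_propagation": 10e-12 * 1e3,  # 10 ps expressed in ms
}

total_latency_ms = sum(latency_ms.values())

# Transfer rate implied by moving 100 kB in 0.32 ms (assumes 1 kB = 1000 bytes).
image_bits = 100e3 * 8
implied_rate_gbit_s = image_bits / (latency_ms["usb3_transfer_100kB"] * 1e-3) / 1e9

print(f"total latency ~ {total_latency_ms:.2f} ms")
print(f"implied transfer rate ~ {implied_rate_gbit_s:.1f} Gbit/s")
```

The optical propagation term is about eight orders of magnitude smaller than the conversion steps, so the total is set almost entirely by the modulator, detector, and data link.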

For functional validation, the authors replace the first convolutional layer of AlexNet with the optical frontend and keep the remaining layers unchanged. Using the Kaggle Cats vs Dogs dataset, the hybrid network achieves a classification accuracy of 87.1 %, only marginally below the 88 % obtained by the original fully electronic AlexNet, indicating that the optical frontend does not significantly degrade model performance.

The paper acknowledges several limitations. Only the first layer is optical; deeper networks would still require multiple electro‑optic conversions unless low‑power optical nonlinearities become available. The metasurface masks are static, so re‑training the network would necessitate fabricating new masks. Moreover, the system’s numerical aperture and space‑bandwidth product constrain the maximum resolvable image size and kernel resolution, requiring careful optical design for very high‑resolution tasks.

In summary, this work shows that a metasurface-based 4f optical frontend can efficiently execute the large-scale convolutions of the first layer, one of the most computationally intensive parts of a CNN, while maintaining high classification accuracy. By reducing latency and energy consumption for large images, the proposed hybrid architecture offers a promising pathway toward practical, high-performance optical-electronic AI accelerators.
