MiSC: Mixed Strategies Crowdsourcing

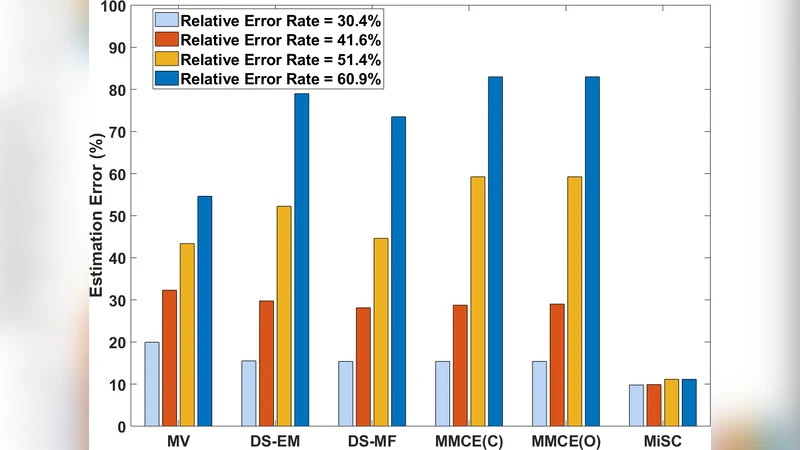

Popular crowdsourcing techniques mostly focus on evaluating workers’ labeling quality before adjusting their weights during label aggregation. Recently, another cohort of models regard crowdsourced annotations as incomplete tensors and recover unfilled labels by tensor completion. However, mixed strategies of the two methodologies have never been comprehensively investigated, leaving them as rather independent approaches. In this work, we propose $\textit{MiSC}$ ($\textbf{Mi}$xed $\textbf{S}$trategies $\textbf{C}$rowdsourcing), a versatile framework integrating arbitrary conventional crowdsourcing and tensor completion techniques. In particular, we propose a novel iterative Tucker label aggregation algorithm that outperforms state-of-the-art methods in extensive experiments.

💡 Research Summary

The paper introduces MiSC (Mixed Strategies Crowdsourcing), a novel framework that unifies two traditionally separate strands of crowdsourcing research: (i) conventional label‑aggregation methods that assess worker quality and re‑weight contributions (e.g., Dawid‑Skene EM, majority voting, minimax entropy), and (ii) tensor‑completion approaches that treat the collection of noisy, incomplete annotations as an incomplete high‑order tensor and recover missing entries via low‑rank assumptions.

Core Idea

Annotations are first encoded into a three‑way binary tensor A of size N_w × N_i × N_c (workers × items × classes). For each observed label, a single “1” is placed in the corresponding mode‑3 fiber (fixed worker and item), so each such fiber holds at most one non‑zero entry; fibers for unlabeled worker‑item pairs stay all‑zero. In the noise‑free case, where every worker gives the correct label for every item, all worker slices are identical and the tensor admits an extremely low Tucker rank, typically (1, min(N_i, N_c), min(N_i, N_c)). The authors exploit this property by repeatedly applying a low‑rank Tucker decomposition to denoise the tensor and fill in missing entries.
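The encoding described above can be illustrated with a minimal pure‑Python sketch. The function name `encode_annotations`, the `(worker, item, class)` triple format, and the toy data are assumptions made for illustration; they are not taken from the paper.

```python
def encode_annotations(annotations, n_workers, n_items, n_classes):
    """Build a binary tensor A[w][i][c] from (worker, item, class) triples.

    Hypothetical helper: unlabeled worker-item pairs keep an all-zero fiber.
    """
    tensor = [[[0] * n_classes for _ in range(n_items)]
              for _ in range(n_workers)]
    for worker, item, label in annotations:
        if any(tensor[worker][item]):
            # Enforce "at most one non-zero per mode-3 fiber".
            raise ValueError(f"worker {worker} labeled item {item} twice")
        tensor[worker][item][label] = 1
    return tensor


# Toy data (assumed): 3 workers, 3 items, 3 classes, 5 observed labels.
annotations = [(0, 0, 1), (0, 1, 0), (1, 0, 1), (2, 1, 0), (2, 2, 2)]
A = encode_annotations(annotations, n_workers=3, n_items=3, n_classes=3)

# Each mode-3 fiber A[w][i] is either one-hot (observed) or all-zero (missing).
observed = sum(sum(fiber) for worker_slice in A for fiber in worker_slice)
```

Here `A[0][0]` is the one‑hot fiber for worker 0's label on item 0, while `A[1][1]` stays all‑zero because worker 1 never labeled item 1.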

Iterative Completion‑Deduction Loop

- Deduction step – Apply any conventional aggregation algorithm (the authors use DS‑EM) to the current tensor to obtain a provisional 1 × N_i vector of estimated true labels.

- Encoding step – Convert this vector into a new binary slice S (1 × N_i × N_c) and concatenate it with the original tensor, forming an augmented tensor T of size (N_w+1) × N_i × N_c.

- Completion step – Perform Tucker‑based tensor completion on T:

  - Initialize factor matrices and core tensor via a truncated higher‑order SVD (Algorithm 1).

  - Refine them with Higher‑Order Orthogonal Iteration (HOOI, Algorithm 2) while enforcing predetermined Tucker ranks (R₁,R₂,R₃).

  - The objective is to minimize the Frobenius norm ‖
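One round of the loop can be sketched in NumPy under several simplifying assumptions, all of which depart from the paper's actual algorithms. Majority voting stands in for DS‑EM in the deduction step. The completion step is reduced to a plain truncated HOSVD, which corresponds only to the initialization stage: there is no HOOI refinement and no special handling of unobserved entries. All function names and the toy data are hypothetical.

```python
import numpy as np


def majority_vote(A):
    """Deduction stand-in (not DS-EM): most-voted class per item.

    Ties and items with no votes resolve to the lowest class index.
    """
    return A.sum(axis=0).argmax(axis=1)  # shape (N_i,)


def augment(A, labels):
    """Encoding step: one-hot slice of estimated labels, stacked on A."""
    n_items, n_classes = A.shape[1], A.shape[2]
    S = np.zeros((1, n_items, n_classes))
    S[0, np.arange(n_items), labels] = 1.0
    return np.concatenate([A, S], axis=0)  # shape (N_w + 1, N_i, N_c)


def unfold(T, mode):
    """Matricize T so that `mode` indexes the rows."""
    return np.moveaxis(T, mode, 0).reshape(T.shape[mode], -1)


def mode_product(T, M, mode):
    """Multiply tensor T by matrix M along axis `mode`."""
    return np.moveaxis(np.tensordot(M, T, axes=(1, mode)), 0, mode)


def truncated_hosvd(T, ranks):
    """Leading left singular vectors per mode, then the projected core."""
    factors = []
    for mode, r in enumerate(ranks):
        U, _, _ = np.linalg.svd(unfold(T, mode), full_matrices=False)
        factors.append(U[:, :r])
    core = T
    for mode, U in enumerate(factors):
        core = mode_product(core, U.T, mode)
    return core, factors


def reconstruct(core, factors):
    """Map the core back to a full-size low-rank tensor."""
    T = core
    for mode, U in enumerate(factors):
        T = mode_product(T, U, mode)
    return T


# Toy data (assumed): 3 workers, 4 items, 2 classes.
# Worker 1 skips item 3; worker 2 mislabels item 0.
A = np.zeros((3, 4, 2))
observed = [(0, 0, 0), (0, 1, 1), (0, 2, 1), (0, 3, 0),
            (1, 0, 0), (1, 1, 1), (1, 2, 1),
            (2, 0, 1), (2, 1, 1), (2, 2, 1), (2, 3, 0)]
for w, i, c in observed:
    A[w, i, c] = 1.0

labels = majority_vote(A)                      # deduction
T = augment(A, labels)                         # encoding
core, factors = truncated_hosvd(T, (1, 2, 2))  # simplified completion
approx = reconstruct(core, factors)            # low-rank estimate of T
```

In a full iteration, the low‑rank estimate `approx` would presumably feed the next deduction step. How the original algorithm treats unobserved entries during completion is not shown in this sketch.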
