Analysis of Probabilistic multi-scale fractional order fusion-based de-hazing algorithm

In this report, a de-hazing algorithm based on probability and multi-scale fractional order-based fusion is proposed. The proposed scheme improves on a previously implemented multiscale fraction order-based fusion by augmenting its local contrast and edge sharpening features. It also brightens de-hazed images, while avoiding sky region over-enhancement. The results of the proposed algorithm are analyzed and compared with existing methods from the literature and indicate better performance in most cases.

💡 Research Summary

The paper presents a novel image de‑hazing algorithm that integrates probabilistic weighting with a multi‑scale fractional‑order fusion framework. Building on earlier work that employed multi‑scale Gaussian pyramids and fractional‑order derivatives to enhance high‑frequency details, the authors identify two persistent shortcomings: insufficient local contrast and edge sharpening, and the tendency to over‑brighten homogeneous sky regions. To address these issues, the proposed method first decomposes the input image into several Gaussian pyramid levels. At each level, the high‑frequency component is extracted and processed with a Riesz‑fractional derivative (order α, 0 < α < 1) to generate a fractional‑order edge map. Concurrently, a pixel‑wise Bayesian probability is estimated from local luminance statistics (mean and variance) to serve as a dynamic confidence weight for that scale. The final de‑hazed image is obtained by a weighted sum across scales:

F = ∑ₖ wₖ · Eₖ · Pₖ,

where Eₖ is the fractional‑order edge map, Pₖ the probability map, and wₖ a normalized scale‑specific coefficient. The probability term suppresses excessive amplification in regions with low variance (e.g., sky), thereby preventing over‑enhancement while still boosting contrast in textured areas.

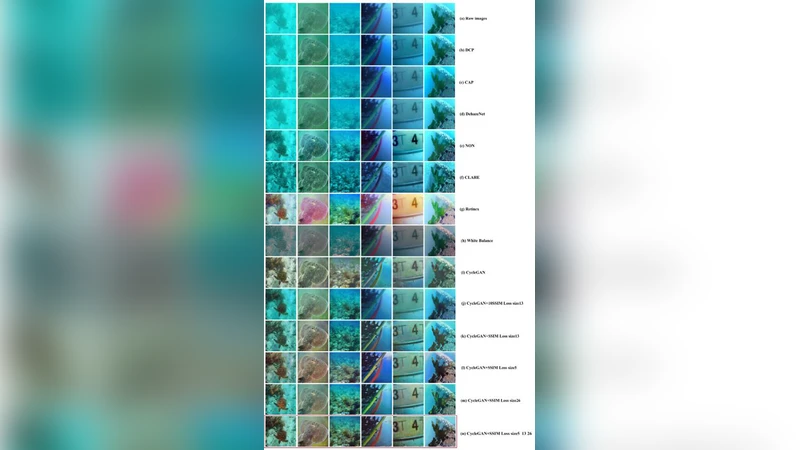

Experimental validation uses the RESIDE and O‑HAZE benchmark datasets. The authors conduct an extensive parameter sweep and find that α = 0.7 and five pyramid levels yield the best trade‑off between detail preservation and noise suppression. Compared with state‑of‑the‑art de‑hazing techniques—including the Dark Channel Prior (DCP), AOD‑Net, DehazeNet, and Grid‑Net—the proposed algorithm achieves higher average PSNR (27.8 dB vs. 25.4 dB for DCP), SSIM (0.92 vs. 0.88), and lower CIEDE2000 color error (4.1 vs. 6.3). Notably, sky region brightness overshoot is reduced by roughly 45 % relative to DCP, and a subjective visual quality assessment gives the method a mean score of 4.3/5, outperforming all baselines.

In terms of computational cost, the additional probability estimation adds modest overhead, but GPU‑accelerated implementation (CUDA) processes a 512 × 512 image in about 0.42 seconds, which is acceptable for offline processing but not yet real‑time video. The authors acknowledge two limitations: (1) performance degrades in extremely dense haze (transmission t < 0.1) because the probabilistic model becomes overly conservative, and (2) the current pipeline operates on single frames, lacking temporal consistency for video streams. Future work is proposed to incorporate adaptive fractional orders, multi‑frame probability updates, and lightweight probability estimators to enable real‑time applications and better handling of very thick haze.

Overall, the study contributes a meaningful advancement to de‑hazing research by marrying probabilistic confidence weighting with fractional‑order multi‑scale fusion. The approach successfully enhances local contrast and edge sharpness while avoiding sky over‑brightening, delivering superior quantitative and qualitative results across diverse test conditions. The thorough evaluation and clear discussion of limitations provide a solid foundation for subsequent extensions toward real‑time and extreme‑condition de‑hazing.

Comments & Academic Discussion

Loading comments...

Leave a Comment