p-Multigrid matrix-free discontinuous Galerkin solution strategies for the under-resolved simulation of incompressible turbulent flows

In recent years, several research efforts have focused on the development of high-order discontinuous Galerkin (dG) methods for scale-resolving simulations of turbulent flows. Nevertheless, in the context of incompressible flow computations, the computational expense of solving large-scale equation systems characterized by indefinite Jacobian matrices has often prevented the application of these methods to industrially relevant computations. In this work we seek to improve the efficiency of Rosenbrock-type linearly implicit Runge-Kutta methods by devising robust, scalable and memory-lean solution strategies. In particular, we introduce memory-saving p-multigrid preconditioners coupling matrix-free and matrix-based Krylov iterative smoothers. The p-multigrid preconditioner relies on cheap block-diagonal preconditioners for the smoothers on the fine space to reduce assembly costs and memory allocation. It also ensures an adequate resolution of the coarsest space of the multigrid iteration by using Additive Schwarz preconditioned smoothers, in order to obtain satisfactory convergence rates and optimal parallel efficiency of the method. Extensive numerical validation is performed. The Rosenbrock formulation is applied to test cases of growing complexity: the laminar unsteady flow around a two-dimensional cylinder at Re = 200 and around a sphere at Re = 300, and the transitional flow problem of the ERCOFTAC T3L test case suite with different levels of free-stream turbulence. As a proof of concept, the numerical solution of the Boeing Rudimentary Landing Gear test case at Re = 10⁶ is reported. Good agreement of the solutions with experimental data is documented, as well as strong memory savings and execution time gains with respect to state-of-the-art solution strategies.
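As a minimal sketch of what "linearly implicit" means, the snippet below implements the classical two-stage ROS2 Rosenbrock scheme (γ = 1 + 1/√2). This is not necessarily the Rosenbrock scheme used in the paper, whose stage count and coefficients are not given here. Both stages solve a linear system with the same matrix I − γΔt J, so the Jacobian J is evaluated once per step and no Newton iterations are needed. The dense `np.linalg.solve` calls stand in for the preconditioned Krylov solves used at scale, and the function names and the stiff 2×2 test problem are illustrative assumptions.

```python
import numpy as np

GAMMA = 1.0 + 1.0 / np.sqrt(2.0)  # ROS2 parameter (L-stable choice)

def ros2_step(f, jac, u, dt):
    """One step of the two-stage ROS2 Rosenbrock scheme for u' = f(u).

    Both stages use the same matrix M = I - gamma*dt*J, so J is evaluated
    once per step and only linear (not nonlinear) solves are required.
    """
    M = np.eye(u.size) - GAMMA * dt * jac(u)
    k1 = np.linalg.solve(M, f(u))
    k2 = np.linalg.solve(M, f(u + dt * k1) - 2.0 * k1)
    return u + dt * (1.5 * k1 + 0.5 * k2)

def integrate(f, jac, u0, t_end, dt):
    u = np.array(u0, dtype=float)
    for _ in range(int(round(t_end / dt))):
        u = ros2_step(f, jac, u, dt)
    return u

# Hypothetical stiff linear test problem u' = A u with eigenvalues -1 and -100.
A = np.array([[-1.0, 0.0], [1.0, -100.0]])
f = lambda u: A @ u
jac = lambda u: A
u0 = np.array([1.0, 1.0])

# Exact solution at t = 1 from the eigendecomposition of A.
lam, V = np.linalg.eig(A)
exact = (V @ (np.exp(lam * 1.0) * np.linalg.solve(V, u0))).real

errors = [np.linalg.norm(integrate(f, jac, u0, 1.0, dt) - exact) for dt in (0.02, 0.01)]
print("observed order:", np.log2(errors[0] / errors[1]))
```

Halving Δt should reduce the error by roughly a factor of four, consistent with second-order accuracy, even though the step is far larger than the explicit stability limit of the fast mode.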

💡 Research Summary

This paper presents a memory‑efficient and scalable solution strategy for high‑order discontinuous Galerkin (dG) discretizations of incompressible turbulent flows. The authors combine a matrix‑free Flexible GMRES (FGMRES) linear solver with a p‑multigrid preconditioner tailored to Rosenbrock‑type linearly implicit Runge‑Kutta time integration. The Jacobian is evaluated only once per time step and reused by all Rosenbrock stages. The matrix‑free implementation also avoids storing the full sparse system, which dramatically reduces memory consumption, especially for high polynomial degrees (k ≥ 4).
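The matrix‑free idea can be sketched with a right‑preconditioned Flexible GMRES whose operator is available only as a function. In this sketch the Jacobian‑vector product is approximated by a first‑order finite difference of the right‑hand side. Whether the paper evaluates the product this way is an assumption, as are the helper names `fd_jvp` and `fgmres`, the toy right‑hand side, the stage system written with an identity mass matrix, and the 2×2 block‑Jacobi preconditioner standing in for EWBJ. FGMRES stores the preconditioned vectors Z explicitly, and this is what allows the preconditioner (for instance a multigrid cycle with inner Krylov smoothers) to change from one iteration to the next.

```python
import numpy as np

def fd_jvp(func, u, f_u, v, eps=1e-7):
    """Matrix-free J(u) v, approximated as (f(u + h v) - f(u)) / h."""
    norm_v = np.linalg.norm(v)
    if norm_v == 0.0:
        return np.zeros_like(v)
    h = eps * max(1.0, np.linalg.norm(u)) / norm_v
    return (func(u + h * v) - f_u) / h

def fgmres(matvec, b, precond, tol=1e-10, max_iter=50):
    """Right-preconditioned Flexible GMRES (no restarts, zero initial guess).
    Z holds the preconditioned vectors, so `precond` may vary between iterations."""
    n = b.size
    beta = np.linalg.norm(b)
    if beta == 0.0:
        return np.zeros(n)
    V = np.zeros((n, max_iter + 1))
    Z = np.zeros((n, max_iter))
    H = np.zeros((max_iter + 1, max_iter))
    V[:, 0] = b / beta
    for j in range(max_iter):
        Z[:, j] = precond(V[:, j])
        w = matvec(Z[:, j])
        for i in range(j + 1):                      # modified Gram-Schmidt
            H[i, j] = w @ V[:, i]
            w = w - H[i, j] * V[:, i]
        H[j + 1, j] = np.linalg.norm(w)
        rhs = np.zeros(j + 2)
        rhs[0] = beta
        y = np.linalg.lstsq(H[:j + 2, :j + 1], rhs, rcond=None)[0]
        residual = np.linalg.norm(rhs - H[:j + 2, :j + 1] @ y)
        if residual <= tol * beta or H[j + 1, j] <= 1e-14 * beta:
            return Z[:, :j + 1] @ y
        V[:, j + 1] = w / H[j + 1, j]
    return Z @ y

# Hypothetical toy problem: u' = f(u) = -(L u + u^3) with 8 unknowns,
# grouped into 4 "elements" of 2 unknowns each.
n, nb = 8, 2
L = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
f = lambda u: -(L @ u + u**3)
u = np.linspace(0.1, 1.0, n)
gamma_dt = 0.1
f_u = f(u)

# Stage operator M = I/(gamma*dt) - J, applied without forming J.
matvec = lambda v: v / gamma_dt - fd_jvp(f, u, f_u, v)

# Block-Jacobi preconditioner from the diagonal blocks of M (assembled here for brevity).
M = np.eye(n) / gamma_dt + L + np.diag(3.0 * u**2)
inv_blocks = [np.linalg.inv(M[i:i + nb, i:i + nb]) for i in range(0, n, nb)]
precond = lambda v: np.concatenate(
    [Binv @ v[j * nb:(j + 1) * nb] for j, Binv in enumerate(inv_blocks)])

k1 = fgmres(matvec, f_u, precond)
print("relative difference to dense solve:",
      np.linalg.norm(k1 - np.linalg.solve(M, f_u)) / np.linalg.norm(k1))
```

For brevity the small least‑squares problem is re‑solved with `np.linalg.lstsq` at every iteration. A production code would instead update a QR factorization with Givens rotations.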

On the finest level, the p‑multigrid smoother is preconditioned with a cheap element‑wise block‑Jacobi (EWBJ) preconditioner. Because it needs only the diagonal blocks of the Jacobian, it avoids the memory otherwise spent on the off‑diagonal blocks. On the coarser levels the method switches to matrix‑based GMRES smoothers, and Additive Schwarz (AS) preconditioning ensures an adequate resolution of the coarsest level, which provides robust global coupling and fast convergence. When coarse operators are inherited directly from the fine level, excessive stabilization typically arises. To prevent this, the authors adopt a “rescaled‑inherited” approach, scaling the viscous contributions on coarse levels to maintain a proper balance between convection and diffusion.
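A back‑of‑the‑envelope count shows why dropping the off‑diagonal blocks matters. The sketch makes several illustrative assumptions, none of them figures from the paper: a complete polynomial space P_k per element in 3D, four unknowns (u, v, w, p), double precision, and hexahedral elements with six face neighbours. Other storage, such as Krylov vectors and coarse‑level matrices, is ignored, and the function names are hypothetical.

```python
from math import comb

N_VARS = 4   # u, v, w, p for 3D incompressible flow (assumption)
BYTES = 8    # double precision

def block_bytes(k, dim=3, n_vars=N_VARS):
    """Bytes of one dense element block for a complete P_k basis."""
    n = n_vars * comb(k + dim, dim)   # unknowns per element
    return n * n * BYTES

def jacobian_bytes_per_element(k, n_faces=6):
    """Block-sparse dG Jacobian: diagonal block plus one block per face neighbour."""
    return (1 + n_faces) * block_bytes(k)

def ewbj_bytes_per_element(k):
    """Element-wise block Jacobi: only the diagonal block is stored."""
    return block_bytes(k)

for k in range(1, 7):
    full, ewbj = jacobian_bytes_per_element(k), ewbj_bytes_per_element(k)
    print(f"k={k}: full {full / 1024:9.1f} KiB, EWBJ {ewbj / 1024:8.1f} KiB, "
          f"saved {1 - ewbj / full:.0%}")
```

Under these assumptions the EWBJ storage is always 1/7 of the full Jacobian, independent of k. Each block, however, grows like the square of comb(k + 3, 3), that is roughly as k⁶, which is why the savings matter most at high polynomial degree.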

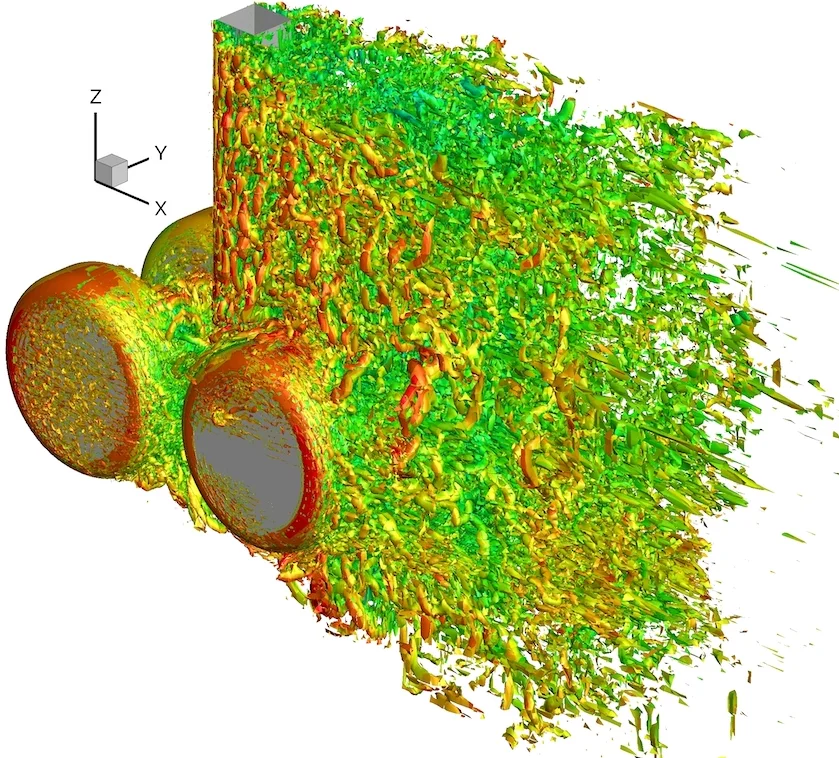

The framework is validated on a progression of increasingly complex test cases. First, the unsteady laminar flows around a 2‑D cylinder at Re = 200 and around a sphere at Re = 300 are resolved accurately in time and space, with 60–70 % memory savings compared with fully assembled matrix solvers. Next, the ERCOFTAC T3L1 benchmark (Re = 3450, varying free‑stream turbulence) is simulated using implicit LES (ILES). The matrix‑free p‑multigrid solver reproduces the transition and the turbulent statistics with a factor‑of‑two speed‑up and a memory footprint roughly 50 % lower. Finally, the Boeing Rudimentary Landing Gear configuration at high Reynolds number (Re = 10⁶) is solved on an unstructured, highly stretched mesh with polynomial degree k = 5. The results match experimental pressure and force coefficients, and the total wall‑clock time is reduced by about 2.5× relative to state‑of‑the‑art matrix‑based strategies.

Scalability tests on a distributed‑memory HPC platform show near‑linear speed‑up up to 1,024 MPI processes, confirming that the combination of matrix‑free operator evaluation and p‑multigrid preconditioning scales well with both problem size and core count. The authors also discuss implementation details such as BR2 viscous fluxes, numerical fluxes based on an artificial compressibility Riemann solver, and the construction of restriction/prolongation operators in a matrix‑free fashion.
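The structure of a p‑multigrid cycle with inherited coarse operators can be sketched as a two‑level iteration. Assume a hierarchical orthonormal modal basis, with modes ordered by degree within each element. Restriction to degree k_c is then a plain truncation of the higher modes and prolongation is its transpose, so neither needs to be stored as a matrix in practice (they are formed explicitly here only for brevity). The coarse operator is inherited as R A P with P = Rᵀ, without the rescaling of viscous terms described earlier. Several simplifications apply. Damped block‑Jacobi sweeps replace the paper's Krylov smoothers, a direct solve replaces the AS‑preconditioned coarse solver, and the operator is a synthetic SPD block matrix rather than a dG Jacobian (which for incompressible flow is not symmetric). All function names are hypothetical.

```python
import numpy as np

def build_operator(n_el, k, eps=0.01, seed=0):
    """Synthetic SPD stand-in for a dG Jacobian (hypothetical, for illustration).

    Unknown index = element * (k + 1) + mode, modes ordered by degree.
    Low modes (degree <= 1) are strongly coupled across elements; high modes
    are stiffer and only weakly coupled, loosely mimicking a modal dG operator.
    """
    rng = np.random.default_rng(seed)
    lap = 2.0 * np.eye(n_el) - np.eye(n_el, k=1) - np.eye(n_el, k=-1)
    q = rng.standard_normal((k + 1, k + 1))
    coupling = np.diag([1.0, 1.0] + [0.0] * (k - 1)) + eps * (q @ q.T) / (k + 1)
    stiffness = 1e-2 + np.arange(k + 1) ** 2.0
    return np.kron(lap, coupling) + np.kron(np.eye(n_el), np.diag(stiffness))

def modal_restriction(n_el, k, k_c):
    """Keep modes 0..k_c of every element (L2 projection for an orthonormal hierarchical basis)."""
    R = np.zeros((n_el * (k_c + 1), n_el * (k + 1)))
    for e in range(n_el):
        for p in range(k_c + 1):
            R[e * (k_c + 1) + p, e * (k + 1) + p] = 1.0
    return R

def ewbj_sweeps(A, inv_blocks, nb, b, x, sweeps, omega=0.5):
    """Damped element-wise block-Jacobi sweeps: x += omega * D^{-1} (b - A x)."""
    for _ in range(sweeps):
        r = b - A @ x
        x = x + omega * np.concatenate(
            [Binv @ r[j * nb:(j + 1) * nb] for j, Binv in enumerate(inv_blocks)])
    return x

def two_level_cycle(A, A_c, R, inv_blocks, nb, b, x, sweeps=2):
    x = ewbj_sweeps(A, inv_blocks, nb, b, x, sweeps)     # pre-smoothing on the fine level
    r_c = R @ (b - A @ x)                                # restrict the residual
    x = x + R.T @ np.linalg.solve(A_c, r_c)              # coarse solve, then prolongate
    return ewbj_sweeps(A, inv_blocks, nb, b, x, sweeps)  # post-smoothing

n_el, k, k_c = 16, 4, 1
A = build_operator(n_el, k)
R = modal_restriction(n_el, k, k_c)
A_c = R @ A @ R.T                    # inherited (Galerkin) coarse operator, P = R^T
nb = k + 1
inv_blocks = [np.linalg.inv(A[i:i + nb, i:i + nb]) for i in range(0, A.shape[0], nb)]

b = np.ones(A.shape[0])
x = np.zeros_like(b)
for cycle in range(50):
    x = two_level_cycle(A, A_c, R, inv_blocks, nb, b, x)
rel_res = np.linalg.norm(b - A @ x) / np.linalg.norm(b)
print(f"relative residual after 50 cycles: {rel_res:.2e}")
```

Because the synthetic operator is SPD and the coarse operator is a Galerkin product, the coarse correction is an A‑orthogonal projection. The damped block‑Jacobi sweeps (ω = 0.5, at most three nonzero blocks per block row) are convergent, so this two‑level iteration is guaranteed to converge. Convergence rates on real dG systems will differ.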

In summary, the paper delivers a practical, high‑performance framework that simultaneously reduces memory usage and accelerates convergence for large‑scale, under‑resolved turbulent flow simulations. By integrating Rosenbrock time integration, matrix‑free FGMRES, and a hybrid p‑multigrid preconditioner with rescaled‑inherited coarse operators, the authors achieve robust, scalable performance on industrially relevant problems, paving the way for more ambitious LES/ILES studies at very high Reynolds numbers.
