Adversarially Trained Autoencoders for Parallel-Data-Free Voice Conversion

We present a method for converting voices among a set of speakers. Our method trains multiple autoencoder paths that share a single speaker-independent encoder and use multiple speaker-dependent decoders. The autoencoders are trained with an additional adversarial loss, provided by an auxiliary classifier, that guides the encoder output to be speaker-independent. Training is unsupervised in the sense that it requires neither recordings of the same utterances from different speakers nor time alignment over phonemes. Because a single encoder is used, our method can generalize to converting the voices of speakers outside the training set to speakers in the training dataset. We present subjective tests corroborating the performance of our method.
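The encoder/decoder routing described above can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: each network is a single layer with random, untrained weights, the input is a matrix of spectral frames (for example mel-spectrogram frames), and the sizes, speaker names, and the functions `encode`, `decode`, and `convert` are all hypothetical.

```python
import numpy as np

# Hypothetical sizes: 80 spectral bins per frame, 16-dimensional latent code.
N_BINS, LATENT = 80, 16
SPEAKERS = ["spk_a", "spk_b", "spk_c"]  # hypothetical training speakers

rng = np.random.default_rng(0)

# A single shared encoder (here one tanh layer with random, untrained weights).
W_enc = rng.normal(scale=0.1, size=(N_BINS, LATENT))

# One decoder per training speaker (here one linear layer each, also untrained).
W_dec = {s: rng.normal(scale=0.1, size=(LATENT, N_BINS)) for s in SPEAKERS}


def encode(frames):
    """Map frames of shape (T, N_BINS) to latent codes of shape (T, LATENT)."""
    return np.tanh(frames @ W_enc)


def decode(z, speaker):
    """Render latent codes with the decoder of one training speaker."""
    return z @ W_dec[speaker]


def convert(frames, target):
    """Shared encoder followed by the target speaker's decoder.

    During training, convert(x_s, s) is the autoencoder path that reconstructs
    speaker s. The source speaker's identity is never used, so the same call
    also accepts frames from a speaker outside the training set.
    """
    return decode(encode(frames), target)


utterance = rng.normal(size=(120, N_BINS))  # stand-in for 120 frames of audio features
converted = convert(utterance, "spk_b")
print(converted.shape)  # (120, 80)
```

Conversion needs only the target speaker's decoder, which is why a single shared encoder lets an unseen source speaker be mapped to any training speaker.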

💡 Research Summary

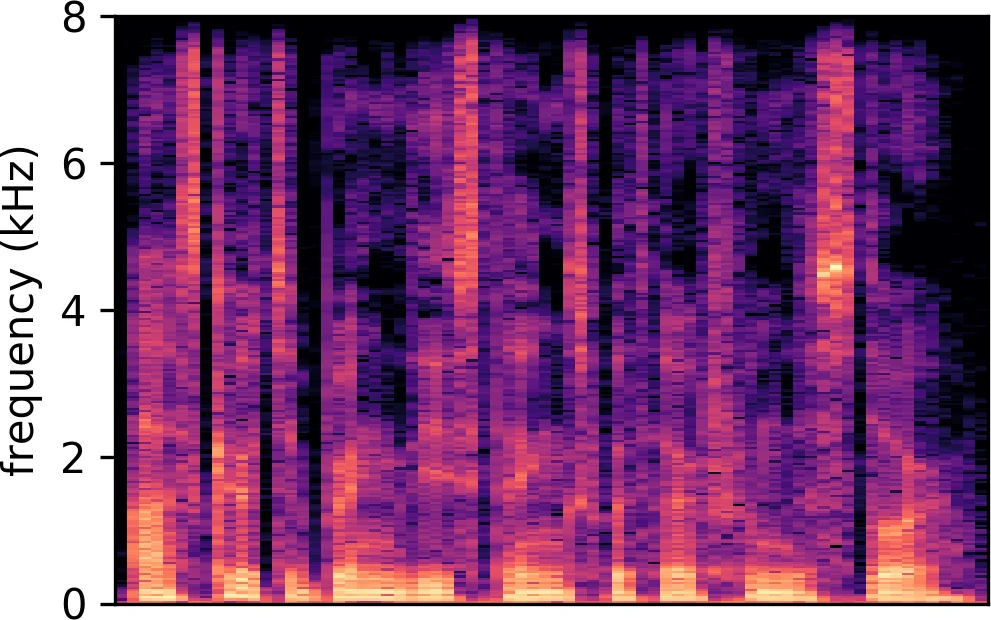

The paper introduces a novel voice conversion (VC) framework that operates without parallel training data, leveraging an adversarially trained autoencoder architecture. The core idea is to separate speaker‑independent content from speaker‑specific characteristics by using a single encoder shared across all speakers and a distinct decoder for each target speaker. During training, the encoder‑decoder pairs are optimized to minimize a reconstruction loss (L1 distance between the original and reconstructed mel‑spectrograms), while an auxiliary speaker classifier is trained to predict the speaker identity from the encoder’s latent representation. The encoder is simultaneously trained to maximize the classifier’s loss, thereby encouraging the latent code to be speaker‑agnostic. Formally, the objective is

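$$
\min_{E,\{D_s\}} \max_{C} \; \sum_{s} \mathbb{E}_{x \sim p_s}\Big[ \lVert x - D_s(E(x)) \rVert_1 \Big] \;-\; \lambda \sum_{s} \mathbb{E}_{x \sim p_s}\Big[ -\log C\big(s \mid E(x)\big) \Big]
$$

where $E$ is the shared encoder, $D_s$ the decoder of speaker $s$, $p_s$ the distribution of that speaker's mel-spectrograms, $C(s \mid z)$ the classifier's probability that latent code $z$ belongs to speaker $s$, and $\lambda > 0$ a weight balancing the two terms. The second sum is the classifier's cross-entropy loss: $C$ minimizes it, while the encoder, by minimizing the overall expression, maximizes it.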
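The reconstruction and adversarial losses described above can be computed for a single batch as follows. This NumPy sketch assumes a softmax speaker classifier applied to the latent codes and a hypothetical weighting hyperparameter `lam` for the adversarial term; the function names are illustrative, and the paper's actual architecture, weighting, and update schedule may differ. The alternating gradient updates of the classifier and the autoencoders are omitted.

```python
import numpy as np


def l1_reconstruction(x, x_hat):
    """L1 reconstruction term, averaged over frames and bins."""
    return np.mean(np.abs(x - x_hat))


def speaker_cross_entropy(logits, speaker_ids):
    """Mean cross-entropy of a softmax speaker classifier.

    logits: (T, K) classifier scores on the latent codes; speaker_ids: (T,) ints.
    """
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -np.mean(log_probs[np.arange(len(speaker_ids)), speaker_ids])


def objectives(x, x_hat, logits, speaker_ids, lam=0.1):
    """Return (encoder/decoder loss, classifier loss) for one batch.

    The classifier minimizes the cross-entropy; the encoder and decoders
    minimize l_rec - lam * l_cls, so the encoder is pushed to raise l_cls.
    """
    l_rec = l1_reconstruction(x, x_hat)
    l_cls = speaker_cross_entropy(logits, speaker_ids)
    return l_rec - lam * l_cls, l_cls


rng = np.random.default_rng(0)
x = rng.normal(size=(50, 80))        # stand-in batch of 50 spectral frames
x_hat = x + 0.01                      # stand-in reconstruction
ids = rng.integers(0, 3, size=50)     # true speaker labels, K = 3
enc_loss, cls_loss = objectives(x, x_hat, np.zeros((50, 3)), ids)
print(round(cls_loss, 4))  # 1.0986, i.e. log(3)
```

With all-zero logits the classifier is at chance and its cross-entropy equals $\log K$ ($\log 3 \approx 1.0986$ for three speakers); a perfectly speaker-independent latent code leaves the classifier no better than this.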
