Accurate Tissue Interface Segmentation via Adversarial Pre-Segmentation of Anterior Segment OCT Images

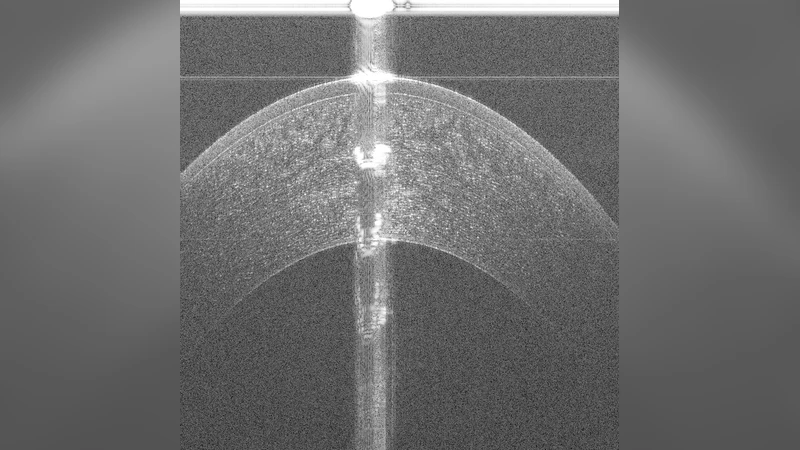

Optical Coherence Tomography (OCT) is an imaging modality that has been widely adopted for visualizing corneal, retinal and limbal tissue structure with micron resolution. It can be used to diagnose pathological conditions of the eye and to develop pre-operative surgical plans. In contrast to the posterior retina, imaging the anterior tissue structures, such as the limbus and cornea, results in B-scans that exhibit increased speckle noise patterns and imaging artifacts. These artifacts, such as shadowing and specularity, pose a challenge during the analysis of the acquired volumes because they substantially obscure the location of tissue interfaces. To deal with the artifacts and speckle noise patterns and accurately segment the shallowest tissue interface, we propose a cascaded neural network framework, which comprises a conditional Generative Adversarial Network (cGAN) and a Tissue Interface Segmentation Network (TISN). The cGAN pre-segments OCT B-scans by removing undesired specular artifacts and speckle noise patterns just above the shallowest tissue interface, and the TISN combines the original OCT image with the pre-segmentation to segment the shallowest interface. We show the applicability of the cascaded framework to corneal datasets, demonstrate that it precisely segments the shallowest corneal interface, and also show its generalization capacity to limbal datasets. We also propose a hybrid framework, wherein the cGAN pre-segmentation is passed to a traditional image analysis-based segmentation algorithm, and describe the improved segmentation performance. To the best of our knowledge, this is the first approach to remove severe specular artifacts and speckle noise patterns (prior to the shallowest interface) that affect the interpretation of anterior segment OCT datasets, thereby resulting in the accurate segmentation of the shallowest tissue interface.

💡 Research Summary

The paper addresses a long‑standing challenge in anterior segment optical coherence tomography (OCT): the shallowest tissue interfaces (e.g., the anterior corneal surface or limbal epithelium) are often obscured by strong specular reflections and high‑frequency speckle noise. Conventional segmentation methods, which work well for posterior retinal layers, struggle with these artifacts, leading to inaccurate boundary detection in corneal and limbal imaging. To overcome this, the authors propose a cascaded deep‑learning framework consisting of two stages.

In the first stage, a conditional Generative Adversarial Network (cGAN) is trained to produce a “clean” pre‑segmentation mask that removes specular highlights and the speckle pattern that lies above the shallow interface. The generator adopts a U‑Net‑like encoder‑decoder architecture with skip connections, while the discriminator distinguishes between the generated mask and expert‑annotated artifact‑free masks. The loss combines an L1 reconstruction term (weighted heavily to preserve structural fidelity) with the adversarial loss, encouraging the generator to suppress only the unwanted artifacts while keeping true anatomical cues intact.
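As a rough illustration of how an L1 term and an adversarial term can be combined into one generator objective, the sketch below uses plain Python lists in place of tensors. The function names, the non-saturating form of the adversarial term, and the default weight `l1_weight=100.0` are assumptions made for this example; the summary only states that the L1 term is weighted heavily.

```python
import math


def l1_loss(pred, target):
    """Mean absolute difference between two equally sized 2-D images."""
    total = 0.0
    count = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            total += abs(p - t)
            count += 1
    return total / count


def adversarial_loss(disc_prob_fake, eps=1e-12):
    """Non-saturating generator term: mean of -log D(x, G(x)).

    disc_prob_fake holds the discriminator's probabilities that the
    generated pre-segmentations are real (one value per sample or patch).
    """
    return -sum(math.log(max(p, eps)) for p in disc_prob_fake) / len(disc_prob_fake)


def generator_loss(pred, target, disc_prob_fake, l1_weight=100.0):
    """Adversarial term plus a heavily weighted L1 reconstruction term.

    l1_weight is a hypothetical value chosen for illustration only.
    """
    return adversarial_loss(disc_prob_fake) + l1_weight * l1_loss(pred, target)
```

With a large `l1_weight`, pixel-wise disagreement with the reference dominates the objective, while the adversarial term mainly discourages outputs the discriminator can easily tell apart from reference pre-segmentations.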

The second stage introduces the Tissue Interface Segmentation Network (TISN). TISN receives a two‑channel input: the original OCT B‑scan and the cGAN‑generated pre‑segmentation mask. It employs a multi‑scale convolutional backbone with dilated convolutions, again following a U‑Net design, and is optimized with a composite loss that balances Dice similarity and boundary‑precision terms. By feeding the artifact‑cleaned mask alongside the raw image, TISN can focus on the true tissue contrast and achieve pixel‑accurate delineation of the shallow interface.
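The two ideas in this stage, pairing the raw B-scan with the pre-segmentation and scoring overlap with a Dice term, can be sketched in a few lines. This is a minimal sketch using nested lists rather than tensors; `stack_channels` and `soft_dice_loss` are hypothetical helper names, and the boundary-precision term mentioned above is not modelled because its form is not given here.

```python
def stack_channels(bscan, preseg):
    """Build a channels-first (2, H, W) input from a B-scan and its pre-segmentation."""
    same_height = len(bscan) == len(preseg)
    same_width = all(len(a) == len(b) for a, b in zip(bscan, preseg))
    if not (same_height and same_width):
        raise ValueError("B-scan and pre-segmentation must have the same shape")
    return [bscan, preseg]


def soft_dice_loss(pred, target, eps=1e-6):
    """1 - Dice, computed on soft predictions in [0, 1] and a binary target."""
    intersection = 0.0
    pred_sum = 0.0
    target_sum = 0.0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            intersection += p * t
            pred_sum += p
            target_sum += t
    # eps keeps the ratio defined when both masks are empty.
    dice = (2.0 * intersection + eps) / (pred_sum + target_sum + eps)
    return 1.0 - dice
```

A perfect prediction gives a loss near 0 and a fully disjoint one gives a loss near 1, so the term rewards overlap regardless of how small the foreground region is.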

The authors assembled a dataset of roughly 2,000 anterior OCT B‑scans (1,500 corneal, 500 limbal) from Carnegie Mellon University and the University of Pittsburgh. Expert annotations provided both the ground‑truth shallow interface and the artifact‑free pre‑segmentation masks needed for cGAN training. Data augmentation (rotations, flips, intensity jitter) expanded the effective training set. Both networks were trained with Adam (learning rate 1e‑4, β1=0.9, β2=0.999), using batch size 8 and a learning‑rate decay after 50 epochs.
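One practical detail of augmenting segmentation data is that geometric transforms must be applied identically to the image and its annotation, whereas intensity changes apply to the image only. The sketch below shows this for a horizontal flip and intensity jitter; rotations are omitted for brevity. All function names, probabilities, and jitter ranges are assumptions for illustration and are not taken from the paper.

```python
import random


def hflip(image):
    """Mirror a 2-D image left to right."""
    return [row[::-1] for row in image]


def jitter_intensity(image, scale, offset):
    """Scale and shift intensities, clamping the result to [0, 1]."""
    return [[min(1.0, max(0.0, v * scale + offset)) for v in row] for row in image]


def augment_pair(image, mask, rng, flip_prob=0.5, max_scale_delta=0.1, max_offset=0.05):
    """Return an augmented (image, mask) pair.

    The flip is applied to both image and mask so they stay aligned;
    the intensity jitter touches only the image.
    """
    if rng.random() < flip_prob:
        image, mask = hflip(image), hflip(mask)
    scale = 1.0 + rng.uniform(-max_scale_delta, max_scale_delta)
    offset = rng.uniform(-max_offset, max_offset)
    return jitter_intensity(image, scale, offset), mask
```

Passing a seeded `random.Random` instance as `rng` makes the augmentation reproducible across runs.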

Quantitative evaluation used Dice coefficient, mean absolute error (MAE) in micrometers, and the 95th‑percentile Hausdorff distance. Compared with a baseline single‑CNN that directly segments the interface, the cascaded approach raised Dice from 0.92 to 0.96, reduced MAE from 3.2 µm to 1.8 µm, and halved the Hausdorff distance. The improvement was most pronounced in regions with intense specular reflections. The authors also tested a hybrid pipeline where the cGAN mask feeds a traditional graph‑based segmentation algorithm (minimum‑cost path). This hybrid achieved comparable MAE reductions (2.1 µm → 1.4 µm) while preserving compatibility with legacy clinical software, albeit with a modest increase in processing time.
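To make the minimum-cost-path idea and the MAE metric concrete, the sketch below traces one interface row per image column with dynamic programming and then converts the pixel error to micrometers. It is a generic formulation, not the specific algorithm used in the hybrid pipeline: the cost image (for example, one derived from the pre-segmentation so that the interface has low cost), the `max_jump` smoothness constraint, and the `axial_um_per_pixel` spacing are all assumptions.

```python
def min_cost_path(cost, max_jump=1):
    """Return one row index per column minimising the summed cost.

    Between adjacent columns the path may move at most max_jump rows.
    """
    height, width = len(cost), len(cost[0])
    # acc[r][c]: cheapest cost of any valid path ending at (r, c).
    acc = [[cost[r][0]] + [0.0] * (width - 1) for r in range(height)]
    back = [[0] * width for _ in range(height)]
    for c in range(1, width):
        for r in range(height):
            lo, hi = max(0, r - max_jump), min(height - 1, r + max_jump)
            best_prev = min(range(lo, hi + 1), key=lambda p: acc[p][c - 1])
            acc[r][c] = cost[r][c] + acc[best_prev][c - 1]
            back[r][c] = best_prev
    # Start from the cheapest end point and follow back-pointers.
    row = min(range(height), key=lambda r: acc[r][width - 1])
    path = [row]
    for c in range(width - 1, 0, -1):
        row = back[row][c]
        path.append(row)
    path.reverse()
    return path


def mean_absolute_error_um(pred_rows, true_rows, axial_um_per_pixel):
    """Mean absolute interface error, converted from pixels to micrometers."""
    diffs = [abs(p - t) for p, t in zip(pred_rows, true_rows)]
    return axial_um_per_pixel * sum(diffs) / len(diffs)


cost = [
    [9, 9, 9, 9],
    [1, 9, 9, 1],
    [9, 1, 1, 9],
]
path = min_cost_path(cost)  # follows the low-cost cells: [1, 2, 2, 1]
```

Suppressing artifacts above the interface before building the cost image removes spurious low-cost cells, which is one plausible reason a pre-segmentation helps a path-based method.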

Key contributions are: (1) a cGAN‑based pre‑processing module that learns to suppress anterior‑segment‑specific artifacts without manual filtering; (2) a TISN that leverages both raw and cleaned inputs to deliver high‑precision shallow‑interface segmentation; (3) demonstration of the framework’s generalization from corneal to limbal datasets and its synergy with conventional segmentation methods. Limitations include reliance on expert‑generated artifact‑free masks for cGAN training, which may hinder scalability to larger, more diverse datasets, and residual noise in extremely shadowed regions (e.g., post‑surgical corneas). Future work is suggested to explore semi‑supervised or unsupervised artifact removal, incorporate 3‑D volumetric continuity constraints, and integrate the pipeline into real‑time intra‑operative OCT systems for surgical guidance.
