Separake: Source Separation with a Little Help From Echoes


Authors: Robin Scheibler, Diego Di Carlo, Antoine Deleforge

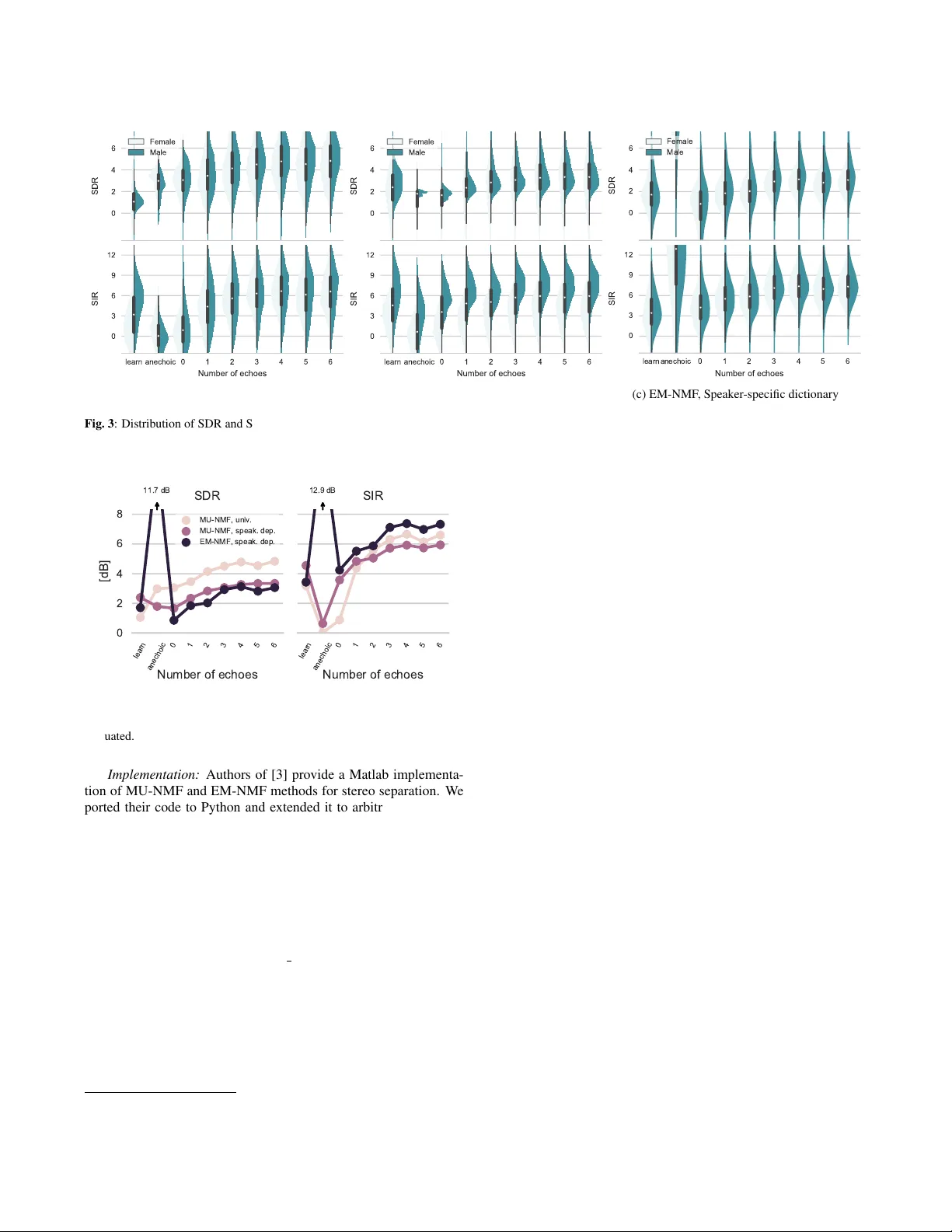

Robin Scheibler†, Diego Di Carlo‡, Antoine Deleforge‡, and Ivan Dokmanić§
† Tokyo Metropolitan University, Tokyo, Japan
‡ Inria Rennes - Bretagne Atlantique, France
§ Coordinated Science Lab, ECE, University of Illinois at Urbana-Champaign

ABSTRACT

It is commonly believed that multipath hurts various audio processing algorithms. At odds with this belief, we show that multipath in fact helps sound source separation, even with very simple propagation models. Unlike most existing methods, we neither ignore the room impulse responses, nor do we attempt to estimate them fully. We rather assume that we know the positions of a few virtual microphones generated by echoes, and we show how this gives us enough spatial diversity to get a performance boost over the anechoic case. We show improvements for two standard algorithms: one that uses only the magnitudes of the transfer functions, and one that also uses the phases. Concretely, we show that multichannel non-negative matrix factorization aided with a small number of echoes beats the vanilla variant of the same algorithm, and that with magnitude information only, echoes enable separation where it was previously impossible.

Index Terms: Source separation, echoes, room geometry, NMF, multi-channel.

1. INTRODUCTION

Source separation algorithms can be grouped according to how they deal with sound propagation: those that ignore it [1], those that assume a single anechoic path [2], those that model the room transfer functions (TFs) entirely [3, 4], and those that attempt to separately estimate the contribution of the early echoes and the contribution of the late tail [5]. In this paper we propose yet another route: we assume that we know the locations of a few walls relative to the microphone array, which enables us to exploit the associated virtual microphones.
This assumption is easy to satisfy in living rooms and conference rooms, but the corresponding model incurs a significant mismatch with respect to the complete reverberation. We show that it nonetheless gives sizable performance boosts while being simple to estimate.

A typical setup is illustrated in Figure 1. We consider $J$ sources emitting from $J$ distinct directions of arrival (DOAs) $\{\theta_j\}_{j=1}^{J}$, and an array of $M$ microphones. The array is placed close to a wall or a corner. This is useful for two reasons: first, it renders echoes from the nearby walls significantly stronger than all other echoes; second, it keeps the resulting virtual array (real and virtual microphones) compact. The latter justifies the far-field assumption, which in turn simplifies the exposition. Real and virtual microphones form dipoles with diverse, frequency-dependent directivity patterns. Our goal is to design algorithms that benefit from this known spatial diversity.

The research presented in this paper is reproducible. Code and data are available at dokmanic.ece.illinois.edu/separake.zip.

Ivan Dokmanić was supported by a Google Faculty Research Award.

Fig. 1: Typical setup with two speakers recorded by two microphones. The illustration shows the virtual microphone model (grey microphones) with direct sound paths (dotted lines) and the resulting first-order echoes (colored lines).

Echoes have been used previously to enhance various audio processing tasks. They have been shown to improve indoor beamforming [6, 7, 8], to aid sound source localization [9], and to enable low-resource microphone array self-localization [10]. However, they seem to be rarely analyzed in the context of source separation with non-negative source models.
Since most speech communication takes place indoors, we believe that our findings are relevant for modern applications and voice-based assistants like Amazon Echo or Google Home.

1.1. Our Goal and Main Findings

Our emphasis here is different from that in [5]. Rather than fitting the echo model, we aim to show that separation in the presence of echoes is in fact better than separation without echoes. We ask the following questions:

1. Is speech separation with echoes fundamentally easier than speech separation without echoes? Are there specific settings where this is true or false?
2. Is it necessary to fully model the reverberation, or can we get away with a geometric perspective where we know the locations of a few virtual microphones?

To answer these questions we set up several simple experiments. We take two standard, well-understood multi-channel source separation algorithms which estimate the channel (the TFs), and instead of updating the channel estimate we simply furnish the TFs of the real and a few virtual microphones. The first algorithm, non-negative matrix factorization (NMF) via multiplicative updates (MU), only uses the magnitudes of the transfer functions, while the second one, expectation maximization (EM), also uses the phases. In this initial investigation we look at the (over)determined case ($J \le M$); the analysis of the underdetermined case is postponed to a forthcoming journal version of this paper. Our findings can be summarized as follows:

• (MU) With magnitudes only, multi-channel anechoic separation is hardly any better than single-channel separation: since the magnitude of the transfer functions is the same at all microphones, channel modeling offers no diversity. The situation is different in rooms, where the direction-dependent magnitude of the TFs varies significantly from microphone to microphone.
  We show that replacing the transfer functions with a few echoes (even just one) gives significant performance gains compared to not modeling the TFs at all, and also that it does better than learning the TFs through multiplicative updates.
• (EM) With both phases and magnitudes, anechoic separation will be near-perfect since it corresponds to a determined linear system. Therefore, any uncertainty from imperfections in channel modeling will make things worse. Surprisingly, approximating the TFs with one echo matches learning them through EM updates, and using more echoes outperforms it.

For a sneak peek at the gains, fast forward to Figure 3.

2. MODELING

Suppose $J$ sources emit inside the room and we have $M$ microphones. Each microphone receives
$$y_m(t) = \sum_{j=1}^{J} c_{jm}(t),$$
with $c_{jm}$ being the spatial image of the $j$th source at the $m$th microphone. Spatial images are given as $c_{jm}(t) = (x_j * h_{jm})(t)$, where $h_{jm}$ is the room impulse response between source $j$ and microphone $m$. The room impulse response is a central object in this paper. We model it as
$$h_{jm}(t) = \sum_{k=0}^{K} \alpha_{jm}^{k}\,\delta\big(t - t_{jm}^{k}\big) + e_{jm}(t),$$
where the sum comprises the line-of-sight propagation and the earliest $K$ echoes we want to account for (at most 6 in this paper), while the error term $e_{jm}(t)$ collects later echoes and the tail of the reverberation. We do not assume $e_{jm}(t)$ to be known. We assume that the sources are in the far field of the real and virtual microphones, so the times $t_{jm}^{k}$ depend only on the source DOAs, which we assume are known. Assuming $K$ echoes per source are known, we can form an approximate TF from source $j$ to microphone $m$,
$$\widehat{H}_{jm}(e^{i\omega}) = \sum_{k=0}^{K} \alpha_{jm}^{k}\, e^{-i\omega t_{jm}^{k}}. \tag{1}$$
The far-field assumption implies that only the relative arrival times are known, so we can arbitrarily fix the delay of the direct path to zero.
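As an illustration of (1), the sketch below builds the approximate TF of a microphone from its image (virtual) microphones, obtained by mirroring the real microphone across nearby walls. It is a minimal 2D sketch under stated assumptions, not the authors' code: a speed of sound of 343 m/s, a plane-wave (far-field) source, one first-order echo per wall, and all $\alpha_{jm}^{k}$ set to 1. The helper names `mirror` and `approx_tf` and the corner geometry are hypothetical. The sketch also shows why echoes matter for magnitude-only methods: without walls $|\widehat{H}| = 1$ at every frequency, whereas with echoes the magnitude varies with frequency and differs between two nearby microphones.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s; assumed value, not taken from the paper


def mirror(point, wall_point, wall_normal):
    """Reflect a 2D point across the wall line through `wall_point` with normal `wall_normal`."""
    n = np.asarray(wall_normal, dtype=float)
    n = n / np.linalg.norm(n)
    p = np.asarray(point, dtype=float)
    return p - 2.0 * np.dot(p - np.asarray(wall_point, dtype=float), n) * n


def approx_tf(mic, walls, doa, freqs, alpha=1.0):
    """Approximate TF in the spirit of eq. (1): direct path plus one first-order echo per wall.

    mic   : (x, y) position of the real microphone in meters
    walls : list of (point_on_wall, normal) pairs, one image microphone per wall
    doa   : far-field direction of arrival of the source, in radians
    freqs : frequencies in Hz at which the TF is evaluated
    alpha : common attenuation shared by all paths (all alpha_jm^k equal)
    """
    # Real microphone followed by its image (virtual) microphones.
    positions = np.array([np.asarray(mic, dtype=float)]
                         + [mirror(mic, p, n) for p, n in walls])
    # Plane wave from the DOA: positions farther along u receive the wave earlier.
    u = np.array([np.cos(doa), np.sin(doa)])
    t = -(positions @ u) / SPEED_OF_SOUND
    t = t - t[0]  # only relative delays are known, so the direct path gets zero delay
    omega = 2.0 * np.pi * np.asarray(freqs, dtype=float)[:, None]
    return alpha * np.exp(-1j * omega * t[None, :]).sum(axis=1)


# Hypothetical corner formed by the walls x = 0 and y = 0, with two microphones nearby.
walls = [((0.0, 0.0), (1.0, 0.0)), ((0.0, 0.0), (0.0, 1.0))]
freqs = np.linspace(0.0, 8000.0, 65)
doa = np.deg2rad(40.0)

H_echo_1 = approx_tf((0.5, 0.3), walls, doa, freqs)
H_echo_2 = approx_tf((0.8, 0.3), walls, doa, freqs)
H_anechoic = approx_tf((0.5, 0.3), [], doa, freqs)  # K = 0: direct path only

print(np.round(np.abs(H_echo_1[:5]), 2))
print(np.round(np.abs(H_echo_2[:5]), 2))
```

Squaring these magnitudes gives per-microphone frequency profiles that change with the DOA, which is the kind of diversity the (MU) finding above relies on; in the anechoic case the profile is flat and identical at every microphone.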
In addition, we assume all walls to be spectrally flat in the frequency range of interest and set all $\alpha_{jm}^{k}$ to the same constant.

As usual, we process by frames. In the short-time Fourier transform (STFT) domain, the $m$th microphone signal reads
$$Y_m[f,n] = \sum_{j=1}^{J} \widehat{H}_{jm}[f]\, X_j[f,n] + B_m[f,n], \tag{2}$$
with $f$ and $n$ being the frequency and frame index, $X_j[f,n]$ the STFT of the $j$th source signal, and $B_m[f,n]$ a term including noise and model mismatch. It is convenient to group the microphone observations in vector form,
$$\mathbf{Y}[f,n] = \widehat{\mathbf{H}}[f]\,\mathbf{X}[f,n] + \mathbf{B}[f,n], \tag{3}$$
where $\mathbf{Y}[f,n] = \big[Y_m[f,n]\big]_m$, $\widehat{\mathbf{H}}[f] = \big[\widehat{H}_{jm}[f]\big]_{m,j}$, $\mathbf{X}[f,n] = \big[X_j[f,n]\big]_j$, and $\mathbf{B}[f,n] = \big[B_m[f,n]\big]_m$. Let the squared magnitude of the spectrogram of the $j$th source be $\mathbf{P}_j = \big[|X_j[f,n]|^2\big]_{fn}$. We postulate a non-negative factor model for $\mathbf{P}_j$:
$$\mathbf{P}_j = \mathbf{D}_j \mathbf{Z}_j, \tag{4}$$
where $\mathbf{D}_j$ is the non-negative dictionary and the latent variables $\mathbf{Z}_j$ are called activations. Source separation can then be cast as an inference problem in which we maximize the likelihood of the observed $\mathbf{Y}$ over all possible non-negative factorizations (4). This normally involves learning the channel (the frequency-domain mixing matrices). Instead of learning it, we fix the channel to the earliest few echoes.

3. SOURCE SEPARATION BY NMF

To evaluate the usefulness of echoes in source separation, we modify the multi-channel NMF framework of Ozerov and Févotte [3] as follows. First, we introduce a dictionary learned from available training data. We explore both speaker-specific and universal dictionaries [11]. Speaker-specific dictionaries can be beneficial when the speakers are known in advance. A universal dictionary is more versatile but gives a weaker regularization prior. Second, rather than learning the TFs from the data, we use the approximate model of (1). In the following we briefly describe the two algorithms used.

3.1. NMF using Multiplicative Updates (MU-NMF)

Multiplicative updates for NMF only involve the magnitudes and are simpler than the EM updates. They were originally proposed by Lee and Seung [12]. We use the Itakura-Saito divergence [13] between the observed multi-channel squared magnitude spectra $\mathbf{V}_m = \big[|Y_m[f,n]|^2\big]_{fn}$ and their non-negative factorizations,
$$\widehat{\mathbf{V}}_m = \sum_{j=1}^{J} \operatorname{diag}(\mathbf{Q}_{jm})\,\mathbf{D}_j\mathbf{Z}_j, \qquad m = 1, \ldots, M, \tag{5}$$
where $\mathbf{Q}_{jm} = \big[|\widehat{H}_{jm}[f]|^2\big]_f$ is the vector of squared magnitudes of the approximate TF between microphone $m$ and source $j$. We add an $\ell_1$ penalty term to promote sparsity in the activations, due to the potentially large size of the universal dictionary [11]. The cost function is thus
$$C_{\mathrm{MU}}(\{\mathbf{Z}_j\}) = \sum_{m,f,n} d_{\mathrm{IS}}\big(V_m[f,n] \,\big|\, \widehat{V}_m[f,n]\big) + \gamma \sum_{j} \|\mathbf{Z}_j\|_1, \tag{6}$$
where $d_{\mathrm{IS}}(v \mid \hat{v}) = \frac{v}{\hat{v}} - \log\frac{v}{\hat{v}} - 1$. By adapting the original MU rule derivations from Ozerov and Févotte, we obtain the following regularized MU update rule:
$$\mathbf{Z}_j \leftarrow \mathbf{Z}_j \odot \frac{\sum_{m} \big(\operatorname{diag}(\mathbf{Q}_{jm})\mathbf{D}_j\big)^{\top} \big(\mathbf{V}_m \odot \widehat{\mathbf{V}}_m^{-2}\big)}{\sum_{m} \big(\operatorname{diag}(\mathbf{Q}_{jm})\mathbf{D}_j\big)^{\top} \widehat{\mathbf{V}}_m^{-1} + \gamma}, \tag{7}$$
where multiplication ($\odot$), power, and division are element-wise.

Importantly, neglecting the reverberation (or working in the anechoic regime) leads to a constant $\mathbf{Q}_{jm}$ for all $j$ and $m$. A consequence is that the MU-NMF framework breaks down with a universal dictionary $\mathbf{D}$. Indeed, absorbing the constant into $\mathbf{D}$, (5) becomes the same for all $m$, $\widehat{\mathbf{V}}_m = \sum_j \mathbf{D}\mathbf{Z}_j = \mathbf{D}\sum_j \mathbf{Z}_j$, so even with the correct atoms chosen, we can assign them to any source without changing $\widehat{\mathbf{V}}_m$, and hence the cost. Therefore, anechoic multi-channel separation with a universal dictionary cannot work well. This intuitive reasoning is corroborated by the numerical experiments in Section 4.3. The problem is overcome by the EM-NMF algorithm, which keeps the channel phase and is thus able to exploit the phase diversity across the array. Of course, in line with the message of this paper, it is also overcome by using echoes.

3.2. NMF using Expectation Maximization (EM-NMF)

Unlike the MU algorithm, which independently maximizes the log-likelihood of the TF magnitudes, EM-NMF maximizes the joint log-likelihood over all complex-valued channels [3]. Hence, it takes the observed phases into account. Each source $j$ is modeled as the sum of components with complex Gaussian priors of the form $c_k[f,n] \sim \mathcal{CN}(0, d_{fk} z_{kn})$, such that
$$X_j[f,n] \sim \mathcal{CN}\big(0, (\mathbf{D}_j\mathbf{Z}_j)_{fn}\big), \tag{8}$$
and the magnitude spectrum $\mathbf{P}_j$ of (4) can be understood as the variance of source $j$. Under this model, and assuming uncorrelated noise, the microphone signals also follow a complex Gaussian distribution with covariance matrix
$$\boldsymbol{\Sigma}_{Y}[f,n] = \widehat{\mathbf{H}}[f]\,\boldsymbol{\Sigma}_{X}[f,n]\,\widehat{\mathbf{H}}^{H}[f] + \boldsymbol{\Sigma}_{B}[f,n], \tag{9}$$
where, for mutually independent sources, $\boldsymbol{\Sigma}_{X}[f,n]$ is the diagonal matrix of the source variances $(\mathbf{D}_j\mathbf{Z}_j)_{fn}$ from (8) and $\boldsymbol{\Sigma}_{B}[f,n]$ is the noise covariance. The negative log-likelihood of the observed signal is, up to an additive constant,
$$C_{\mathrm{EM}}(\{\mathbf{Z}_j\}) = \sum_{f,n} \Big[\operatorname{trace}\big(\mathbf{Y}[f,n]\mathbf{Y}[f,n]^{H}\boldsymbol{\Sigma}_{Y}^{-1}[f,n]\big) + \log\det\boldsymbol{\Sigma}_{Y}[f,n]\Big].$$
This quantity can be efficiently minimized using the EM algorithm proposed in [3]. We modify the original algorithm by fixing the source dictionaries $\mathbf{D}_j$ and the early-echo channel model $\widehat{\mathbf{H}}[f]$ throughout the iterations.

4. NUMERICAL EXPERIMENTS

We test our hypotheses through computer simulations. In the following, we describe the simulation setup and the dictionary learning protocols, and we discuss the results.

4.1. Setup

An array of three microphones arranged on the corners of an equilateral triangle with edge length 0.3 m is placed in the corner of a 3D room with 7 walls. We select 40 sources at random locations at a distance ranging from 2.5 m to 4 m from the microphone array. Pairs of sources are chosen so that they are at least 1 m apart. The floor plan and the locations of the microphones are depicted in Figure 2.

Fig. 2: On the left, a typical simulated RIR (time axis in seconds, from 0 to 0.15 s). On the right, the simulated scenario (floor plan with dimensions in meters).
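For reference in the experiments that follow, the regularized MU update (7) of Section 3.1 can be sketched in NumPy. This is a minimal sketch, not the authors' ported implementation: the array layout ($\mathbf{Q}$ as J×M×F, $\mathbf{D}$ as J×F×K, $\mathbf{Z}$ as J×K×N), the helper names `model`, `mu_sweep`, and `is_divergence`, the small `eps` guard, and the choice to refresh $\widehat{\mathbf{V}}$ after each source's update are assumptions made here. Random matrices stand in for trained dictionaries and echo-based channels. The last lines illustrate the anechoic degeneracy argued in Section 3.1.

```python
import numpy as np


def is_divergence(V, V_hat):
    """Itakura-Saito divergence d_IS(v | v_hat) summed over all entries."""
    R = V / V_hat
    return float(np.sum(R - np.log(R) - 1.0))


def model(Q, D, Z):
    """Eq. (5): V_hat[m] = sum_j diag(Q[j, m]) D[j] Z[j].

    Q: (J, M, F) squared TF magnitudes, D: (J, F, K) dictionaries,
    Z: (J, K, N) activations. Returns an (M, F, N) array.
    """
    J, M, _ = Q.shape
    return np.stack([
        sum(Q[j, m][:, None] * (D[j] @ Z[j]) for j in range(J))
        for m in range(M)
    ])


def mu_sweep(V, Q, D, Z, gamma=0.0, eps=1e-12):
    """One sweep of the regularized update (7) over all sources; D and Q stay fixed."""
    J, M, _ = Q.shape
    for j in range(J):
        V_hat = model(Q, D, Z) + eps  # refreshed after each source's update
        W = [Q[j, m][:, None] * D[j] for m in range(M)]  # diag(Q_jm) D_j
        num = sum(W[m].T @ (V[m] * V_hat[m] ** -2.0) for m in range(M))
        den = sum(W[m].T @ (1.0 / V_hat[m]) for m in range(M)) + gamma
        Z[j] *= num / den  # element-wise multiplicative update
    return Z


rng = np.random.default_rng(0)
J, M, F, K, N = 2, 3, 16, 4, 20
Q = rng.uniform(0.2, 2.0, (J, M, F))   # stand-in for echo-based |H_jm[f]|^2
D = rng.uniform(0.1, 1.0, (J, F, K))   # fixed, "pretrained" dictionaries
V = model(Q, D, rng.uniform(0.1, 1.0, (J, K, N)))  # noiseless synthetic spectra

Z = rng.uniform(0.1, 1.0, (J, K, N))
cost_before = is_divergence(V, model(Q, D, Z))
for _ in range(200):
    Z = mu_sweep(V, Q, D, Z)
cost_after = is_divergence(V, model(Q, D, Z))
print(cost_before, cost_after)

# Anechoic degeneracy: with a flat Q and a shared (universal) dictionary,
# swapping the activations of the two sources leaves every V_hat[m] unchanged.
Q_flat = np.ones((J, M, F))
D_shared = np.stack([D[0], D[0]])
print(np.allclose(model(Q_flat, D_shared, Z), model(Q_flat, D_shared, Z[::-1])))
```

A positive `gamma` adds the $\ell_1$ term of (6) to the denominator, which shrinks the activations toward zero. With a direction-dependent `Q`, the same swap does change $\widehat{\mathbf{V}}_m$, so the cost can tell the sources apart.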
The scenario is repeated for every pair of active sources, that is, for all 780 possible pairs. The sound propagation between sources and microphones is simulated using the image source model implemented in the pyroomacoustics Python package [14]. The wall absorption factor is set to 0.4, leading to a T60 of approximately 100 ms. An example RIR is shown in Figure 2. The sampling frequency is set to 16 kHz and the STFT frame size to 2048 samples with 50% overlap between frames, and we use a cosine window for analysis and synthesis. Partial TFs are then built from the $K$ nearest image microphones. The global delay is discarded.

With this setup, we perform three different experiments. In the first one, we evaluate MU-NMF with a universal dictionary. In the other two, we evaluate the performance of MU-NMF and EM-NMF with speaker-specific dictionaries. We vary $K$ from 1 to 6 and use three baseline scenarios:

1. learn: The TFs are learned from the data along with the activations, as originally proposed [3].
2. anechoic: Anechoic conditions, with neither echoes nor model mismatch.
3. no echoes: Reverberation is present but ignored (i.e., $K = 0$).

With the universal dictionary, the large number of latent variables warrants the introduction of sparsity-inducing regularization. The value of the regularization parameter γ was chosen by a grid search on a holdout set, with the signal-to-distortion ratio (SDR) [15] as the figure of merit (Table 1).

Table 1: Value of the regularization parameter γ used with the universal dictionary.

| TF model | learn | anechoic | K = 0 | K = 1 | K = 2 | K = 3 | K = 4 | K = 5 | K = 6 |
|----------|-------|----------|-------|-------|-------|-------|-------|-------|-------|
| γ        | 10⁻¹  | 10       | 10    | 10⁻³  | 0     | 0     | 0     | 0     | 0     |

4.2. Dictionary Training, Test Set, and Implementation

Universal Dictionary: Following the methodology of [11], we select 25 male and 25 female speakers and use all available training sentences to form the universal dictionary $\mathbf{D} = [\mathbf{D}^{M}_{1} \cdots \mathbf{D}^{M}_{25}\;\, \mathbf{D}^{F}_{1} \cdots \mathbf{D}^{F}_{25}]$. The test signals were selected from speakers and utterances outside the training set.
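The size of the universal dictionary follows from simple arithmetic: a 2048-sample frame gives 2048/2 + 1 = 1025 non-negative frequency bins, and 50 speakers with 10 atoms each give 500 columns. The sketch below assembles such a stacked dictionary with NumPy. Random matrices are hypothetical placeholders for the trained per-speaker dictionaries, and the helper `speaker_columns` is introduced here only to show where each speaker's atoms sit.

```python
import numpy as np

N_FFT = 2048                  # STFT frame size (Section 4.1)
N_BINS = N_FFT // 2 + 1       # one-sided spectrum of a real signal: 1025 bins
ATOMS_PER_SPEAKER = 10        # latent variables per speaker

rng = np.random.default_rng(0)
# Random non-negative placeholders for the per-speaker dictionaries, which the
# paper learns with NMF on TIMIT; only the shapes matter here.
male = [rng.random((N_BINS, ATOMS_PER_SPEAKER)) for _ in range(25)]
female = [rng.random((N_BINS, ATOMS_PER_SPEAKER)) for _ in range(25)]

# D = [D_1^M ... D_25^M  D_1^F ... D_25^F]: atoms side by side, male speakers first.
D = np.hstack(male + female)
print(D.shape)  # (1025, 500)


def speaker_columns(s, atoms=ATOMS_PER_SPEAKER):
    """Column slice of D holding the atoms of speaker s (0-based, male speakers first)."""
    return slice(s * atoms, (s + 1) * atoms)


first_female_block = D[:, speaker_columns(25)]
```

Because every source shares this single $\mathbf{D}$ in the universal-dictionary experiment, only the activations (and, with echoes, the channel) distinguish one source from the other.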
The number of latent variables per speaker is 10, so that with an STFT frame size of 2048 we have $\mathbf{D} \in \mathbb{R}^{1025 \times 500}$.

Speaker-Specific Dictionary: Two dictionaries were trained on one male and one female speaker. One utterance per speaker was excluded to be used for testing. The number of latent variables per speaker was set to 20.

All dictionaries were trained on samples from the TIMIT corpus [16] using the NMF solver in the scikit-learn Python package [17].

Fig. 3: Distribution of SDR and SIR for male and female speakers as a function of the number of echoes included in modeling, and comparison with the three baselines. Panels: (a) MU-NMF, universal dictionary; (b) MU-NMF, speaker-specific dictionary; (c) EM-NMF, speaker-specific dictionary. Each panel shows SDR and SIR versus the number of echoes (0 to 6), alongside the learn and anechoic baselines.

Fig. 4: Summary of the median SDR and SIR (in dB) for the different algorithms evaluated (MU-NMF with universal dictionary, MU-NMF with speaker-dependent dictionaries, EM-NMF with speaker-dependent dictionaries), as a function of the number of echoes and for the learn and anechoic baselines.

Implementation: The authors of [3] provide a Matlab implementation of the MU-NMF and EM-NMF methods for stereo separation. We ported their code to Python and extended it to an arbitrary number of input channels.¹ The number of iterations for MU-NMF (EM-NMF) was set to 200 (300), and simulated annealing in the EM-NMF implementation was disabled.

4.3. Results

We evaluate the performance in terms of the signal-to-distortion ratio (SDR) and the source-to-interference ratio (SIR) as defined in [15]. We compute these metrics using the mir_eval toolbox [18].

The distributions of SDR and SIR resulting from separation using MU-NMF and a universal dictionary are shown in Figure 3a, with a summary in Figure 4. We use the median performance to compare the results from different algorithms. First, we confirm that separation fails for flat TFs (anechoic and $K = 0$), with SIR around 0 dB.
Learning the TFs performs somewhat better in terms of SIR than in terms of SDR, though both are low. Introducing approximate TFs dramatically improves performance: we outperform the learned approach even with a single echo. With up to six echoes, the gains are +2 dB SDR and +5 dB SIR. Interestingly, with more than one echo, $\ell_1$ regularization becomes unnecessary; non-negativity and echo priors are sufficient to separate the sources.

¹ Our implementation and all experimental code are publicly available, in line with the philosophy of reproducible research.

Separation with speaker-dependent dictionaries is less challenging since we have a stronger prior. Accordingly, as shown in Figures 3b and 4, MU-NMF now achieves some separation even without channel information. The gains from using echoes are smaller, though one echo is still sufficient to match the median performance of learned TFs. Using an echo, however, results in a smaller variance, which was surprising, at least to the authors of this paper. Adding more echoes further improves SDR (SIR) by up to +2 dB (+3 dB).

In the same scenario, EM-NMF (Figure 3c) has near-perfect performance on anechoic signals, which is expected as the problem is overdetermined. As for MU, a single echo suffices to reach the performance of learned TFs, and more echoes further improve it. Moreover, echoes significantly improve separation power, as illustrated by an improvement of up to 3 dB over learn.

It is interesting to note that in all experiments the first three echoes almost saturate the metrics. This is good news, since higher-order echoes are hard to estimate.

5. CONCLUSION

In this paper we began studying the role of early echoes in computational auditory scene analysis, in particular in source separation. We found that a simple echo model not only improves performance, but also enables separation in conditions where it is not normally possible, for example with certain non-negative speaker-independent models.
Echoes seem to play an essential role in magnitude-only algorithms like non-negative matrix factorization via multiplicative updates. They improve separation, as measured by standard metrics, even when compared to approaches that learn the transfer functions.

We believe these results are only a first step in understanding the potential of echoes in computational auditory scene analysis. They suggest that the simple models used in this paper could serve as regularizers in other common audio processing tasks. Ongoing work includes running real experiments, studying the underdetermined case, and blindly estimating the wall parameters.

6. REFERENCES

[1] J. Le Roux, J. R. Hershey, and F. Weninger, "Deep NMF for speech separation," in IEEE ICASSP. IEEE, 2015, pp. 66–70.
[2] S. Rickard, "The DUET blind source separation algorithm," Blind Speech Separation, pp. 217–241, 2007.
[3] A. Ozerov and C. Févotte, "Multichannel nonnegative matrix factorization in convolutive mixtures for audio source separation," IEEE Trans. Audio, Speech, Language Process., vol. 18, no. 3, pp. 550–563, 2010.
[4] A. A. Nugraha, A. Liutkus, and E. Vincent, "Multichannel audio source separation with deep neural networks," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 24, no. 9, pp. 1652–1664, 2016.
[5] S. Leglaive, R. Badeau, and G. Richard, "Multichannel audio source separation with probabilistic reverberation modeling," in IEEE WASPAA. IEEE, 2015, pp. 1–5.
[6] I. Dokmanić, R. Scheibler, and M. Vetterli, "Raking the Cocktail Party," IEEE J. Sel. Top. Signal Process., vol. PP, no. 99, pp. 1–12, 2015.
[7] R. Scheibler, I. Dokmanić, and M. Vetterli, "Raking Echoes in the Time Domain," in IEEE ICASSP, Brisbane, 2015.
[8] R. Scheibler, "Rake, Peel, Sketch," Ph.D. dissertation, IC, Lausanne, 2017.
[9] F. Ribeiro, D. E. Ba, and C. Zhang, "Turning Enemies Into Friends: Using Reflections to Improve Sound Source Localization," ICME, 2010.
[10] I. Dokmanić, L. Daudet, and M. Vetterli, "From acoustic room reconstruction to SLAM," in 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2016, pp. 6345–6349.
[11] D. L. Sun and G. J. Mysore, "Universal speech models for speaker independent single channel source separation," IEEE ICASSP, pp. 141–145, 2013.
[12] D. D. Lee and H. S. Seung, "Algorithms for non-negative matrix factorization," in Advances in Neural Information Processing Systems 13, T. K. Leen, T. G. Dietterich, and V. Tresp, Eds. MIT Press, 2001, pp. 556–562. [Online]. Available: http://papers.nips.cc/paper/1861-algorithms-for-non-negative-matrix-factorization.pdf
[13] C. Févotte and J. Idier, "Algorithms for nonnegative matrix factorization with the β-divergence," Neural Computation, vol. 23, no. 9, pp. 2421–2456, 2011.
[14] R. Scheibler, E. Bezzam, and I. Dokmanić, "Pyroomacoustics: A Python package for audio room simulations and array processing algorithms," arXiv preprint arXiv:1710.04196, 2017.
[15] E. Vincent, H. Sawada, P. Bofill, S. Makino, and J. P. Rosca, "First stereo audio source separation evaluation campaign: data, algorithms and results," in International Conference on Independent Component Analysis and Signal Separation. Springer, 2007, pp. 552–559.
[16] J. S. Garofolo, L. F. Lamel, W. M. Fisher, J. G. Fiscus, D. S. Pallett, N. L. Dahlgren, and V. Zue, "TIMIT acoustic-phonetic continuous speech corpus," Linguistic Data Consortium, vol. 10, no. 5, p. 0, 1993.
[17] F. Pedregosa, G. Varoquaux, A. Gramfort, V. Michel, B. Thirion, O. Grisel, M. Blondel, P. Prettenhofer, R. Weiss, V. Dubourg et al., "Scikit-learn: Machine learning in Python," Journal of Machine Learning Research, vol. 12, no. Oct, pp. 2825–2830, 2011.
[18] C. Raffel, B. McFee, E. J. Humphrey, J. Salamon, O. Nieto, D. Liang, D. P. Ellis, and C. C. Raffel, "mir_eval: A transparent implementation of common MIR metrics," in Proceedings of the 15th International Society for Music Information Retrieval Conference, ISMIR. Citeseer, 2014.
