Creating Lightweight Object Detectors with Model Compression for Deployment on Edge Devices

This paper proposes an effective model compression pipeline for building lightweight object detectors that can be deployed on edge devices. The pipeline consists of automatic channel pruning for the backbone, fixed channel deletion for the branch layers, and knowledge distillation for guided learning. First, ResNet50-v1d is auto-pruned and fine-tuned on ImageNet to obtain a compact base model that serves as the backbone of the object detector. Lightweight object detectors are then built with the proposed compression pipeline. For instance, the resulting SSD-300 has a model size of 16.3 MB, requires 2.31 G FLOPs, and reaches an mAP of 71.2, a better result than SSD-300-MobileNet.

💡 Research Summary

The paper presents a systematic model‑compression pipeline for producing lightweight object detectors that can run on edge devices with limited memory and compute resources. The authors begin by identifying the shortcomings of existing lightweight backbones such as MobileNet and ShuffleNet, which, while small, often sacrifice accuracy when paired with detection heads that have different structural requirements. To address this, they propose a pipeline with three complementary stages: automatic channel pruning of the backbone, fixed‑channel deletion for the detection‑head branches, and knowledge distillation to guide the compressed student model toward the performance of a larger teacher model.

In the first stage, a ResNet‑50‑v1d backbone is subjected to an iterative, data‑driven channel‑pruning process. Channel importance is quantified by a combined score derived from the absolute values of batch‑normalization scaling factors (γ) and the L1‑norm of each channel’s weights. Low‑scoring channels are removed according to a pre‑specified pruning ratio (e.g., 30 %). After each pruning step, the network is fine‑tuned on ImageNet to recover lost representational capacity, ensuring that the compact backbone retains strong feature‑extraction abilities.
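The scoring and selection step described above can be sketched in plain Python. This is a minimal illustration rather than the authors' implementation: the summary does not say how the two signals are combined, so the product of |γ| and the L1 weight norm used here is an assumption, and the names `channel_scores` and `select_channels` are hypothetical.

```python
from typing import List, Sequence


def channel_scores(gammas: Sequence[float],
                   weights: Sequence[Sequence[float]]) -> List[float]:
    """Score each output channel; a higher score means a more important channel.

    Assumption: score = |gamma| * L1 norm of the channel's (flattened) weights.
    The source only says the two quantities are combined, not how.
    """
    if len(gammas) != len(weights):
        raise ValueError("need one gamma per channel")
    return [abs(g) * sum(abs(w) for w in ws) for g, ws in zip(gammas, weights)]


def select_channels(scores: Sequence[float], prune_ratio: float) -> List[int]:
    """Return the sorted indices of channels kept after pruning.

    The lowest-scoring fraction `prune_ratio` of channels is removed
    (rounded down to a whole number of channels).
    """
    if not 0.0 <= prune_ratio < 1.0:
        raise ValueError("prune_ratio must be in [0, 1)")
    n_prune = int(len(scores) * prune_ratio)
    order = sorted(range(len(scores)), key=lambda i: scores[i])  # ascending
    return sorted(order[n_prune:])


# Toy layer with four channels, each holding two weights (illustrative values).
gammas = [0.9, -0.01, 0.5, 0.2]
weights = [[1.0, -1.0], [2.0, 2.0], [0.1, 0.1], [1.0, 0.0]]
scores = channel_scores(gammas, weights)
kept = select_channels(scores, prune_ratio=0.3)
```

In this toy case a ratio of 0.3 removes one of the four channels, the one whose near-zero γ gives it the lowest score. In the pipeline described above, each such step would be followed by fine-tuning before pruning further.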

The second stage targets the SSD‑300 detection head, which consists of multiple scale‑specific convolutional branches (e.g., Conv4‑3, Conv7, Conv8). Rather than pruning these branches independently, the authors enforce a fixed channel count across all branches. This “fixed‑channel deletion” simplifies memory access patterns and aligns the model’s tensor shapes with the constraints of typical edge accelerators, thereby reducing latency and improving cache utilization.
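One way to picture a fixed channel count across branches is to keep the same number of channels in every branch, whatever its original width. The sketch below assumes that per-channel importance scores are already available and that the highest-scoring channels are the ones kept; the summary does not state how the deleted channels are chosen, so both the selection rule and the name `keep_fixed_width` are hypothetical.

```python
from typing import Dict, List, Sequence


def keep_fixed_width(branch_scores: Dict[str, Sequence[float]],
                     width: int) -> Dict[str, List[int]]:
    """Keep exactly `width` channels in every branch.

    Assumption: within each branch, the `width` highest-scoring channels
    are retained. Returns the sorted kept indices per branch.
    """
    kept: Dict[str, List[int]] = {}
    for name, scores in branch_scores.items():
        if width > len(scores):
            raise ValueError(f"branch {name!r} has only {len(scores)} channels")
        top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
        kept[name] = sorted(top[:width])
    return kept


# Two toy branches of different widths (illustrative scores only).
branches = {
    "conv4_3": [0.3, 0.9, 0.1, 0.5],
    "conv7": [0.2, 0.8, 0.7],
}
kept = keep_fixed_width(branches, width=2)
```

After this step every branch has the same output width, so downstream tensors share one channel dimension regardless of how wide each branch was originally.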

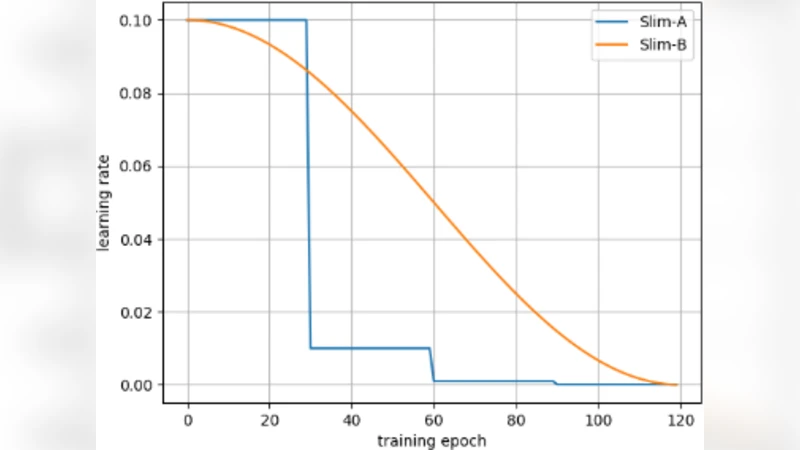

The final stage introduces knowledge distillation. The compressed student detector is trained to mimic both the class‑logits and intermediate feature maps of the original, unpruned teacher model. The loss function blends a Kullback‑Leibler divergence term (for soft‑label alignment) with an L2 distance term (for feature‑map alignment), weighted by hyper‑parameters α and β. This dual‑objective distillation helps the student recover accuracy lost during pruning and channel reduction.
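The blended loss described above can be written out for a single prediction in plain Python. This is a sketch under stated assumptions: the `temperature` parameter, the use of a mean (rather than a sum) for the feature term, and all function names are illustrative choices not given in the summary; only the KL term, the L2 term, and the weights α and β come from the text.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax; `temperature` is an assumed extra knob."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float],
                  eps: float = 1e-12) -> float:
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log((pi + eps) / (qi + eps))
               for pi, qi in zip(p, q) if pi > 0.0)


def feature_l2(student: Sequence[float], teacher: Sequence[float]) -> float:
    """Mean squared difference between flattened feature maps (assumed form)."""
    if len(student) != len(teacher):
        raise ValueError("feature maps must have the same size")
    return sum((s - t) ** 2 for s, t in zip(student, teacher)) / len(student)


def distillation_loss(student_logits: Sequence[float],
                      teacher_logits: Sequence[float],
                      student_feat: Sequence[float],
                      teacher_feat: Sequence[float],
                      alpha: float = 1.0,
                      beta: float = 1.0,
                      temperature: float = 1.0) -> float:
    """alpha * KL(teacher || student) + beta * L2(student_feat, teacher_feat)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    soft_term = kl_divergence(p_teacher, p_student)
    feat_term = feature_l2(student_feat, teacher_feat)
    return alpha * soft_term + beta * feat_term


# A student that matches the teacher exactly incurs (numerically) zero loss.
same = distillation_loss([2.0, 0.0], [2.0, 0.0], [1.0, 2.0], [1.0, 2.0])
# A mismatched student is penalized by both terms.
diff = distillation_loss([0.0, 0.0], [2.0, 0.0], [1.0, 2.0], [1.0, 4.0])
```

Raising α pushes the student toward the teacher's class distribution, while raising β pushes its intermediate features toward the teacher's; in practice this term would be added to the detector's usual training loss.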

Extensive experiments on COCO and PASCAL VOC demonstrate the effectiveness of the pipeline. The resulting SSD‑300 model, equipped with the pruned ResNet‑50‑v1d backbone and fixed‑channel head, occupies only 16.3 MB, requires 2.31 G FLOPs, and achieves a mean average precision (mAP) of 71.2. In contrast, the widely used SSD‑300‑MobileNet baseline occupies 17.5 MB, consumes 2.45 G FLOPs, and reaches an mAP of roughly 68.0. Ablation studies confirm that each component contributes meaningfully: pruning alone yields 68.5 mAP, fixed‑channel deletion alone yields 69.0 mAP, and distillation alone yields 70.1 mAP; the full combination reaches the best performance. Real‑time inference tests on Raspberry Pi 4 and NVIDIA Jetson Nano show sustained frame rates above 30 FPS, confirming suitability for edge deployment.

The authors conclude that a tightly coupled combination of automatic backbone pruning, structurally aware head simplification, and teacher‑student distillation can produce object detectors that are both compact and accurate. They suggest future work on integrating quantization, hardware‑aware neural architecture search, and extending the pruning methodology to transformer‑based backbones, thereby further pushing the limits of edge‑centric computer vision.
