A Splitting-Based Iterative Algorithm for GPU-Accelerated Statistical Dual-Energy X-Ray CT Reconstruction

When dealing with material classification in baggage at airports, Dual-Energy Computed Tomography (DECT) allows characterization of any given material with coefficients based on two attenuative effects: Compton scattering and photoelectric absorption. However, straightforward projection-domain decomposition methods for this characterization often yield poor reconstructions due to the high dynamic range of material properties encountered in an actual luggage scan. Hence, for better reconstruction quality under a timing constraint, we propose a splitting-based, GPU-accelerated, statistical DECT reconstruction algorithm. Compared to prior art, our main contribution lies in the significant acceleration made possible by separating reconstruction and decomposition within an ADMM framework. Experimental results, on both synthetic and real-world baggage phantoms, demonstrate a significant reduction in time required for convergence.

💡 Research Summary

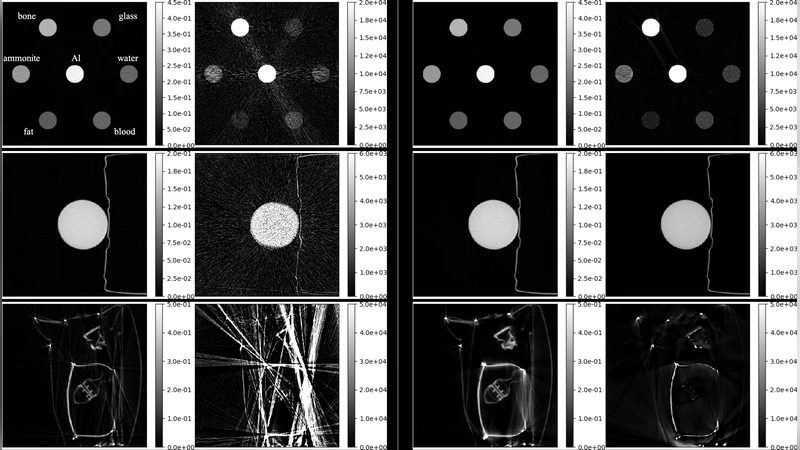

The paper addresses the challenge of achieving fast yet accurate dual‑energy computed tomography (DECT) for material classification in airport baggage screening. Traditional projection‑domain decomposition methods, such as the Constrained Decomposition Method (CDM) followed by filtered back‑projection (FBP), are highly parallelizable but suffer from severe streaking artifacts, especially in the photo‑electric (PE) component, due to the large dynamic range between Compton scattering and PE attenuation. On the other hand, statistical MAP‑based iterative reconstructions provide higher fidelity by incorporating priors (e.g., total variation) and modeling Poisson noise, yet they are computationally intensive because they must solve a large‑scale, non‑linear optimization problem that couples reconstruction and energy decomposition.

The authors propose a novel splitting‑based algorithm within an Alternating Direction Method of Multipliers (ADMM) framework that explicitly separates the reconstruction step from the dual‑energy decomposition step. Starting from the MAP formulation (Equation 4), they introduce auxiliary variables for the TV regularizer and the non‑negativity constraint, leading to a constrained problem (Equation 5) and its augmented Lagrangian (Equation 6). Instead of solving the coupled non‑linear sub‑problems (Equations 7‑8) with a monolithic Levenberg‑Marquardt (LM) plus Conjugate Gradient (CG) approach, they further split each ADMM iteration into two simpler sub‑problems:

- Tomographic reconstruction sub‑problem: By introducing an auxiliary variable a = e·R·x, the authors reformulate the update of the primal variables (x_c, x_p) as a weighted least‑squares problem (Equation 12). This linear system is large, sparse, and shift‑invariant, allowing the use of a preconditioned Conjugate Gradient (PCG) solver. A high‑pass ramp filter serves as the preconditioner, accelerating convergence.

- Dual‑energy decomposition sub‑problem: With the reconstructed line integrals fixed, each ray is processed independently to estimate the Compton and PE coefficients that best match the measured projections. This is performed using the Unconstrained Decomposition Method (UDM), which still employs LM for the small non‑linear fit but now operates on a per‑ray basis, making it embarrassingly parallel.
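As a rough illustration of the reconstruction sub‑problem, the sketch below solves a small weighted least‑squares system with preconditioned CG. It is not the paper's implementation: the matrix `R`, the weights `w`, and the data `a` are random toy stand‑ins, and a simple diagonal (Jacobi) preconditioner replaces the ramp‑filter preconditioner described above.

```python
import numpy as np

def pcg(apply_A, b, apply_Minv, x0, n_iter=50, tol=1e-10):
    """Preconditioned conjugate gradient for a symmetric positive-definite system A x = b."""
    x = x0.copy()
    r = b - apply_A(x)          # residual
    z = apply_Minv(r)           # preconditioned residual
    p = z.copy()                # search direction
    rz = r @ z
    b_norm = np.linalg.norm(b)
    for _ in range(n_iter):
        if np.linalg.norm(r) <= tol * b_norm:
            break
        Ap = apply_A(p)
        alpha = rz / (p @ Ap)
        x += alpha * p
        r -= alpha * Ap
        z = apply_Minv(r)
        rz_new = r @ z
        p = z + (rz_new / rz) * p
        rz = rz_new
    return x

# Toy problem (all values are assumptions for illustration only).
rng = np.random.default_rng(0)
R = rng.standard_normal((40, 10))     # stand-in for the projection operator
w = rng.uniform(0.5, 2.0, size=40)    # per-ray statistical weights
a = rng.standard_normal(40)           # stand-in for the line integrals to fit

def normal_op(x):
    """Apply R^T W R without forming the matrix explicitly."""
    return R.T @ (w * (R @ x))

rhs = R.T @ (w * a)
diag = np.einsum("ij,i,ij->j", R, w, R)   # diagonal of R^T W R (Jacobi preconditioner)

x_pcg = pcg(normal_op, rhs, lambda r: r / diag, np.zeros(10))
x_ref = np.linalg.solve(R.T @ (w[:, None] * R), rhs)   # dense reference solution
```

In a real CT setting `normal_op` would be built from forward and back‑projection calls rather than a dense matrix, which is why a matrix‑free solver such as PCG is a natural fit.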

The key advantages of this split are a dramatic reduction in the number of arithmetic operations per ADMM iteration (Table 1) and the ability to map both sub‑problems efficiently onto GPUs. The forward and back‑projection operations are handled by the ASTRA Toolbox, while the per‑ray LM updates are parallelized with Gpufit. Consequently, the average time per iteration drops from ~0.12 s for the monolithic LM plus CG baseline to ~0.04 s for the proposed UDM‑PCG scheme on an NVIDIA GTX 1080 Ti, roughly a threefold reduction per iteration.
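To make the "embarrassingly parallel" structure of the per‑ray decomposition concrete, here is a minimal NumPy sketch that runs a damped Levenberg‑Marquardt fit on every ray at once using batched 2×2 solves. It does not use Gpufit, and the spectra `S` and basis values `F` are hypothetical toy numbers chosen only so the example runs; the paper's actual spectral model is not reproduced here.

```python
import numpy as np

# Hypothetical 3-bin spectra and basis functions (illustration only).
S = np.array([[0.7, 0.3, 0.0],      # low-energy spectrum weights
              [0.1, 0.4, 0.5]])     # high-energy spectrum weights
F = np.array([[0.20, 0.18, 0.16],   # Compton basis per energy bin
              [1.00, 0.30, 0.10]])  # photoelectric basis per energy bin

def forward(a):
    """Map (N, 2) Compton/PE line integrals to (N, 2) log-projections."""
    t = np.exp(-a @ F)               # (N, 3) transmission per energy bin
    return -np.log(t @ S.T)          # (N, 2) low/high measurements

def jacobian(a):
    """Per-ray Jacobian J[n, k, j] = d m_k / d a_j, shape (N, 2, 2)."""
    t = np.exp(-a @ F)
    den = t @ S.T
    num = np.einsum("ke,je,ne->nkj", S, F, t)
    return num / den[:, :, None]

def decompose(m, n_iter=50, mu0=1e-2):
    """Levenberg-Marquardt on all rays at once; each ray keeps its own damping."""
    n = m.shape[0]
    a = np.zeros((n, 2))
    mu = np.full(n, mu0)
    r = forward(a) - m
    cost = np.sum(r**2, axis=1)
    eye = np.eye(2)
    for _ in range(n_iter):
        J = jacobian(a)
        JT = np.transpose(J, (0, 2, 1))
        H = JT @ J + mu[:, None, None] * eye      # damped normal matrices
        g = JT @ r[:, :, None]                    # gradients, shape (N, 2, 1)
        step = np.linalg.solve(H, -g)[:, :, 0]    # batched 2x2 solves
        a_try = a + step
        r_try = forward(a_try) - m
        cost_try = np.sum(r_try**2, axis=1)
        ok = cost_try < cost                      # accept only improving steps
        a[ok], r[ok], cost[ok] = a_try[ok], r_try[ok], cost_try[ok]
        mu = np.where(ok, mu * 0.3, mu * 10.0)    # relax or tighten damping
    return a

rng = np.random.default_rng(1)
a_true = rng.uniform([0.5, 0.1], [3.0, 1.0], size=(1000, 2))
m = forward(a_true)                 # noiseless synthetic measurements
a_est = decompose(m)
```

Because no ray depends on any other, the loop body is a set of independent 2×2 problems, which is the property that lets a GPU library assign one small fit per thread.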

Experimental validation is performed on three datasets: a synthetic phantom (sim18), a real water‑bottle phantom (Water), and a cluttered baggage phantom containing metallic objects (Clutter). All experiments use the same regularization parameters (λ = 10⁻⁵, ρ = 10⁻³) and initialize the Compton map with CDM‑FBP. Reconstruction quality is measured by the normalized ℓ₂ distance to a reference image x*, expressed in decibels: ξ(x) = 20 log₁₀(‖x−x*‖₂/‖x*‖₂), so lower (more negative) values indicate a closer match. Results show that the proposed method reaches comparable or lower ξ values in far fewer iterations and with substantially lower wall‑clock time. Visual inspection confirms that the PE images produced by the split algorithm are largely free of the streaking artifacts that dominate CDM‑FBP, and that object shapes in the cluttered phantom are recovered with reasonable fidelity.
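The error metric above is straightforward to compute. The helper below is a small sketch (the function name `xi_db` and the toy arrays are assumptions, not taken from the paper); a uniform 10% relative error corresponds to about −20 dB.

```python
import numpy as np

def xi_db(x, x_ref):
    """Normalized l2 distance between x and a reference x_ref, in decibels."""
    x = np.asarray(x, dtype=float).ravel()
    x_ref = np.asarray(x_ref, dtype=float).ravel()
    return 20.0 * np.log10(np.linalg.norm(x - x_ref) / np.linalg.norm(x_ref))

x_ref = np.ones((10, 10))      # toy reference image
x = 1.1 * x_ref                # estimate with a uniform 10% error
err_db = xi_db(x, x_ref)       # approximately -20 dB
```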

In conclusion, the paper demonstrates that separating reconstruction and decomposition within an ADMM scheme enables the use of algorithmic components (PCG and per‑ray LM) that are naturally suited to GPU acceleration, yielding a speed‑up of 2–3× over the state‑of‑the‑art while preserving or improving image quality. Future work will extend the approach to full 3‑D volumes, explore more sophisticated initialization strategies (potentially deep‑learning based), and investigate extensions to multi‑energy (>2) CT.
