Recognition of Acoustic Events Using Masked Conditional Neural Networks

Automatic feature extraction using neural networks has achieved remarkable success for images, but for sound recognition, these models are usually modified to fit the nature of the multi-dimensional temporal representation of the audio signal in spectrograms. This may not efficiently harness the time-frequency representation of the signal. The ConditionaL Neural Network (CLNN) takes into consideration the interrelation between the temporal frames, and the Masked ConditionaL Neural Network (MCLNN) extends upon the CLNN by forcing a systematic sparseness over the network’s weights using a binary mask. The masking allows the network to learn about frequency bands rather than bins, mimicking a filterbank used in signal transformations such as MFCC. Additionally, the mask is designed to consider various combinations of features, which automates the feature hand-crafting process. We applied the MCLNN to the Environmental Sound Recognition problem using the UrbanSound8K, YorNoise, ESC-10 and ESC-50 datasets. The MCLNN has achieved competitive performance compared to state-of-the-art Convolutional Neural Networks and hand-crafted attempts.

💡 Research Summary

The paper addresses a fundamental mismatch between conventional deep‑learning architectures, originally designed for static images, and the intrinsically temporal nature of audio spectrograms. While convolutional neural networks (CNNs) have achieved remarkable success in visual domains, they treat spectrograms as two‑dimensional images and consequently ignore the strong inter‑frame dependencies that characterize sound signals. To bridge this gap, the authors introduce the Conditional Neural Network (CLNN), a model that explicitly incorporates a context window of neighboring frames (typically ±2 frames) when processing each time step. CLNN assigns one weight matrix to each frame position in the window and shares these matrices across all time steps, thereby preserving temporal continuity while keeping the parameter count modest.
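The per-frame computation of such a conditional layer can be sketched in NumPy. This is a minimal illustration, not the authors' implementation: it assumes the layer sums one matrix product per frame offset u = -n..n (so n = 2 gives the five-frame window) and applies a ReLU; the function name `conditional_layer` and the ReLU choice are our own assumptions.

```python
import numpy as np


def conditional_layer(x, weights, bias):
    """Sketch of a CLNN-style conditional layer (hypothetical helper).

    x:       (frames, features) segment of a spectrogram
    weights: (2n + 1, features, hidden), one matrix W_u per offset u = -n..n,
             shared by every time step
    bias:    (hidden,) float array
    Returns (frames - 2n, hidden): one output per frame with a full context.
    """
    window = weights.shape[0]
    n = window // 2
    frames = x.shape[0]
    out = np.empty((frames - 2 * n, weights.shape[2]))
    for t in range(n, frames - n):
        acc = bias.copy()
        # Each neighboring frame x[t + u] contributes through its own matrix W_u.
        for u in range(-n, n + 1):
            acc += x[t + u] @ weights[u + n]
        out[t - n] = np.maximum(acc, 0.0)  # ReLU: an assumed activation
    return out


# Usage: a 10-frame, 128-bin segment with a five-frame window (n = 2).
rng = np.random.default_rng(0)
x = rng.standard_normal((10, 128))
w = 0.01 * rng.standard_normal((5, 128, 256))
b = np.zeros(256)
print(conditional_layer(x, w, b).shape)  # (6, 256)
```

Frames within n of either edge of the segment lack a full context, so this sketch emits fewer outputs than input frames; padding the segment would be an alternative design choice.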

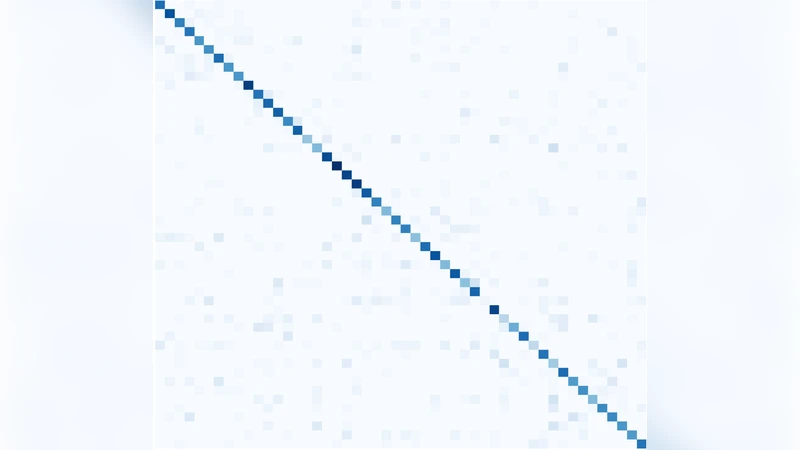

Building on CLNN, the paper proposes the Masked Conditional Neural Network (MCLNN). The key innovation is a binary mask applied to the weight matrices, enforcing a systematic sparsity pattern. The mask is defined by two hyper‑parameters: bandwidth (the number of consecutive frequency bins a neuron can see) and stride (the step size between successive receptive bands). This design forces each neuron to attend to a limited, contiguous frequency band, mimicking a filter‑bank such as the Mel filter‑bank used to compute Mel‑frequency cepstral coefficients (MFCC). Because the mask can be shifted and overlapped, the network automatically explores many combinations of frequency bands, effectively automating the feature hand‑crafting process that traditionally requires expert knowledge.
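One way to build such a band mask is sketched below, assuming hidden unit j is connected to `bandwidth` consecutive bins starting at bin `(j * stride) % n_features` and wrapping past the highest bin. This placement rule, the helper name `band_mask`, and applying the same mask to every frame-offset matrix of the conditional weights are illustrative assumptions rather than the paper's exact definition. Under this rule, neighboring bands overlap whenever `stride < bandwidth`.

```python
import numpy as np


def band_mask(n_features, n_hidden, bandwidth, stride):
    """Binary mask of shape (n_features, n_hidden) (hypothetical helper).

    Column j (hidden unit j) is 1 on `bandwidth` consecutive frequency bins
    starting at bin (j * stride) % n_features, wrapping past the top bin.
    This placement rule is an illustrative assumption.
    """
    mask = np.zeros((n_features, n_hidden))
    for j in range(n_hidden):
        start = (j * stride) % n_features
        rows = (start + np.arange(bandwidth)) % n_features
        mask[rows, j] = 1.0
    return mask


mask = band_mask(n_features=8, n_hidden=4, bandwidth=3, stride=2)
# Unit 0 sees bins 0-2, unit 1 bins 2-4, unit 2 bins 4-6, unit 3 bins 6, 7, 0.
print(np.flatnonzero(mask[:, 3]))  # [0 6 7]

# Assumed usage: the same mask multiplies every frame-offset matrix W_u.
rng = np.random.default_rng(0)
w = rng.standard_normal((5, 8, 4))   # (2n + 1, features, hidden) with n = 2
masked_w = w * mask[np.newaxis, :, :]
print(np.count_nonzero(masked_w))    # 60 = 5 offsets x 4 units x 3 bins
```

Multiplying by the mask keeps the weight tensor dense in memory; only the `bandwidth` entries per hidden unit and offset can be non-zero, which is where the parameter savings come from.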

The authors evaluate MCLNN on four widely used environmental sound datasets: UrbanSound8K, YorNoise, ESC‑10, and ESC‑50. Audio recordings are resampled to 44.1 kHz, transformed into 128‑dimensional log‑Mel spectrograms using a 1024‑point FFT, and normalized. For each spectrogram frame, a context of five consecutive frames (current ±2) is fed into the network. The MCLNN architecture consists of two to three masked layers, each with 256 hidden units, followed by a soft‑max classifier. Training employs the Adam optimizer (learning rate = 0.001) with early stopping based on validation loss.
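The normalization and context-window step can be sketched as follows. The sketch starts from an already computed log-Mel spectrogram (for example one produced by an audio library such as librosa with a 1024-point FFT and 128 Mel bands). Per-bin z-score normalization and dropping edge frames that lack a full ±2 context are assumptions, since the summary specifies neither; the helper names `standardize` and `context_windows` are hypothetical. In practice the normalization statistics would usually be computed on the training split only.

```python
import numpy as np


def standardize(spec, eps=1e-8):
    """Z-score each Mel bin over time; spec has shape (frames, n_mels)."""
    mean = spec.mean(axis=0, keepdims=True)
    std = spec.std(axis=0, keepdims=True)
    return (spec - mean) / (std + eps)


def context_windows(spec, n=2):
    """Stack each frame with its n neighboring frames on either side.

    Returns (frames - 2n, 2n + 1, n_mels). Frames near the clip edges that
    lack a full context are dropped (an assumption; padding is an option).
    """
    frames = spec.shape[0]
    return np.stack([spec[t - n:t + n + 1] for t in range(n, frames - n)])


# Usage with a random stand-in for a real 128-band log-Mel spectrogram.
rng = np.random.default_rng(0)
log_mel = rng.standard_normal((100, 128))
segments = context_windows(standardize(log_mel), n=2)
print(segments.shape)  # (96, 5, 128)
```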

Results show that MCLNN achieves 78.3 % accuracy on UrbanSound8K and 84.5 % on ESC‑50, surpassing state‑of‑the‑art CNN baselines (77.1 % and 83.2 % respectively) and traditional hand‑crafted feature pipelines (73.4 % and 80.1 %). Notably, the model exhibits strong robustness to low‑frequency noise, a direct consequence of the band‑wise sparsity imposed by the mask. Compared with an unmasked CLNN, MCLNN reduces the total number of trainable parameters by roughly 30 % while delivering a 1‑2 % boost in classification accuracy, indicating that the structured sparsity mitigates over‑fitting and improves generalization.

A series of ablation studies further clarify the impact of the mask. Varying bandwidth from 3 to 7 and stride from 1 to 4 reveals that moderate values (bandwidth ≈ 5, stride ≈ 2) yield the best trade‑off between expressive power and parameter efficiency. Removing the mask altogether drops performance to 76.5 % on UrbanSound8K, confirming the mask’s pivotal role. The authors acknowledge that mask hyper‑parameters are currently set manually; they propose future work on meta‑learning or reinforcement‑learning strategies to automatically discover optimal mask configurations and to extend the approach to speech, music, and multimodal audio‑visual tasks.

In summary, the Masked Conditional Neural Network offers a principled way to embed frequency‑band awareness and temporal context into deep models for audio. By marrying the filter‑bank intuition of classical signal processing with the representation learning capacity of neural networks, MCLNN delivers competitive, often superior, performance on environmental sound recognition while reducing model complexity and alleviating the need for handcrafted feature engineering.
