Audio style transfer

'Style transfer' among images has recently emerged as a very active research topic, fuelled by the power of convolution neural networks (CNNs), and has become fast a very popular technology in social media. This paper investigates the analogous probl…

Authors: Eric Grinstein, Ngoc Duong, Alexey Ozerov

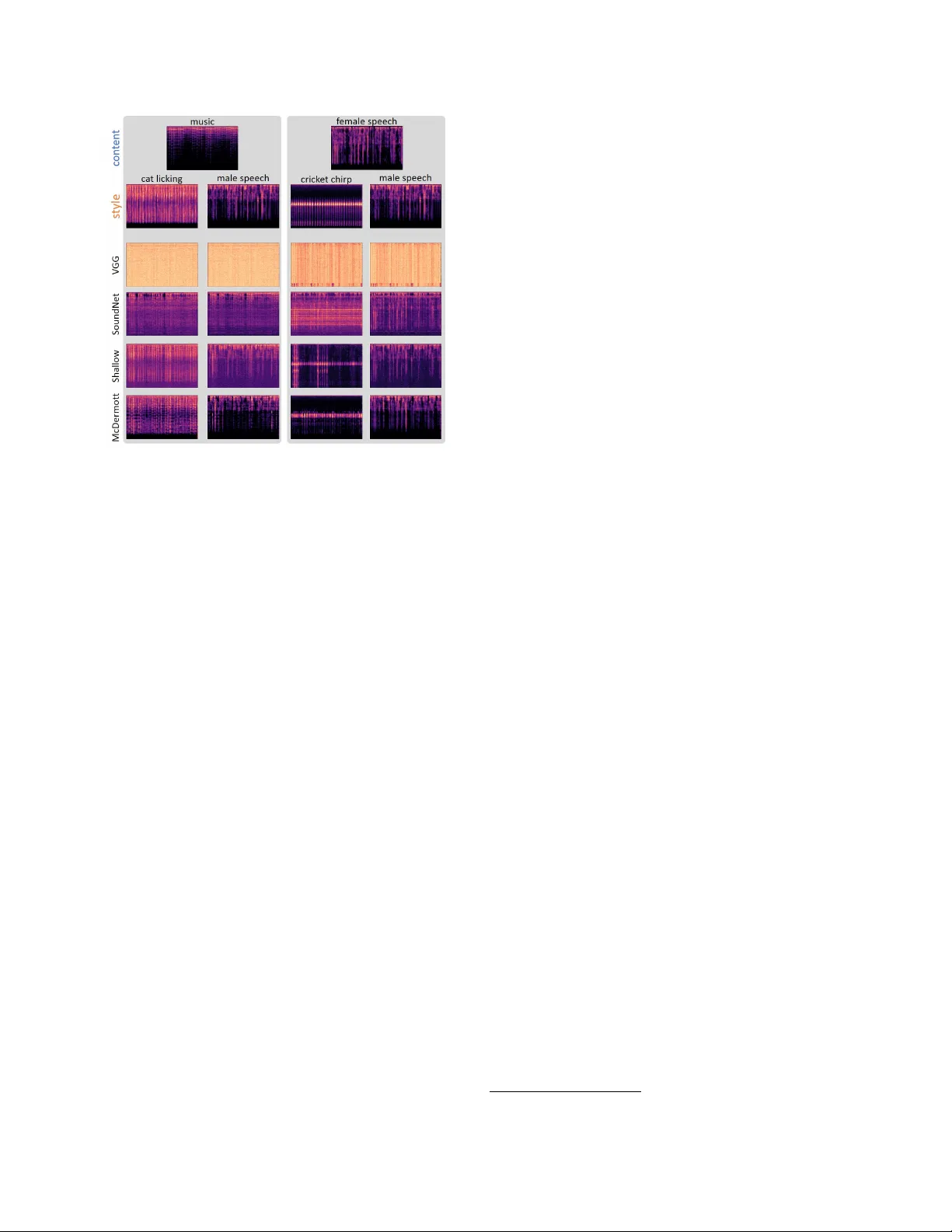

A UDIO STYLE TRANSFER Eric Grinstein, Ngoc Q. K. Duong, Ale xey Ozer ov and P atrick P ´ er ez T echnicolor 975 av enue des Champs Blancs, CS 17616, 35576 Cesson S ´ evign ´ e, France eric.grinstein@outlook.com, { quang-khanh-ngoc.duong, firstname.lastname } @technicolor .com ABSTRA CT “Style transfer” among images has recently emerged as a v ery activ e research topic, fuelled by the power of conv olution neural networks (CNNs), and has become fast a very popu- lar technology in social media. This paper in vestigates the analogous problem in the audio domain: Ho w to transfer the style of a reference audio signal to a target audio content ? W e propose a flexible frame work for the task, which uses a sound texture model to extract statistics characterizing the reference audio style, followed by an optimization-based au- dio texture synthesis to modify the target content. In contrast to mainstream optimization-based visual transfer method, the proposed process is initialized by the target content instead of random noise and the optimized loss is only about texture, not structure. These dif ferences prov ed k ey for audio style transfer in our experiments. In order to extract features of interest, we in vestigate different architectures, whether pre- trained on other tasks, as done in image style transfer , or en- gineered based on the human auditory system. Experimental results on dif ferent types of audio signal confirm the potential of the proposed approach. Index T erms — Audio style transfer , sound texture model, texture synthesis, deep neural network, auditory system. 1. INTR ODUCTION AND RELA TED WORK Both visual texture synthesis, whose goal is to synthesize a natural looking texture image from a giv en sample, and vi- sual texture transfer, which aims at wea ving the reference te x- ture within a tar get photo, have been long studied in computer vision [1, 2]. Recently , within the era of con volutional neu- ral networks (CNNs), these subjects have been revisited with great success. In their seminal w ork, Gatys et al. [3] exploit the deep features provided by a pre-trained object recognition CNN to transfer by optimization the “style” (mainly texture at various scales and color palette) of a painting to a photo- graph whose layout and structure are preserved. The result is a pleasing painterly depiction of the original scene. This is a form of e xample-based non-photorealistic rendering, which is no w av ailable in many social applications. Since then, the Fig. 1 . A udio style transfer : Drawing analogy from example-based image stylisation. work of Gatys et al. has spark ed a great deal of research, see recent revie w on neural style transfer [4]. Moving tow ard audio, sound textur e can be understood as the global temporal homogeneity of acoustic ev ents [5], while the sound color palette may be seen as a set of most represen- tativ e spectral shapes. Note that model-based natural sound texture synthesis has also been extensi vely studied in the lit- erature [5, 6]. Closer to audio style transfer , a cross-synthesis method that hybridizes two sounds by simply multiplying the short term spectrum of one sound by a short term spectral en velop of another sound was proposed by Serra [7]. More recently , Collins and Sturm proposed a dictionary-based ap- proach for sound morphing, where sound textures can be rep- resented by the dictionary’ s atoms [8]. T o date, thanks to the power of CNNs, data-driv en approaches hav e gained a lot in performance allo wing high quality synthesis of complicated audio such as speech [9] and music [10]. Beyond texture syn- thesis and in analogy to image style transfer , some attention has been recently giv en to audio style transfer, notably in the form of exploratory work presented in blog posts [11, 12]. These initial in vestigations showed that the original algorithm proposed in [3] for image is not fully adapted to audio sig- nals. The result sounds often as a mixture of the style and content sounds [11] rather than as a stylized content. Another attempt was done to apply style transfer for prosodic speech [13]. The authors concluded that their approach, while allow- ing the transfer of low-le vel textural features, had a difficulty in transferring high-le vel prosody such as emotion or accent. Finally , regarding music, it is w orth to mention a pioneering work by Pachet [14], where the same music piece is auto- matically re-orchestrated in dif ferent styles. Ho wever , this re- orchestration is performed in the domain of symbolic music description (music scores) and not in the raw audio domain, as we consider here. While visual style transfer provides ne w means to create compelling pictures for professionals and consumers, a suc- cess in audio signals would similarly open ambitious appli- cations including professional audio editing, music creation, sound design and movie post-production (including dubbing). In this paper we present our contribution to this important new topic. In particular , we adapt the frame work of Gatys et al. with a new way of initializing the optimization process and a simplified loss (style only). T o extract the style statistics and to define the loss accordingly , we in vestigate the use of dif fer- ent CNN architectures, inspired by those used in image style transfer but adapted to audio signals. In addition, we explore the use of an auditory-motiv ated sound texture model. The rest of the paper is organized as follo ws. Section 2 discusses the audio style transfer problem together with our view on what could be audio style . Section 3 presents the proposed framework, which allows the use of different audio texture models and associated optimization-based synthesis algorithms in a flexible way . Experimental results are dis- cussed in Section 4. Finally , we conclude in Section 5. 2. PR OBLEM SETTING AND DISCUSSION Gatys et al. [3] introduced the concept of neural style transfer between images, with impressi ve results on transfering col- ors and paint strokes from a painting to a photograph. In this framew ork, starting from a content image and a style image, the corresponding deep feature maps related to content and to style are extracted and stored. The problem consists in computing a new image that matches well the content fea- tures and rele vant statistics of style features. Starting from a random white noise image, this is obtained through iterativ e minimization of a suitable two-fold loss. As a result, the final image retains the global structure of the content image while borrowing te xtures and colors from the style image. A preliminary work on audio style transfer by Ulyanov and Lebede v [11] relies on a similar optimization framew ork, though using a wide, shallo w , random network (a single layer with 4096 random filters) rather than a deep pre-trained one as in visual transfer . More specifically , a 1D audio wav eform is transformed into a 2D representation using the short time Fourier transform (STFT). The resulting spectrogram repre- sentation, when discarding phase information, can be vie wed as a 2D image for processing. Ho wev er, it is still processed as a 1D signal of vector (frequency) observations. In other words, the color channels in image case are replaced by fre- quency channels. The spectrogram to be computed is initial- ized as random noise and iterati vely updated to minimize a loss function between its features and those e xtracted from Fig. 2 . Proposed audio style transfer framework : Giv en an audio texture e xtraction model (artificial neural net or au- ditory model), the content sound is iterati vely modified such that its audio texture matches well the one of the style sound. If required by texture model, raw signals are mapped to and from a suitable representation space by pre/post-processing. the content and style sounds. The results obtained with this method are still limited as the preservation of content and style remains unclear . Note that though the notions of style and content are not formally defined and are context-dependent (depending on both the task and the data), researchers working on visual style transfer seem to agree on the following: “Style” refers mostly to the space-inv ariant intra-patch statistics, i.e. , to the texture at se veral spatial scales, and to the distribution of col- ors a.k.a. the color palette; “Content” encompasses the broad structure of the scene, that is, its semantic and geometric lay- out [3, 4]. In audio, the notions of style and content are e ven harder to define and would depend more on the context. For speech for instance, content may refer to the linguistic infor- mation like phonemes and words while style may relate to the particularities of the speaker such as speaker’ s identity , intonation, accent, and/or emotion. For music, on the other hand, content could be some global musical structure (includ- ing, e.g . , the score played and rhythm) while style may refer to the timbres of musical instruments and musical genre. Fig. 1 depicts the analogy between image style transfer explored in computer vision (first ro w), and audio style transfer yet to be explored (second ro w). 3. PR OPOSED FRAMEWORK A general workflo w of the proposed audio style transfer framew ork is depicted in Fig. 2. It consists in two stages: (1) Style extraction and (2) Style transfer . In more detail, first the content and style wa veforms are pre-processed, if required by subsequent steps, so as to obtain a desired sig- nal representation. As an example, they can be transformed into 2D spectrogram representations by the STFT . Then the texture statistics of these signal representations are extracted by a sound texture model, which can be, e.g. , a neural net- work or an engineered perceptual model. Given these text ure statistics, an optimization algorithm is used to synthesize the representation of the final signal. If this representation is not in the wa veform domain, a final post-processing is required to recov er and play back the newly synthesized audio signal. Note that this framework dif fers from existing optimization- based visual and audio style transfer approaches [3, 15, 11] in two related ways: It does not make use of a content loss and it does not initialize the signal to be optimized with a random noise. Instead, we propose to manipulate directly the content sound representation so as it gradually incorporates style te x- ture into it. In other words, the new audio is initialized by the content sound and is modified to minimize a style loss only . W e found this to be a key factor for producing compelling results, after numerous trials with random noise initialization and two-fold losses, following existing approaches. W e note that despite the absence of content loss, the global structure of the content is nonetheless preserved in the final signal. Started from the content, the iterative optimization con verges to a local minimum with similar structure. This preserv ation of the structure could be further enforced with a content loss, but it did not appear necessary in our experiments while be- ing potentially harmful (making gradient descent less able to mov e away from content initialization). In the follo wing, we present two types of sound texture models that we in vestigated. 3.1. Neural network-based appr oach Motiv ated by the works in image style transfer , we in vesti- gated the use of neural networks for audio style transfer . Giv en the representation x of an input audio signal (raw signal or spectrogram), a CNN is used as follows to extract statistics that characterize stationary sound textures. Denot- ing F ` = [ f `,k ] K ` k =1 the matrix of the K ` (vectorized) activ ation maps at layer ` of the network, Gatys et al. proposed to use as statistics the Gram matrices G ` = F > ` F ` at several layers ` ∈ L of the network. 1 The style loss to minimize is: L ( x ; x sty ) = X ` ∈ L G ` ( x ) − G ` ( x sty ) 2 F , (1) where x sty is the style audio. W e minimize this loss by gra- dient descent started at the content audio x cont . Each gradient step requires to back-propagate from concerned acti vations all the way to network input x . W ith this approach, we tested sev eral neural nets. V GG-19 [16] This is a well-known deep neural network de- signed and trained for image classification. Gatys et al. used it to e xtract features for image style transfer . As a 2D spectro- gram can be viewed as an image, we first tried the VGG-19 1 Equiv alently , this Gram matrix is, up to a factor and a shift, the space- in variant cross-channel co variance matrix of acti vations. architecture directly , taking features at the output of the five con volutional blocks, to see ho w it performs for audio style transfer . In this case x is obtained by transforming the 1D wa veform into a 2D spectrogram representation by the STFT . In order to adapt to the input format of VGG, we replicate the spectrogram three times to obtain an RGB-like image. After optimization, we av eraged the three RGB channels to obtain the final spectrogram. The missing phase information is es- timated by Griffin & Lim’ s algorithm [17], yielding the final sound wa veform. SoundNet [18] This is a fully con volutional deep network that is specifically designed and learned on a large amount of unlabeled videos (including sounds). The resulting sound features hav e shown state-of-the-art performance on standard benchmarks for acoustic scene/object classification. With this architectures, x is a raw wa veform and Gram matrices are computed for all 8 con volutional layers. Wide-Shallo w-Random network Recent work on image style transfer showed that untrained shallow neural nets can be used to extract style statistics [19, 20, 21]. This idea was also considered for audio in the preliminary work by Ulyanov and Lebedev [11]. W e use the same setting, one-layer CNN with 4096 random filters and conv olution only performed along the temporal axis, taking 2D spectrograms as input. 3.2. A uditory-based approach In the CNN-based approach, the sound texture model is data- driv en, at least in case of VGG and SoundNet, which are pre-trained. Though CNNs are v ery powerful in general, it is quite difficult to interpret the resulting statistics. Thus we in vestigate an alternative approach where the sound texture model has been designed by experts of auditory perception. Our approach strongly relies on the sound texture analysis and synthesis system proposed by McDermott and Simon- celli [5]. This texture model emulates the human auditory system through three sound processing steps (which can also be viewed as three layers of a hand-crafted neural network): cochlear filtering, en velope extraction and compressiv e non- linearity , and modulation filtering. The first layer uses 30 bandpass cochlear filters to decompose the wav eform into acoustic frequency bands. The second layer extracts the en- velope of each frequenc y band computed in the first step and applies a compressiv e nonlinearity to it. The third layer fur- ther decomposes each compressed env elope by 20 bandpass modulation filters. These modulation filters are argued to be conceptually similar to coachlear filters, except that they op- erate on the compressed env elopes rather than on the sound wa veform as in the first decomposition layer . W ithin this texture model, statistics used for capturing audio style are as follo ws (see [5] for details): V ariance of each coahlear filter band in the first layer; Mean, variance, ske wness of each env elope band and cross-band correlation in the second layer; Power from each modulation band and Fig. 3 . Result samples : Spectrogram of the content sound (1st row), of the style sound (2nd row), and of the results obtained by different sound te xture models (3rd to 6th rows). cross-band correlation in the third layer . These statistics were shown to be suited for summarizing stationary sounds like textures over a moderate time scale. For synthesizing the sound signal from desired statistics, a variant of gradient de- scent is performed, starting from random noise signal. It min- imizes a loss analogous to (1) but with additional moments to match beside the v ariances and correlations captured by Gram matrices. [5]. Our frame work shown in Fig. 2 pro- ceeds similarly , using statistics of style audio as goal, but starting from the content sound so that its statistics progres- siv ely become closer to the desired ones. Note that in this approach, the wa veform signals are processed directly , thus the pre-processing and post-processing blocks in Fig. 2 are not needed as in the case of SoundNet. 4. EXPERIMENTS W e ev aluated the proposed framew ork using the four different sound texture models presented in previous section – VGG-19 CNN, SoundNet CNN, Shallow CNN (short for wide, shal- low and random) and McDermott auditory model –, with se v- eral types of content and style sounds. Our subjecti ve tests hav e confirmed that initializing the optimization by the con- tent sound itself yields much more meaningful results com- pared to the con ventional workflow where a random n oise signal is used instead in conjunction with a content loss. The results obtained by the latter approach are actually inline with the observations in [11]. Fig. 3 shows the spectrograms of some content sounds (piano piece of Pachelbel’ s Canon in D and speech of a w oman speaking French), of the style sounds (male speech and simple te xtures like cat licking milk and crickets chirping), and of the results obtained by the four tex- ture models. The corresponding audio files together with few more examples are av ailable for informal listening on the sup- porting webpage. 2 As can be seen and heard from the examples, the results obtained by VGG are extremely noisy and far from our expec- tations. This is comprehensible since VGG is designed and trained for image description. SoundNet offers some mean- ingful results, but the produced sounds contain a substantial noise and they seem not appealing in terms of style insertion. Surprisingly , the shallow net with random filters works far better than SoundNet and in many cases it succeeds in insert- ing local te xture from the style sound into the global struc- ture of the content sound. McDermott’ s model, which is de- signed to model human auditory system, produces compelling results. T o better understand and interpret the results, one can see in the last ro w of Fig. 3 that in the case of music content, the attacks of piano notes are preserved in the transformed signal while the local texture of the style sound is introduced in synchronicity with these attacks. Using the “cat licking milk” as style and music as content, one can hear a “cat lick” at every onset of the piano notes. Finally , when using speech as style and piano music as content, one can feel as if the melody is hummed by the speaker e ven though the phonemes are lost. One can also hear and see that while the shallow net seems to place the style texture in the attacks more precisely , McDermott’ s model recreates better the local texture. Again in the example of the “cat licking milk” style, indi vidual licks are placed with better precision for the shallo w net, b ut we perceiv e them more as whips rather than licks. On the other hand, McDermott’ s model places licks less frequently in the resulting sound, but the y are more recognizable by listening. 5. CONCLUSION In this work, we have proposed a general frame work for au- dio style transfer . For extracting the required audio texture statistics, we inv estigated the use of different neural network architectures as well as of a handcrafted model inspired by the human auditory system. Experiments sho wed that the lat- ter and a shallo w random net were the tw o able to produce promising results. Importantly , we found that initializing the iterativ e optimization by the content sound itself, and discard- ing the content loss, plays an important role in obtaining these results. This research topic is nascent and, at this stage, nu- merous aspects related to the design of relev ant sound te xture models should be further inv estigated in future work. Also the fundamental question of “what should be the style to transfer and what should be the content to pr eserve?” is still largely open for future discussion. 2 https://egrinstein.github.io/2017/10/25/ast. html 6. REFERENCES [1] Alexei A Efros and Thomas K Leung, “T exture syn- thesis by non-parametric sampling, ” in Computer V i- sion, 1999. The Pr oceedings of the Se venth IEEE Inter - national Confer ence on . IEEE, 1999, vol. 2, pp. 1033– 1038. [2] Alexei A. Efros and William T . Freeman, “Image quilt- ing for texture synthesis and transfer , ” in Pr oceedings of the 28th Annual Confer ence on Computer Graphics and Inter active T echniques , Ne w Y ork, NY , USA, 2001, SIGGRAPH ’01, pp. 341–346, A CM. [3] Leon A Gatys, Alexander S Eck er , and Matthias Bethge, “Image style transfer using conv olutional neural net- works, ” in Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , 2016, pp. 2414–2423. [4] Y ongcheng Jing, Y ezhou Y ang, Zunlei Feng, Jingwen Y e, and Mingli Song, “Neural style transfer: A revie w , ” arXiv pr eprint arXiv:1705.04058 , 2017. [5] Josh H. McDermott and Eero P . Simoncelli, “Sound tex- ture perception via statistics of the auditory periphery: Evidence from sound synthesis, ” Neur on , v ol. 71, no. 5, pp. 926 – 940, 2011. [6] Joan Bruna and St ´ ephane Mallat, “ Audio texture synthesis with scattering moments, ” arXiv preprint arXiv:1311.0407 , 2013. [7] X. Serra, A system for sound analy- sis/transformation/synthesis based on a deterministic plus stochastic decomposition , Ph.D. thesis, Stanford Univ ersity , 1989. [8] N. Collins and B. L. Sturm, “Sound cross-synthesis and morphing using dictionary-based methods, ” in Pr oceed- ings of the International Computer Music Confer ence , 2011, pp. 595–601. [9] Aaron van den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol V inyals, Alex Graves, Nal Kalchbrenner , Andre w Senior , and Koray Kavukcuoglu, “Wav eNet: A generativ e model for raw audio, ” arXiv pr eprint arXiv:1609.03499 , 2016. [10] Jesse Engel, Cinjon Resnick, Adam Roberts, Sander Dieleman, Douglas Eck, Karen Simonyan, and Mo- hammad Norouzi, “Neural audio synthesis of musi- cal notes with Wav eNet autoencoders, ” arXiv pr eprint arXiv:1704.01279 , 2017. [11] Dmitry Ulyanov and V adim Lebedev , “ Audio te x- ture synthesis and style transfer , ” 2016, http:// tinyurl.com/y844x8qt . [12] Davis F oote, Daylen Y ang, and Mostafa Rohaninejad, “Do androids dream of electric beats?, ” 2016, http: //tinyurl.com/yb5ww2tw . [13] Anthony Perez, Chris Proctor , and Archa Jain, “Style transfer for prosodic speech, ” T ech. Rep., Stanford Uni- versity , 2017. [14] Franc ¸ ois Pachet, “ A joyful ode to automatic orches- tration, ” A CM T ransactions on Intelligent Systems and T echnology (TIST) , v ol. 8, no. 2, pp. 18, 2016. [15] Fujun Luan, Sylvain Paris, Eli Shechtman, and Kavita Bala, “Deep photo style transfer, ” arXiv preprint arXiv:1703.07511 , 2017. [16] Karen Simonyan and Andre w Zisserman, “V ery deep con volutional networks for large-scale image recogni- tion, ” arXiv pr eprint arXiv:1409.1556 , 2014. [17] D. Grif fin and Jae Lim, “Signal estimation from mod- ified short-time fourier transform, ” IEEE T ransactions on Acoustics, Speech, and Signal Pr ocessing , v ol. 32, no. 2, pp. 236–243, Apr 1984. [18] Y usuf A ytar, Carl V ondrick, and Antonio T orralba, “Soundnet: Learning sound representations from unla- beled video, ” pp. 892–900, 2016. [19] Ivan Ustyuzhaninov , Wieland Brendel, Leon A Gatys, and Matthias Bethge, “T exture synthesis using shal- low con volutional networks with random filters, ” arXiv pr eprint arXiv:1606.00021 , 2016. [20] Gilles Puy , Srdan Kitic, and Patrick P ´ erez, “Unifying lo- cal and non-local signal processing with graph CNNs, ” arXiv pr eprint arXiv:1702.07759 , 2017. [21] Kun He, Y an W ang, and John Hopcroft, “ A po werful generativ e model using random weights for the deep im- age representation, ” in Advances in Neural Information Pr ocessing Systems , 2016, pp. 631–639.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment