GPU-based Efficient Join Algorithms on Hadoop

The rapid growth of data puts tremendous pressure on query processing and storage, so many studies use GPUs to accelerate the join operation, one of the most important operations in modern database systems. However, existing GPU-accelerated join techniques are poorly suited to joins over big data. This paper therefore combines Hadoop with GPUs to speed up nested loop join, hash join, and theta join; it is also the first to use a GPU to accelerate theta join. After pre-filtering and pre-processing the data with Hadoop's Map-Reduce and HDFS, the proposed approach can handle larger tables than existing GPU acceleration methods. Map-Reduce also lets the algorithm estimate the number of results more accurately and allocate appropriately sized storage without unnecessary cost, which makes it more efficient. Experiments show that the proposed method achieves a 1.5 to 2 times speedup over the traditional GPU-accelerated equi join algorithm. On the synthetic data set, the GPU version of the proposed method achieves a 1.3 to 2 times speedup over the CPU version.

💡 Research Summary

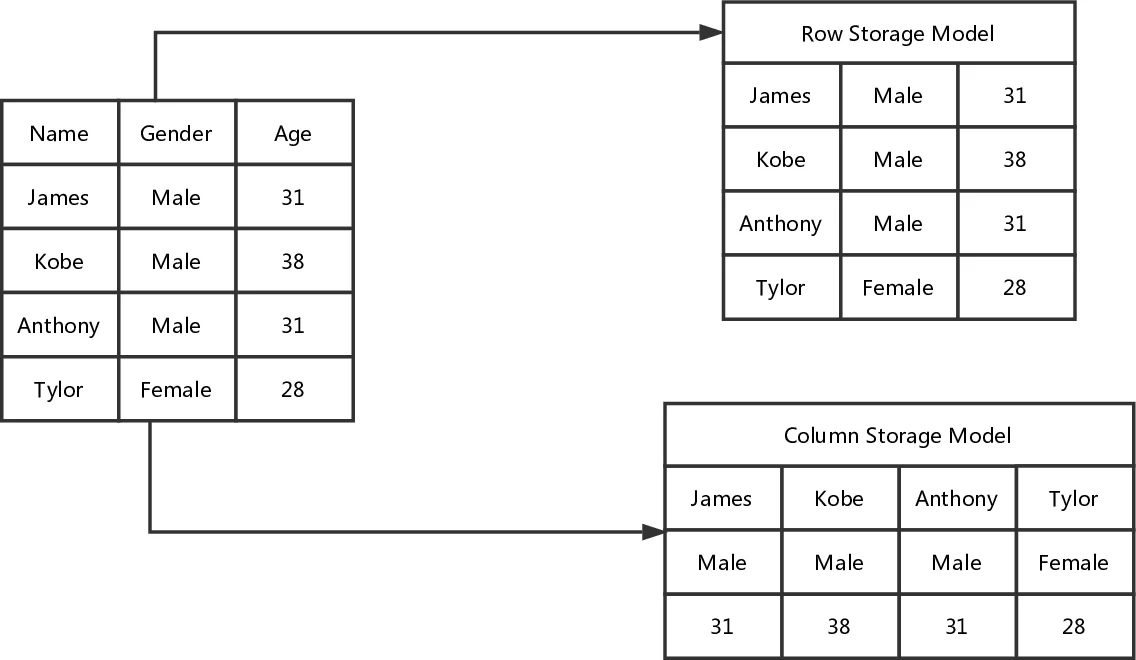

The paper addresses the growing challenge of processing massive data sets by accelerating join operations—one of the most time‑consuming tasks in relational databases—through a combination of Hadoop’s distributed framework and GPU’s massive parallelism. The authors first motivate the need for hardware acceleration, noting that CPU clock speeds have plateaued and that most prior GPU‑based join research focuses on small (megabyte‑scale) data and simple equi‑joins. They then provide background on GPU architecture, CUDA programming, and Hadoop’s HDFS and Map‑Reduce model, setting the stage for their proposed system.

The core contributions are threefold. First, the authors implement three join algorithms on the GPU: nested‑loop join, hash join, and, notably, theta (non‑equi) join, which has not been previously accelerated on GPUs. Second, they introduce a two‑stage Map‑Reduce pre‑filtering pipeline that extracts join keys from both tables, tags their origin, and retains only those keys that appear in both tables. In the second stage, the retained keys are loaded into a hash table on the GPU, and the original tables are rescanned so that only matching tuples are sent to the GPU kernels. This dramatically reduces data transfer volume and GPU memory consumption, and it enables the system to estimate the size of the join result in advance, allowing appropriate buffer allocation on the GPU. Third, they propose a simple result‑size estimation method that guides storage allocation and avoids over‑provisioning.
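The pre-filtering idea can be illustrated with a minimal single-process sketch in plain Python. This is not the authors' Hadoop code: the function names, the tuple layout (join key in position 0), and the tags `"R"` and `"S"` are assumptions made for illustration. The size estimate shown, the sum of per-key count products, is one plausible way to size an equi-join result from the stage-one counts; the summary does not say which estimator the paper actually uses.

```python
from collections import defaultdict

def stage1_common_keys(r_rows, s_rows, key=lambda row: row[0]):
    """Stage 1: emit (join_key, origin_tag) pairs, then keep keys seen in both tables."""
    counts = defaultdict(lambda: {"R": 0, "S": 0})
    # "Map" phase: tag every join key with the table it came from.
    for tag, rows in (("R", r_rows), ("S", s_rows)):
        for row in rows:
            counts[key(row)][tag] += 1
    # "Reduce" phase: retain only keys that occur on both sides,
    # together with their per-table occurrence counts.
    return {k: (c["R"], c["S"]) for k, c in counts.items() if c["R"] and c["S"]}

def stage2_filter(rows, common, key=lambda row: row[0]):
    """Stage 2: rescan a table and keep only tuples whose key survived stage 1."""
    return [row for row in rows if key(row) in common]

def estimate_equi_join_size(common):
    """Assumed estimator: each surviving key yields count_R * count_S output tuples."""
    return sum(count_r * count_s for count_r, count_s in common.values())

# Hypothetical toy tables: (join_key, payload).
R = [(1, "a"), (2, "b"), (2, "c"), (5, "d")]
S = [(2, "x"), (2, "y"), (3, "z"), (5, "w")]

common = stage1_common_keys(R, S)
r_kept = stage2_filter(R, common)
s_kept = stage2_filter(S, common)
size = estimate_equi_join_size(common)
```

In this toy run, keys 1 and 3 are dropped before anything would be shipped to the GPU, and the output buffer can be sized from `size` before the join kernel starts.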

On the GPU side, the kernels are carefully designed to match each join type. For nested‑loop join, outer‑table blocks are assigned to thread blocks while the inner table is loaded into shared memory for fast comparisons. The hash join builds a hash table for the smaller relation in global memory and streams the larger relation, probing the hash table in parallel. The theta join uses a two‑dimensional grid where each thread processes a specific (i, j) tuple pair, handling arbitrary comparison operators (>, <, ≥, ≤) efficiently by leveraging shared memory and minimizing global memory accesses. The authors also discuss how they minimize synchronization and exploit CUDA’s coalesced memory accesses.
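The work decomposition behind two of these kernels can be emulated sequentially in Python. The sketch below is a hedged illustration rather than the paper's CUDA code: `theta_join_grid` and `hash_join` are hypothetical names, the flat loop index stands in for a thread index in a two-dimensional launch, and shared-memory tiling and coalescing are deliberately left out.

```python
import operator

def theta_join_grid(r_keys, s_keys, op):
    """Emulate a 2D grid: logical thread (i, j) tests op(r_keys[i], s_keys[j])."""
    m = len(s_keys)
    matches = []
    # Each flat id corresponds to one logical GPU thread.
    for tid in range(len(r_keys) * m):
        i, j = divmod(tid, m)  # recover the (row, column) pair for this thread
        if op(r_keys[i], s_keys[j]):
            matches.append((i, j))
    return matches

def hash_join(build_keys, probe_keys):
    """Build a hash table on the smaller relation, then probe with the larger one."""
    table = {}
    for i, k in enumerate(build_keys):
        table.setdefault(k, []).append(i)
    # On a GPU each probe would be an independent thread; here it is a loop.
    return [(i, j) for j, k in enumerate(probe_keys) for i in table.get(k, [])]

# Theta join with the "<" operator on hypothetical key columns.
lt_pairs = theta_join_grid([1, 5, 3], [2, 4], operator.lt)

# Equi-join via build and probe; pairs are (build_index, probe_index).
eq_pairs = hash_join([2, 5], [5, 2, 2, 7])
```

Because every `(i, j)` test is independent, the theta join maps naturally onto one thread per pair, whereas the hash join avoids the quadratic pair space but only works for equality predicates.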

Experimental evaluation is performed on synthetic data and a real‑world benchmark (e.g., TPC‑DS). The hardware platform consists of an NVIDIA GTX 1080 Ti GPU, an 8‑core Xeon CPU, and a four‑node Hadoop cluster. Results show that the proposed GPU‑accelerated equi‑join outperforms a traditional GPU‑based equi‑join implementation by 1.5–2×, and that on the synthetic data set the GPU version of the proposed method is 1.3–2× faster than the CPU version. The theta‑join acceleration is particularly notable, achieving up to 1.8× speedup over the CPU baseline. The two‑stage pre‑filtering reduces the amount of data sent to the GPU to less than 20 % of the original size, and the result‑size estimator allows the system to allocate just enough GPU memory, avoiding costly reallocations.

Despite these promising results, the paper has several limitations. The experimental setup lacks detailed specifications (exact GPU model, memory size, network bandwidth, Hadoop configuration), making reproducibility difficult. The authors do not compare their approach against recent GPU‑accelerated data‑processing frameworks such as RAPIDS, OmniSci, or GPU‑enabled Spark, nor do they isolate the overhead contributed by HDFS and Map‑Reduce from the pure join computation. The result‑size estimation is described qualitatively without a formal cost model or error analysis, leaving its practical reliability uncertain. Moreover, scalability to multiple GPUs or larger clusters is not explored, and strategies for handling GPU memory limits (e.g., partitioned joins) are absent. The manuscript also suffers from numerous typographical and formatting errors, which detract from its readability.

In conclusion, the paper presents an interesting integration of Hadoop and GPU to accelerate both equi‑ and non‑equi‑joins on large data sets, demonstrating measurable speedups and introducing a novel theta‑join GPU implementation. However, to solidify its contribution, future work should provide a rigorous cost model, broader comparative benchmarks, and a thorough scalability analysis across multi‑GPU and multi‑node environments.
