Smooth minimization of nonsmooth functions with parallel coordinate descent methods

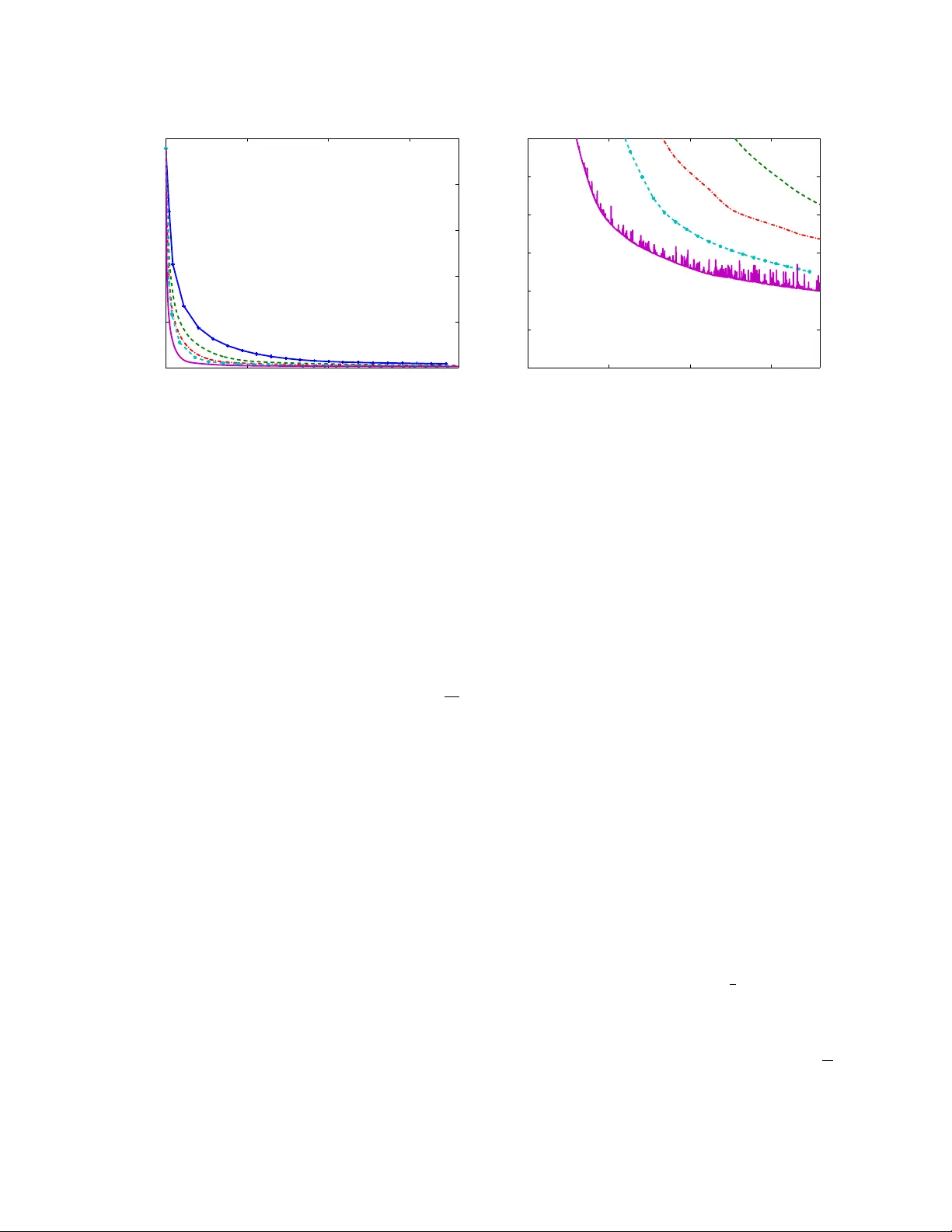

We study the performance of a family of randomized parallel coordinate descent methods for minimizing the sum of a nonsmooth convex function and a separable convex function. The problem class includes as a special case L1-regularized L1 regression and the minimizatio…

Authors: Olivier Fercoq, Peter Richtarik