Transformation Properties of Learned Visual Representations

When a three-dimensional object moves relative to an observer, a change occurs on the observer’s image plane and in the visual representation computed by a learned model. Starting with the idea that a good visual representation is one that transforms linearly under scene motions, we show, using the theory of group representations, that any such representation is equivalent to a combination of the elementary irreducible representations. We derive a striking relationship between irreducibility and the statistical dependency structure of the representation, by showing that under restricted conditions, irreducible representations are decorrelated. Under partial observability, as induced by the perspective projection of a scene onto the image plane, the motion group does not have a linear action on the space of images, so that it becomes necessary to perform inference over a latent representation that does transform linearly. This idea is demonstrated in a model of rotating NORB objects that employs a latent representation of the non-commutative 3D rotation group SO(3).

💡 Research Summary

The paper investigates how visual representations should behave under three‑dimensional motions of objects relative to an observer. The authors argue that a “good” representation is one that transforms linearly (i.e., equivariantly) under the underlying scene transformations, such as those in the rigid‑body group SE(3). Using group representation theory, they show that any such representation is equivalent, up to a change of basis, to a direct sum of irreducible (elementary) representations. This decomposition is not merely algebraic: under restricted statistical conditions it yields a representation whose components are decorrelated, and in the dynamical model discussed later in this summary they are conditionally independent.

First, the paper formalizes the scene as a function x : ℝ³ → ℝᴷ and defines the action of a group element g ∈ SE(3) by [T(g)x](p) = x(g⁻¹p). The operator T(g) is linear and satisfies the homomorphism property T(g)T(h) = T(gh), which makes T a group representation. By a classic theorem, any unitary representation is equivalent (via a change of basis) to a block‑diagonal matrix whose blocks are irreducible representations. The authors emphasize three practical consequences: (1) computational efficiency, (2) a precise definition of “disentangled” representations, and (3) the ability to construct invariant features from polynomial invariants of the irreducible blocks.
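The homomorphism property and the block‑diagonalization can be checked numerically on a much simpler group than SE(3). The sketch below is an illustration, not the paper's code: it assumes the cyclic group C_N acting on length‑N signals by circular shifts (a discrete analogue of [T(g)x](p) = x(g⁻¹p)), and uses the unitary DFT matrix as the change of basis. The names `shift_matrix`, `F` and `T_hat` are made up for this example.

```python
import numpy as np

N = 8  # size of the cyclic group C_N (an illustrative choice)

def shift_matrix(g: int, n: int = N) -> np.ndarray:
    """Permutation matrix T(g) with (T(g) x)[p] = x[(p - g) mod n]."""
    T = np.zeros((n, n))
    for p in range(n):
        T[p, (p - g) % n] = 1.0
    return T

# Unitary DFT matrix: the change of basis to the irreducible representations of C_N.
F = np.fft.fft(np.eye(N)) / np.sqrt(N)

g, h = 3, 6

# Homomorphism property T(g) T(h) = T(gh); the group law here is addition mod N.
homomorphism_ok = np.allclose(
    shift_matrix(g) @ shift_matrix(h), shift_matrix((g + h) % N)
)

# In the Fourier basis T(g) becomes diagonal: N blocks of size 1x1.
T_hat = F @ shift_matrix(g) @ F.conj().T
diagonal_ok = np.allclose(T_hat - np.diag(np.diag(T_hat)), 0.0)

# The diagonal entries are the characters exp(-2*pi*i*k*g/N) of C_N.
k = np.arange(N)
characters_ok = np.allclose(np.diag(T_hat), np.exp(-2j * np.pi * k * g / N))

print(homomorphism_ok, diagonal_ok, characters_ok)
```

Because C_N is commutative, every irreducible block is one‑dimensional, so block‑diagonal here means fully diagonal. For a non‑commutative group such as SO(3) the blocks are larger.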

The statistical link is established through two results. In a fully observable setting, if a template τ is transformed by a group element sampled uniformly from a compact group G, the covariance of the transformed data in the irreducible basis is diagonal. For the circle group (rotations in 2‑D) this reduces to the familiar Fourier transform, where each frequency component is uncorrelated. Theorem 1 generalizes this to any compact (possibly non‑commutative) group, showing that the covariance matrix of ˆx = ˆT(g)τ is block‑diagonal with each block proportional to the identity, i.e., the irreducible components are decorrelated.
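The decorrelation claim can be illustrated in the commutative case. The sketch below assumes a random template `tau` and the cyclic shift group C_N, and it averages over every group element, which is an exact uniform average rather than a Monte Carlo sample. It compares the covariance in the pixel basis with the covariance in the Fourier (irreducible) basis. All variable names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
N = 16
tau = rng.normal(size=N)  # hypothetical template

# Orbit of the template under all N shifts: one row per group element.
X = np.stack([np.roll(tau, g) for g in range(N)])

def covariance(samples: np.ndarray) -> np.ndarray:
    """Covariance with entries E[(x_k - mean_k) * conj(x_l - mean_l)]."""
    centered = samples - samples.mean(axis=0)
    return centered.T @ centered.conj() / samples.shape[0]

def max_off_diagonal(C: np.ndarray) -> float:
    return float(np.max(np.abs(C - np.diag(np.diag(C)))))

# Pixel basis: the covariance is circulant, with nonzero off-diagonal entries.
cov_pixel = covariance(X)

# Irreducible (Fourier) basis: unitary DFT of every transformed template.
X_hat = np.fft.fft(X, axis=1) / np.sqrt(N)
cov_hat = covariance(X_hat)

# Apart from the constant (k = 0) component, whose variance is zero,
# the diagonal holds the power |tau_hat_k|^2 of each frequency.
tau_hat = np.fft.fft(tau) / np.sqrt(N)
power_ok = np.allclose(np.diag(cov_hat).real[1:], np.abs(tau_hat[1:]) ** 2)

print(max_off_diagonal(cov_pixel), max_off_diagonal(cov_hat), power_ok)
```

Each irreducible representation of C_N is one‑dimensional, so "block proportional to the identity" reduces to a single variance per frequency. The non‑commutative statement of Theorem 1 is not exercised by this example.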

A second statistical insight concerns dynamical models. By defining a Gaussian transition p(xₜ|xₜ₋₁,g)=N(xₜ;T(g)xₜ₋₁,σ²) with T(g) a unitary representation, the sufficient statistics become the matrix elements of the irreducible representations. The posterior over g remains in the same exponential family as the prior, and the marginal transition factorizes across irreducible components, yielding conditional independence.
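A minimal sketch of this posterior computation, under simplifying assumptions that are not from the paper: the group is the finite cyclic shift group, the prior over g is uniform, and σ is known. Because T(g) is unitary, ‖xₜ − T(g)xₜ₋₁‖² differs from −2⟨xₜ, T(g)xₜ₋₁⟩ only by a constant in g, and the inner product is a sum of per‑frequency terms in the Fourier basis. The two functions below compute the same posterior, one by brute force and one via the FFT.

```python
import numpy as np

rng = np.random.default_rng(1)
N, sigma = 32, 0.1   # hypothetical signal length and noise level
g_true = 5           # hypothetical ground-truth shift

x_prev = rng.normal(size=N)
x_next = np.roll(x_prev, g_true) + sigma * rng.normal(size=N)

def log_posterior_brute_force(x_next, x_prev, sigma):
    """log p(g | x_next, x_prev), evaluating the Gaussian likelihood for every shift."""
    sq_err = np.array(
        [np.sum((x_next - np.roll(x_prev, g)) ** 2) for g in range(len(x_prev))]
    )
    logp = -sq_err / (2 * sigma**2)
    return logp - np.logaddexp.reduce(logp)  # normalize over the group

def log_posterior_fourier(x_next, x_prev, sigma):
    """Same posterior from per-frequency products (circular cross-correlation)."""
    corr = np.fft.ifft(np.fft.fft(x_next) * np.conj(np.fft.fft(x_prev))).real
    logp = corr / sigma**2  # <x_next, T(g) x_prev> / sigma^2, constants dropped
    return logp - np.logaddexp.reduce(logp)

lp_brute = log_posterior_brute_force(x_next, x_prev, sigma)
lp_fourier = log_posterior_fourier(x_next, x_prev, sigma)
g_map = int(np.argmax(lp_fourier))
print(g_map, np.allclose(lp_brute, lp_fourier))
```

The per‑frequency products `fft(x_next) * conj(fft(x_prev))` play the role of the sufficient statistics in this commutative toy case. For a continuous or non‑commutative group the normalization would be an integral over the group rather than a finite sum.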

Real visual data, however, are only partially observable because a 3‑D scene is projected onto a 2‑D image plane via perspective. The authors argue that a linear action of SE(3) on the image space cannot exist, because structures can appear or disappear under motion. Instead of imposing strong geometric priors (e.g., planarity), they propose to learn a latent scene variable z that lives in a space where the motion group acts linearly. The generative process is: sample a latent pose gₙ,ᵥ ∈ SO(3) for each view v of object n, transform the latent scene zₙ by the block‑diagonal representation ˆT(gₙ,ᵥ) built from irreducible representations, feed the transformed latent vector into a neural network f_θ, and generate the observed image xₙ,ᵥ with Gaussian noise. A standard normal prior is placed on z, and a uniform prior on the rotation group. The full joint log‑probability includes reconstruction error, a Gaussian prior on the latent code, and weight regularization; gradients are obtained by back‑propagation.
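The generative process and the objective can be sketched in a few lines. Everything specific below is an assumption made for illustration and is not taken from the paper: the latent code uses only l = 0 (scalar) and l = 1 (3‑vector) blocks, the decoder f_θ is a one‑hidden‑layer tanh network with random weights, and the sizes, noise level and weight‑decay coefficient are arbitrary. The uniform prior on rotations contributes only a constant and is omitted.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: 2 scalar (l=0) blocks, 4 vector (l=1) blocks, 8x8 images.
N_SCALAR, N_VECTOR, HIDDEN, PIXELS, VIEWS = 2, 4, 16, 64, 3
LATENT = N_SCALAR + 3 * N_VECTOR

def random_rotation(rng):
    """Random 3x3 rotation from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))  # fix the sign ambiguity of QR
    if np.linalg.det(q) < 0:     # force det = +1
        q[:, 0] = -q[:, 0]
    return q

def apply_rep(R, z_scalar, z_vector):
    """Block-diagonal action: l=0 blocks are fixed, each l=1 column is rotated."""
    return z_scalar, R @ z_vector

def decoder(theta, z_scalar, z_vector):
    """Stand-in for f_theta: a one-hidden-layer tanh network."""
    W1, b1, W2, b2 = theta
    h = np.tanh(W1 @ np.concatenate([z_scalar, z_vector.ravel()]) + b1)
    return W2 @ h + b2

def neg_log_joint(theta, z_scalar, z_vector, rotations, images,
                  sigma=0.1, weight_decay=1e-4):
    """Reconstruction error + Gaussian prior on z + weight regularization."""
    recon = 0.0
    for R, x in zip(rotations, images):
        x_pred = decoder(theta, *apply_rep(R, z_scalar, z_vector))
        recon += np.sum((x - x_pred) ** 2) / (2 * sigma**2)
    prior = 0.5 * (np.sum(z_scalar**2) + np.sum(z_vector**2))
    reg = weight_decay * sum(np.sum(p**2) for p in theta)
    return recon + prior + reg

# Sample one object with several views from the generative process.
theta = (0.5 * rng.normal(size=(HIDDEN, LATENT)), np.zeros(HIDDEN),
         0.5 * rng.normal(size=(PIXELS, HIDDEN)), np.zeros(PIXELS))
z_scalar = rng.normal(size=N_SCALAR)       # standard normal prior on z
z_vector = rng.normal(size=(3, N_VECTOR))
rotations = [random_rotation(rng) for _ in range(VIEWS)]
sigma = 0.1
images = [decoder(theta, *apply_rep(R, z_scalar, z_vector))
          + sigma * rng.normal(size=PIXELS) for R in rotations]

loss_true = neg_log_joint(theta, z_scalar, z_vector, rotations, images, sigma)
wrong_rotations = [random_rotation(rng) for _ in range(VIEWS)]
loss_wrong = neg_log_joint(theta, z_scalar, z_vector, wrong_rotations, images, sigma)
print(loss_true, loss_wrong)
```

In a trainable version the latent code, the per‑view rotations and the weights would be optimized by gradient descent with an automatic differentiation framework. This sketch only evaluates the objective, and shows that the true poses score better than unrelated ones.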

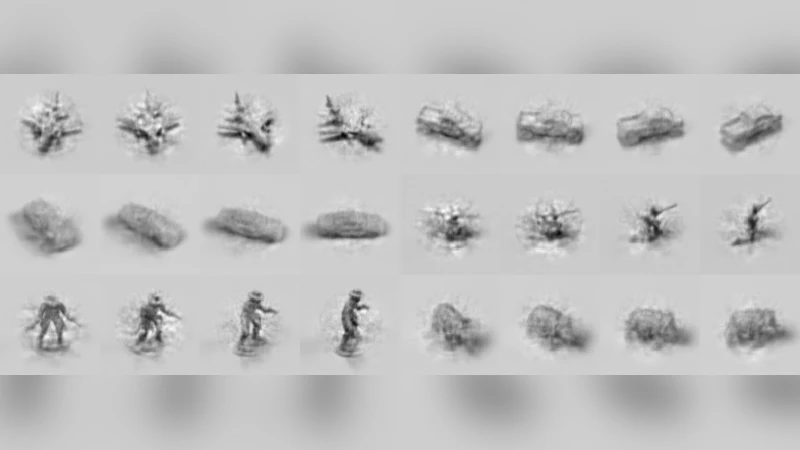

To instantiate ˆT(g) for SO(3), the paper uses the well‑known block‑diagonal structure of the unitary dual of SO(3), i.e., the Wigner‑D matrices for each angular momentum l. Each block corresponds to an irreducible representation of dimension 2l + 1. By learning the latent code and the per‑view rotations jointly on the NORB dataset (objects rendered from multiple viewpoints), the model demonstrates that (i) the latent space respects the linear SO(3) action, (ii) the irreducible blocks become decorrelated, and (iii) the latent code varies smoothly with rotation, enabling view synthesis and interpolation.
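As a hedged illustration of the block‑diagonal structure, the sketch below builds ˆT(g) from l = 0 and l = 1 blocks only. It relies on the fact that, in a real (Cartesian) basis, the l = 1 representation of SO(3) is equivalent to the 3×3 rotation matrix itself. Blocks with l ≥ 2 would need actual Wigner‑D matrices and are left out. The helper names and the block multiplicities are hypothetical.

```python
import numpy as np

def rotation_from_axis_angle(axis, angle):
    """Rodrigues' formula for a rotation by `angle` about `axis`."""
    axis = np.asarray(axis, dtype=float)
    axis = axis / np.linalg.norm(axis)
    K = np.array([[0.0, -axis[2], axis[1]],
                  [axis[2], 0.0, -axis[0]],
                  [-axis[1], axis[0], 0.0]])
    return np.eye(3) + np.sin(angle) * K + (1 - np.cos(angle)) * (K @ K)

def block_diag_rep(R, n_scalar, n_vector):
    """T_hat(g) with n_scalar copies of l=0 (1x1, identity) and n_vector copies of l=1 (R)."""
    dim = n_scalar + 3 * n_vector
    T = np.eye(dim)
    for i in range(n_vector):
        s = n_scalar + 3 * i
        T[s:s + 3, s:s + 3] = R
    return T

def block_norms(z, n_scalar, n_vector):
    """Norm of every l=1 block: a simple polynomial invariant (after squaring)."""
    return np.linalg.norm(z[n_scalar:].reshape(n_vector, 3), axis=1)

Rg = rotation_from_axis_angle([1, 0, 0], np.pi / 2)
Rh = rotation_from_axis_angle([0, 0, 1], np.pi / 2)
Tg, Th = block_diag_rep(Rg, 2, 3), block_diag_rep(Rh, 2, 3)

# T_hat is a representation: it respects composition and is orthogonal.
homomorphism_ok = np.allclose(Tg @ Th, block_diag_rep(Rg @ Rh, 2, 3))
orthogonal_ok = np.allclose(Tg.T @ Tg, np.eye(Tg.shape[0]))

# SO(3) is non-commutative, and so is its representation.
noncommutative = not np.allclose(Tg @ Th, Th @ Tg)

# l=0 components and l=1 block norms do not change under rotation.
z = np.random.default_rng(0).normal(size=2 + 3 * 3)
invariants_ok = (np.allclose(block_norms(Tg @ z, 2, 3), block_norms(z, 2, 3))
                 and np.allclose((Tg @ z)[:2], z[:2]))
print(homomorphism_ok, orthogonal_ok, noncommutative, invariants_ok)
```

The l = 0 entries and the per‑block norms give rotation‑invariant features, while the direction of each l = 1 block carries the pose information.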

In summary, the paper provides a principled bridge between group‑theoretic transformation properties and statistical objectives in representation learning. It shows that requiring a representation to transform linearly under a motion group leads naturally to a decomposition into irreducible components, which are decorrelated under restricted conditions and conditionally independent in the Gaussian dynamical model. By moving the linear action to a latent space, the approach handles the partial observability inherent in perspective projection and can model non‑commutative groups such as SO(3). This framework opens a path toward visual representations that are equivariant, disentangled, and statistically simple, and from which invariant features can be constructed, with potential impact on object recognition, pose estimation, and 3‑D reconstruction.
