Vid2Game: Controllable Characters Extracted from Real-World Videos

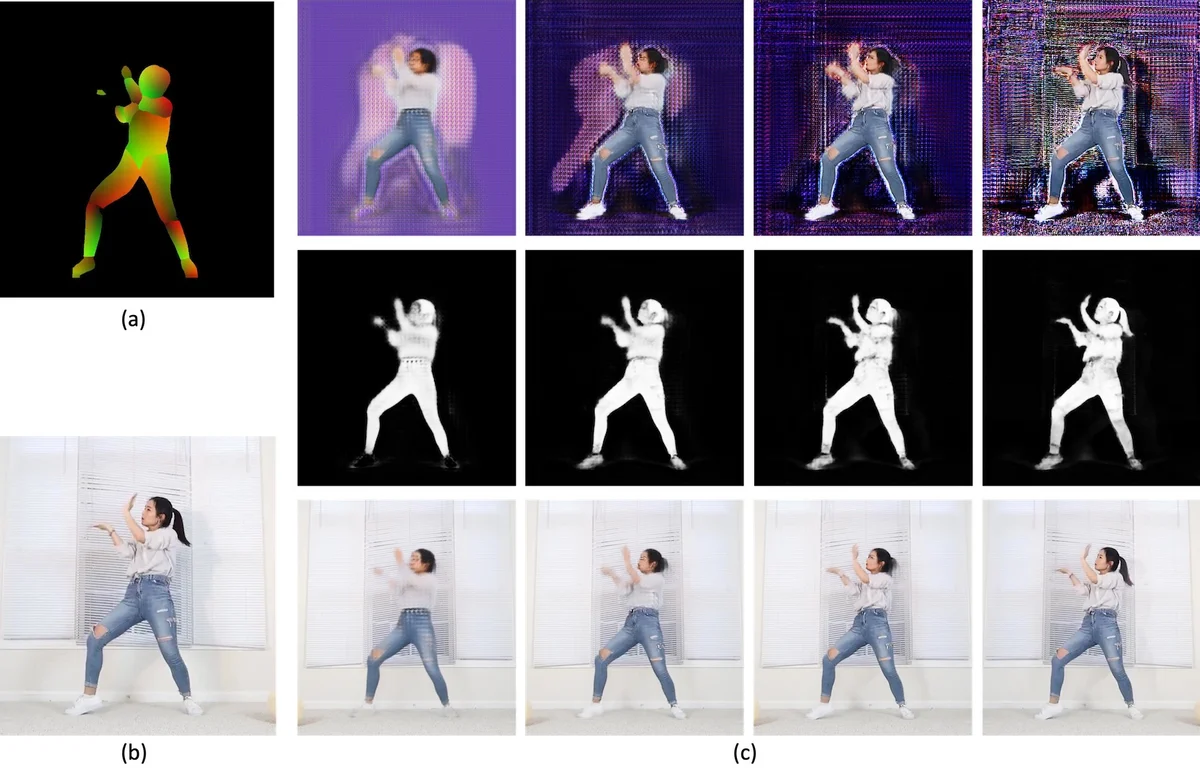

We are given a video of a person performing a certain activity, from which we extract a controllable model. The model generates novel image sequences of that person, according to arbitrary user-defined control signals, typically marking the displacement of the moving body. The generated video can have an arbitrary background, and effectively capture both the dynamics and appearance of the person. The method is based on two networks. The first network maps a current pose, and a single-instance control signal to the next pose. The second network maps the current pose, the new pose, and a given background, to an output frame. Both networks include multiple novelties that enable high-quality performance. This is demonstrated on multiple characters extracted from various videos of dancers and athletes.

💡 Research Summary

Vid2Game introduces a two‑stage generative framework that extracts a human character from an uncontrolled video and enables a user to re‑animate that character under arbitrary low‑dimensional control signals, such as a 2‑D displacement vector from a joystick. The system consists of a Pose‑to‑Pose (P2P) network that predicts the next body pose given the current pose and a control vector, and a Pose‑to‑Frame (P2F) network that renders a high‑resolution RGB image and an alpha mask from the previous and current poses (plus any hand‑held objects) together with a user‑specified background.

Problem formulation – During training, a source video is processed with DensePose to obtain dense body part maps and with a semantic segmentation model to extract hand‑held objects. The trajectory of the character’s center of mass serves as the control signal. At test time the user supplies an initial pose, a sequence of 2‑D displacements, and a background (static or dynamic). The pipeline first autoregressively generates a pose sequence via P2P, then synthesizes each frame via P2F, finally blending the generated foreground with the background using the learned mask.

Pose2Pose network – Built on the pix2pixHD generator, P2P operates at 512 × 512 resolution to focus on pose rather than texture. The key novelty is a set of “conditioned residual blocks” placed in the middle of the latent space. Each block adds a linearly projected version of the 2‑D control vector to the activations before the convolution, preventing a full bypass and ensuring the control signal directly influences pose dynamics. The network is trained with a combination of LSGAN loss, multi‑scale discriminator feature‑matching loss, and a VGG‑based perceptual loss (λ_D = λ_VGG = 10).

Pose2Frame network – Also derived from pix2pixHD, P2F receives a six‑channel input formed by concatenating the previous pose+object and the current pose+object (the object channel is simply added to each RGB channel). It outputs an RGB image z_i and an alpha mask m_i (values in

Comments & Academic Discussion

Loading comments...

Leave a Comment