$\varepsilon$-differential agreement: A Parallel Data Sorting Mechanism for Distributed Information Processing System

The order of input information plays a very important role in a distributed information processing system (DIPS). This paper proposes a novel data sorting mechanism, the $\epsilon$-differential agreement (EDA), that supports parallel data sorting. EDA adopts a collaborative consensus mechanism, unlike traditional consensus mechanisms such as PoS and PoW, which rely on competition. In a system that employs EDA, all participants work together to compute the order of the input information by applying statistical and probabilistic principles to a proportion of the participants. Preliminary results show that fault-tolerance rates and consensus delay vary with system configuration, which suggests that a system using EDA can customize these parameters to meet different requirements. Because of this mechanism, EDA can be used in a DIPS with a multi-center decision cluster, not just one with a rotating-center decision cluster.

💡 Research Summary

The paper addresses the critical problem of data ordering in Distributed Information Processing Systems (DIPS), where even a slight change in transaction sequence can cause cascading failures. Traditional centralized databases suffer from scalability and single‑point‑of‑failure issues, while blockchain‑style consensus mechanisms (Proof‑of‑Work, Proof‑of‑Stake, etc.) rely on competitive selection of a single “winner” node, leading to serial processing and limited throughput.

To overcome these limitations, the authors propose a novel consensus protocol called ε‑Differential Agreement (EDA). EDA is inspired by neural network dynamics: each node behaves like a neuron that receives a subset of order proposals, processes them through a propagation function φ and an activation function σ, and updates its local view of the transaction order. The protocol works in discrete rounds. In round 0 a transaction’s provisional order is broadcast to a random proportion p (e.g., 1 %) of the nodes. In subsequent rounds each node computes a new order estimate O_{τ}^{t+1}=σ(φ(O_{τ}^{t}+∑_{s∈S_i^t} w_s·O_s)), where S_i^t is the set of samples received by node i at round t and w_s are weighting factors.
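The per-round update can be illustrated with a minimal sketch. The summary does not specify φ, σ, or the weights w_s, so the choices here are assumptions for illustration only: φ turns the weighted sum into a weighted average (the node's own estimate counts with weight 1), and σ clamps the result to [0, 1], treating an order estimate as a normalized position. The function names `propagate`, `activate`, and `update_estimate` are hypothetical and do not come from the paper.

```python
from typing import Sequence


def propagate(own: float, samples: Sequence[float], weights: Sequence[float]) -> float:
    """Assumed phi: weighted average of the node's own estimate and its samples.

    The node's own estimate is given weight 1; weights are assumed non-negative.
    """
    if len(samples) != len(weights):
        raise ValueError("samples and weights must have the same length")
    weighted_sum = own + sum(w * s for w, s in zip(weights, samples))
    return weighted_sum / (1.0 + sum(weights))


def activate(x: float) -> float:
    """Assumed sigma: clamp the estimate to the normalized range [0, 1]."""
    return min(1.0, max(0.0, x))


def update_estimate(own: float, samples: Sequence[float], weights: Sequence[float]) -> float:
    """One round of the update: sigma(phi(own + sum of w_s * O_s))."""
    return activate(propagate(own, samples, weights))


if __name__ == "__main__":
    # A node holding 0.2 receives two equally weighted samples, 0.4 and 0.6.
    print(update_estimate(0.2, [0.4, 0.6], [1.0, 1.0]))
```

With equal weights the update reduces to a plain average of the node's estimate and its samples, which pulls neighboring estimates toward each other from round to round. Any other choice of φ and σ would slot into the same structure.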

Statistically, when the sample size n·p exceeds about nine, the binomial distribution of received order positions can be approximated by a normal distribution with mean 0.5·n and variance 0.25·n. Consequently, the order statistic Θ follows N(0.5·n, 0.25·n). The difference Δ between any two nodes’ estimates is then modeled as N(0, 0.5·n). By allowing a tolerance ε (the “ε‑differential”), the system declares consensus once the absolute difference falls within ε. This probabilistic definition of consensus replaces the deterministic zero‑error requirement of classic protocols (e.g., PBFT, PoW).
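The probability of falling within the tolerance follows directly from the stated model, and a short sketch makes the arithmetic concrete. For Δ ~ N(0, 0.5·n), the standard identity P(|Δ| ≤ ε) = erf(ε / (s·√2)) with s² = 0.5·n simplifies to erf(ε / √n). Two assumptions are made here that the summary does not confirm: the two nodes' estimates are independent, and ε is expressed in the same units as Δ (the evaluation reports ε as a percentage, and the conversion is not given). The function names are hypothetical.

```python
import math


def normal_approximation_ok(n: int, p: float, threshold: float = 9.0) -> bool:
    """Check the rule of thumb from the text: sample size n * p above about nine."""
    return n * p > threshold


def agreement_probability(n: int, eps: float) -> float:
    """P(|Delta| <= eps) for Delta ~ N(0, 0.5 * n).

    For X ~ N(0, s^2), P(|X| <= eps) = erf(eps / (s * sqrt(2))).
    With s^2 = 0.5 * n this becomes erf(eps / sqrt(n)).
    """
    if n <= 0:
        raise ValueError("n must be positive")
    if eps < 0:
        raise ValueError("eps must be non-negative")
    return math.erf(eps / math.sqrt(n))


if __name__ == "__main__":
    n, p = 20_000, 0.01  # node count and sampling ratio quoted in the evaluation
    print(normal_approximation_ok(n, p))
    for eps in (10.0, 100.0, 300.0):  # illustrative tolerances, same units as Delta
        print(eps, agreement_probability(n, eps))
```

Under this model the probability depends on ε only through the ratio ε / √n, so widening the tolerance or shrinking n raises the chance that two estimates already count as agreeing.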

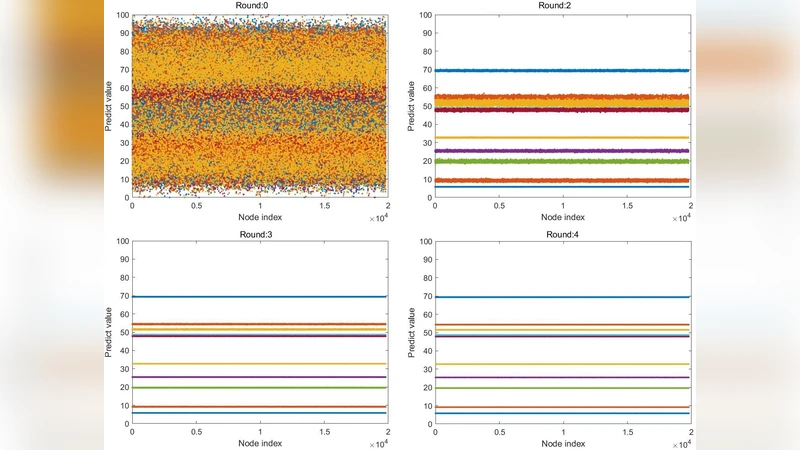

The authors evaluate EDA with a simulation of 20 000 nodes. They fix a failure‑node ratio of 1 % (these nodes behave arbitrarily) and set a minimum sampling ratio p = 1 %. Two ε settings are examined: ε < 1 % and ε < 0.01 %. For ε < 1 % the protocol converges in roughly 5–7 rounds and can process up to 100 transaction packages in parallel. Tightening the tolerance to ε < 0.01 % increases the required rounds to about 12–15 but enables simultaneous handling of up to 10 000 packages. Thus, by tuning ε and p, system designers can trade off latency, fault tolerance, and throughput to match application requirements.
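The trade-off between tolerance and rounds can be explored with a toy model. The sketch below is not the authors' simulator: it assumes each node replaces its estimate with the plain average of its own value and a fixed number of randomly sampled peers, it uses far fewer nodes than the 20 000 in the paper, and it omits faulty nodes entirely. The function `rounds_to_agree` and its parameters are hypothetical, and its round counts are not comparable to the reported figures.

```python
import random
from typing import Tuple


def rounds_to_agree(
    n_nodes: int,
    sample_size: int,
    eps: float,
    seed: int = 0,
    max_rounds: int = 100,
) -> Tuple[int, float]:
    """Count rounds until the spread (max - min) of all estimates is <= eps.

    Toy model: estimates start uniformly in [0, 1]; every round, each node
    averages its own estimate with `sample_size` peers sampled at random
    from the previous round's estimates.
    """
    rng = random.Random(seed)
    values = [rng.random() for _ in range(n_nodes)]
    spread = max(values) - min(values)
    rounds = 0
    while spread > eps and rounds < max_rounds:
        # The comprehension reads the previous round's list throughout,
        # so all nodes update synchronously.
        values = [
            (own + sum(rng.sample(values, sample_size))) / (sample_size + 1)
            for own in values
        ]
        rounds += 1
        spread = max(values) - min(values)
    return rounds, spread


if __name__ == "__main__":
    for eps in (1e-2, 1e-4):
        rounds, spread = rounds_to_agree(n_nodes=200, sample_size=10, eps=eps)
        print(f"eps={eps}: {rounds} rounds, final spread {spread:.2e}")
```

Because both runs share a seed, the tighter tolerance can only take the same number of rounds or more, which mirrors the qualitative pattern described above. Modeling the 1 % of arbitrarily behaving nodes would need a more robust aggregation step than a plain average.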

Beyond ordering, the paper claims that EDA can serve as a “real‑random number generator.” Because the consensus outcome depends on the collective, uncontrollable behavior of many nodes, the resulting bits are argued to have higher entropy than conventional pseudo‑random generators. However, the manuscript provides no rigorous statistical tests (e.g., NIST suite) to substantiate this claim.

The discussion expands on potential application domains: large‑scale clustering, distributed AI platforms, real‑time market matching, and even cognitive‑style negotiation systems where each participant contributes a partial view of reality. The authors liken the EDA network to a brain, suggesting that as the network scales it could approximate a Monte‑Carlo measurement system capable of uncovering patterns beyond human perception.

In summary, ε‑Differential Agreement introduces a probabilistic, collaborative consensus mechanism that:

- Uses only a small random subset of nodes per round, reducing communication overhead.

- Allows configurable error tolerance ε, giving flexibility in latency vs. consistency trade‑offs.

- Supports true parallel processing of many transaction batches, dramatically increasing throughput compared with serial block‑building schemes.

- Claims additional benefits such as high‑entropy randomness and applicability to AI‑driven negotiation.

While the theoretical framework is intriguing, the paper suffers from several shortcomings: the neural‑network analogy is not rigorously mapped to concrete algorithmic steps; experimental validation is limited to synthetic simulations without real‑world network conditions; and the randomness claim lacks empirical evidence. Future work should focus on formal security analysis, performance benchmarking on heterogeneous hardware, and thorough randomness testing before EDA can be considered a practical alternative to established consensus protocols.
