Human vs. Computer Go: Review and Prospect

The Google DeepMind challenge match in March 2016 was a historic achievement for computer Go development. This article discusses the development of computational intelligence (CI) and its relative strength in comparison with human intelligence for the game of Go. We first summarize the milestones achieved for computer Go from 1998 to 2016. Then, the computer Go programs that have participated in previous IEEE CIS competitions as well as methods and techniques used in AlphaGo are briefly introduced. Commentaries from three high-level professional Go players on the five AlphaGo versus Lee Sedol games are also included. We conclude that AlphaGo beating Lee Sedol is a huge achievement in artificial intelligence (AI) based largely on CI methods. In the future, powerful computer Go programs such as AlphaGo are expected to be instrumental in promoting Go education and AI real-world applications.

💡 Research Summary

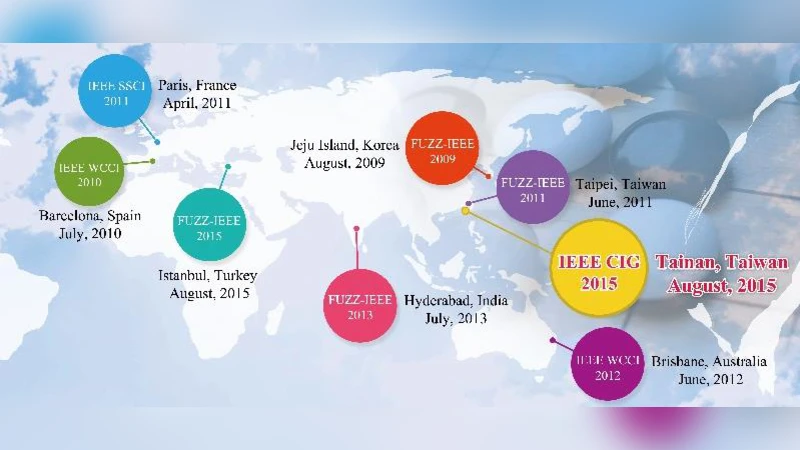

The paper provides a comprehensive review of the evolution of computer Go from its early rule‑based systems in the late 1990s to the breakthrough achieved by DeepMind’s AlphaGo in March 2016. It begins by outlining why Go has long been considered a grand challenge for artificial intelligence: the game’s enormous state space (approximately 10^170 legal positions on the 19×19 board) and the subtle, long‑term strategic reasoning required to play at a high level. The authors then chronologically summarize key milestones between 1998 and 2016. Early programs relied on handcrafted heuristics and static evaluation functions, achieving only amateur‑level strength. The introduction of Monte‑Carlo Tree Search (MCTS) around 2006 dramatically improved search efficiency, and by the early 2010s open‑source engines such as GNU Go, Fuego, and Pachi were regularly competing in IEEE Computational Intelligence Society (CIS) contests, often surpassing human players at the 9‑stone level. Notable CIS entries like Zen and MoGo combined MCTS with pattern‑based priors, demonstrating that hybrid approaches could bridge the gap between pure search and domain knowledge.
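To make the scale of the state space concrete, the short Python sketch below works through the arithmetic. It computes the naive upper bound of 3^361 board configurations (each of the 361 points is empty, black, or white) and compares it with a commonly cited count of legal positions, about 2.08 × 10^170. That count and the helper names are assumptions introduced for illustration; they are not taken from the paper.

```python
import math

BOARD_SIZE = 19
POINTS = BOARD_SIZE * BOARD_SIZE  # 361 intersections on a 19x19 board

# Assumption: commonly cited approximate count of legal 19x19 positions.
# This figure is not taken from the paper being summarized.
LEGAL_POSITIONS_APPROX = 2.08e170


def log10_configurations(points: int = POINTS) -> float:
    """Return log10 of 3**points (each point is empty, black, or white)."""
    return points * math.log10(3)


def legal_fraction() -> float:
    """Approximate share of raw 19x19 configurations that are legal positions."""
    exponent = math.log10(LEGAL_POSITIONS_APPROX) - log10_configurations()
    return 10 ** exponent


if __name__ == "__main__":
    print(f"3^361 is roughly 10^{log10_configurations():.1f}")
    print(f"share of configurations that are legal: about {legal_fraction():.3f}")
```

Under these assumptions, only about one raw configuration in a hundred is a legal position, yet the legal count alone is far beyond what exhaustive enumeration could cover.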

The core of the paper focuses on AlphaGo’s architecture and training methodology. AlphaGo consists of three main components: a policy network, a value network, and an MCTS engine that uses both networks to guide its search. The policy network was first trained by supervised learning on roughly 30 million positions from human expert games, which lets the search concentrate on a small set of plausible moves instead of every legal one. This network was then refined through reinforcement learning via self‑play, employing a policy‑gradient method (REINFORCE) to improve move selection beyond human patterns. A separate value network was trained to predict the outcome of a given board position directly, reducing the reliance on exhaustive rollouts. The final system integrates these networks with MCTS: the policy network supplies a probability distribution over moves, the value network supplies a leaf‑node evaluation, and the tree search balances exploration and exploitation using an upper‑confidence‑bound selection rule. The authors also describe the large computational infrastructure behind AlphaGo (thousands of CPU cores and multiple GPU clusters), which allowed the system to generate and evaluate very large numbers of self‑play games. The resulting system is reported to have achieved a 99.8% win rate against other Go programs.
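The way the two networks steer the search can be sketched in a few lines of Python. The example is a minimal single-level illustration of a PUCT-style selection rule, not AlphaGo's actual implementation: the priors stand in for policy-network outputs, the fixed `leaf_values` stand in for value-network evaluations, and the names `Edge`, `select_move`, `run_search`, and `c_puct` are hypothetical. The `+ 1` under the square root is a small convenience added here so that priors break ties on the very first visit.

```python
import math
from dataclasses import dataclass


@dataclass
class Edge:
    prior: float            # P(s, a): stand-in for the policy network output
    visits: int = 0         # N(s, a): how often this move was explored
    value_sum: float = 0.0  # sum of leaf evaluations backed up through this move

    def mean_value(self) -> float:
        """Q(s, a): average backed-up evaluation, 0.0 before any visit."""
        return self.value_sum / self.visits if self.visits else 0.0


def select_move(edges: dict, c_puct: float = 1.0) -> str:
    """Pick the move maximizing Q + U, where U favors high-prior, rarely visited moves."""
    total_visits = sum(edge.visits for edge in edges.values())

    def score(edge: Edge) -> float:
        exploration = c_puct * edge.prior * math.sqrt(total_visits + 1) / (1 + edge.visits)
        return edge.mean_value() + exploration

    return max(edges, key=lambda move: score(edges[move]))


def run_search(priors: dict, leaf_values: dict, n_simulations: int) -> dict:
    """Run n_simulations select/evaluate/backup steps and return visit counts per move."""
    edges = {move: Edge(prior=p) for move, p in priors.items()}
    for _ in range(n_simulations):
        move = select_move(edges)
        value = leaf_values[move]        # hypothetical stand-in for a value-network call
        edges[move].visits += 1          # backup: update statistics on the chosen edge
        edges[move].value_sum += value
    return {move: edge.visits for move, edge in edges.items()}


if __name__ == "__main__":
    priors = {"A": 0.6, "B": 0.3, "C": 0.1}        # assumed policy output
    leaf_values = {"A": 0.1, "B": 0.5, "C": -0.2}  # assumed position evaluations
    print(run_search(priors, leaf_values, 200))
```

In this toy setting the search first tries move A because of its high prior, then shifts most of its visits to move B once the evaluations show that B leads to better positions. That is the balance between exploration and exploitation described above.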

The paper also incorporates commentary from three top professional Go players (Lee Sedol, Kim Jeong‑hwan, and Cho Dong‑hyun) on the five games played between AlphaGo and Lee Sedol. The professionals highlight several “non‑human” moves (e.g., Move 37 in Game 2) that demonstrated a global strategic perspective rarely seen in human play. They note that AlphaGo’s style combined aggressive territorial acquisition with subtle influence, forcing humans to reconsider conventional opening theory. Rather than viewing AlphaGo as a mere replacement for human intuition, the players suggest it serves as a powerful analytical tool that can expand human understanding of the game.

In its conclusion, the paper argues that AlphaGo’s victory is a landmark not only for Go but for the broader field of computational intelligence. It shows that deep neural networks, when coupled with efficient search algorithms and massive self‑play data, can master tasks previously thought to require uniquely human intuition. The authors forecast several future directions: (1) the use of strong Go programs as educational aids for teaching opening theory, life‑and‑death problems, and positional judgment; (2) the transfer of AlphaGo‑style policy/value architectures to other domains that involve sequential decision making under uncertainty, such as medical diagnosis, autonomous planning, and real‑time strategy games; (3) research into model compression and lightweight networks to enable deployment on mobile or embedded platforms; and (4) exploration of multi‑agent reinforcement learning where several AI agents collaborate or compete, potentially yielding richer strategic insights. Overall, the paper positions AlphaGo as a catalyst for a new era in AI, where human expertise and machine learning synergistically advance both scientific understanding and practical applications.