Designing Sensing as a Service (S2aaS) Ecosystem for Internet of Things

The Internet of Things (IoT) envisions the creation of an environment where everyday objects (e.g. microwaves, fridges, cars, coffee machines) are connected to the internet and make users’ lives more productive, efficient, and convenient. During this process, everyday objects capture a vast amount of data that can be used to understand individuals and their behaviours. In the current IoT ecosystems, such data is collected and used only by the respective IoT solutions. There is no formal way to share data with external entities. We believe this is very inefficient and unfair for users. We believe that users, as data owners, should be able to control, manage, and share data about themselves in any way that they choose and derive value from it. To achieve this, we proposed the Sensing as a Service (S2aaS) model. In this paper, we discuss the Sensing as a Service ecosystem in terms of its architecture, components and related user interaction designs. This paper aims to highlight the weaknesses of the current IoT ecosystem and to explain how S2aaS would eliminate those weaknesses. We also discuss how an everyday user may engage with the S2aaS ecosystem and the design challenges involved.

💡 Research Summary

The paper begins by outlining the current state of the Internet of Things (IoT) ecosystem, where data generated by everyday objects—such as appliances, vehicles, and wearables—is collected and used exclusively by the proprietary platforms that own those devices. This siloed approach leads to three major shortcomings: (1) redundant data collection that wastes energy and bandwidth, (2) heightened privacy risks because users have no control over who accesses their personal streams, and (3) a loss of potential economic and societal value that could be derived from broader data sharing.

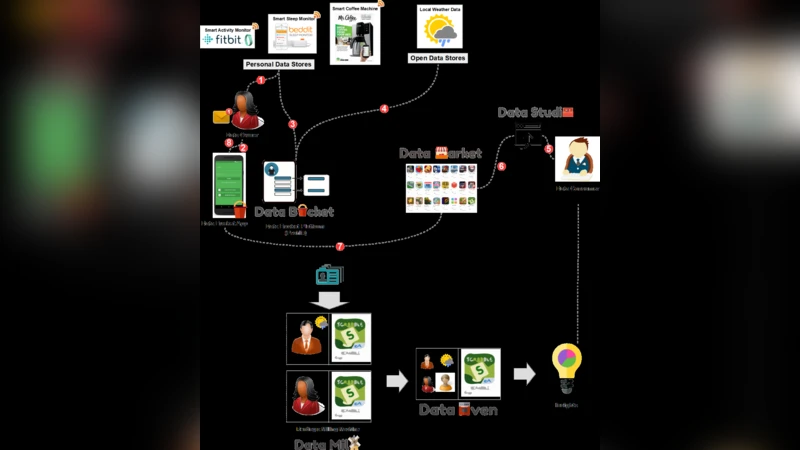

To address these issues, the authors propose a “Sensing as a Service” (S2aaS) model that re‑positions the user as the true owner of the data. In the S2aaS ecosystem three principal actors interact: the Data Provider (the end‑user), the Data Broker (a platform that mediates transactions), and the Data Consumer (businesses, research institutions, or public services). The core idea is to enable users to define fine‑grained sharing policies for each sensor, each time window, and each intended purpose, and to receive compensation—cash, points, coupons, or service credits—when their data is accessed.
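A fine-grained sharing policy of the kind described above can be pictured as a small record plus a check. The following is only a minimal sketch: the names `SharingPolicy` and `is_allowed`, the daily time window, and the example values are illustrative assumptions, not structures defined in the paper.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class SharingPolicy:
    """Hypothetical per-sensor policy: which sensor, when, and for what purpose."""
    sensor_id: str
    window_start: time       # daily window start (inclusive)
    window_end: time         # daily window end (exclusive)
    purposes: frozenset      # purposes the data owner has approved
    price_per_access: float  # compensation owed to the owner per access


def is_allowed(policy: SharingPolicy, sensor_id: str,
               when: datetime, purpose: str) -> bool:
    """Return True only if sensor, time of day, and purpose all match the policy."""
    in_window = policy.window_start <= when.time() < policy.window_end
    return (sensor_id == policy.sensor_id
            and in_window
            and purpose in policy.purposes)


# Example: fridge temperature shared for research during working hours only.
policy = SharingPolicy("fridge-temp", time(9, 0), time(17, 0),
                       frozenset({"research"}), 0.05)
```

Keeping the policy immutable (`frozen=True`) reflects the idea that a change in preferences produces a new policy rather than silently altering one that a consumer has already agreed to.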

The architecture is organized into four layers. The Physical Layer consists of heterogeneous sensors embedded in everyday objects that generate raw measurements. The Edge Layer performs local preprocessing, encryption, and policy enforcement; a mobile application allows users to configure sharing preferences on the fly. Privacy‑preserving techniques such as homomorphic encryption and differential privacy are employed so that raw data never leaves the device in an unprotected form.
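As one illustration of edge-side privacy protection, the sketch below applies the standard Laplace mechanism from differential privacy to the mean of a batch of readings before it leaves the device. The summary does not specify which mechanism is used, so this choice, the function names, and the clipping bounds are all assumptions.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential variates."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def privatize_mean(readings, lower, upper, epsilon, rng=None):
    """Release an epsilon-differentially-private mean of bounded readings.

    Readings are clipped to [lower, upper], so changing one reading moves
    the mean by at most (upper - lower) / n, which is the sensitivity.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    rng = rng or random.Random()
    clipped = [min(max(r, lower), upper) for r in readings]
    sensitivity = (upper - lower) / len(clipped)
    true_mean = sum(clipped) / len(clipped)
    return true_mean + laplace_noise(sensitivity / epsilon, rng)
```

A smaller `epsilon` adds more noise and gives stronger privacy; the right value depends on the sensor and the sharing purpose, which is a policy decision rather than something this sketch settles.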

The Broker Layer hosts a decentralized data marketplace built on a hybrid blockchain (private consensus for high‑throughput contract execution, public anchoring for immutability). Smart contracts encode the terms of each data lease—access rights, duration, price, and revocation conditions—and automatically enforce payment and audit trails. Distributed storage (e.g., IPFS) guarantees data integrity and availability without relying on a single point of failure.
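The lease terms listed above (access rights, duration, price, revocation, audit trail) can be modelled in a few lines. This is a plain Python model for illustration, not on-chain contract code; the class `DataLease` and its fields are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class DataLease:
    """Hypothetical model of one data lease between a provider and a consumer."""
    consumer: str
    sensor_id: str
    start: int        # lease start, epoch seconds
    duration: int     # lease length in seconds
    price: float      # amount the consumer must pay before access
    paid: bool = False
    revoked: bool = False
    audit_log: list = field(default_factory=list)

    def pay(self, amount: float) -> None:
        """Mark the lease as paid if the amount covers the price."""
        if amount < self.price:
            raise ValueError("insufficient payment")
        self.paid = True
        self.audit_log.append(("paid", amount))

    def revoke(self) -> None:
        """Let the data owner withdraw access at any time."""
        self.revoked = True
        self.audit_log.append(("revoked",))

    def can_access(self, consumer: str, now: int) -> bool:
        """Check every lease term and record the attempt in the audit log."""
        ok = (consumer == self.consumer
              and self.paid
              and not self.revoked
              and self.start <= now < self.start + self.duration)
        self.audit_log.append(("access", consumer, now, ok))
        return ok
```

Logging denied attempts as well as granted ones is a deliberate choice here: an audit trail that only records successes cannot show that revocation was actually enforced.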

The Service Layer provides APIs and streaming interfaces (Kafka, Flink) that allow consumers to subscribe to data feeds, run analytics, or train AI models. Because the broker mediates access, consumers receive only the data slices they have paid for, and they are bound by the contractual obligations encoded in the smart contract.

Key technical components identified include: (i) lightweight cryptography for resource‑constrained edge devices, (ii) a “Data Sharing Dashboard” UI that visualizes sensor status, sharing policies, and real‑time earnings, (iii) a pricing engine that can use market‑driven auctions or fixed‑rate models, and (iv) compliance modules that map the platform’s operations to GDPR, CCPA, and emerging data‑sovereignty regulations.
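To make the two pricing modes concrete, the sketch below pairs a fixed-rate price with a sealed-bid second-price auction. The second-price format is chosen here only as a familiar example of a market-driven auction; the summary does not say which auction design is intended, and the function names and reserve price are assumptions.

```python
def fixed_rate_price(rate_per_record: float, n_records: int) -> float:
    """Fixed-rate model: price scales linearly with the number of records."""
    return rate_per_record * n_records


def second_price_auction(bids: dict, reserve: float = 0.0):
    """Sealed-bid second-price auction over {consumer: bid}.

    The highest eligible bidder wins and pays the second-highest eligible
    bid, or the reserve if no other bid meets it. Returns (winner, price),
    or None when no bid reaches the reserve.
    """
    eligible = sorted(((bid, consumer) for consumer, bid in bids.items()
                       if bid >= reserve), reverse=True)
    if not eligible:
        return None
    _, winner = eligible[0]
    price = eligible[1][0] if len(eligible) > 1 else reserve
    return winner, price
```

For example, with bids of 5.0, 8.0, and 6.5 and a reserve of 1.0, the 8.0 bidder wins and pays 6.5. The reserve acts as the data owner's minimum acceptable price.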

Implementation challenges are discussed in depth. Network latency and power consumption become critical when scaling to millions of sensors; the authors suggest adaptive sampling and edge‑offloading strategies to mitigate these effects. Smart‑contract gas costs and blockchain scalability are addressed by employing a layered consensus model where most contract logic executes off‑chain and only cryptographic proofs are posted to the public ledger. The lack of standardized metadata schemas is tackled by adopting the ISO/IEC 30141 IoT reference architecture and W3C Web of Things vocabularies, enabling interoperable data descriptions across vendors.
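Adaptive sampling can take many forms; one simple variant, sketched below under stated assumptions, halves the sampling interval when consecutive readings differ by more than a threshold and doubles it otherwise. The function name, the doubling rule, and the default interval bounds are illustrative and not taken from the paper.

```python
def next_interval(current: float, prev_reading: float, new_reading: float,
                  threshold: float, min_interval: float = 1.0,
                  max_interval: float = 60.0) -> float:
    """Return the next sampling interval in seconds.

    Sample faster when the signal is changing, slower when it is stable,
    and always stay within [min_interval, max_interval].
    """
    if abs(new_reading - prev_reading) > threshold:
        proposed = current / 2   # signal is moving: sample more often
    else:
        proposed = current * 2   # signal is stable: save power and bandwidth
    return min(max(proposed, min_interval), max_interval)
```

A stable fridge temperature would therefore drift toward one reading per minute, while a sudden change pulls the interval back down toward one second within a few samples.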

The paper concludes that S2aaS can transform the IoT landscape from a closed, vendor‑centric data monopoly into an open, user‑driven data economy. This shift promises new revenue streams for users, richer datasets for innovators, and more transparent, accountable data practices. However, the authors caution that technical sophistication must be matched by user education, robust legal frameworks, and incentives for platform providers to open their data pipelines. Future work is outlined as pilot deployments, longitudinal studies of user behavior, and policy‑level collaborations to ensure that S2aaS can be scaled responsibly and ethically.
