Towards Traceability in Data Ecosystems using a Bill of Materials Model

Researchers and scientists use aggregations of data from a diverse combination of sources, including partners, open data providers and commercial data suppliers. As the complexity of such data ecosystems increases, and in turn leads to the generation of new reusable assets, it becomes ever more difficult to track data usage and to maintain a clear view of where data in a system originated and where it contributes onward. Reliable traceability of data usage is needed for accountability, both in demonstrating the right to use data and in providing assurance that the data is what it is claimed to be. Society is demanding more accountability in data-driven and artificial intelligence systems deployed and used commercially and in the public sector. This paper introduces the conceptual design of a model for data traceability based on a Bill of Materials scheme, widely used for supply chain traceability in manufacturing industries, and presents details of the architecture and implementation of a gateway built upon the model. Use of the gateway is illustrated through a case study, which demonstrates how data and artifacts used in an experiment would be defined and instantiated to achieve the desired traceability goals, and how blockchain technology can facilitate accurate recording of transactions between contributors.

💡 Research Summary

This paper addresses the growing challenge of maintaining traceability and accountability within complex, multi-source data ecosystems used in scientific research and AI development. It proposes a novel conceptual model for data traceability by adapting the Bill of Materials (BoM) and Bill of Lots (BoL) schemes, which are well-established for supply chain tracking in manufacturing and agri-food industries, to the domain of digital data.

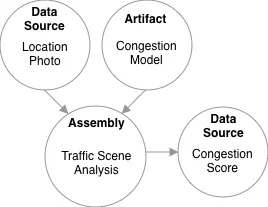

The core innovation lies in modeling a data pipeline as a series of “assemblies.” Each assembly is defined by its input data sources, supporting “artifacts” (such as software components, ML models, licenses, documentation, and human resources), and its output, which can be new data or a new artifact. The static design of the entire system, describing how these assemblies are connected, constitutes the BoM. Whenever the described experiment or process is executed, a unique BoL instance is created from the BoM. This BoL captures dynamic, run-time information—like the specific API endpoints called, parameter values used, or the actual data retrieved—in “shadow” data items linked to each BoM component. The combination of the static BoM and the dynamic BoLs provides a complete, queryable record of a data product’s lineage, enabling both backward tracing (to find origins) and forward tracking (to find all uses).
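The relationship between assemblies, the static BoM, and a run-time BoL can be made concrete with a small data-structure sketch. Every name below (`Component`, `Assembly`, `instantiateBoL`, `traceBack`, `trackForward`, and the traffic example with its placeholder endpoint) is a hypothetical illustration loosely inspired by the case study, not the schema used in the paper.

```typescript
// Hypothetical types: illustrative only, not the dataBoM schema.
interface Component {
  id: string;
  kind: "data" | "artifact";
  description: string;
}

interface Assembly {
  id: string;
  inputs: string[];    // ids of input data sources
  artifacts: string[]; // ids of supporting artifacts (software, licences, ...)
  output: string;      // id of the data or artifact this assembly produces
}

interface BoM {
  id: string;
  components: Record<string, Component>;
  assemblies: Assembly[];
}

// One BoL per execution; each shadow item holds run-time facts for a BoM component.
interface BoL {
  id: string;
  bomId: string;
  startedAt: string;
  shadows: Record<string, Record<string, unknown>>;
}

function instantiateBoL(bom: BoM, runId: string): BoL {
  const shadows: Record<string, Record<string, unknown>> = {};
  for (const id of Object.keys(bom.components)) shadows[id] = {};
  return { id: runId, bomId: bom.id, startedAt: new Date().toISOString(), shadows };
}

// Backward trace: every component that contributes, directly or indirectly, to `target`.
function traceBack(bom: BoM, target: string): Set<string> {
  const seen = new Set<string>();
  const stack = [target];
  while (stack.length > 0) {
    const current = stack.pop()!;
    for (const a of bom.assemblies) {
      if (a.output !== current) continue;
      for (const dep of [...a.inputs, ...a.artifacts]) {
        if (!seen.has(dep)) { seen.add(dep); stack.push(dep); }
      }
    }
  }
  return seen;
}

// Forward track: every output that depends, directly or indirectly, on `source`.
function trackForward(bom: BoM, source: string): Set<string> {
  const seen = new Set<string>();
  const stack = [source];
  while (stack.length > 0) {
    const current = stack.pop()!;
    for (const a of bom.assemblies) {
      const uses = a.inputs.includes(current) || a.artifacts.includes(current);
      if (uses && !seen.has(a.output)) { seen.add(a.output); stack.push(a.output); }
    }
  }
  return seen;
}

// Hypothetical two-step pipeline: merge two feeds, then analyse the merged data.
const trafficBoM: BoM = {
  id: "traffic-congestion",
  components: {
    sensorFeed: { id: "sensorFeed", kind: "data", description: "IoT sensor readings" },
    socialFeed: { id: "socialFeed", kind: "data", description: "Social media posts" },
    cleaningScript: { id: "cleaningScript", kind: "artifact", description: "Cleaning code" },
    mergedData: { id: "mergedData", kind: "data", description: "Merged dataset" },
    congestionModel: { id: "congestionModel", kind: "artifact", description: "ML model" },
    report: { id: "report", kind: "data", description: "Congestion report" },
  },
  assemblies: [
    { id: "merge", inputs: ["sensorFeed", "socialFeed"], artifacts: ["cleaningScript"], output: "mergedData" },
    { id: "analyse", inputs: ["mergedData"], artifacts: ["congestionModel"], output: "report" },
  ],
};

// Record run-time detail for one component in this run's shadow item.
const run = instantiateBoL(trafficBoM, "run-001");
run.shadows["sensorFeed"] = { endpoint: "https://example.org/sensors", rowsRetrieved: 1200 };
```

In this sketch, `traceBack(trafficBoM, "report")` walks assemblies from output to inputs, while `trackForward(trafficBoM, "sensorFeed")` walks them in the opposite direction. Keeping the shadow items on the BoL rather than on the BoM is what lets one static design be reused across many runs.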

The paper details the architecture and implementation of a pilot gateway named “dataBoM,” which operationalizes this model. Built using Node.js with an Apollo GraphQL server interface and a MongoDB backend, the gateway provides APIs for researchers to define, store, and query BoMs, and to instantiate and archive BoLs for each experiment run. This creates an auditable trail of data provenance and context.
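To give a feel for what such an interface might look like, the following sketch pairs a GraphQL schema string with plain resolver functions. The type names, field names, and in-memory stores are all assumptions made for illustration; the actual dataBoM schema is not described in this summary. In a real deployment the `typeDefs` and `resolvers` would be handed to Apollo Server, and the in-memory stores would be replaced by MongoDB collections.

```typescript
// Hypothetical schema: type and field names are illustrative, not the dataBoM API.
const typeDefs = `
  type BoM {
    id: ID!
    name: String!
    componentIds: [ID!]!
  }
  type BoL {
    id: ID!
    bomId: ID!
    createdAt: String!
  }
  type Query {
    bom(id: ID!): BoM
    bols(bomId: ID!): [BoL!]!
  }
  type Mutation {
    defineBoM(id: ID!, name: String!, componentIds: [ID!]!): BoM!
    instantiateBoL(bomId: ID!): BoL!
  }
`;

interface BoMRecord { id: string; name: string; componentIds: string[]; }
interface BoLRecord { id: string; bomId: string; createdAt: string; }

// In-memory stand-ins for the database (assumption: one store per record type).
const bomStore = new Map<string, BoMRecord>();
const bolStore: BoLRecord[] = [];

// Resolvers follow the usual (parent, args) convention; context and info are unused here.
const resolvers = {
  Query: {
    bom: (_parent: unknown, args: { id: string }): BoMRecord | null =>
      bomStore.get(args.id) ?? null,
    bols: (_parent: unknown, args: { bomId: string }): BoLRecord[] =>
      bolStore.filter((b) => b.bomId === args.bomId),
  },
  Mutation: {
    defineBoM: (_parent: unknown, args: BoMRecord): BoMRecord => {
      const record: BoMRecord = { ...args };
      bomStore.set(record.id, record);
      return record;
    },
    instantiateBoL: (_parent: unknown, args: { bomId: string }): BoLRecord => {
      if (!bomStore.has(args.bomId)) throw new Error(`Unknown BoM: ${args.bomId}`);
      // Number runs per BoM so that each archived BoL has a distinct id.
      const runNumber = bolStore.filter((b) => b.bomId === args.bomId).length + 1;
      const record: BoLRecord = {
        id: `${args.bomId}-run-${runNumber}`,
        bomId: args.bomId,
        createdAt: new Date().toISOString(),
      };
      bolStore.push(record);
      return record;
    },
  },
};
```

Separating `defineBoM` from `instantiateBoL` mirrors the split between the static design and its per-run instances: a BoM is defined once, and each experiment run archives a new BoL against it.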

Furthermore, the paper explores the integration of blockchain technology to enhance the model’s trustworthiness in multi-party, decentralized settings. Blockchain’s properties of immutability and non-repudiation can provide a secure, persistent ledger for recording transactions between data providers and consumers. For instance, a BoM could store a blockchain address, allowing a client application to initiate a smart contract transaction at runtime to access paid data or to immutably log a data request. A case study illustrating traffic congestion analysis using data from IoT sensors, social media, and open data sources demonstrates the practical application of the gateway and the traceability benefits it affords.
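The tamper-evidence idea behind immutably logging a data request can be illustrated without a blockchain client. The sketch below is a local hash-chained log, not a blockchain: it shows only how each entry commits to the one before it, so that later alteration is detectable. It does not provide decentralised consensus, and non-repudiation would additionally require digital signatures. The record shape and function names are hypothetical; `createHash` is Node.js's built-in SHA-256 interface.

```typescript
import { createHash } from "node:crypto";

// Hypothetical record of one data request against a BoM component.
interface LedgerEntry {
  index: number;
  requester: string;
  componentId: string;
  timestamp: string;
  prevHash: string; // hash of the previous entry, or GENESIS for the first
  hash: string;     // SHA-256 over all other fields
}

const GENESIS = "0".repeat(64);

function hashEntry(e: Omit<LedgerEntry, "hash">): string {
  // JSON array encoding avoids ambiguity between adjacent string fields.
  const payload = JSON.stringify([e.index, e.requester, e.componentId, e.timestamp, e.prevHash]);
  return createHash("sha256").update(payload).digest("hex");
}

function appendRequest(
  ledger: LedgerEntry[],
  requester: string,
  componentId: string,
  timestamp: string,
): LedgerEntry {
  const last = ledger[ledger.length - 1];
  const body = {
    index: ledger.length,
    requester,
    componentId,
    timestamp,
    prevHash: last ? last.hash : GENESIS,
  };
  const entry: LedgerEntry = { ...body, hash: hashEntry(body) };
  ledger.push(entry);
  return entry;
}

// Recompute every hash and link; any edited entry breaks the chain.
function verifyLedger(ledger: LedgerEntry[]): boolean {
  return ledger.every((entry, i) => {
    const prev = ledger[i - 1];
    const expectedPrev = prev ? prev.hash : GENESIS;
    const { hash, ...body } = entry;
    return entry.prevHash === expectedPrev && hash === hashEntry(body);
  });
}
```

A client could call `appendRequest` each time it retrieves a data source during a run and store the resulting hash in the corresponding BoL shadow item. On a real blockchain the same record would be submitted as a transaction to the address held in the BoM.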

In summary, this work presents a structured, industry-inspired framework to bring much-needed transparency and accountability to data-driven science and AI. By treating data and its context as composable components in a supply chain, the BoM model and its implementing gateway offer a powerful mechanism for end-to-end traceability, facilitating error diagnosis, compliance verification, and the development of more trustworthy data economies.
