Speech denoising by parametric resynthesis

This work proposes the use of clean speech vocoder parameters as the target for a neural network performing speech enhancement. These parameters have been designed for text-to-speech synthesis so that they both produce high-quality resyntheses and al…

Authors: Soumi Maiti, Michael I Mandel

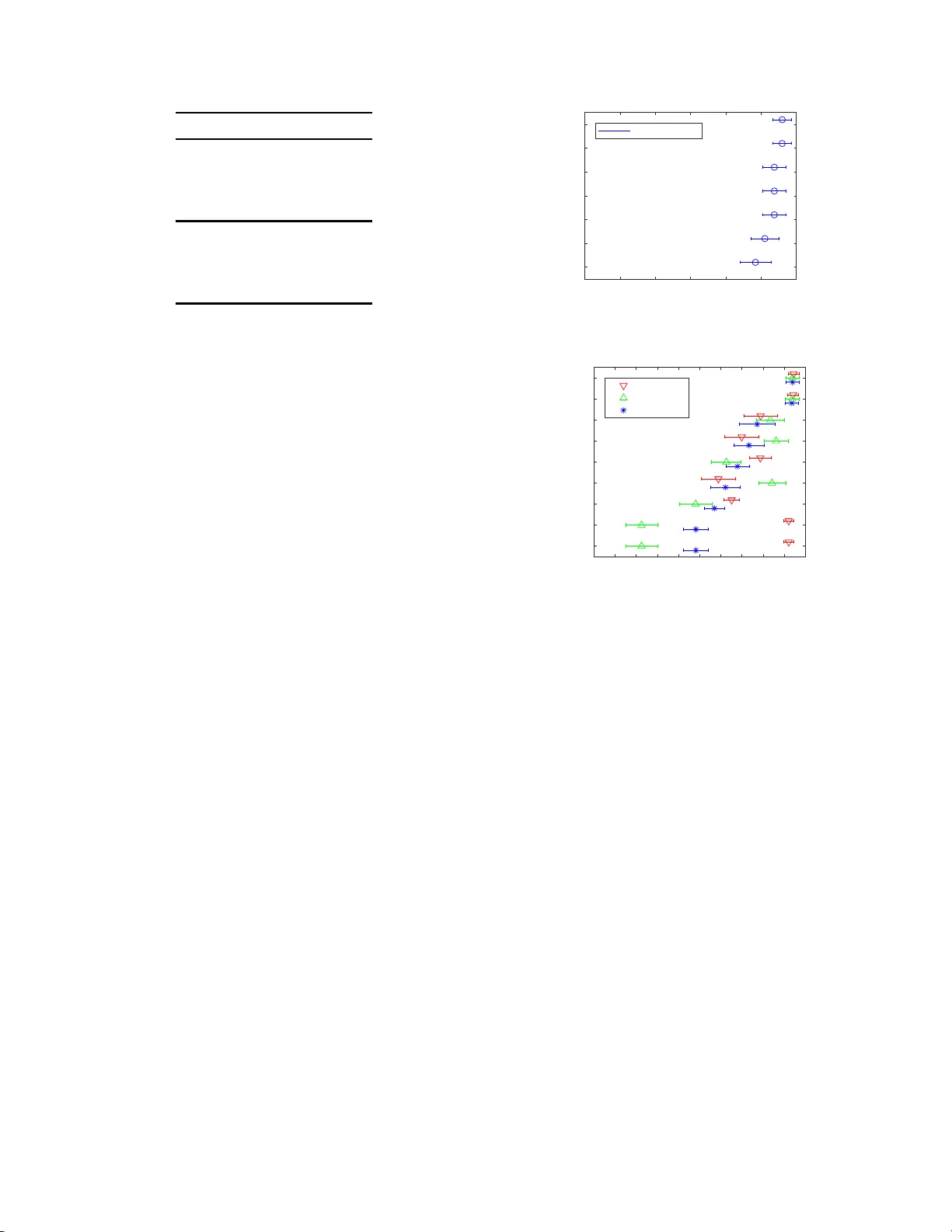

SPEECH DENOISING BY PARAMETRIC RESYNTHESIS

Soumi Maiti and Michael I Mandel
The CUNY Graduate Center and Brooklyn College, New York, NY

ABSTRACT

This work proposes the use of clean speech vocoder parameters as the target for a neural network performing speech enhancement. These parameters have been designed for text-to-speech synthesis so that they both produce high-quality resyntheses and also are straightforward to model with neural networks, but have not been utilized in speech enhancement until now. In comparison to a matched text-to-speech system that is given the ground truth transcripts of the noisy speech, our model is able to produce more natural speech because it has access to the true prosody in the noisy speech. In comparison to two denoising systems, the oracle Wiener mask and a DNN-based mask predictor, our model equals the oracle Wiener mask in subjective quality and intelligibility and surpasses the realistic system. A vocoder-based upper bound shows that there is still room for improvement with this approach beyond the oracle Wiener mask. We test speaker-dependence with two speakers and show that a single model can be used for multiple speakers.

Index Terms: Speech enhancement, synthesis, vocoder

1. INTRODUCTION

The general approach of speech enhancement has been to modify a noisy signal to make it more like the clean signal [1]. The main problems for such systems are the over-suppression of the speech and under-suppression of the noise. Ideally, speech enhancement systems should remove the noise completely without decreasing the speech quality. There are, however, statistical text-to-speech (TTS) synthesis systems that can produce high-quality speech from textual inputs (e.g., [2]) by training an acoustic model to map text to the time-varying acoustic parameters of a vocoder, which then generates the speech.
The most difficult part of this task, however, is predicting realistic prosody (timing information and pitch and loudness contours) from pure text.

In this paper, we propose combining these two approaches to capitalize on the strengths of each by predicting the acoustic parameters of clean speech from a noisy observation and then using a vocoder to synthesize the speech. We show that this combined system can produce high-quality and noise-free speech utilizing the true prosody observed in the noisy speech. We demonstrate that the noisy speech signal has more information about the clean speech than its transcript does. Specifically, it is easier to predict realistic prosody from the noisy speech than from text. Thus, we train a neural network to learn the mapping from noisy speech features to the acoustic parameters of the corresponding clean speech. From the predicted acoustic features, we generate clean speech using a speech synthesis vocoder. Since we are creating a clean resynthesis of the noisy signal, the output speech quality will be higher than standard speech denoising systems and completely noise-free. We refer to the proposed model as parametric resynthesis.

In this paper, we show that parametric resynthesis outperforms statistical TTS in terms of traditional speech synthesis objective metrics. Next we subjectively evaluate the intelligibility and quality of the resynthesized speech and compare it with a mask predicted by a DNN-based system [3] and the oracle Wiener mask [4]. We show that the resynthesized speech is noise-free and has overall quality and intelligibility equivalent to the oracle Wiener mask and exceeding that of the DNN-predicted mask. We also show that a single parametric resynthesis model can be used for multiple speakers.

2. RELATED WORK

Traditional speech synthesis systems are of two types, concatenative and parametric. In our previous works [5, 6, 7, 8], we proposed concatenative synthesis systems for denoising speech. Though these models can generate high quality speech, they are speaker-dependent and generally require a large dictionary of speech examples from that speaker. Alternatively, the current paper utilizes a parametric speech synthesis model, which more easily generalizes to combinations of conditions not seen explicitly in training examples.

In terms of parametric resynthesis, Rethage et al. [9] built an end-to-end model to map noisy audio to explicit models of both clean speech and noise using a WaveNet-like [10] architecture. Compared to this model, our denoising system is much simpler, as it does not require an explicit model of the observed noise in order to converge and needs much less data and time to train. This simplicity comes from using the non-neural WORLD vocoder [11].

©2019 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works.

Fig. 1. Vocoder denoising model

3. MODEL OVERVIEW

Parametric resynthesis consists of two stages: prediction and synthesis as shown in Figure 1. The first stage is to train a prediction model with noisy audio features as input and clean acoustic features as output labels. The second stage is to resynthesize audio using the vocoder from the predicted acoustic features.

We use the WORLD vocoder [11] to transform between acoustic parameters and clean speech waveform.
This vocoder allows both the encoding of speech audio into acoustic parameters and the decoding of acoustic parameters back into audio with very little loss of speech quality. The acoustic parameters are much easier to predict using neural network prediction models than the raw audio. We use the encoding of clean speech to generate our training targets and the decoding of predictions to generate output audio. The WORLD vocoder is incorporated into the Merlin neural network-based speech synthesis system [2], and we utilize Merlin's training targets and losses for our model.

The prediction model is a neural network that takes as input the log mel spectra of the noisy audio and predicts clean speech acoustic features at a fixed frame rate. The WORLD encoder outputs four acoustic parameters: i) spectral envelope, ii) log fundamental frequency (F0), iii) a voiced/unvoiced decision and iv) aperiodic energy of the spectral envelope. All the features are concatenated with their first and second derivatives and used as the targets of the prediction model. There are 60 features for spectral envelope, 5 for band aperiodicity, 1 for F0 and a boolean flag for the voiced/unvoiced decision. The prediction model is then trained to minimize the mean squared error loss between prediction and ground truth. This architecture is similar to the acoustic modelling of statistical TTS. We first use a feed-forward DNN as the core of the prediction model, then we use LSTMs [12] for better incorporation of context. For the feed-forward DNN, we include an explicit context of ±4 neighboring frames.

4. EXPERIMENTS

4.1. Dataset

The noisy dataset is generated by adding environmental noise to the CMU arctic speech dataset [13]. The arctic dataset contains the same 1132 sentences spoken by four different speakers. The speech is recorded in a studio environment.
The sentences are taken from different texts from Project Gutenberg and are phonetically balanced. We add environmental noise from the CHiME-3 challenge dataset [14]. The noise was recorded in four different environments: street, pedestrian walkway, cafe, and bus interior. Six channels are available for each noisy file; we treat each channel as a separate noise recording. We mix clean speech with a randomly chosen noise file starting from a random offset with a constant gain of 0.95. The signal-to-noise ratio (SNR) of the noisy files ranges from −6 dB to 21 dB, with the average being 6 dB. The sentences are 2 to 13 words long, with a mean length of 9 words. We mainly use a female speech corpus ("slt") for our experiments. A male ("bdl") voice is used to test the speaker-dependence of the system. The dataset is partitioned into 1000-66-66 as train-dev-test. Features are extracted with a window size of 64 ms at a 5 ms hop size.

4.2. Evaluation

We evaluate two aspects of the parametric resynthesis system. Firstly, we compare speech synthesis objective metrics like spectral distortion and errors in F0 prediction with a TTS system. This quantifies the performance of our model in transferring prosody from noisy to clean speech. Secondly, we compare the intelligibility and quality of the speech generated by parametric resynthesis (PR) against two speech enhancement systems, a DNN-predicted ratio mask (DNN-IRM) [3] and the oracle Wiener mask (OWM) [4]. The ideal ratio mask DNN is trained with the same data as PR. The OWM uses knowledge of the true speech to compute the Wiener mask and serves as an upper bound on the performance achievable by mask-based enhancement systems.¹
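To make the two mask-based baselines concrete, here is a minimal sketch of how oracle masks of this kind are commonly computed from magnitude spectrograms. It assumes the widely used Wiener-like form |S|²/(|S|²+|N|²) and a ratio mask obtained by raising it to a power `beta`; the paper defers to [3] and [4] for the exact definitions, so the function names, `beta = 0.5`, and the `eps` guard are illustrative assumptions rather than the authors' implementation.

```python
import numpy as np


def oracle_wiener_mask(speech_mag, noise_mag, eps=1e-12):
    """Wiener-like mask |S|^2 / (|S|^2 + |N|^2) for each time-frequency bin.

    Assumed form; it needs the true speech and noise magnitudes, which is
    what makes the mask an oracle. eps only guards against division by zero.
    """
    speech_pow = np.square(speech_mag)
    noise_pow = np.square(noise_mag)
    return speech_pow / (speech_pow + noise_pow + eps)


def ideal_ratio_mask(speech_mag, noise_mag, beta=0.5, eps=1e-12):
    """Ratio mask used as a training target; beta = 0.5 is an assumption."""
    return oracle_wiener_mask(speech_mag, noise_mag, eps) ** beta


def apply_mask(mask, noisy_stft):
    """Scale each bin of the noisy spectrogram; the noisy phase is unchanged."""
    return mask * noisy_stft


# Toy 2x2 magnitude "spectrograms" (rows: frames, columns: frequency bins).
speech = np.array([[1.0, 3.0], [0.0, 2.0]])
noise = np.array([[1.0, 1.0], [2.0, 0.0]])
owm = oracle_wiener_mask(speech, noise)
irm = ideal_ratio_mask(speech, noise)
enhanced = apply_mask(owm, speech + noise)
```

Because such a mask can only attenuate bins of the noisy mixture, bins where the noise is louder than the speech are suppressed along with the speech they contain, which is the kind of degradation that a resynthesis approach does not incur.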
A limitation of the proposed method is that the vocoder is not able to perfectly reproduce clean speech, so we encode and decode clean speech with it in order to estimate the loss in intelligibility and quality attributable to the vocoder alone, which we show is small. We call this system vocoder-encoded-decoded (VED). Moreover, we also measure the performance of a DNN that predicts vocoder parameters directly from clean speech as a more realistic upper bound on our speech denoising system. This is the PR model with clean speech as input, referred to as PR-clean.

¹ All files are available at http://mr-pc.org/work/icassp19/

4.3. TTS objective measures

First, we evaluate the TTS objective measures for PR, PR-clean, and the TTS system. We train the feedforward DNN with 4 layers of 512 neurons each with tanh activation function and the LSTM with 2 layers of width 512 each. We use Adam optimization [15] and early stopping regularization. For TTS system inputs, we use the ground truth transcript of the noisy speech. As both TTS and PR are predicting acoustic features, we measure errors in the prediction via mel cepstral distortion (MCD), band aperiodicity distortion (BAPD), F0 root mean square error (RMSE), Pearson correlation (CORR) of F0, and classification error in voiced-unvoiced decisions (VUV). The results are reported in Table 1. Results from PR-clean show that acoustic parameters that generate speech with very low spectral distortion and F0 error can be predicted from clean speech. More importantly, we see from Table 1 that PR performs considerably better than the TTS system. It is also interesting to note that the F0 measures, RMSE and Pearson correlation, are significantly better in the parametric resynthesis system than in TTS. This demonstrates that it is easier to predict acoustic features, including prosody, from noisy speech than from text. We observe that the LSTM performs best and it is used in our subsequent experiments.

| System | MCD (dB ↓) | BAPD (dB ↓) | RMSE (Hz ↓) | CORR (↑) | VUV (↓) |
|---|---|---|---|---|---|
| PR-clean | 2.68 | 0.16 | 4.95 | 0.96 | 2.78% |
| TTS (DNN) | 5.28 | 0.25 | 13.06 | 0.71 | 6.66% |
| TTS (LSTM) | 5.05 | 0.24 | 12.60 | 0.73 | 5.60% |
| PR (DNN) | 5.07 | 0.19 | 8.83 | 0.93 | 6.48% |
| PR (LSTM) | 4.81 | 0.19 | 5.62 | 0.95 | 5.27% |

Table 1. TTS objective measures for single-speaker experiment: mel cepstral distortion (MCD), band aperiodicity distortion (BAPD), root mean square error (RMSE), voiced-unvoiced error rate (VUV), and correlation (CORR). MCD and BAPD are spectral distortion measures; RMSE, CORR, and VUV are F0 measures. For MCD, BAPD, RMSE, and VUV lower is better (↓), for CORR higher is better (↑).

| Model | Train speakers | Test speaker | MCD (dB ↓) | BAPD (dB ↓) | RMSE (Hz ↓) | CORR (↑) | VUV (↓) |
|---|---|---|---|---|---|---|---|
| PR | slt | slt | 4.81 | 0.19 | 5.62 | 0.95 | 5.27% |
| PR | slt+bdl | slt | 4.91 | 0.20 | 8.36 | 0.92 | 6.50% |
| PR | bdl | bdl | 5.40 | 0.21 | 9.67 | 0.82 | 12.34% |
| PR | slt+bdl | bdl | 5.19 | 0.21 | 10.41 | 0.82 | 12.17% |

Table 2. TTS objective measures for multi-speaker parametric resynthesis models compared to single-speaker models. MCD and BAPD are spectral distortion measures; RMSE, CORR, and VUV are F0 measures.

Evaluating multiple speaker model. Next we train a PR model with speech from two speakers and test its effectiveness on each speaker's dataset. We first train two single-speaker PR models using the slt (female) and bdl (male) data in the CMU arctic dataset. Then we train a new PR model with speech from both speakers. We measure the objective metrics on both datasets to understand how well a single model can model both speakers. These objective metrics are reported in Table 2, from which we observe that the single-speaker models slightly out-perform the multi-speaker models.
On the bdl dataset, however, the multi-speaker model performs better than the single-speaker model in predicting voicing decisions and in MCD. It scores the same in BAPD and F0 correlation, but does worse on F0 RMSE. These results show that the same model can be used for multiple speakers. In future work we will investigate the degree to which a single model can generalize to completely unseen speakers.

4.4. Speech enhancement objective measures

We measure objective intelligibility with short-time objective intelligibility (STOI) [16] and objective quality with perceptual evaluation of speech quality (PESQ) [17]. We compare the clean, noisy, VED, TTS, PR-clean speech for reference. The results are reported in Table 3.

Of the vocoder-based systems, VED shows very high objective quality and intelligibility. This demonstrates that the vocoder is able to produce high fidelity speech when it is fed with acoustic parameters that are exactly correct. The PR-clean system shows slightly lower intelligibility and quality than VED. The TTS system shows very low quality and intelligibility, but this can be explained by the fact that the objective measures compare the output to the original clean signal.

For the speech denoising systems, the oracle Wiener mask performs best, because it has access to the clean speech. While it is an upper bound on mask-based speech enhancement, it does degrade the quality of the speech from the clean by attenuating regions where there is speech present, but the noise is louder. Parametric resynthesis outperforms the predicted IRM in objective quality and intelligibility.

| Model | PESQ | STOI |
|---|---|---|
| Clean | 4.50 | 1.00 |
| VED | 3.39 | 0.93 |
| OWM | 3.31 | 0.96 |
| PR-clean | 2.98 | 0.92 |
| PR | 2.43 | 0.87 |
| DNN-IRM | 2.26 | 0.80 |
| Noisy | 1.88 | 0.88 |
| TTS | 1.33 | 0.08 |

Table 3. Speech enhancement objective metrics: quality (PESQ) and intelligibility (STOI), higher is better for both. Systems in the top section use oracle information about the clean speech. All systems sorted by PESQ.

4.5. Subjective Intelligibility and Quality

Finally we evaluate the subjective intelligibility and quality of PR compared with OWM, DNN-IRM, PR-clean, and the ground truth clean and noisy speech. From 66 test sentences, we chose 12, with 4 sentences from each of three groups: SNR < 0 dB, 0 dB ≤ SNR < 5 dB, and 5 dB ≤ SNR. Preliminary listening tests showed that the PR-clean files sounded quite similar to the VED files, so we included only PR-clean. This resulted in a total of 84 files (7 versions of 12 sentences).

For the subjective intelligibility test, subjects were presented with all 84 sentences in a random order and were asked to transcribe the words that they heard in each one. Four subjects listened to the files. A list of all of the words was given to the subjects in alphabetical order, but they were asked to write what they heard. Figure 2 shows the percentage of words correctly identified averaged over all files. Intelligibility is very high (> 90%) in all systems, including noisy. PR-clean achieves intelligibility as high as clean speech. OWM, PR, and noisy speech had equivalent intelligibility, slightly below that of clean speech. This shows that PR achieves intelligibility as high as the oracle Wiener mask.

The speech quality test follows the Multiple Stimuli with Hidden Reference and Anchor (MUSHRA) paradigm [18]. Subjects were presented with all seven of the versions of a given sentence together in a random order without identifiers, along with reference clean and noisy versions. The subjects rated the speech quality, noise reduction quality, and overall quality of each version between 1 and 100, with higher scores denoting better quality. Three subjects participated and results are shown in Figure 3.
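As an illustration of the intelligibility score reported in Figure 2, the sketch below computes the percentage of reference words found in a listener's transcript and an error bar of twice the standard error, the convention stated in the Figure 2 caption. The paper does not specify how transcripts were matched to references, so the case-insensitive, order-free word matching, the function names, and the toy sentences are all assumptions made for this example.

```python
import math
from collections import Counter


def words_correct(reference, transcript):
    """Count reference words that also appear in the transcript.

    Assumed scoring rule: case-insensitive and order-free, with repeated
    words matched at most as many times as the listener wrote them.
    """
    ref_counts = Counter(reference.lower().split())
    hyp_counts = Counter(transcript.lower().split())
    matched = sum(min(count, hyp_counts[word]) for word, count in ref_counts.items())
    return matched, sum(ref_counts.values())


def percent_correct(pairs):
    """Per-file percentage of correctly identified words (references non-empty)."""
    scores = []
    for reference, transcript in pairs:
        matched, total = words_correct(reference, transcript)
        scores.append(100.0 * matched / total)
    return scores


def mean_and_error_bar(scores):
    """Mean score and an error bar of twice the standard error of the mean."""
    n = len(scores)
    mean = sum(scores) / n
    variance = sum((s - mean) ** 2 for s in scores) / (n - 1)  # sample variance
    return mean, 2.0 * math.sqrt(variance / n)


# Hypothetical (reference, transcript) pairs for one system.
pairs = [
    ("the cat sat", "the cat sat"),
    ("a dog ran home", "a dog home"),
]
scores = percent_correct(pairs)
mean, error_bar = mean_and_error_bar(scores)
```

In the listening test above, the pairs for one system would come from its 12 selected sentences as transcribed by each of the four subjects; a stricter alignment-based word error rate is an equally plausible scoring rule.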
From the results, we see that the PR system achieves higher noise suppression quality than the OWM, demonstrating that the output is noise-free. PR also achieves comparable overall quality to OWM and PR-clean, indicating that its performance is close to the ceiling imposed by the vocoder. This ceiling is demonstrated by the difference between PR-clean and the original clean speech. Note also that the large objective differences between PR and OWM are not present in the subjective results, suggesting that reference-based objective measures may not be accurate for synthetic signals. The PR system achieves better speech quality than the TTS system and better quality in all three measures than DNN-IRM.

Fig. 2. Subjective intelligibility: percentage of correctly identified words. Error bars show twice the standard error. (Systems shown: DNN-IRM, TTS, PR, Noisy, OWM, Clean, PR-clean; axis: words identified, 70 to 100.)

Fig. 3. Subjective quality: higher is better. (Systems shown: Noisy, Hidden Noisy, DNN-IRM, TTS, OWM, PR, PR-clean, Hidden Clean, Clean; ratings: Speech, Noise Sup, Overall; scale 0 to 100.)

5. CONCLUSION

This paper has introduced a speech denoising system inspired by statistical text-to-speech synthesis. The proposed parametric resynthesis system predicts the time-varying acoustic parameters of clean speech directly from noisy speech, and then uses a vocoder to generate the speech waveform. We show that this model outperforms statistical TTS by capturing the prosody of the noisy speech. It provides comparable quality and intelligibility to the oracle Wiener mask by reproducing all parts of the speech signal, even those buried in noise, while still allowing room for improvement as demonstrated by its own oracle upper bound.
Future work will explore the extent of speaker-independence that is achievable with this system and other kinds of inputs like filtered and degraded speech [19], and electrophysiological recordings like EEG [20] and ECoG [21].

6. ACKNOWLEDGMENTS

The authors would like to thank Yuxuan Wang for helpful discussions. This material is based upon work supported by the National Science Foundation (NSF) under Grant IIS-1618061. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the NSF.

7. REFERENCES

[1] D. Wang and J. Chen, "Supervised speech separation based on deep learning: An overview," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 26, no. 10, pp. 1702–1726, Oct. 2018.

[2] Zhizheng Wu, Oliver Watts, and Simon King, "Merlin: An open source neural network speech synthesis system," Proc. SSW, 2016.

[3] Yuxuan Wang, Arun Narayanan, and DeLiang Wang, "On training targets for supervised speech separation," IEEE/ACM Trans. Audio, Speech, and Language Processing, vol. 22, no. 12, pp. 1849–1858, 2014.

[4] Hakan Erdogan, John R. Hershey, Shinji Watanabe, and Jonathan Le Roux, "Phase-sensitive and recognition-boosted speech separation using deep recurrent neural networks," in Proc. ICASSP, 2015, vol. 2015-Augus.

[5] Michael I Mandel, Young-Suk Cho, and Yuxuan Wang, "Learning a concatenative resynthesis system for noise suppression," in Proc. IEEE GlobalSIP Conf, 2014, pp. 582–586.

[6] Soumi Maiti and Michael I Mandel, "Concatenative resynthesis using twin networks," Proc. Interspeech, pp. 3647–3651, 2017.

[7] Soumi Maiti, Joey Ching, and Michael Mandel, "Large vocabulary concatenative resynthesis," in Proc. Interspeech, 2018.

[8] Ali Raza Syed, Trinh Viet Anh, and Michael I Mandel, "Concatenative resynthesis with improved training signals for speech enhancement," in Proc. Interspeech, 2018.

[9] Dario Rethage, Jordi Pons, and Xavier Serra, "A wavenet for speech denoising," in Proc. ICASSP, 2018, pp. 5069–5073.

[10] Aäron van den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol Vinyals, Alex Graves, Nal Kalchbrenner, Andrew W Senior, and Koray Kavukcuoglu, "WaveNet: A generative model for raw audio," in Proc. ISCA SSW, 2016, p. 125.

[11] Masanori Morise, Fumiya Yokomori, and Kenji Ozawa, "WORLD: a vocoder-based high-quality speech synthesis system for real-time applications," IEICE Transactions on Information and Systems, vol. 99, no. 7, pp. 1877–1884, 2016.

[12] Sepp Hochreiter and Jürgen Schmidhuber, "Long short-term memory," Neural Computation, vol. 9, no. 8, pp. 1735–1780, 1997.

[13] John Kominek and Alan W Black, "The CMU arctic speech databases," in Fifth ISCA workshop on speech synthesis, 2004.

[14] J. Barker, R. Marxer, E. Vincent, and S. Watanabe, "The third CHiME speech separation and recognition challenge: Dataset, task and baselines," in Proc. ASRU, 2015, pp. 504–511.

[15] Diederik P. Kingma and Jimmy Ba, "Adam: A Method for Stochastic Optimization," arXiv:1412.6980 [cs], Dec. 2014.

[16] Cees H Taal, Richard C Hendriks, Richard Heusdens, and Jesper Jensen, "A short-time objective intelligibility measure for time-frequency weighted noisy speech," in Proc. ICASSP, 2010, pp. 4214–4217.

[17] Antony W Rix, John G Beerends, Michael P Hollier, and Andries P Hekstra, "Perceptual evaluation of speech quality (PESQ)-a new method for speech quality assessment of telephone networks and codecs," in Proc. ICASSP. IEEE, 2001, vol. 2, pp. 749–752.

[18] "Method for the subjective assessment of intermediate quality level of audio systems," Tech. Rep. BS.1534-3, International Telecommunication Union Radiocommunication Standardization Sector (ITU-R), 2015.

[19] Michael I Mandel and Young Suk Cho, "Audio super-resolution using concatenative resynthesis," in Proc. IEEE WASPAA, 2015.

[20] James A. O'Sullivan, Alan J. Power, Nima Mesgarani, Siddharth Rajaram, John J. Foxe, Barbara G. Shinn-Cunningham, Malcolm Slaney, Shihab A. Shamma, and Edmund C. Lalor, "Attentional selection in a cocktail party environment can be decoded from single-trial EEG," Cerebral Cortex, vol. 25, no. 7, pp. 1697–1706, July 2015.

[21] Hassan Akbari, Bahar Khalighinejad, Jose L Herrero, Ashesh D Mehta, and Nima Mesgarani, "Towards reconstructing intelligible speech from the human auditory cortex," Scientific reports, vol. 9, no. 1, pp. 874, 2019.
