PRADA: Protecting against DNN Model Stealing Attacks

Machine learning (ML) applications are increasingly prevalent. Protecting the confidentiality of ML models becomes paramount for two reasons: (a) a model can be a business advantage to its owner, and (b) an adversary may use a stolen model to find transferable adversarial examples that can evade classification by the original model. Access to the model can be restricted to be only via well-defined prediction APIs. Nevertheless, prediction APIs still provide enough information to allow an adversary to mount model extraction attacks by sending repeated queries via the prediction API. In this paper, we describe new model extraction attacks using novel approaches for generating synthetic queries, and optimizing training hyperparameters. Our attacks outperform state-of-the-art model extraction in terms of transferability of both targeted and non-targeted adversarial examples (up to +29-44 percentage points, pp), and prediction accuracy (up to +46 pp) on two datasets. We provide take-aways on how to perform effective model extraction attacks. We then propose PRADA, the first step towards generic and effective detection of DNN model extraction attacks. It analyzes the distribution of consecutive API queries and raises an alarm when this distribution deviates from benign behavior. We show that PRADA can detect all prior model extraction attacks with no false positives.

💡 Research Summary

The paper “PRADA: Protecting against DNN Model Stealing Attacks” presents a comprehensive study on the threat of model extraction attacks against Deep Neural Networks (DNNs) and proposes a novel detection-based defense mechanism. Model extraction is a critical security concern where an adversary, with only black-box access to a target model via a prediction API (e.g., in MLaaS platforms), aims to steal the model’s functionality by querying it to train a substitute model. This threatens the model owner’s intellectual property and business advantage, and enables the adversary to craft transferable adversarial examples from the substitute to attack the original model.

The work is structured around two main contributions: advancing model extraction attacks and developing a generic defense.

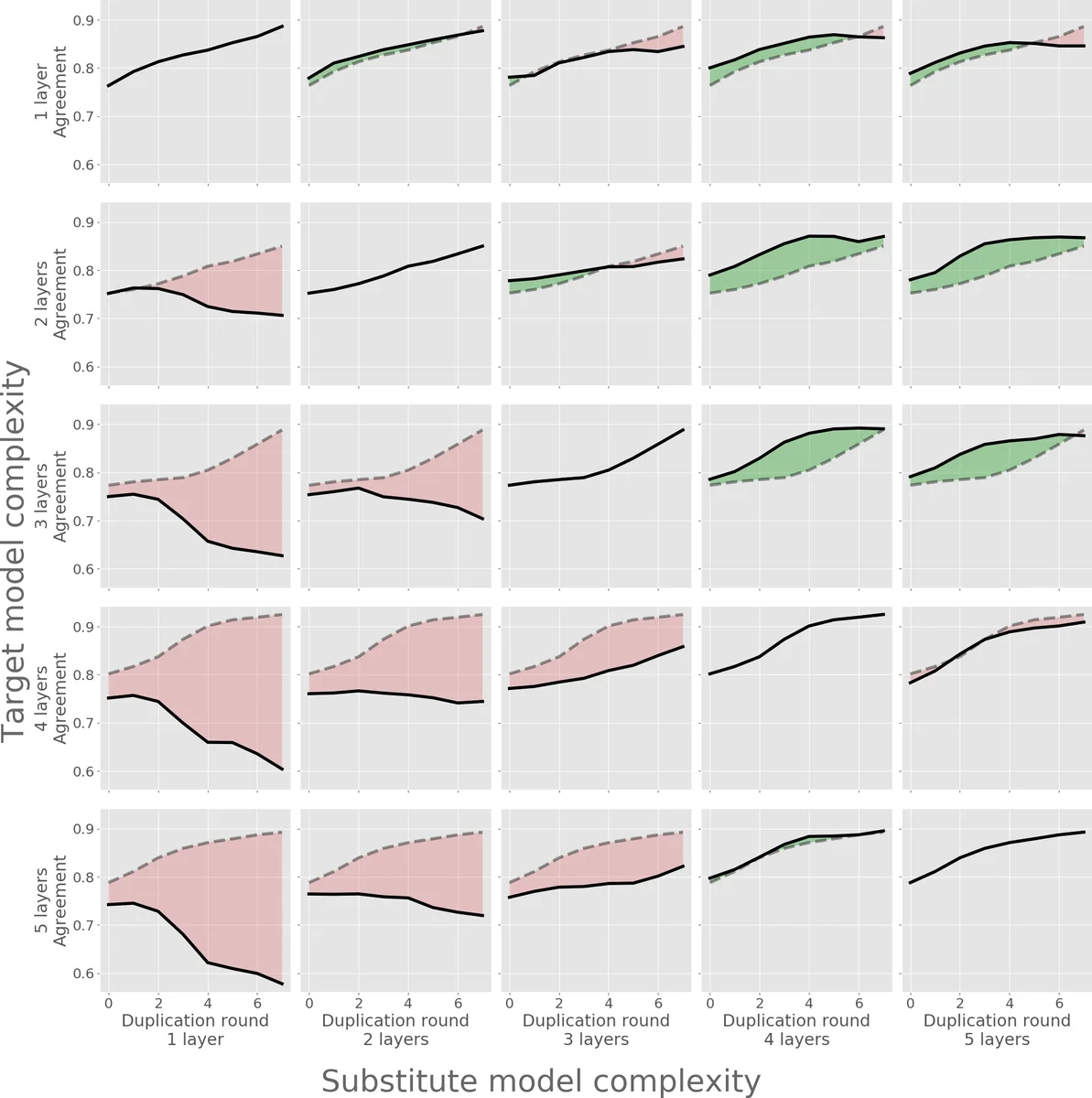

First, the authors introduce new and more effective model extraction attacks. They identify limitations in prior attacks such as PAPERNOT (focused on adversarial example transferability) and TRAMER (effective mainly on simple models). Their enhanced attack framework rests on two key improvements:

1) Cross-validated hyperparameter optimization: Instead of guessing or fixing hyperparameters, the adversary systematically searches for good training parameters (such as learning rate and number of epochs) using only the limited data obtained from queries, which significantly boosts the substitute model's performance.

2) Generalized synthetic data generation: They extend the Jacobian-based Dataset Augmentation (JbDA) technique from PAPERNOT by employing various adversarial example generation methods (FGSM, BIM, JSMA) to create synthetic queries. This approach explores the decision boundaries of the evolving substitute model more effectively.

Evaluations on the MNIST and GTSRB (German Traffic Sign Recognition Benchmark) datasets show that these new attacks substantially outperform prior work, with gains of up to +46 percentage points (pp) in prediction accuracy and +29-44 pp in the transferability of both targeted and non-targeted adversarial examples. The paper also provides key insights, such as the importance of receiving prediction probabilities (not just labels) for better transferability, and the strategic trade-off between using the same architecture as the target model (for higher transferability) and a more complex one (for higher accuracy).
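The cross-validated hyperparameter search can be illustrated with a small sketch. It is not the authors' implementation: it assumes a plain grid search with k-fold cross-validation over a toy logistic-regression substitute, and the names `train`, `k_fold_score`, and `search` are hypothetical. The point is only that the adversary scores each hyperparameter setting on held-out parts of the queried data rather than guessing.

```python
import itertools
import math

def sigmoid(z):
    # Clamp the input so math.exp stays within floating-point range.
    return 1.0 / (1.0 + math.exp(-max(-60.0, min(60.0, z))))

def train(data, labels, lr, epochs):
    """Fit a toy logistic-regression substitute with per-sample gradient steps."""
    w, b = [0.0] * len(data[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(data, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b

def accuracy(model, data, labels):
    """Fraction of samples whose thresholded prediction matches the label."""
    w, b = model
    hits = 0
    for x, y in zip(data, labels):
        pred = 1 if sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5 else 0
        hits += pred == y
    return hits / len(data)

def k_fold_score(data, labels, lr, epochs, k=5):
    """Mean held-out accuracy over k interleaved folds of the queried data."""
    scores = []
    for fold in range(k):
        held = set(range(fold, len(data), k))
        train_idx = [i for i in range(len(data)) if i not in held]
        model = train([data[i] for i in train_idx],
                      [labels[i] for i in train_idx], lr, epochs)
        scores.append(accuracy(model, [data[i] for i in sorted(held)],
                               [labels[i] for i in sorted(held)]))
    return sum(scores) / k

def search(data, labels, grid):
    """Score every (lr, epochs) pair in the grid and return the best one."""
    results = {
        (lr, ep): k_fold_score(data, labels, lr, ep)
        for lr, ep in itertools.product(grid["lr"], grid["epochs"])
    }
    best = max(results, key=results.get)
    return best, results
```

Here `data` and `labels` stand for the samples the adversary has already sent to the prediction API and the answers it received, so the search costs no additional queries.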
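The synthetic query generation can be sketched in the same spirit. The example below assumes a toy setting that is not from the paper: a two-dimensional linear target behind a hypothetical `target_api` that returns labels only, a logistic-regression substitute, and a non-targeted FGSM-style step of fixed size. Each round trains the substitute, perturbs every known sample along the sign of the substitute's loss gradient, and sends the perturbed samples to the target for labeling.

```python
import math

def sigmoid(z):
    # Clamp the input so math.exp stays within floating-point range.
    return 1.0 / (1.0 + math.exp(-max(-60.0, min(60.0, z))))

def target_api(x):
    """Hypothetical black-box target; the adversary sees only the label."""
    return 1 if 2.0 * x[0] - x[1] + 0.5 > 0 else 0

def train_substitute(data, labels, epochs=200, lr=0.5):
    """Fit a logistic-regression substitute with per-sample gradient steps."""
    w, b = [0.0] * len(data[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(data, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b

def fgsm_step(x, y, w, b, step):
    """Shift x by `step` per coordinate along the sign of the loss gradient."""
    p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
    # For cross-entropy on a logistic model, d(loss)/dx = (p - y) * w.
    grad = [(p - y) * wi for wi in w]
    return [xi + step * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]

def extract(seed_samples, rounds=4, step=0.1):
    """Alternate substitute training and synthetic querying; data doubles each round."""
    data = [list(x) for x in seed_samples]
    labels = [target_api(x) for x in data]
    for _ in range(rounds):
        w, b = train_substitute(data, labels)
        synthetic = [fgsm_step(x, y, w, b, step) for x, y in zip(data, labels)]
        data += synthetic
        labels += [target_api(x) for x in synthetic]  # one API query per sample
    return train_substitute(data, labels), len(data)
```

Because each step increases the substitute's loss, the synthetic samples drift toward the substitute's current decision boundary, which is where the target's labels are most informative. Swapping `fgsm_step` for an iterative or targeted variant changes how the boundary is explored without changing the outer loop.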

Second, the paper proposes PRADA, the first generic technique for detecting model extraction attacks. PRADA rests on the premise that the queries issued during an extraction attack are statistically distinguishable from benign API usage. For each client, it tracks the stream of incoming queries and, for every new query, computes the minimum distance to that client's earlier queries; it then analyzes the distribution of these distances. Benign queries are expected to yield distances that closely fit a normal (Gaussian) distribution. Extraction attacks, by contrast, mix natural samples with synthetic ones crafted to lie close to earlier queries (e.g., near decision boundaries), so their distance distribution deviates markedly from normality. PRADA quantifies this deviation with the Shapiro-Wilk normality test and raises an alarm when the test statistic falls below a threshold. In the evaluation, PRADA detects all prior model extraction attacks as well as the new attacks proposed in the paper, with no false positives on benign traffic.
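A detector of this kind can be sketched as follows. This is a simplified illustration under stated assumptions, not PRADA itself: for each new query it records the minimum Euclidean distance to the client's earlier queries, and it measures non-normality with a Kolmogorov-Smirnov-style distance to a fitted normal, chosen here only because it needs nothing beyond the Python standard library. `QueryMonitor`, `threshold`, and `min_samples` are hypothetical names and values, and any per-class bookkeeping is omitted.

```python
import math
from statistics import NormalDist, mean, stdev

def ks_to_fitted_normal(values):
    """Largest gap between the empirical CDF and a normal fitted to `values`."""
    xs = sorted(values)
    n = len(xs)
    sigma = stdev(xs)
    if sigma == 0.0:
        return 1.0  # identical distances: treat as maximally non-normal
    fitted = NormalDist(mean(xs), sigma)
    gap = 0.0
    for i, x in enumerate(xs):
        c = fitted.cdf(x)
        # Compare against the empirical CDF just before and just after x.
        gap = max(gap, abs(c - i / n), abs((i + 1) / n - c))
    return gap

class QueryMonitor:
    """Tracks one client's queries and flags non-normal distance patterns."""

    def __init__(self, threshold=0.15, min_samples=50):
        self.threshold = threshold      # hypothetical alarm level
        self.min_samples = min_samples  # distances needed before testing
        self.queries = []
        self.distances = []

    def observe(self, query):
        """Record a query; return True if the alarm condition holds."""
        if self.queries:
            nearest = min(math.dist(query, q) for q in self.queries)
            self.distances.append(nearest)
        self.queries.append(query)
        if len(self.distances) < self.min_samples:
            return False
        return ks_to_fitted_normal(self.distances) > self.threshold
```

A stream that alternates between far-apart natural samples and synthetic samples placed a small fixed step from earlier ones produces a two-peaked distance distribution, which a fitted normal cannot match. In a deployed detector the threshold would need to be calibrated on benign traffic for the specific model and input domain.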

In conclusion, the paper makes two significant contributions: it demonstrates that model extraction attacks against DNNs are more potent and practical than previously thought, and it provides an effective, generic detection method as a crucial first step towards defending against them. The work underscores the ongoing arms race in machine learning security and sets the stage for future research into more robust defenses and stealthier attacks.
