An Empirical Study of Obsolete Answers on Stack Overflow

Stack Overflow accumulates an enormous amount of software engineering knowledge. However, as time passes, certain knowledge in answers may become obsolete. Such obsolete answers, if not identified or documented clearly, may mislead answer seekers and cause unexpected problems (e.g., using an outdated security protocol). In this paper, we investigate how the knowledge in answers becomes obsolete and identify the characteristics of such obsolete answers. We find that: 1) More than half of the obsolete answers (58.4%) were probably already obsolete when they were first posted. 2) Once an answer is observed to be obsolete, only a small proportion (20.5%) of such answers are ever updated. 3) Answers to questions in certain tags (e.g., node.js, ajax, android, and objective-c) are more likely to become obsolete. Our findings suggest that Stack Overflow should develop mechanisms to encourage the whole community to maintain answers (to avoid obsolete answers) and that answer seekers should carefully go through all information (e.g., comments) in answer threads.

💡 Research Summary

The paper “An Empirical Study of Obsolete Answers on Stack Overflow” investigates how knowledge embedded in Stack Overflow (SO) answers becomes outdated over time and what characteristics distinguish such obsolete answers. The authors begin by motivating the problem: as software frameworks, libraries, and security standards evolve, answers that were once correct can later mislead developers, potentially causing bugs, performance regressions, or security vulnerabilities. To quantify this phenomenon, the researchers collected a large dataset spanning four years (2019-2022), comprising more than 100,000 answers across a wide range of tags. They employed a two-step methodology. First, they automatically extracted answers and associated comments containing keywords such as “obsolete,” “out-dated,” “deprecated,” and similar expressions. Second, two human annotators manually examined each candidate to determine whether the answer truly contained obsolete information and, if so, whether the obsolescence was present at the moment of posting or emerged later as the technology changed.
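The first, automated step amounts to a keyword filter over answer text and comments. The sketch below is a minimal illustration, assuming each answer body or comment is available as a record carrying its answer id; the `Comment` type, the `candidate_answer_ids` function, and the exact keyword pattern are hypothetical and not the authors' implementation. Every match is only a candidate that still needs the manual review of the second step.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword pattern (not the authors' exact list): matches
# "obsolete", "outdated" / "out-dated" / "out dated", "out of date", "deprecated".
OBSOLESCENCE_PATTERN = re.compile(
    r"\b(obsolete|out[\s-]?dated|out of date|deprecated)\b",
    re.IGNORECASE,
)

@dataclass
class Comment:
    answer_id: int  # id of the answer the text belongs to or comments on
    text: str       # answer body or comment text

def candidate_answer_ids(comments):
    """Return the sorted ids of answers whose text or comments mention an
    obsolescence keyword. Matches are candidates for manual annotation."""
    ids = set()
    for c in comments:
        if OBSOLESCENCE_PATTERN.search(c.text):
            ids.add(c.answer_id)
    return sorted(ids)
```

For example, comments reading “This API is deprecated since v2” and “This answer is out-dated” would flag their answers, while “Works for me” would not. A plain regular expression favors recall: it also flags a comment such as “this is not deprecated,” which is one reason the manual step is needed.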

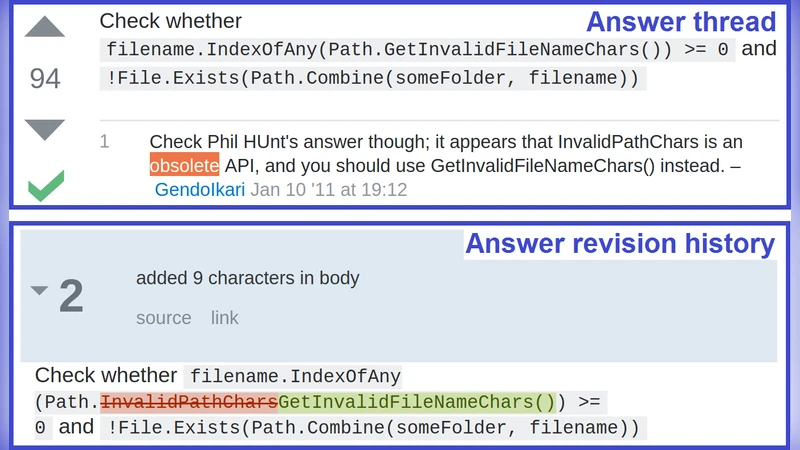

The empirical results reveal three striking patterns. (1) A majority (58.4%) of the identified obsolete answers were probably already obsolete when they were first posted. This suggests that many answerers rely on outdated documentation, legacy tutorials, or personal experience without verifying the current state of the ecosystem. (2) Once an answer has been observed to be obsolete, only a small fraction (20.5%) is ever updated. In other words, roughly four out of five obsolete answers remain unchanged even after community members have pointed out their shortcomings. The authors note that highly voted and frequently viewed answers are especially resistant to change, creating a “knowledge bottleneck” where a single outdated answer can propagate incorrect practices to a large audience. (3) Tag-level analysis shows that answers to questions in fast-moving domains such as node.js, ajax, android, and objective-c are significantly more likely to become obsolete than those in more stable, theory-oriented areas such as algorithms or data structures. This aligns with the rapid release cycles and frequent API deprecations characteristic of those platforms.
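All three findings are proportions, but over different denominators: findings (1) and (2) are computed over obsolete answers only, while a tag-level rate needs every sampled answer in the tag. The sketch below makes that distinction concrete, assuming each annotated answer is stored as a hypothetical `AnnotatedAnswer` record; the field names, functions, and the toy data used to exercise it are illustrative and are not the paper's data or code.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class AnnotatedAnswer:
    tag: str
    is_obsolete: bool
    obsolete_at_posting: bool = False  # meaningful only when is_obsolete is True
    updated_after_flag: bool = False   # meaningful only when is_obsolete is True

def share(flags):
    """Fraction of True values in an iterable of booleans (0.0 if empty)."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else 0.0

def obsolescence_summary(answers):
    """Compute the three kinds of proportions described in the findings."""
    obsolete = [a for a in answers if a.is_obsolete]
    totals = defaultdict(int)
    obsolete_counts = defaultdict(int)
    for a in answers:
        totals[a.tag] += 1
        obsolete_counts[a.tag] += int(a.is_obsolete)
    return {
        # Finding (1): share of obsolete answers already obsolete when posted
        "obsolete_at_posting": share(a.obsolete_at_posting for a in obsolete),
        # Finding (2): share of obsolete answers that were later updated
        "updated_after_flag": share(a.updated_after_flag for a in obsolete),
        # Finding (3): per-tag rate of obsolete answers among all sampled answers
        "rate_by_tag": {t: obsolete_counts[t] / totals[t] for t in totals},
    }
```

With three toy node.js answers (two obsolete) and three toy algorithm answers (one obsolete), the per-tag rates come out as 2/3 and 1/3, while the first two proportions are taken over the three obsolete answers alone.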

To address these issues, the authors propose a two-pronged strategy. On the technical side, they advocate for an automated “obsolescence detection” system that continuously scans code snippets, API calls, and security protocol references within answers. When a mismatch with the latest official specifications is detected, the system would attach a visible warning badge to the answer and optionally suggest a migration path or updated code sample. On the social side, they recommend redesigning the Stack Overflow user interface to make it easier for community members to propose updates. Possible features include an “Update Suggestion” button, version-comparison visualizations, and automated notifications when a previously flagged answer is edited. Moreover, they suggest introducing incentive mechanisms, such as special badges, reputation bonuses, or highlighted “maintained” tags, to reward users who keep answers current.
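The detection side can be pictured as a table of deprecation rules matched against each snippet, with each match producing the text of a warning badge. The sketch below is a minimal, rule-based illustration under stated assumptions: the `DeprecationRule` type, the `RULES` table, and `scan_snippet` are hypothetical, and the three entries are illustrative examples of real deprecations (the Node.js `Buffer()` constructor, jQuery's `jqXHR.success()`, and SSL 2.0/3.0) rather than rules taken from the paper.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class DeprecationRule:
    pattern: str  # regular expression matched against the code snippet
    message: str  # warning badge text, including a suggested migration path

# Illustrative rules only; a real system would need to derive them from
# official release notes and deprecation lists, which this sketch does not do.
RULES = [
    DeprecationRule(
        r"\bnew\s+Buffer\s*\(",
        "Node.js: new Buffer() is deprecated; use Buffer.from() or Buffer.alloc()",
    ),
    DeprecationRule(
        r"\.success\s*\(",
        "jQuery: jqXHR.success() was deprecated in 1.8 and removed in 3.0; use .done()",
    ),
    DeprecationRule(
        # SSLv2 / SSLv3, but not e.g. "SSLv23"
        r"(?<![A-Za-z])SSLv[23](?!\d)",
        "Security: SSL 2.0 and 3.0 are deprecated; use TLS 1.2 or later",
    ),
]

def scan_snippet(code):
    """Return the warning message of every rule that matches the snippet."""
    return [rule.message for rule in RULES if re.search(rule.pattern, code)]
```

Because the rules are plain regular expressions, they will also fire on unrelated code, for example any method named `success`; a production detector would more plausibly parse snippets and compare library versions, which this sketch does not attempt.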

Policy‑level recommendations for the Stack Overflow platform include formalizing an “obsolete” label that becomes part of the search index, encouraging moderators to periodically review high‑traffic tags that evolve quickly, and providing tools for the community to collectively curate and retire answers that are no longer relevant. The authors argue that without such systematic maintenance, Stack Overflow risks becoming a repository of stale knowledge, undermining its core mission as a reliable, up‑to‑date resource for developers worldwide.
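One way to read the proposal that an “obsolete” label become part of the search index is that labeled answers are demoted or filtered at query time. The sketch below assumes search hits arrive with a relevance score and a label flag; the `IndexedAnswer` type, the `OBSOLETE_PENALTY` multiplier of 0.5, and the `hide_obsolete` switch are hypothetical choices for illustration, not Stack Overflow's actual search behavior.

```python
from dataclasses import dataclass

@dataclass
class IndexedAnswer:
    answer_id: int
    relevance: float        # score from the underlying text search
    labeled_obsolete: bool  # hypothetical community-applied "obsolete" label

OBSOLETE_PENALTY = 0.5  # assumed multiplier; a real value would need tuning

def rank(results, hide_obsolete=False):
    """Order search hits by relevance, demoting (or hiding) labeled answers."""
    if hide_obsolete:
        results = [r for r in results if not r.labeled_obsolete]

    def score(r):
        return r.relevance * (OBSOLETE_PENALTY if r.labeled_obsolete else 1.0)

    return [r.answer_id for r in sorted(results, key=score, reverse=True)]
```

Demoting rather than deleting keeps labeled answers reachable, which matters because an answer that is obsolete for current releases may still be correct for users pinned to an older version.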

In conclusion, the study provides robust empirical evidence that Stack Overflow answers can be obsolete from the moment they are posted or become obsolete later as technologies evolve, and that community-driven updates are currently insufficient. By combining automated detection with community incentives and UI enhancements, the platform can better manage knowledge decay, helping developers receive accurate, secure, and modern guidance. This work not only highlights a pressing problem in collaborative Q&A sites but also offers a concrete roadmap for making large-scale technical knowledge bases resilient to the inevitable march of software evolution.
