Factor analysis of dynamic PET images: beyond Gaussian noise

Factor analysis has proven to be a relevant tool for extracting tissue time-activity curves (TACs) in dynamic PET images, since it allows for an unsupervised analysis of the data. Reliable and interpretable results are possible only if considered wit…

Authors: Yanna Cruz Cavalcanti, Thomas Oberlin, Nicolas Dobigeon

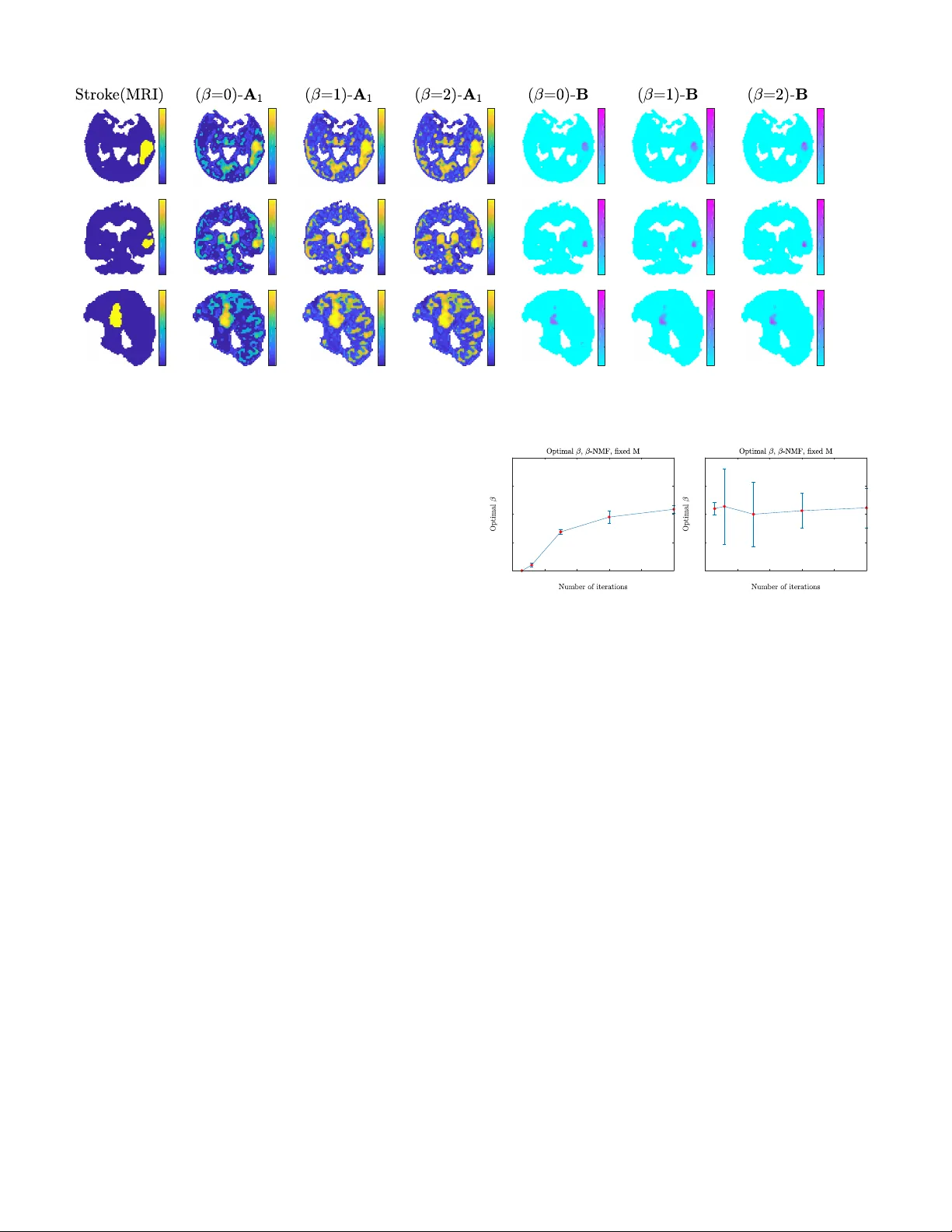

1 F actor analysis of dynamic PET images: be yond Gaussian noise Y anna Cruz Cav alcanti, Member , IEEE , Thomas Oberlin, Member , IEEE , Nicolas Dobigeon, Senior Member , IEEE , C ´ edric F ´ evotte, Senior Member , IEEE , Simon Stute, Maria-Joao Ribeiro, Clovis T auber , Member , IEEE Abstract —Factor analysis has pro ven to be a rele vant tool for extracting tissue time-acti vity curv es (T A Cs) in dynamic PET images, since it allows for an unsupervised analysis of the data. Reliable and interpr etable results are possible only if considered with respect to suitable noise statistics. Howe ver , the noise in reconstructed dynamic PET images is very difficult to characterize, despite the Poissonian natur e of the count-rates. Rather than explicitly modeling the noise distribution, this work proposes to study the relev ance of sev eral divergence measures to be used within a factor analysis framework. T o this end, the β -diver gence, widely used in other applicative domains, is considered to design the data-fitting term in v olved in three differ ent factor models. The performances of the resulting algorithms are ev aluated for differ ent values of β , in a range covering Gaussian, Poissonian and Gamma-distributed noises. The results obtained on two different types of synthetic images and one real image show the interest of applying non-standard values of β to impro ve factor analysis. Index T erms — β -diver gence, unmixing, nonnegative matrix factorization, dynamic PET , factor analysis, NMF , Poisson noise. I . I N T RO D U C T I O N T HANKS to its ability to ev aluate metabolic functions in tissues from the temporal ev olution of a previously injected radiotracer , dynamic positron emission tomography (PET) has become an ubiquitous analysis tool to quantify biological processes. After acquisition and reconstruction, the main time-acti vity curves (T A Cs) (herein called factors ), which represents the concentration of tracer in each tissue and blood over time, can be extracted from PET images for subsequent quantification. For this purpose, factor analysis of dynamic structures (F ADS) has been intensiv ely used [2], [3], further leading to F ADS with nonnegati ve penalizations [4], [5]. Howe ver , these solutions explicitly rely on the assumption that the dynamic PET Part of this work has been presented at the IEEE Int. Conf. Acoust., Speech, and Signal Processing (ICASSP), 2019 [1]. Y . C. Cavalcanti, Th. Oberlin, N. Dobigeon and C. F ´ evotte are with Univ ersity of T oulouse, IRIT/INP-ENSEEIHT , CNRS, 31071 T oulouse Cedex 7, France (e-mail: { Y anna.Cavalcanti, Thomas.Oberlin, Nicolas.Dobigeon, Cedric.Fev otte } @irit.fr). S. Stute is with MIV , CEA, INSERM, Universit ´ es Paris-Sud and Paris-Saclay , Service Hospitalier Fr ´ ed ´ eric Joliot, Orsay , France (e-mail: simon.stute@cea.fr). M.-J. Ribeiro and C. T auber are with UMRS Inserm U930, Univ ersit ´ e de T ours, 37032 T ours, France (e-mail: { maria.ribeiro, clovis.tauber } @uni v-tours.fr). Part of this work has been supported by Coordenac ¸ ˜ ao de Aperfeioamento de Ensino Superior (CAPES), Brazil, and the European Research Council (ERC F ACTOR Y -CoG-681839). noise and the model approximation errors follow Gaussian distributions. T o ov ercome this limitation, sev eral works applied nonnegati ve matrix factorization (NMF) techniques, allowing the Kullback-Leibler (KL) div ergence to be used, which is more appropriate for data corrupted by Poisson noise [6], [7], [8]. NMF with multiplicati ve updates is the approach generally employed since the algorithm is simple and there are less parameters to adjust than in F ADS. Nev ertheless, e ven though the positron decay process can be described by a Poisson distribution [9], the actual noise in reconstructed PET images is not expected to be simply described by Poisson nor Gaussian distributions. Several acquisition circumstances, such as the detector system and electronic components, as well as post-processing corrections for scatter and attenuation, significantly alter the initial Poissonian statistics of the count-rates [10], [11]. Considering the dif ficulties in characterizing the noise properties in PET images, man y works hav e assumed the data to be corrupted by a Gaussian noise [12], [13], [14]. Hybrid distributions, such as Poisson-Gaussian [15] and Poisson-Gamma [16], have been also proposed in an attempt to take into account various phenomena occurring in the data. The work of T e ymurazyan et al. [17] tried to determine the statistical properties of data reconstructed by filtered-back projection (FBP) and iterativ e expectation maximization (EM) algorithms. While FPB reconstructed images were sufficiently described by a normal distribution, the Gamma statistics were a better fit for EM reconstructions. The recent work of Mou et al. [18] further studied the Gamma behavior that can be found on PET reconstructed data. While these works mainly put the emphasis on the noise model, the present study aims at in vestig ating the impact of the div ergence measure to be used for factor analysis of dynamic PET images. This work applies a popular and quite general loss function in NMF , namely the β -div ergence [19], [20]. The β -di ver gence is a family of div ergences parametrized by a unique scalar parameter β . In particular , it has the great advantage of generalizing con v entional loss functions such as the least-square distance, KL and Itakura-Saito di v ergences, respectiv ely corresponding to additive Gaussian, Poisson and multiplicativ e Gamma noise. The current paper will empirically study the influence of β on the factor estimation for three dif ferent methods. First, the standard β -NMF algorithm is applied. Then, an approach that includes a normalization of the factor proportions (herein called β -LMM) is used to provide factors with a physical 2 meaning. Finally , the β -div ergence is also used to generalize the previous model introduced in [21]. Simulations are conducted on two dif ferent sets of synthetic data based on realistic count-rates and one real image of a patient’ s brain. This paper is organized as follows. The considered factor analysis models are described in Section II. Section III presents the β -diver gence as a measure of similarity . Section IV discusses the corresponding factor analysis algorithms able to recover the factors, their corresponding proportions in each v oxel and other parameters of interest. Simulation results obtained with synthetic data are reported in Section V. Experimental results on real data are pro vided in Section VI. A deeper discussion is conducted in Section VII. Section VIII concludes the paper . I I . F AC T O R A N A L Y S I S Let Y be an L × N observation matrix containing a 3D dynamic PET image composed of N voxels acquired in L time-frames. This observation matrix Y can be approximated by an estimated image X ( θ ) according to a factorization model described by P physically interpretable variables θ = [ θ 1 , · · · , θ P ] , i.e., Y ≈ X ( θ ) . (1) The observ ation image is af fected by a noise whose distribution characterization is a highly challenging task, as previously explained. F or this reason, for sake of generality , the description in (1) makes use of an approximation symbol ≈ that generalizes the relation between the factor-dependent estimated image X ( θ ) and the observed data Y . Factor analysis can be formulated as an optimization problem which consists in estimating the parameter vector θ assumed to belong to a set denoted C with possible complementary penalizations R ( θ ) . It is mathematically described as ˆ θ ∈ arg min θ ∈C n D ( Y | X ( θ )) + R ( θ ) o (2) where D ( ·|· ) is a measure of dissimilarity between the observed PET image Y and the proposed model. The choice of this dissimilarity measure will be discussed in Section III. The following paragraphs describe three different factor analysis techniques and detail particular instances of the e xplanatory variable θ under this general formulation. A. Nonnegative Matrix F actorization (NMF) Factorizing a latent (i.e., unobserved) matrix X ∈ R L × N consists in decomposing it into two matrices as X = MA , (3) where M = [ m 1 , ..., m K ] is a L × K matrix of factors and A = [ A 1 , . . . , a N ] is a K × N matrix containing the factor coefficients. In the dynamic PET setting, M is expected to contain the elementary T A Cs characterizing the different kinds of tissues, whereas the coefficient vector a n contains their corresponding proportions in the n th v oxel. In most applicati ve contexts, the number K of elementary T ACs is supposed to be lower than both the number of frames L and the number of pixels N , i.e., K min { L, N } . This choice leads to a low-rank factorization of the matrix X . Moreov er , to provide an additi ve and part-based description of the data, nonnegati ve constraints are assumed for the factors and respecti ve proportions, resulting in the standard NMF formalism [22], [23] A 0 K,N , M 0 L,K , (4) where stands for a component-wise inequality . The formulation of the corresponding NMF optimization problem has been largely considered in the literature [20] and consists in estimating the explanatory variables θ = { M , A } subject to the constraints in (4). B. Linear Mixing Model (LMM) The factorization (3) and constraints (4) that describe a typical NMF can also be en visaged under the light of the LMM widely used in the hyperspectral imagery literature [24]. Additionally to the constraints defined in (4), to associate factors coef ficients with concentrations or proportions, LMM assumes the follo wing sum-to-one constraint A T 1 K = 1 N , (5) where 1 N is the N -dimensional v ector made of ones. The corresponding minimization problem, also widely discussed in the abo ve-mentioned hyperspectral unmixing literature, is formulated as for the NMF , complemented by the additional constraint (5). C. Specific binding linear mixing model (SLMM) The LMM seems to be a rele vant model for dynamic PET data. Although the perfusion in volv ed in the radiotracer diffusion is not linear , in most cases the resulting T AC is approximated by the sum of the pure T A Cs weighted by the factor proportions. But as discussed in [21], in high uptake regions, LMM may not provide a sufficient description of the data. Therefore, a specific binding LMM (SLMM) has been proposed to handle the v ariations in perfusion and labeled molecule concentration affecting the T ACs related to specific binding. It describes the nonlinearity of these T A Cs by an additiv e spatially variant perturbed component that is approximated by a linear expansion over pre viously learned basis elements. By specifically denoting M = [ ¯ m 1 , . . . , m K ] where ¯ m 1 is the nominal specific binding factor , SLMM can be formulated as [21] X = MA + h E 1 A · VB i , | {z } ∆ (6) where “ · ” is the Hadamard point-wise product, E 1 is the matrix [ 1 L, 1 0 L,K − 1 ] , V = [ v 1 , . . . , v N v ] is the L × N v matrix composed of the basis elements used to describe the variability of the specific binding factor (SBF), ( N v L ), and B = [ b 1 , . . . , b N ] is the N v × N matrix composed of internal proportions. If B = 0 , the model in (6) becomes a regular linear mix, as (3). As in [21], to av oid ambiguity in the factor T A Cs due to their strong correlation with the variability elements, the intrinsic variability proportion matrix is constrained to be nonnegati ve B 0 N v ,N . (7) 3 Therefore, the resulting SLMM optimization problem generalizes the NMF and LMM problems where the explanatory parameter v ector is gi ven by θ = { M , A , B } . Under the general formalism (2), the set of constraints is defined by (4), (5) and (7). Moreover , as the SBF variability is only e xpected in the voxels belonging to the region affected by specific binding, B is expected to be zero outside the high-uptake region. Therefore, the spatial sparsity of the related coefficients is enforced by defining the re gularizer in (2) as R ( θ ) , k B k 2 , 1 = N X n =1 k b n k 2 . (8) I I I . D I V E R G E N C E M E A S U R E When analyzing PET data, most of the works in the literature ha ve considered the squared Euclidean distance or the Kullback-Leibler div ergence as the loss function D ( ·|· ) to be used to design the approximation model (1). These choices are intrinsically related to the assumption of Gaussian and Poissonian noises, respecti vely . Ho wev er , as pre viously discussed, the noise encountered in PET data is altered by sev eral external circumstances and parameters, even though its initial count-rates is kno wn to follow a Poisson distribution. Hence, to provide a generalization of these PET noise models, this work proposes to resort to the β -div ergence as the dissimilarity measure underlying the approximation in (1). The β -diver gence first appeared in the works of Basu et al. [19] and Eguchi and Kano [25]. Since then, it has been intensiv ely used, with noticeable successes in the audio literature for music transcription and separation [26], [27], [28]. More precisely , the β -diver gence between two matrices Y and X follows the component-wise separability property D β ( Y | X ) = L X ` =1 N X n =1 d β ( y `,n | x `,n ) (9) and is defined for β ∈ R as d β ( y | x ) = 1 β ( β − 1) ( y β + ( β − 1) x β − β y x β − 1 ) β ∈ R \{ 0 , 1 } y log y x − y + x β = 1 , y x − log y x − 1 β = 0 . (10) The limit cases β = 1 , 0 correspond to the KL and IS div ergences, respecti vely , while β = 2 coincides with the squared Euclidean distance. As an illustration, Fig. 1 compares the loss functions d ( y = 1 | x ) as functions of x for various values of β . For a comprehensi ve discussion of the β -diver gence, the interested readers are invited to consult [29]. Among its interesting properties, the β -diver gence can be related to a wide family of distributions, namely the T weedie distributions, via its corresponding density p ( y | x ) follo wing − log p ( y | x ) = ϕ − 1 d β ( y | x ) + const. (11) where ϕ is a so-called dispersion parameter [30]. In particular , the T weedie distributions encompass a large class of popular distributions, including the Gaussian, Poissonian and Gamma distributions. In other words, choosing the β -di vergence as the 0 0.5 1 1.5 2 2.5 3 3.5 4 0 0.5 1 1.5 Fig. 1. β -div ergence d β ( y | x ) as a function of x with y = 1 and for different values of β . loss function in (2) allows the approximation (1) to stand for a wide range of noise models. For instance, the β -diver gence in the special cases β = 2 , 1 , 0 is related to additiv e Gaussian, Poisson and multiplicativ e Gamma observation noises [20]. As a consequence, thanks to its genericity , the β -div ergence seems to be a relev ant tool to conduct factor analysis when the PET noise is difficult to be characterized. I V . B L O C K - C O O R D I NAT E D E S C E N T A L G O R I T H M The non-con ve x minimization problem stated in (2) is solved through a block-coordinate descent (BCD) algorithm. For each factor analysis model discussed in Section II, the corresponding algorithm iterativ ely updates a latent variable θ i while all the others are kept fixed, allowing for conv ergence tow ards a local solution. The definition of these blocks naturally arises according to the considered latent factor model. The method detailed hereafter leads to multiplicati ve update rules, i.e., consists in multiplying the current variable values by nonneg ativ e terms, thus preserving the nonnegati vity constraint along the iterations. T o av oid undesirable solutions, giv en the non-con ve xity of the problem, the algorithms require proper initialization. The algorithm and corresponding updates used for β -NMF has been introduced in [20]. Therefore, the present paper deriv es only the algorithm associated with the SLMM model, that turns into LMM when fixing B = 0 . The updates are deriv ed following the strategy proposed in [31], while some heuristic rules are inspired by [28]. The principles of these updates are briefly recalled in paragraph IV -A and particularly instantiated for the considered SLMM-based factor model in paragraphs IV -B – IV -D. For conciseness, we only present deriv ations for β ∈ [1 , 2] (the interval where d β ( x | y ) is con ve x with respect to y ) but they can be easily generalized for other values using the methodology described in [20], [32], [31]. The resulting algorithmic procedure is summarized in Algo. 1 where all multiplications (identified by the · symbol), divisions and exponentiations are entry-wise operations, 1 K,L denote a K × L matrix of ones and Γ B , diag[ k b 1 k 1 , · · · , k b 1 k N ] − 1 . Note that, although this algorithmic resolution differs from the one initially proposed in [21], the final results obtained by setting β = 2 are very similar for the same parameter values. 4 Algorithm 1: β -SLMM unmixing Data: Y Input: A 0 , M 0 , B 0 , λ 1 k ← 0 2 ˜ Y ← M 0 A 0 + h E 1 A 0 · VB 0 i 3 while stopping criterion not satisfied do 4 % Update v ariability matrix B k +1 ← B k · " 1 T N v A 1 , : · ( V T ( Y · ˜ X β − 2 )) 1 T N v A 1 , : · ( V T ˜ X β − 1 )+ λ B k Γ B # 1 3 − β 5 ˜ X ← M k A k + h E 1 A k · VB k +1 i 6 % Update f actor T ACs M k +1 2: K ← M k 2: K " ( Y · ˜ X β − 2 ) A T 2: K ˜ X β − 1 A T 2: K # 7 ˜ X ← M k +1 A k + h E 1 A k · VB k +1 i 8 % Update SBF factor proportion A k +1 1 ← A k 1 · " 1 T L (( M 1 1 T N + VB ) · ( Y · ˜ X β − 2 )+ ˜ X β ) 1 T L (( M 1 1 T N + VB ) · ˜ X β − 1 + Y · ˜ X β − 1 ) # 9 % Update other factor proportions A k +1 2: K ← A k 2: K · " M T 2: K ( Y · ˜ X β − 2 )+ 1 K − 1 ,L ˜ X β M T 2: K ˜ X β − 1 + 1 K − 1 ,L ( Y · ˜ X β − 1 ) # 10 k ← k + 1 11 ˜ X ← M k A k + h E 1 A k · VB k i 12 A ← A k 13 M ← M k 14 B ← B k Result: A , M , B A. Majorization-minimization and multiplicative algorithms Our methodology relies on majorization-minimization (MM) and multiplicative algorithms that are common to many NMF settings. Majorization-minimization (MM) algorithms consist in finding a surrogate function that majorizes the original objectiv e function and then computing its minimum. The algorithm iteratively updates each variable θ i giv en all the other variables θ j 6 = i . Hence, the subproblems to be solved can be written min θ i J ( θ i ) = D ( Y | X ( θ )) + R ( θ i ) s.t. θ i ∈ C . (12) By denoting ˜ θ i the state of the latent variable θ i at the current iteration, we first define an auxiliary function G ( θ i | ˜ θ i ) that majorizes J ( θ i ) , i.e., G ( θ i | ˜ θ i ) ≥ J ( θ i ) , and is tight at ˜ θ i , i.e. G ( ˜ θ i | ˜ θ i ) = J ( ˜ θ i ) . The optimization problem (12) is then replaced by the minimization of the auxiliary function. In many NMF problems, canceling the auxiliary function gradient leads to multiplicati ve updates of the form θ i = ˜ θ i " N ( ˜ θ i ) D ( ˜ θ i ) # γ (13) where the functions N ( · ) , D ( · ) and the scalar exponent γ are problem-dependent. A heuristic alternativ e to this algorithm is described in [28]. It consists in decomposing the gradient of the objecti ve function J with respect to (w .r .t.) the variable ˜ θ i as the difference between two nonne gati ve functions, such that ∇ θ i J ( ˜ θ i ) = ∇ + θ i J ( ˜ θ i ) − ∇ − θ i J ( ˜ θ i ) (14) and using (13) with N ( ˜ θ i ) = ∇ − ˜ θ i J ( ˜ θ i ) , (15) D ( ˜ θ i ) = ∇ + ˜ θ i J ( ˜ θ i ) . (16) The heuristic and MM algorithms coincide in many well-known cases [33], [20], [32]. MM guarantees monotonic decrease of the objectiv e function at every iteration. This is not guaranteed by the heuristic alternative, but is often observed in practice [32]. Note that monotonic decrease of the objectiv e function does not automatically implies con v ergence of the parameter iterates, though this is also typically observed in practice. B. Update of the factor T ACs M According to the optimization frame work described abo ve, giv en the current values A and B of the ab undance matrix and the internal proportions, respectively , updating the factor matrix M can be formulated as the minimization subproblem min M J ( M ) = D ( Y | MA + ∆) s.t. M 0 L,K , (17) with ∆ = E 1 A · VB . Follo wing [31], when β ∈ [1 , 2] , the objective function J ( M ) can be simply majorized using Jensen’ s inequality: J ( M ) ≤ X lnk ˜ m lk a kn ˜ x ln d ( y ln | ˜ x ln m lk ˜ m lk ) + δ ln ˜ x ln d ( y ln | ˜ x ln ) | {z } G ( M | ˜ M ) (18) where ˜ x ln = P k ˜ m lk a kn + δ ln is the current state of the model-based reconstructed data. The auxiliary function G ( M | ˜ M ) essentially majorizes the div ergence of the sum by the sum of the diver gences, allowing the optimization of M to be conducted element-by-element. The gradient w .r .t. the element m lk writes ∇ m lk G ( M | ˜ M ) = X n a kn ˜ x ln β − 1 m lk ˜ m lk β − 1 − X n a kn y ln ˜ x β − 2 ln m lk ˜ m lk β − 2 . (19) Thus, minimizing G ( M | ˜ M ) w .r .t. M leads to the following element-wise multiplicati ve update m lk = ˜ m lk " P n a kn y ln ˜ x β − 2 ln P n a kn ˜ x β − 1 ln # γ ( β ) . (20) where γ ( β ) = 1 when β ∈ [1 , 2] . More generally , it can be shown that the update is still valid for β 6∈ [1 , 2] , with γ ( β ) = 1 2 − β for β < 1 and γ ( β ) = 1 β − 1 for β > 2 [31]. 5 C. Update of the factor pr oportions A Giv en the current values M and B of the factor matrix and internal propositions, the update rule for A is obtained by solving min A J ( A ) = D ( Y | MA + h E 1 A · W ) i ) s.t. A 0 K,N , A T 1 K = 1 N , (21) with W = VB . Constructing a MM algorithm that enforces the sum-to-one constraint is not straightforward and we instead resort to the method described in [34], [31], which relies on a change of variable. More precisely , by introducing an auxiliary matrix U , the components a kn of the factor proportion matrix A can be rewritten as a kn = u kn P k u kn , (22) which explicitly ensures the sum-to-one constraint (5). The new optimization problem is then min U J ( U ) s.t. U 0 K,N , (23) with J ( U ) = D ( Y | M u 1 k u 1 k 1 , · · · , u N k u N k 1 + h E 1 u 11 k u 1 k 1 , · · · , u 1 N k u N k 1 · W ) i ) = X ln d ( y ln | X k m lk u kn k u n k 1 + u 1 n k u n k 1 w ln ) . (24) Unfortunately , constructing a MM algorithm for U is not straightforward. As such, we resort to the heuristic alternativ e described in paragraph IV -A. Denoting ˜ x ln = P k 6 =1 m lk ˜ a kn + ˜ a 1 n w ln , this leads to the following multiplicativ e update: u kn = ˜ u kn r kn where r kn = P l ( ˜ x β ln +( m l 1 + w ln ) ˜ x β − 2 ln y ln ) P l ( ( m l 1 + w ln ) ˜ x β − 1 ln + y ln ˜ x β − 1 ln ) , if k = 1 ; P l ( ˜ x β ln + m lk y ln ˜ x β − 2 ln ) P l ( m lk ˜ x β − 1 ln + y ln ˜ x β − 1 ln ) , otherwise. D. Update of the internal variability B Giv en the current states M and A of the factor matrix and factor proportions, respectiv ely , updating B consists in solving min B J ( B ) = D ( Y | MA + h E 1 A · VB ) i ) + λ k B k 2 , 1 s.t. B 0 N v ,N , (25) where the parameter λ controls the trade-of f between the data-fitting term and the spatial sparsity-inducing regularization k B k 2 , 1 . Denoting by ˜ B the current state of B , the model-based reconstructed data using the current estimates is now defined by ˜ x ln = s ln + P i a 1 n v li ˜ b in with s ln = P k m lk a kn . Assuming β ∈ [1 , 2] , Jensen’ s inequalities allows the data fitting term to be majorized as D ( Y | S + [ E 1 A · VB ]) ≤ X ln s ln ˜ x ln d ( y ln | ˜ x ln ) + X i a 1 n v li ˜ b in ˜ x ln d ( y ln | ˜ x ln b in ˜ b in ) | {z } F ( B | ˜ B ) . The auxiliary function associated with J ( B ) can be decomposed as G ( B | ˜ B ) = F ( B | ˜ B ) + λ k B k 2 , 1 . Howe v er , minimizing this auxiliary function w .r .t. B is not straightforward. Follo wing [31], the regularization k B k 2 , 1 is majorized itself by a tangent inequality , thanks to the concavity of the square-root function: k B k 2 , 1 ≤ 1 2 X n k b n k 2 2 k ˜ b n k 2 + k ˜ b n k 2 | {z } H ( B | ˜ B ) . (26) Unfortunately , the resulting auxiliary function is not yet amenable to optimization and our approach again closely follows [32], [31]. The leading monomial of F ( B | ˜ B ) (of degree lower than 2 when β ∈ [1 , 2] ) must be majorized by a quadratic term, matching the quadratic upper bound of the penalty function. After canceling the gradient of the resulting auxiliary function, this leads to the multiplicati ve update b in = ˜ b in a 1 n P l v li y ln ˜ x β − 2 ln a 1 n P l v li ˜ x β − 1 ln + λ ˜ b in k ˜ b n k 2 ξ ( β ) , (27) where ξ ( β ) = 1 3 − β when β ∈ [1 , 2] . Again, the update can be generalized to β ∈ R following [31] and references therein. Experiments showed that dropping the exponent ξ ( β ) still results in a valid algorithm while accelerating con ver gence. V . E X P E R I M E N T S W I T H S Y N T H E T I C D A TA A. Synthetic data generation Simulations have been conducted on synthetic images with realistic count-rate properties [35]. These images have been generated from the Zubal high resolution numerical phantom [36] with v alues deriv ed from real PET images acquired with the Siemens HRR T using the 11 C-PE2I radioligand. The original phantom data is of size 256 × 256 × 128 with a v oxel size of 1 . 1 × 1 . 1 × 1 . 4 mm 3 , and was acquired o ver L = 20 frames of durations that range from 1 to 5 minutes for a 60 minutes total acquisition. 1) Phantom I generation: A clinical PET image with 11 C-PE2I of a healthy control subject has been segmented into regions-of-interest using a corresponding magnetic resonance image. Then av eraged T ACs of each region hav e been extracted and set as the T A C of voxels in the corresponding phantom region. It is worth noting that this supervised segmentation neglects any labeled molecule concentration differences due to possible variability in the specific binding region. Thus, it describes each entire segmented region by a single averaged T AC. This phantom, referred to as Phantom I, has been used to ev aluate the reconstruction error for dif ferent values of β . 2) Phantom II gener ation: T o ev aluate the impact of β on the f actor analysis, a second synthetic phantom, referred to as Phantom II, has been also created as follo ws. Phantom I has been unmixed with the N-FINDR [37] to extract K = 4 factors [38] that correspond to tissues of the brain: specific gray matter , blood or veins, white matter and non-specific gray matter . The corresponding ground truth factor proportions hav e been subsequently set as those estimated by SUnSAL [39]. Then, the SBF as well as the variability dictionary ha ve 6 been generated from a compartment model [40], while the internal variability have been generated by di viding the region concerned by specific binding into 4 subregions with dif ferent mean v ariabilities. Phantom II is finally obtained by mixing these ground truth components according to SLMM in (6). 3) Dynamic PET image simulation: The generation process that takes realistic count rates properties into consideration is detailed in [35]. T o summarize, acti vity concentration images are first computed from the input phantom and T A Cs, applying the decay of the positron emitter with respect to the provided time frames. T o mimic the partial volume effect, a stationary 4mm FWHM isotropic 3D Gaussian point spread function (PSF) is applied, follo wed by a do wn-sampling to a 128 x 128 x 64 image matrix of 2.2 x 2.2 x 2.8 mm 3 vox els. Data is then projected with respect to real crystal positions of the Siemens Biograph T ruePoint T rueV scanner, taking attenuation into account. A scatter distribution is computed from a radial con volution of this signal. A random distrib ution is computed from a fan-sum of the true-plus-scatter signal. Realistic scatter and random fractions are then used to scale all distributions and compute the prompt sinograms. Finally , Poisson noise is applied based on a realistic total number of counts for the complete acquisition. Data were reconstructed using the standard ordered-subset expectation maximization (OSEM) algorithm (16 subsets) including a 4mm FWHM 3D Gaussian PSF modeling as in the simulation. T wo images, referred to as 6it and 50it , are considered for the analysis: the 6th iteration without post-smoothing, and the 50th iteration post-smoothed with a 4mm FWHM 3D Gaussian kernel [41]. A set of 64 independent samples of each phantom were generated to assess consistent statistical performance. B. Compared methods 1) Phantom I: The main objective when using Phantom I is to ev aluate the influence of β on the factor modeling (i.e., by ev aluating the reconstruction error) for images reconstructed with 6 and 50 iterations. It also provides a relev ant comparison between β -NMF and the more constrained solution reco vered by β -LMM. W ithin this experimental setup, β ranges from 0 to 2 . 4 with a step size of 0 . 2 . Factor T A Cs are initialized by vertex component analysis (VCA) [42], while the f actor proportions are initialized either by SUnSAL or randomly , depending on the considered setting (see paragraph V -D). The algorithmic stopping criterion, relating the past and current states of the objectiv e function J , is defined as J ( i − 1) −J ( i ) J ( i − 1) < ε , where the values of ε are reported in T able I. 2) Phantom II: For the sak e of comparison, Phantom II will be analyzed with both the β -SLMM algorithm and its simpler version, β -LMM, which does not take variability into account. The corresponding algorithms are applied for β ∈ { 0 , 1 , 2 } . Since Phantom II exhibits a high variability in the tissue corresponding to the SBF , the pure-pixel assumption considered in VCA may not be enough to capture the complexity of the mixture. For this reason, factor T A Cs hav e been initialized with K-means, which is more robust to outliers. Factor proportions have been initialized either with SUnSAL either randomly , depending on the considered setting (see paragraph V -E). The v ariability matrix B is randomly initialized on both settings. The values for ε in T able I are also v alid in this setting. C. P erformance measures 1) Phantom I: In the first round of e xperiments, the reconstruction error is computed in terms of peak signal-to-noise ratio (PSNR) PSNR( ˆ X ) = 10 log 10 max( X ∗ ) 2 k ˆ X − X ∗ k 2 F (28) where max( X ∗ ) is the maximum value of the ground-truth latent image X ∗ and ˆ X , X ( ˆ θ ) is the image recovered according to the considered f actor model (1) with the estimated latent v ariables ˆ θ . 2) Phantom II: In addition to the PSNR, performances on Phantom II hav e been ev aluated w .r .t. each latent variable by computing the normalized mean square error (NMSE): NMSE( ˆ θ i ) = k ˆ θ i − θ ∗ i k 2 F k θ ∗ i k 2 F , (29) where θ ∗ i and ˆ θ i are the actual and estimated latent variables, respectiv ely . In particular , the NMSE has been computed for the following v ariables: the high-uptake f actor proportions A 1 , the remaining factor proportions A 2: K , the SBF T A C ˜ M 1 , the non-specific factor T A Cs M 2: K and finally , when considering β -SLMM, the internal variability B . D. Results on Phantom I In the first round of simulations, β -NMF and β -LMM algorithms are e v aluated in terms of the reconstruction error (28) for sev eral values of β . T wo cases are considered. The first one considers that the factor T ACs previously estimated by VCA are fix ed. Thus, the algorithm described in Section IV updates only the factor proportions, within a con ve x optimization setting. In this case, the factor proportions hav e been randomly initialized. Within the second and non-con ve x setting, the algorithm estimates both factor T A Cs and proportions where the factor proportions have been initialized using SUnSAL. Note that complementary results are reported in the companion report [43]. 1) β -NMF r esults: Figure 2 sho ws the PSNR mean and corresponding standard de viation obtained on the 6it and 50it images when analyzed with β -NMF . The first line corresponds to the the con ve x estimation setting (i.e., fix ed f actor T ACs) while the non-con ve x framew ork (i.e., estimated factor T A Cs) is reported in the second line. The 6it images show higher PSNRs for the v alues of β ∈ [0 , 0 . 6] in both con ve x and non-con ve x settings. This result indicates a residual noise that is rather between Gamma and Poisson distributed, which is consistent with previous studies from the literature [17], [18]. The best performance PSNR = 25 dB with fixed M is reached for β = 0 , which significantly outperforms the result obtained with the Euclidean diver gence β = 2 commonly adopted in the literature. W ithin a non-con v ex optimization setting, when estimating both factor T A Cs and proportions, 7 PSNR: 6it, -NMF, fixed M 0 0.5 1 1.5 2 2.5 15 20 25 30 35 PSNR (in dB) PSNR: 50it, -NMF, fixed M 0 0.5 1 1.5 2 2.5 15 20 25 30 35 PSNR (in dB) PSNR: 6it, -NMF 0 0.5 1 1.5 2 2.5 15 20 25 30 35 PSNR (in dB) PSNR: 50it, -NMF 0 0.5 1 1.5 2 2.5 15 20 25 30 35 PSNR (in dB) Fig. 2. PSNR mean and standard de viation obtained on the 6it (left) and 50it (right) images after factorization with β -NMF with fix ed (top) and estimated (bottom) factor T ACs o ver 64 samples. the maximum PSNR = 22 . 2 dB is obtained for β = 0 . 6 , followed by β = 0 . 4 . In this case, the difference between the greater and smaller PSNRs is of almost 3.5 dB. As non-conv ex optimization problems are highly sensitive to the initialization, the con ve x frame works sho ws a better mean performance for all values of β , as well as less v ariance among the dif ferent realizations. The reconstruction of the 50it images is clearly less sensiti v e to the choice of the diver gence. Y et, v alues β = 1 and β = 0 . 5 in the con vex and non-con vex settings, respectiv ely , increase the reconstruction PSNR by about 1 dB. This is consistent with prior knowledge about the noise statistics: whereas the nature of noise in the 50it image is still Poissonian, its power is very low due to a higher level of filtering. PSNR: 6it, -LMM, fixed M 0 0.5 1 1.5 2 2.5 15 20 25 30 PSNR (in dB) PSNR: 50it, -LMM, fixed M 0 0.5 1 1.5 2 2.5 15 20 25 30 PSNR (in dB) PSNR: 6it, -LMM 0 0.5 1 1.5 2 2.5 15 20 25 30 PSNR (in dB) PSNR: 50it, -LMM 0 0.5 1 1.5 2 2.5 15 20 25 30 PSNR (in dB) Fig. 3. PSNR mean and standard de viation obtained on the 6it (left) and 50it (right) images after factorization with β -LMM with fixed (top) and estimated (bottom) factor T ACs o ver 64 samples. 2) β -LMM r esults: Figure 3 sho ws the PSNR mean and standard deviation after factorization with β -LMM with fixed (top) and estimated (bottom) factor T A Cs. The results look similar as with the β -NMF for the con v ex case: the factorization of the 6it image is optimal for a value of β around 0 . 5 , which is in agreement with the expected Poisson-Gamma nature of the noise before post-filtering. Factor modeling with β = 0 . 5 is about 5 dB better than the one obtained from the usual Euclidean div ergence relying on Gaussian noise ( β = 2 ). In the non-con v ex case, due to a high dependence on the initialization, β -LMM exhibits a beha vior dif ferent from the con ve x case. In particular , the estimated models seem to be affected by a smaller variance. This may result from the fact that the minimization algorithm likely conv erges to the same critical point. Indeed, for all 64 samples, the f actors and factors proportions ha ve been initialized in the same systematic way , using VCA and SUnSAL respectiv ely . Again, the β parameter has less impact for the 50it image which has been strongly filtered, but the optimal β is still around 1 in the con ve x case. The overall performance reached in the 50it seems to be consistently better for the non-con vex setting when β ≥ 0 . 5 , which may be explained by a good initialization and the joint estimation of the factor T ACs. For the 50it image, once again it is possible to see a more Poisson-like distributed noise with a higher PSNR around 30 dB with β = 1 . In this setting, the difference between the highest PSNR and the lowest one for β = 0 is of more than 3 dB. The highest PSNR for the non-con ve x case is reached with β = 1 and is of 32 dB. The highest PSNR is 9 dB greater than the lo west one obtained with β = 0 when estimating both T A C factors and proportions. Howe ver , the dif ference between the PSNR reached with β = 1 and β = 2 is of less than 0 . 5 dB. All remarks previously made for β -NMF in this case are confirmed with the results of β -LMM. E. Results on Phantom II This paragraph discusses the results of β -SLMM obtained on Phantom II. This experiment considers both the reconstruction error (in terms of PSNR) and the estimation error for each latent variable (in terms of NMSE). The factorization with β -SLMM requires the tuning of parameter λ , which controls the sparsity of the internal variability . In this work, the v alue of this parameter has been empirically tuned to obtain the best possible PSNR result for the different values of β and for the two 6it and 50it images. A priori knowledge on the binding region could also be used to adjust λ , monitoring the accuracy of the method with respect to quantitativ e analysis. The optimal v alue can thus depend on the objecti ve of the subsequent analysis. T wo settings ha ve been considered. In the first one, the f actor T A Cs are fixed to their ground-truth value. Thus, the algorithm described in Section IV updates only the factor proportions and the internal proportions B . In this case, the factor proportions hav e been randomly initialized. In the second setting, the algorithm estimates the factor T A Cs and proportions, as well as the internal variability . In this setting, the factor proportions hav e been initialized using SUnSAL. 8 T ABLE I S T O P P I N G CR I T E R I O N AN D V A R I A B I L I T Y P E N A L I Z A T I O N PA R A M E T E R S . λ ε β =0 β =1 β =2 M fixed M estimated 6it 1 . 3 × 10 − 4 1 . 3 × 10 − 3 3 . 9 × 10 − 3 10 − 5 10 − 4 50it 6 . 8 × 10 − 5 6 . 8 × 10 − 4 2 × 10 − 3 10 − 5 10 − 4 T ABLE II M E A N N M S E O F A 1 , A 2: K A N D A 1 · B A N D P S N R O F RE A S S E M B L E D I M AG E ES T I M ATE D B Y β - L M M AN D β - S L M M W I T H FI X E D M O V E R T H E 6 4 SA M P L E S , F O R D I FF E R E N T VAL U E S OF β . β -LMM β -SLMM β 0 1 2 0 1 2 6it A 1 0.500 0.497 0.491 0.273 0.262 0.274 A 2: K 0.304 0.282 0.290 0.292 0.267 0.276 A 1 · B - - - 0.423 0.439 0.492 PSNR 28.325 28.345 28.224 31.905 31.693 29.825 50it A 1 0.447 0.453 0.452 0.209 0.196 0.204 A 2: K 0.262 0.251 0.268 0.255 0.236 0.258 A 1 · B - - - 0.293 0.305 0.371 PSNR 31.992 32.799 32.180 34.556 36.385 35.178 T able I reports the values of λ for each value of β and each image. The parameters were the same for fixed and estimated M . T able II presents the mean NMSE for A 1 , A 2: K and A 1 · B as well as the PSNR for the 6it and 50it images in the framew ork where M is fixed. The estimation performance of A 1 · B rather than B is e valuated because the partial volume effect (due to the PSF) can be propagated either in v ariable A 1 or in B . Both 6it and 50it images present similar results, with the smallest NMSE of A 1 and A 2: K obtained for β = 1 and the best estimation performance of A 1 · B obtained for β = 0 . Howe ver , the PSNR values sho w that, while 6it reaches its best performance for β = 0 closely followed by β = 1 , 50it achie ves its highest PSNR for β = 1 , followed by β = 2 . This result confirms the previous results on Phantom I, which exhibited a Poisson-Gamma noise distribution for the 6it image and a Poisson-Gaussian noise distribution for the 50it images. T able III shows the mean NMSE for A 1 , A 2: K , ˜ M 1 , M 2: K and A 1 · B in the setting where M is no w estimated with the other latent variables. Unlike the pre vious experiments, the results here are less clear since, depending on the v ariable, different values of β lead to the best results. This could be explained by the strong non-conv exity of the problem, and possibly identifiability issues since 3 sets of latent v ariables need to be estimated. The results in T able III show that β -LMM with β = 2 performs the best for the estimation of A 2: K and M 2: K in the 6it image, and for the estimation of A 2: K in the 50it image. All variables related to specific binding, i.e., A 1 , ˜ M 1 and A 1 · B , are best estimated by β -SLMM with β = 1 . For 50it, due to the high lev el of filtering along with the non-con vexity of this setting, analyzing the results is more dif ficult. It is, howe ver , possible to state that a rather Poisson-Gaussian distributed noise yields the ov erall best mean NMSE of each variable. Regarding the PSNRs, once again, the best PSNR on the 6it image is reached for β = 0 , closely follo wed by β = 1 . Con versely , on the 50it image, the best performance is reached for β = 1 , then followed by β = 0 . As also stated in T ABLE III M E A N N M S E O F A 1 , A 2: K , ˜ M 1 , M 2: K A N D A 1 · B A N D P S N R O F R E A S S E M B L E D I M AG E E S T I M ATE D B Y β - L M M A N D β - S L M M W I T H M E S T I M A T E D OV E R T H E 64 SA M P L E S , F O R D I FF E R E N T V A L U E S O F β . β -LMM β -SLMM β 0 1 2 0 1 2 6it A 1 0.382 0.336 0.327 0.323 0.311 0.313 A 2: K 0.629 0.616 0.608 0.634 0.629 0.628 ˜ M 1 0.300 0.343 0.375 0.007 0.006 0.010 M 2: K 0.356 0.346 0.306 0.398 0.390 0.380 A 1 · B - - - 0.475 0.450 0.686 PSNR 27.046 29.445 30.231 31.301 30.279 27.178 50it A 1 0.482 0.491 0.472 0.441 0.423 0.428 A 2: K 1.018 0.842 0.799 1.055 0.886 0.808 ˜ M 1 0.430 0.294 0.332 0.006 0.004 0.003 M 2: K 0.716 0.896 0.832 0.707 0.811 1.169 A 1 · B - - - 0.382 0.307 0.223 PSNR 31.302 27.335 28.891 31.599 31.775 31.080 the non-con v ex case of Phantom I, the initialization plays a relev ant role when sev eral sets of variables are to be estimated. This explains the differences found for the results with M fixed and estimated. Indeed, the high non-con ve xity of the problem with estimated M may sometimes alter the expected response. Finally note that, in practice, each of the three different models e valuated above can be of interest. The most adapted model depends on the data and the application. NMF and LMM are simpler, thus less sensitiv e to initialization and optimization issues. On the other hand, SLMM is based on a finer modelling, and is e xpected to better e xplain the data when the specific binding factor presents some variability . V I . E X P E R I M E N T S W I T H R E A L D A TA A. Real data acquisition T o enrich our study on the impact of β for dif ferent PET image generation settings, the experiments on real data were conducted with both a different tracer and a different scanner . More precisely , a real dynamic PET image of a stroke subject injected with [18F]DP A-714 was used to ev aluate the behavior of β -SLMM in a real setting. The [18F]DP A-714 is a ligand of the 18-kDa translocator protein (TSPO) and has shown its relev ance as a biomarker of neuroinflammation [44]. The image of interest was acquired sev en days after the stroke with an Ingenuity TF64 T omograph from Philips Medical Systems. The image was reconstructed using the Blob-OS-TF algorithm [45] with 3 iterations, 33 subsets and an additional postfiltering step. It consists of L = 31 frames with durations that ranged from 10 seconds to 5 minutes ov er a total of 59 minutes. Each frame is composed of 128 × 128 × 90 voxels of size 2 × 2 × 2 mm 3 . Each v oxel T AC was assumed to be a mixture of K = 4 types of elementary T ACs: specific binding associated with neuroinflammation, blood, non-specific gray matter and white matter . A supervised segmentation from a registered MRI image provided a ground-truth of the stroke region, containing specific binding. The variability descriptors V were learned by PCA from this ground-truth. The cerebrospinal fluid was se gmented and masked as a 5 th class of a K-means clustering that also provided the initialization of the factors. 9 Factor proportions were initialized with the clustering labels found by K-means. For β -SLMM, the nominal SBF was fixed as the empirical av erage of T A Cs from the stroke region with area-under -the-curve (A UC) between the 5th and 10th percentile. Note that the reconstruction settings typically used on the Ingenuity TF64 tomograph for this kind of imaging protocol produce PET images that are characterized by a relativ ely high lev el of smoothness, inducing spatial noise correlation. B. Results Figure 4 shows, from top to bottom, the factor proportions for gray matter , white matter and blood estimated by β -SLMM for β ∈ { 0 , 1 , 2 } where the stopping criterion ε was defined as 5 × 10 − 4 and the hyperparameter λ was set to 9 × 10 − 2 . In particular , λ was ajusted by searching for a reasonable trade-off between localization/sparsity and intensity of the variability in relev ant brain areas, in particular in the central region that corresponds to the thalamus, which is also e xpected to be affected by the variability . Another possible strategy for choosing λ in a clinical context would be to incorporate arterial sampling for the acquisitions of the first fe w patients of a giv en protocol. V isual analysis suggests that all the algorithms provide a good estimation of both gray and white matters. The results for β = 1 and β = 2 are very similar and it is difficult to state which one achiev es the best performance. This is in agreement with the synthetic results previously presented, that showed very similar estimation errors in case of more post-reconstruction filtering. The result for β = 0 is quite different from the others with more contrasted factor proportions. The sagittal vie w of the blood in the 3 rd ro w has been tak en from the center of the brain. The proposed approach correctly identifies the superior sagittal sinus vein of the brain for all tested β v alues. Howe ver , some clear dif ferences can be observ ed and the blood is also more easily identified for β = 0 than for the other values of β . 0 0.5 1 0 0.5 1 0 0.5 1 0 0.5 1 0 0.5 1 0 0.5 1 0 0.5 1 0 0.5 1 0 0.5 1 Fig. 4. From top do bottom: factor proportions ( A 2: K ) from non-specific gray matter , white matter and blood obtained with β -SLMM for β = 0 , 1 , 2 . Figure 5 confirms these findings, showing T A Cs that are very similar for β ∈ { 1 , 2 } while the T ACs for β = 0 are always a bit apart from the others. The expected initial pick characterizing the blood T A C is more easily identified with β = 1 and β = 2 . On the other hand, for β = 0 the T A C associated with the non-specific gray matter has a lo wer A UC than the two others, further dif ferentiating from the specific binding T A C. SBF 0 2000 4000 Time(s) 0 2 4 6 8 10 Concentration Gray matter 0 2000 4000 Time(s) 0 2 4 6 8 10 Concentration White matter 0 2000 4000 Time(s) 0 2 4 6 8 10 Concentration Blood 0 2000 4000 Time(s) 0 5 10 15 20 Concentration =0 =1 =2 Fig. 5. T ACs corresponding to the specific binding factor , gray matter , white matter and blood. Figure 6 sho ws a manually segmented ground-truth of the stroke zone along with the corresponding factor proportions and v ariability matrices estimated with SLMM. The results obtained with β = 0 sho w a more accurate identification of the stroke zone. Results with β = 1 , 2 are very similar: they localize the thalamus, known for having higher binding of neuroinflammation. But they also reco ver non-specific gray matter in the factor proportion related to specific binding. All values of β show v ariability matrices that are consistent with the stroke area. The results for β ∈ { 1 , 2 } are very similar but β = 2 shows a stronger intensity , while β = 1 shows a more spread result, ev en presenting the influence of the thalamus in the 2 nd row , similarly to β = 0 . V I I . D I S C U S S I O N As previously discussed, different acquisition conditions and reconstruction settings produce PET images with different noise distrib utions. Therefore, the optimal v alue of β , i.e. the value which produces the best decomposition, highly depends on the experimental setting. This can be observed in the abov e-presented e xperiments, where the optimal β was shown to be dri ven by the reconstruction, the model, and even the way we ev aluate the factor decomposition. 10 0 0.5 1 0 0.5 1 0 10 20 30 40 0 0.5 1 0 10 20 30 40 0 0.5 1 0 10 20 30 40 0 0.5 1 0 0.5 1 0 10 20 30 40 0 0.5 1 0 10 20 30 40 0 0.5 1 0 10 20 30 40 0 0.5 1 0 0.5 1 0 10 20 30 40 0 0.5 1 0 10 20 30 40 0 0.5 1 0 10 20 30 40 Fig. 6. From left to right: Transv ersal, coronal and sagital planes (top to bottom) of MRI ground truth of the stroke zone, factor proportions ( A 1 ) from specific gray matter and variability matrices ( B ) obtained with β -SLMM for β = 0 , 1 , 2 . One of the main objectiv es of this paper was to demonstrate the flexibility of the β -di ver gence, and its ability to impro ve the factor analysis ev en when the noise is not well characterized. Howe v er , this can also be seen as a weakness, because ho w to choose β in real situations is not straightforward. As a tentativ e to address this issue, we studied the optimal β v alue for synthetic images generated with the same process described in paragraph V -A3 for 3, 6, 15, 30 and 50 reconstruction iterations (respectively 3it, 6it, 15it, 30it and 50it images). W e run 16 independent simulations for each setting, and e v aluated the optimal β as a function of reconstruction iterations, with and without final post-filtering. Figure 7 shows the optimal β for 3it, 6it, 15it, 30it and 50it, computed o ver 16 samples without a postfiltering step (left) and with a postfiltering step (right). This figure can serve as a reference to choose β in this experimental setting, and it is consistent with the other results presented abov e. T o summarize, without the post-filtering step, a reasonable choice of β is around 0 . 5 for few iterations, and 1 or slightly above for more iterations. W e also remark that the influence of β is less clear when a post-filtering step has been used within reconstruction. This strategy is expected to remain valid for other tracers, other cameras or other reconstruction algorithms. Specific numerical simulations dedicated to the experimental setting can be conducted to obtain a rele vant tuning of the β . Moreov er , throughout this article, the main measure of ev aluation was the PSNR. A more insightful ev aluation could be obtained by separately measuring the final bias and variance for each setting. T o further enlighten the interest of using a correct data-fitting measure, this study w as conducted on Phantom I. The analysis showed that the bias is the most relev ant element for the final PSNR, i.e., it is the measure that is most affected by the use of dif ferent v alues of β (see [43] for more details). 0 10 20 30 40 50 0 0.5 1 1.5 2 0 10 20 30 40 50 0 0.5 1 1.5 2 Fig. 7. Optimal beta computed with the β -NMF algorithm in the conv ex setting for 3it, 6it, 15it, 30it and 50it over: (left) 16 samples without a postfiltering step, (right) 16 samples with a postfiltering step. V I I I . C O N C L U S I O N This paper studied the role of the data-fidelity term when conducting factor analysis of dynamic PET images. W e focused on the beta-div ergence, for which the NMF and LMM decompositions were already proposed in other applicativ e contexts. W e also introduced a new algorithm for computing a f actor analysis allowing for variable specific-binding factor , termed β -SLMM. For all those three models, experimental results sho wed the interest of using the β -div ergence in place of the standard least-square distance. The factor and proportion estimations were indeed more accurate when computed with an suitable value of β . The improv ement was sho wn to be higher when the image had not suffered too strong post-processing corrections. The β -div ergence thus appeared to be a general and flexible framew ork for analyzing dif ferent kind of dynamic PET images. Future works should consider the use of the β -div ergence in the whole image processing pipeline, including the reconstruction from the sinograms and the denoising. This should further improv e the final factor analysis results. While the scope of this paper was to study the relev ance of a flexible di v ergence measure in PET image processing, a deeper ev aluation of the impact of the method on input function estimation and quantification parameter estimation within 11 clinical applications for which arterial sampling is av ailable should also be envisaged in the future. R E F E R E N C E S [1] Y . C. Cavalcanti et al. , “Unmixing dynamic PET images: combining spatial heterogeneity and non-Gaussian noise, ” in Pr oc. IEEE Int. Conf. Acoust., Speech, and Signal Processing (ICASSP) , Brighton, U.K., April 2019. [2] D. C. Barber , “The use of principal components in the quantitative analysis of gamma camera dynamic studies, ” Physics Med. Biol. , vol. 25, no. 2, pp. 283–292, 1980. [3] F . Cav ailloles, J. P . Bazin, and R. Di Paola, “Factor analysis in gated cardiac studies, ” J . Nuclear Med. , vol. 25, pp. 1067–1079, 1984. [4] H.-M. W u et al. , “Factor analysis for extraction of blood time-activity curves in dynamic FDG-PET studies, ” J . Nuclear Med. , vol. 36, no. 9, pp. 1714–1722, Sept. 1995. [5] A. Sitek, E. V . R. Di Bella, and G. T . Gullberg, “F actor analysis with a priori knowledge-application in dynamic cardiac SPECT, ” Physics Med. Biol. , vol. 45, pp. 2619–2638, 2000. [6] J. S. Lee et al. , “Non-neg ative matrix factorization of dynamic images in nuclear medicine, ” in Pr oc. IEEE Nuclear Sci. Symp (NSS) , 2001. [7] P . Padilla et al. , “NMF-SVM based CAD tool applied to functional brain images for the diagnosis of Alzheimers disease, ” in IEEE Tr ans. Med. Imag. , v ol. 31, no. 2, Feb. 2012, pp. 207–216. [8] D. Schulz, “Non-negati ve matrix factorization based input function extraction for mouse imaging in small animal PET - comparison with arterial blood sampling and factor analysis, ” J . Molecular Imag . Dynamics , vol. 02, 2013. [9] L. Shepp and Y . V ardi, “Maximum likelihood reconstruction for emission tomography , ” IEEE Tr ans. Med. Imag. , pp. 113–122, May 1982. [10] N. M. Alpert et al. , “Estimation of the local statistical noise in emission computed tomography , ” IEEE T r ans. Med. Imag. , vol. 1, no. 2, pp. 142–146, Oct. 1982. [11] P . Razifar et al. , “Noise correlation in PET, CT, SPECT and PET/CT data e valuated using autocorrelation function: a phantom study on data, reconstructed using FBP and OSEM, ” BMC Med. Imag. , vol. 5, no. 1, Aug. 2005. [12] J. A. Fessler, “Penalized weighted least-squares image reconstruction for positron emission tomography , ” IEEE T rans. Med. Imag . , v ol. 13, no. 2, pp. 290–300, June 1994. [13] P . G. Coxson, R. H. Huesman, and L. Borland, “Consequences of using a simplified kinetic model for dynamic PET data, ” J. Nuclear Med. , vol. 38, no. 4, pp. 660–667, 1997. [14] M. E. Kamasak, “Clustering dynamic PET images on the Gaussian distributed sinogram domain, ” Comput. Methods Pr o grams Biomed. , vol. 93, pp. 217–227, 2009. [15] M. Slifstein, O. Ma wlawi, and M. Laruelle, “P artial v olume effect correction: Methodological considerations, ” in Physiological Imaging of the Brain with PET , G. A et al. , Eds. San Diego: Academic, 2000, ch. 11, p. 413. [16] Z. Irace et al. , “Bayesian segmentation of chest tumors in PET scans using a Poisson-Gamma mixture model, ” in Pr oc. IEEE-SP W orkshop Stat. and Signal Pr ocess. (SSP) , June 2011, pp. 809–812. [17] A. T eymurazyan et al. , “Properties of noise in positron emission tomography images reconstructed with filtered-backprojection and row-action maximum likelihood algorithm, ” J. Digit. Imag. , vol. 26, no. 3, pp. 447–456, Aug. 2012. [18] T . Mou, J. Huang, and F . O’Sulli van, “The Gamma characteristic of reconstructed PET images: Implications for R OI analysis, ” IEEE T rans. Med. Imag. , v ol. 37, no. 5, pp. 1092–1102, May 2018. [19] A. Basu et al. , “Rob ust and efficient estimation by minimising a density power di ver gence, ” Biometrika , vol. 85, no. 3, pp. 549–559, 1998. [20] C. F ´ evotte and J. Idier, “ Algorithms for nonnegati ve matrix factorization with the β -diver gence, ” Neural Computation , vol. 23, no. 9, pp. 2421–2456, 2011. [21] Y . C. Cav alcanti et al. , “Unmixing dynamic PET images with v ariable specific binding kinetics, ” Medical Image Analysis , v ol. 49, pp. 117–127, 2018. [22] D. D. Lee and H. S. Seung, “ Algorithms for non-negati ve matrix factorization, ” in Pr oc. Neural Info. Pr ocess. Syst. (NIPS) , 2000. [23] ——, “Learning the parts of objects with nonnegati ve matrix factorization, ” Nature , vol. 401, pp. 788–791, 1999. [24] J. M. Bioucas-Dias et al. , “Hyperspectral unmixing ov erview: Geometrical, statistical, and sparse re gression-based approaches, ” IEEE J. Sel. T opics Appl. Earth Observations Remote Sens. , vol. 5, no. 2, pp. 354–379, April 2012. [25] S. Eguchi and Y . Kano, “Robustifying maximum lik elihood estimation, ” T okyo Institute of Statistical Mathematics, T ok yo, Japan, T ech. Rep., June 2001. [26] P . D. O’Grady and B. A. Pearlmutter , “Discovering speech phones using conv olutiv e non-negati ve matrix factorisation with a sparseness constraint, ” Neur ocomputing , v ol. 72, no. 1–3, pp. 88–101, Dec. 2008. [27] D. FitzGerald, M. Cranitch, and E. Coyle, “On the use of the beta div ergence for musical source separation, ” in IET Irish Signals and Systems Confer ence (ISSC 2009) , June 2009, pp. 1–6. [28] C. F ´ evotte, N. Bertin, and J.-L. Durrieu, “Nonnegati ve matrix factorization with the Itakura-Saito diver gence: W ith application to music analysis, ” Neural Computation , vol. 21, no. 3, pp. 793–830, 2009. [29] A. Cichocki and S.-i. Amari, “Families of alpha- beta- and Gamma- div ergences: Flexible and robust measures of similarities, ” Entr opy , vol. 12, no. 6, pp. 1532–1568, 2010. [30] V . Y . F . T an and C. F ´ evotte, “ Automatic rele vance determination in nonnegati ve matrix f actorization with the β -diver gence, ” IEEE T rans. P att. Anal. Mach. Intell. , v ol. 35, no. 7, pp. 1592–1605, July 2013. [31] C. F ´ evotte and N. Dobigeon, “Nonlinear hyperspectral unmixing with robust nonnegati ve matrix factorization, ” IEEE T rans. Imag e Process. , vol. 24, no. 12, pp. 4810–4819, Dec. 2015. [32] Z. Y ang and E. Oja, “Unified developm ent of multiplicativ e algorithms for linear and quadratic nonnegativ e matrix factorization, ” IEEE T ransactions on Neural Networks , vol. 22, pp. 1878 – 1891, Dec. 2011. [33] R. K ompass, “ A generalized divergence measure for nonnegativ e matrix factorization, ” Neural Computation , vol. 19, no. 3, pp. 780–791, 2007. [34] J. Eggert and E. K orner , “Sparse coding and NMF, ” in Pr oc. IEEE Int. Joint Conf . Neural Net. (IJCNN) , vol. 4, July 2004, pp. 2529–2533 vol.4. [35] S. Stute et al. , “ Analytical simulations of dynamic PET scans with realistic count rates properties, ” in Proc. IEEE Nuclear Sci. Symp. Med. Imag. Conf . (NSS-MIC) , Nov . 2015. [36] I. G. Zubal et al. , “Computerized three-dimensional segmented human anatomy , ” Medical physics , vol. 21, no. 2, pp. 299–302, 1994. [37] M. E. W inter , “N-findr: an algorithm for f ast autonomous spectral end-member determination in hyperspectral data, ” in Pr oc. SPIE Imaging Spectr ometry V , M. R. Descour and S. S. Shen, Eds., v ol. 3753, no. 1. SPIE, 1999, pp. 266–275. [38] M. Y aqub et al. , “Optimization of supervised cluster analysis for extracting reference tissue input curves in (R)-[11C]PK11195 brain PET studies, ” J. Cer eb . Blood Flow Metab . , vol. 32, no. 8, pp. 1600–1608, May 2012. [39] J. M. Bioucas-Dias and M. A. T . Figueiredo, “ Alternating direction algorithms for constrained sparse regression: Application to hyperspectral unmixing, ” in Proc. IEEE GRSS W orkshop Hyperspectr al Image SIgnal Pr ocess.: Evolution in Remote Sens. (WHISPERS) , 2010. [40] M. E. Phelps, J. C. Mazziotta, and H. R. Schelbert, P ositron Emission T omography and Autoradio graphy (Principles and Applications for the Brain and Heart) . Rav en, New Y ork, 1986. [41] S. Stute and C. Comtat, “Practical considerations for image-based PSF and blobs reconstruction in PET, ” Physics Med. Biol. , vol. 58, no. 11, p. 3849, 2013. [42] J. Nascimento and J. Dias, “V ertex component analysis: a fast algorithm to unmix hyperspectral data, ” IEEE T rans. Geosci. Remote Sens. , v ol. 43, no. 4, pp. 898–910, April 2005. [43] Y . C. Ca valcanti et al. , “Factor analysis of dynamic PET images: beyond Gaussian noise – Complementary results and supplementary materials, ” University of T oulouse, IRIT/INP-ENSEEIHT , France, T ech. Rep., Dec. 2018. [Online]. A vailable: http://dobigeon.perso.enseeiht.fr/ papers/Cav alcanti T echReport 2018.pdf [44] F . Chauveau et al. , “Comparative e valuation of the translocator protein radioligands 11C-DP A-713, 18F-DP A-714, and 11C-PK11195 in a rat model of acute neuroinflammation, ” J. Nuclear Med. , vol. 50, no. 3, pp. 468–476, 2009. [45] S. Matej and R. M. Lewitt, “Practical considerations for 3-D image reconstruction using spherically symmetric v olume elements, ” IEEE T rans. Med. Imag . , vol. 15, no. 1, pp. 68–78, Feb . 1996.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment