GFD-SSD: Gated Fusion Double SSD for Multispectral Pedestrian Detection

Pedestrian detection is an essential task in autonomous driving research. In addition to typical color images, thermal images benefit detection in dark environments. Hence, it is worthwhile to explore an integrated approach that takes advantage of both color and thermal images simultaneously. In this paper, we propose a novel approach to fusing color and thermal sensors using deep neural networks (DNNs). Current state-of-the-art DNN object detectors range from two-stage to one-stage designs. Two-stage detectors, such as Faster-RCNN, achieve higher accuracy, while one-stage detectors, such as the Single Shot Detector (SSD), run faster. To balance this trade-off, especially for autonomous driving applications, we investigate a fusion strategy that combines two SSDs operating on color and thermal inputs. Traditional fusion methods stack selected features from each channel and adjust their weights. We propose two novel variants of Gated Fusion Units (GFUs) that learn how to combine the feature maps generated by the middle layers of the two SSDs. Leveraging GFUs across the entire feature pyramid, we propose several mixed versions of stack fusion and gated fusion. Experiments are conducted on the KAIST multispectral pedestrian detection dataset. Our Gated Fusion Double SSD (GFD-SSD) outperforms stacked fusion and achieves the lowest miss rate on the benchmark, with an inference speed twice that of Faster-RCNN-based fusion networks.

💡 Research Summary

The paper addresses the challenge of pedestrian detection for autonomous driving by exploiting both visible‑light (color) and thermal imagery, which are complementary especially under low‑light conditions. While most recent multispectral approaches rely on two‑stage detectors such as Faster‑RCNN, they suffer from high computational cost that hinders real‑time deployment. To bridge the accuracy‑speed gap, the authors propose Gated Fusion Double SSD (GFD‑SSD), a one‑stage detector that fuses two parallel SSD networks—one processing color images, the other thermal images—through novel Gated Fusion Units (GFUs).

Two GFU designs are introduced. GFU_v1 concatenates the color and thermal feature maps, applies a separate 3×3 convolution to the concatenation for each modality, passes each output through a ReLU, adds each result back to the corresponding original map, concatenates the two summed maps, and finally reduces dimensionality with a 1×1 convolution. GFU_v2 follows a similar pipeline but applies each 3×3 convolution directly to its own modality before the ReLU and summation, avoiding the initial concatenation step. Both designs replace the sigmoid gating used in prior work with ReLU, which lets the gating coefficients take any non‑negative value and turns the combination into an additive operation rather than a multiplicative one. Because a ReLU does not saturate for positive inputs, this choice is expected to ease gradient flow and stabilize learning.
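To make the two data paths concrete, here is a minimal NumPy sketch of both GFU variants next to a plain stacked-fusion baseline. It follows the description above, not the authors' code: feature maps are single images shaped (channels, height, width), the 3×3 and 1×1 convolutions use a naive zero-padded implementation, and all helper names (`conv2d`, `gfu_v1`, `gfu_v2`, `stack_fusion`, `init_params`) are hypothetical. Whether a nonlinearity follows the final 1×1 convolution is not stated here, so none is applied.

```python
import numpy as np


def conv2d(x, w, b):
    """Zero-padded 'same' 2-D convolution (cross-correlation, as in CNN libraries).

    x: (C_in, H, W); w: (C_out, C_in, k, k) with odd k; b: (C_out,).
    """
    c_out, _, k, _ = w.shape
    _, h, wd = x.shape
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))  # zero padding on H and W only
    out = np.zeros((c_out, h, wd))
    for dy in range(k):
        for dx in range(k):
            # Contract input channels for one kernel tap at every spatial position.
            out += np.einsum("oc,chw->ohw", w[:, :, dy, dx], xp[:, dy:dy + h, dx:dx + wd])
    return out + b[:, None, None]


def relu(x):
    return np.maximum(x, 0.0)


def stack_fusion(f_c, f_t, p):
    """Baseline: concatenate both modalities, then a 1x1 conv back to C channels."""
    return conv2d(np.concatenate([f_c, f_t], axis=0), p["w_j"], p["b_j"])


def gfu_v1(f_c, f_t, p):
    f_g = np.concatenate([f_c, f_t], axis=0)              # (2C, H, W)
    a_c = relu(conv2d(f_g, p["w_c"], p["b_c"]))           # color gate from both modalities
    a_t = relu(conv2d(f_g, p["w_t"], p["b_t"]))           # thermal gate from both modalities
    f_j = np.concatenate([f_c + a_c, f_t + a_t], axis=0)  # additive gating, then concat
    return conv2d(f_j, p["w_j"], p["b_j"])                # 1x1 conv: 2C -> C


def gfu_v2(f_c, f_t, p):
    a_c = relu(conv2d(f_c, p["w_c"], p["b_c"]))           # 3x3 conv on color only
    a_t = relu(conv2d(f_t, p["w_t"], p["b_t"]))           # 3x3 conv on thermal only
    f_j = np.concatenate([f_c + a_c, f_t + a_t], axis=0)
    return conv2d(f_j, p["w_j"], p["b_j"])


def init_params(c, rng, version=1, scale=0.1):
    """Random weights; v1 gates read the 2C-channel concatenation, v2 gates read C."""
    gate_in = 2 * c if version == 1 else c
    return {
        "w_c": scale * rng.standard_normal((c, gate_in, 3, 3)), "b_c": np.zeros(c),
        "w_t": scale * rng.standard_normal((c, gate_in, 3, 3)), "b_t": np.zeros(c),
        "w_j": scale * rng.standard_normal((c, 2 * c, 1, 1)), "b_j": np.zeros(c),
    }


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    f_c = rng.standard_normal((8, 5, 5))  # tiny stand-in for one color SSD feature map
    f_t = rng.standard_normal((8, 5, 5))  # matching thermal SSD feature map
    print(gfu_v1(f_c, f_t, init_params(8, rng, 1)).shape)  # (8, 5, 5)
    print(gfu_v2(f_c, f_t, init_params(8, rng, 2)).shape)  # (8, 5, 5)
```

One consequence of the additive design is easy to check in this sketch: with both gate weights at zero (and zero gate biases), each GFU collapses exactly to stack fusion, so the ReLU gates act as a learned, non-negative correction on top of plain concatenation.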

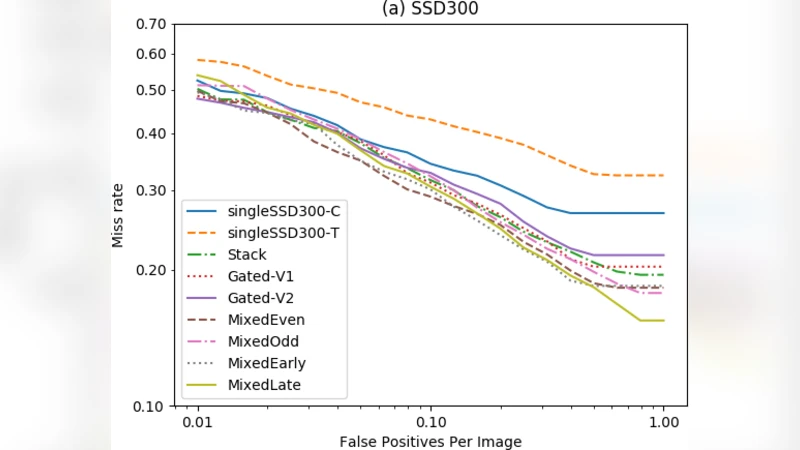

The authors explore two high‑level fusion strategies. In the “full gated” configuration, a GFU is placed on every SSD feature map (conv4_3, conv7, conv8_2, conv9_2, conv10_2, conv11_2), preserving the original number of anchors (8,732) while fully exploiting the gating mechanism. To balance computational load, four “mixed” configurations are also defined: Mixed_Early (gates shallow layers), Mixed_Late (gates deep layers), Mixed_Even (gates even‑indexed layers), and Mixed_Odd (gates odd‑indexed layers). These variants change the total anchor count (ranging from ~8,900 to ~17,300) and allow a trade‑off between speed and accuracy.
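The anchor counts above can be checked with simple arithmetic on the standard SSD300 pyramid, whose six prediction maps have 38, 19, 10, 5, 3 and 1 cells per side with 4, 6, 6, 6, 4 and 4 default boxes per cell, giving 8,732 anchors. The sketch below assumes that a stacked (non-gated) layer keeps the color and thermal maps separate so that each predicts its own anchors, and that "even-indexed" means 1-based positions 2, 4 and 6; both are assumptions, and the configuration names are illustrative.

```python
# Standard SSD300 pyramid: (layer, feature-map side length, default boxes per cell).
SSD300_LAYERS = [
    ("conv4_3", 38, 4), ("conv7", 19, 6), ("conv8_2", 10, 6),
    ("conv9_2", 5, 6), ("conv10_2", 3, 4), ("conv11_2", 1, 4),
]
NAMES = [name for name, _, _ in SSD300_LAYERS]

# Which layers are fused by a GFU in each configuration (assumed mapping).
CONFIGS = {
    "full_gated": set(NAMES),
    "mixed_early": set(NAMES[:3]),    # shallow half gated, deep half stacked
    "mixed_late": set(NAMES[3:]),     # deep half gated, shallow half stacked
    "mixed_even": set(NAMES[1::2]),   # 1-based positions 2, 4, 6 gated (assumption)
    "mixed_odd": set(NAMES[0::2]),    # 1-based positions 1, 3, 5 gated (assumption)
}


def anchor_count(gated):
    """Total anchors: a gated layer emits one fused map; a stacked layer is
    assumed to keep separate color and thermal maps, doubling its anchors."""
    total = 0
    for name, size, boxes in SSD300_LAYERS:
        per_map = size * size * boxes
        total += per_map if name in gated else 2 * per_map
    return total


if __name__ == "__main__":
    for cfg, gated in CONFIGS.items():
        print(f"{cfg:12s} {anchor_count(gated):6d}")
```

Under these assumptions, Mixed_Early and Mixed_Late come out at 8,922 and 17,274 anchors, consistent with the approximate range quoted above, while Mixed_Odd and Mixed_Even land at 11,052 and 15,144. Stacking a shallow layer is expensive because conv4_3 alone contributes 5,776 anchors.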

Training uses a multi‑task loss comprising a classification loss, a localization loss, and L2 regularization, weighted as α:β:γ = 5:10:1 (classification : localization : regularization); the largest weight on the localization term emphasizes precise bounding‑box regression. Online Hard Example Mining (OHEM) is employed to focus learning on difficult negative samples.
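A minimal pure-Python sketch of how the 5:10:1 weighting and hard-example selection fit together. Per-anchor losses are taken as given (plain lists), OHEM is rendered here as SSD-style hard negative mining with a hypothetical 3:1 negative-to-positive cap, and all function names are illustrative; the authors' exact mining rule and loss normalization are not specified in this summary.

```python
def total_loss(l_cls, l_loc, l_reg, alpha=5.0, beta=10.0, gamma=1.0):
    """Weighted multi-task loss using the 5:10:1 ratio described above."""
    return alpha * l_cls + beta * l_loc + gamma * l_reg


def hard_negative_indices(neg_losses, num_pos, neg_pos_ratio=3):
    """Indices of the highest-loss negative anchors, at most neg_pos_ratio * num_pos.

    The 3:1 negative:positive cap is the original SSD default and an assumption here.
    """
    k = min(len(neg_losses), neg_pos_ratio * num_pos)
    ranked = sorted(range(len(neg_losses)), key=lambda i: neg_losses[i], reverse=True)
    return sorted(ranked[:k])


def classification_loss(pos_losses, neg_losses):
    """Sum the losses of all positives plus the mined hard negatives,
    normalized by the number of positives (as in SSD)."""
    keep = hard_negative_indices(neg_losses, len(pos_losses))
    n = max(len(pos_losses), 1)
    return (sum(pos_losses) + sum(neg_losses[i] for i in keep)) / n


if __name__ == "__main__":
    pos = [0.7, 0.4]                                  # per-anchor cross-entropy, positives
    neg = [0.05, 2.1, 0.3, 1.2, 0.9, 0.01, 0.6, 1.8]  # per-anchor cross-entropy, negatives
    print(hard_negative_indices(neg, len(pos)))       # [1, 2, 3, 4, 6, 7]
    l_cls = classification_loss(pos, neg)
    print(total_loss(l_cls, l_loc=0.5, l_reg=0.1))
```

Capping negatives relative to positives keeps the overwhelming number of easy background anchors from dominating the classification term, which is the imbalance OHEM is meant to address.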

Experiments are conducted on the KAIST multispectral pedestrian benchmark, which contains 95.3k aligned color‑thermal image pairs (≈50k for training, 45k for testing). The authors report log‑average miss rate (logMR) as the primary metric. GFD‑SSD with the Mixed_Even configuration achieves the lowest miss rate among all tested fusion schemes, outperforming the conventional stacked‑fusion baseline by roughly 1.2 percentage points. Moreover, because a gated layer produces a single fused feature map and therefore adds no anchors, the cost of fusion stays modest, and inference is roughly twice as fast as the Faster‑RCNN‑based two‑stage fusion models reported in prior work. Qualitative results show that the gating mechanism effectively suppresses noisy or unreliable thermal features while preserving strong cues from both modalities, leading to robust detection in night and twilight scenes.
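The log-average miss rate can be sketched as follows, assuming one common formulation of the Caltech-style protocol used for KAIST: sample the curve of miss rate versus false positives per image (FPPI) at nine points spaced evenly in log space between 10^-2 and 10^0, then take their geometric mean. The edge handling (a miss rate of 1.0 where the curve has no point yet, and a 1e-10 floor) is an assumption, and the official evaluation code may interpolate differently; the function name and example curve are hypothetical.

```python
import math


def log_average_miss_rate(fppi, miss_rate, num_points=9, lo=-2.0, hi=0.0):
    """Geometric mean of the miss rate sampled at FPPI values 10^lo .. 10^hi.

    fppi, miss_rate: points of the miss-rate-vs-FPPI curve, sorted by ascending FPPI.
    """
    refs = [10 ** (lo + (hi - lo) * i / (num_points - 1)) for i in range(num_points)]
    sampled = []
    for r in refs:
        # Miss rate at the largest FPPI not exceeding the reference point;
        # if the curve starts to the right of r, assume a miss rate of 1.0.
        candidates = [m for f, m in zip(fppi, miss_rate) if f <= r]
        sampled.append(candidates[-1] if candidates else 1.0)
    # Averaging in log space; the floor avoids log(0) for a perfect detector.
    return math.exp(sum(math.log(max(m, 1e-10)) for m in sampled) / num_points)


if __name__ == "__main__":
    fppi = [0.005, 0.02, 0.1, 0.4, 1.0]    # hypothetical curve, ascending FPPI
    miss = [0.80, 0.60, 0.40, 0.30, 0.20]  # miss rate falls as more FPPI is allowed
    print(f"logMR = {log_average_miss_rate(fppi, miss):.3f}")
```

Lower is better: on the 0 to 1 scale used here, a 1.2 percentage point improvement corresponds to a logMR drop of 0.012.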

The paper’s contributions are threefold: (1) design of two GFU variants that learn adaptive, modality‑specific weighting without restrictive sigmoid gating; (2) introduction of a full gated architecture and four mixed‑fusion variants that can be tailored to hardware constraints; (3) demonstration that a one‑stage multispectral detector can achieve state‑of‑the‑art accuracy while delivering real‑time performance suitable for autonomous driving.

Limitations include reliance on a VGG16 backbone, which is not the most efficient modern architecture; the authors do not evaluate the GFU with lightweight backbones such as MobileNet or EfficientNet, leaving open the question of further speed gains. Additionally, robustness to thermal sensor miscalibration, weather‑induced thermal noise, and domain shift (e.g., different camera rigs) is not examined. Future work could extend GFU to other sensor modalities (e.g., LiDAR), integrate more recent backbone networks, and explore adaptive gating conditioned on environmental cues.
