Differentially Private Consensus-Based Distributed Optimization

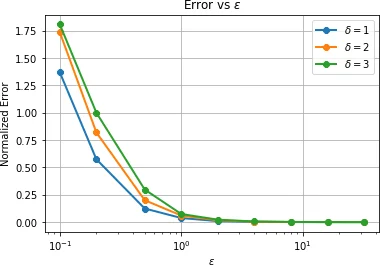

Data privacy is an important concern in learning when datasets contain sensitive information about individuals. This paper considers consensus-based distributed optimization under data privacy constraints. Consensus-based optimization involves a set of computational nodes arranged in a graph, each with a local objective that depends on its local data; in every step, each node takes a linear combination of its neighbors’ messages and then takes a gradient step. Since the algorithm requires exchanging messages that depend on local data, private information is leaked at every step. Taking $(\epsilon, \delta)$-differential privacy (DP) as our criterion, we consider the strategy where the nodes add random noise to their messages before broadcasting them, and show that the method achieves convergence with a bounded mean-squared error while satisfying $(\epsilon, \delta)$-DP. By relaxing the more stringent $\epsilon$-DP requirement in previous work, we strengthen a known convergence result in the literature. We conclude the paper with numerical results demonstrating the effectiveness of our methods for mean estimation.

💡 Research Summary

The paper addresses the problem of performing consensus‑based distributed optimization while preserving the privacy of each node’s local data. In the considered setting, N computational nodes are connected through an undirected, connected graph and aim to minimize a global objective f(x)=∑_{i=1}^N f_i(x) where each f_i depends on sensitive data stored locally at node i. Direct exchange of local estimates would reveal private information, so the authors adopt (ε,δ)-differential privacy (DP) as the privacy metric and propose to add Gaussian noise to the messages broadcast by each node.

The algorithm is divided into two stages. In Stage I (the first T iterations) each node performs a consensus step followed by a projected gradient descent step, but before broadcasting its local estimate it adds independent zero‑mean Gaussian noise n_i(t)∼𝒩(0,M_t^2 I). The weight matrix W used for consensus is doubly stochastic with spectral gap β<1. After T iterations the algorithm switches to Stage II, where nodes stop taking gradient steps and stop adding noise, and perform only pure consensus updates to drive all local copies to a common value. This two‑phase design confines the data‑dependent, and therefore privacy‑leaking, gradient updates to a finite horizon while still allowing the network to reach agreement.
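The two stages can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation. It assumes a ring graph with equal weights of 1/3, and the mean-estimation objective f_i(x)=½‖x−a_i‖², whose global minimizer is the average of the a_i. It also assumes that the gradient is evaluated at the mixed point and that each node mixes its own noisy message along with its neighbors'. The function names, the Stage II length `T2`, and the noise and step-size schedules are hypothetical, and the noise is not calibrated to any particular (ε,δ).

```python
import numpy as np

def ring_weights(n):
    """Doubly stochastic W for a ring: weight 1/3 on self and on each neighbor."""
    if n < 3:
        raise ValueError("ring construction needs at least 3 nodes")
    W = np.zeros((n, n))
    for i in range(n):
        for j in (i - 1, i, i + 1):
            W[i, j % n] = 1.0 / 3.0
    return W

def project_ball(X, radius):
    """Project each row of X onto the Euclidean ball of the given radius."""
    norms = np.linalg.norm(X, axis=1, keepdims=True)
    return X * np.minimum(1.0, radius / np.maximum(norms, 1e-12))

def two_stage_consensus(data, W, T, T2, noise_std, step, radius, rng):
    """Stage I: noisy consensus + projected gradient step. Stage II: pure consensus."""
    n, d = data.shape
    X = np.zeros((n, d))
    for t in range(T):
        # Each node perturbs its estimate before broadcasting it.
        messages = X + rng.normal(0.0, noise_std(t), size=(n, d))
        mixed = W @ messages                 # consensus step
        grad = mixed - data                  # gradient of 0.5 * ||x - a_i||^2
        X = project_ball(mixed - step(t) * grad, radius)
    for _ in range(T2):
        X = W @ X                            # no noise, no gradient
    return X

rng = np.random.default_rng(0)
data = rng.uniform(-1.0, 1.0, size=(8, 2))   # one private vector a_i per node
W = ring_weights(8)
X_final = two_stage_consensus(
    data, W, T=200, T2=200,
    noise_std=lambda t: 0.2 * 0.9 ** t,      # hypothetical decaying M_t
    step=lambda t: 1.0 / (t + 2),            # hypothetical diminishing eta_t
    radius=2.0, rng=rng,
)
print("max abs error vs. true mean:", np.abs(X_final - data.mean(axis=0)).max())
```

Because W is doubly stochastic, Stage II preserves the network average while shrinking the disagreement between nodes, so every row of `X_final` ends up at (nearly) the same noisy estimate of the mean.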

A central technical contribution is the direct analysis of the algorithm’s conditional L₂‑sensitivity. Lemma 3 shows that, under the bounded‑gradient assumption (‖∇f_i(x)‖≤G for all x), the sensitivity at iteration t is Δ(t)=2η_t G, where η_t is the step size. Using this exact sensitivity, Theorem 1 derives a tight privacy condition that does not rely on generic composition theorems.
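A bound of the form 2η_t G can be motivated as follows. This is a sketch under assumptions that are not spelled out in the summary: the Stage I update is $x_i(t+1) = P_{\mathcal{X}}\big[z_i(t) - \eta_t \nabla f_i(z_i(t))\big]$, where $z_i(t)$ is the mixed point computed from the received messages and $P_{\mathcal{X}}$ is the projection onto a convex set $\mathcal{X}$. Consider two adjacent datasets that differ only in node $i$'s data, giving local objectives $f_i$ and $f_i'$. Conditioned on the same message history, $z_i(t)$ is identical in both cases, so with $x_i'(t+1)$ denoting the update under $f_i'$:

$$
\|x_i(t+1) - x_i'(t+1)\| \le \eta_t \,\|\nabla f_i(z_i(t)) - \nabla f_i'(z_i(t))\|
$$

$$
\le \eta_t \big( \|\nabla f_i(z_i(t))\| + \|\nabla f_i'(z_i(t))\| \big) \le 2 \eta_t G .
$$

The first inequality uses the fact that projection onto a convex set is nonexpansive, the second is the triangle inequality, and the last applies the gradient bound $G$ to each term.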

