Periocular Recognition in the Wild with Orthogonal Combination of Local Binary Coded Pattern in Dual-stream Convolutional Neural Network

In spite of the advancements made in the periocular recognition, the dataset and periocular recognition in the wild remains a challenge. In this paper, we propose a multilayer fusion approach by means of a pair of shared parameters (dual-stream) conv…

Authors: Leslie Ching Ow Tiong, Andrew Beng Jin Teoh, Yunli Lee

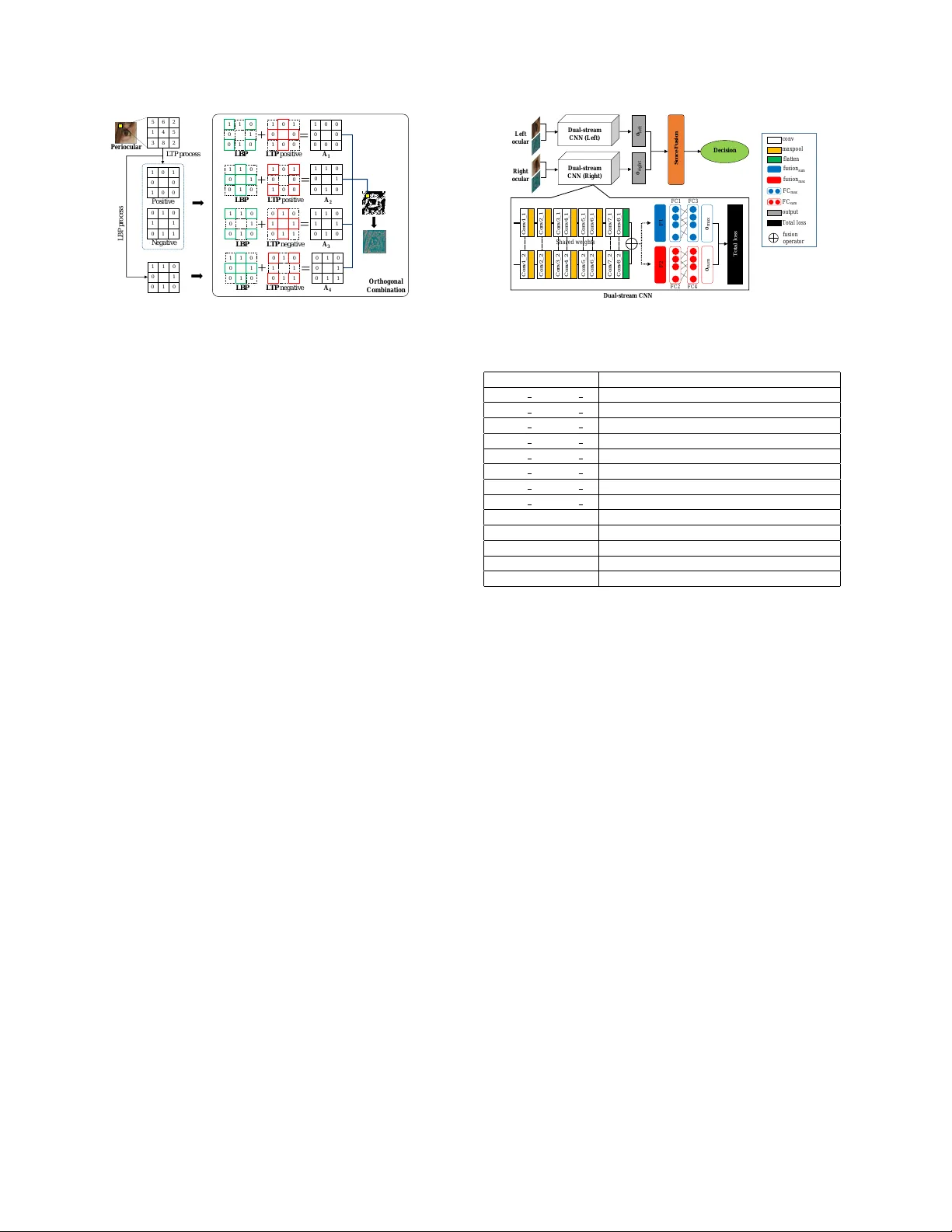

Leslie Ching Ow Tiong, KAIST, 291 Daehak-ro, Yuseong-gu, Daejeon 34141, Republic of Korea (tiongleslie@kaist.ac.kr)

Andrew Beng Jin Teoh, Yonsei University, 50 Yonsei-ro, Sinchon-dong, Seodaemun-gu, Seoul, Republic of Korea (bjteoh@yonsei.ac.kr)

Yunli Lee, Sunway University, 5 Jln. Universiti, Bdr. Sunway, 47500 Petaling Jaya, Selangor, Malaysia (yunlil@sunway.edu.my)

Abstract

In spite of the advancements made in periocular recognition, datasets for periocular recognition in the wild, and the recognition task itself, remain a challenge. In this paper, we propose a multilayer fusion approach by means of a pair of shared-parameter (dual-stream) convolutional neural networks, whose two streams accept RGB data and a novel colour-based texture descriptor, namely Orthogonal Combination-Local Binary Coded Pattern (OC-LBCP), for periocular recognition in the wild. Specifically, two distinct late-fusion layers are introduced in the dual-stream network to aggregate the RGB data and OC-LBCP. The network thus benefits from the new features produced by the late-fusion layers and gains accuracy. We also introduce and share a new dataset for periocular recognition in the wild, namely the Ethnic-ocular dataset, for benchmarking. The proposed network has also been assessed on one publicly available dataset, namely UBIPr. The proposed network outperforms several competing approaches on these datasets.

1. Introduction

In recent years, periocular recognition has been gaining attention from the biometrics community due to its promising recognition performance [8]. Periocular usually refers to the region around the eyes, preferably including the eyebrow. An early study of periocular recognition was done by Park et al. [19], which demonstrated promising results in controlled environments.
The authors utilised several texture descriptors such as Histogram of Oriented Gradients (HOG), Local Binary Pattern (LBP), and Scale Invariant Feature Transform (SIFT), followed by score fusion for the decision. Several studies such as [15] also focus on using texture descriptors and learning models for periocular recognition; they combined several texture descriptors for a better feature representation. Another work [9] convolved the HOG and LBP features generated from periocular images with Gabor filters, followed by concatenation. Although these existing approaches achieved decent recognition performance, they were less robust to "in the wild" variations such as pose alignment, illumination, glasses, and occlusions.

Since 2012, the Convolutional Neural Network (CNN) has attracted rapidly growing attention for learning from high-dimensional data in the computer vision domain [13]. CNNs with colour-based texture descriptors have been successfully employed in numerous vision applications, such as emotion recognition [14] and texture classification [7]. Both [14] and [7] demonstrated that colour texture provides complementary information that improves the feature representations extracted by a CNN. Their analyses showed that texture descriptors are expressive enough to represent an object, especially when its shape cannot be seen clearly.

For periocular recognition in controlled environments, [12] exploited two CNNs, which extract comprehensive periocular information from the left and right oculars. [21] and [29] focused on feature extraction based on periocular regions of interest with CNNs. Both networks exploit prior knowledge by discarding unnecessary information to enhance CNNs in periocular recognition.
However, these networks do not perform well in the wild, for example when the periocular images are misaligned or do not fully include the eyebrows or the ocular region. A very recent work [25] proposed a multimodal CNN, namely the multi-abstract fusion CNN, where the feature fusion for iris, face and fingerprint takes place at the fully-connected (fc) layer. A fusion layer is designed to fuse the different levels of fc layers as multi-feature representations using RGB data only. This restriction to RGB data may limit the information available to the network.

In this paper, we investigate a fusion approach with a dual-stream CNN whose two streams accept RGB data and a novel colour-based texture descriptor, namely Orthogonal Combination-Local Binary Coded Pattern (OC-LBCP), for periocular recognition in the wild. Both networks share their parameters, and a late fusion takes place at the last convolutional (conv) layer before the fc layer. OC-LBCP exploits the colour information in the texture representation and can extract features with higher discriminative capability.

This paper also attempts to address the challenge of periocular recognition in the wild, which is not well covered by the existing datasets [2, 18, 22] or by the research community [12, 25]. The challenges are associated with the large differences between periocular images due to sensor location, pose alignment, level of illumination, occlusions, and other factors. In particular, the appearance of periocular regions altered by cosmetic products or plastic surgery may severely degrade accuracy. Most of the existing periocular datasets, such as the CASIA-iris distance dataset [2] and the UBIPr dataset [18], were collected randomly under controlled environments and cover a limited range of ethnicities.
[23] also revealed that each ethnic group has a unique periocular shape and a unique skin texture in the periocular region.

We therefore create a new dataset, namely Ethnic-ocular (available at: https://www.dropbox.com/sh/vgg709to25o01or/AAB4-20q0nXYmgDPTYdBejg0a?dl=0), by collecting periocular region images in the wild to validate the proposed method. The dataset is built from five ethnic groups, namely African, Asian, Latin American, Middle Eastern, and White. Our dataset is thus designed to avoid an unbalanced selection, as the configuration of the ocular region differs among ethnicities.

The contributions of this paper are as follows:

• We investigate periocular recognition in the wild with the combination of RGB data and the proposed colour-based texture descriptor OC-LBCP in a dual-stream CNN.

• To offer a better feature representation for periocular recognition in the wild, two distinct late-fusion layers are introduced in the dual-stream CNN. The role of the late-fusion layers is to aggregate the RGB data and OC-LBCP. The dual-stream CNN thus benefits from the new features of the late-fusion layers and delivers better accuracy.

• A new periocular dataset, namely the Ethnic-ocular dataset, is created, containing periocular images in the wild. The images are collected across highly uncontrolled subject-camera distances, low resolutions, different appearances, occlusions, glasses, poses, times, locations, and illuminations. The dataset also provides training and testing schemes for performance analysis and evaluation.

This paper is organised as follows. Section 2 presents the structure of OC-LBCP. Section 3 explains our proposed network, a dual-stream CNN with late-fusion layers. Section 4 presents the detailed information of the proposed dataset. Section 5 describes the experimental analysis and results.
Section 6 concludes the paper.

2. Colour-based texture descriptor

We devise a colour-based texture descriptor known as OC-LBCP by means of an orthogonal combination of Local Binary Pattern (LBP) [17] and Local Ternary Pattern (LTP) [26]. LBP summarises the local structure in an image by comparing each pixel with its neighbourhood. The descriptor works by thresholding a 3 × 3 neighbourhood against the grey level of the central pixel to obtain a binary code. LTP is an extension of the primary LBP that thresholds the neighbouring pixels into three-valued codes to form a ternary pattern. The ternary pattern spans a large range of values, so it is split into a positive and a negative binary pattern, as depicted in Figure 1.

The OC-LBCP is designed to reduce the sensitivity to image noise and to the level of illumination by creating better texture information of an object. Let $I \in \mathbb{R}^{h \times w}$ be an image, where $h$ and $w$ are the height and width, respectively. A Butterworth filter is applied to $I$ to separate the illumination component from $I$ and enhance the reflectance [10].

Next, the orthogonal combination of the binary codes from the LBP and LTP operators is carried out. Figure 1 illustrates the orthogonal combination, which concatenates the LBP and LTP binary codes into four orthogonal groups, namely $A_1$, $A_2$, $A_3$, and $A_4$. The orthogonal combinations help to achieve illumination invariance by removing outlying disturbances. To generate $A_1$, we select the bits in the red boxes of the LTP positive pattern and the bits in the green boxes of the LBP pattern (see Figure 1), and then concatenate all of them. The same process is repeated for $A_2$, $A_3$, and $A_4$. The OC-LBCP $\Omega(i, j)$ is formed by choosing the largest binary code from the orthogonal groups.
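As a rough illustration of the LBP and LTP coding described above, the following plain-Python sketch computes both codes for a single 3 × 3 patch. The clockwise neighbour ordering, the LTP threshold `t`, and the helper names are assumptions made for this example; the exact bit selection that builds the four orthogonal groups is defined by Figure 1 and is not reproduced here, so `largest_code` simply takes already-built bit groups as input.

```python
# Illustrative sketch only: the neighbour ordering and the LTP threshold t
# are assumptions, not values taken from the paper.

# Clockwise neighbour offsets (row, col), starting at the top-left pixel.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]


def lbp_bits(patch):
    """Return the 8 LBP bits of a 3x3 patch: 1 where neighbour >= centre."""
    centre = patch[1][1]
    return [1 if patch[1 + dr][1 + dc] >= centre else 0 for dr, dc in OFFSETS]


def ltp_bits(patch, t=1):
    """Return the (positive, negative) binary patterns of the LTP code.

    The ternary code is +1 if neighbour >= centre + t, -1 if
    neighbour <= centre - t, and 0 otherwise; it is split into two
    binary patterns, one marking the +1 entries and one the -1 entries.
    """
    centre = patch[1][1]
    positive, negative = [], []
    for dr, dc in OFFSETS:
        value = patch[1 + dr][1 + dc]
        positive.append(1 if value >= centre + t else 0)
        negative.append(1 if value <= centre - t else 0)
    return positive, negative


def bits_to_code(bits):
    """Pack a list of bits (most significant first) into an integer."""
    code = 0
    for bit in bits:
        code = (code << 1) | bit
    return code


def largest_code(groups):
    """Keep the largest integer code among the given bit groups.

    Hypothetical stand-in for the selection over the orthogonal groups;
    the groups themselves must be built as shown in Figure 1.
    """
    return max(bits_to_code(group) for group in groups)


patch = [[5, 6, 2],
         [1, 4, 5],
         [3, 8, 2]]
print(lbp_bits(patch))       # [1, 1, 0, 1, 0, 1, 0, 0]
print(ltp_bits(patch, t=1))  # ([1, 1, 0, 1, 0, 1, 0, 0], [0, 0, 1, 0, 1, 0, 1, 1])
```

With a threshold of 1 the positive LTP pattern coincides with the LBP bits for this patch because no neighbour equals the centre value; a larger `t` would push more neighbours into the zero state.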
The OC-LBCP is formed by combining the features as follows:

$$\Omega(i, j) = \max\left(A(i, j)\right), \quad (1)$$

$$A(i, j) = \{A_1(i, j), A_2(i, j), A_3(i, j), A_4(i, j)\}, \quad (2)$$

where $A$ represents the four orthogonal groups of binary codes.

To map $\Omega(i, j)$ into a colour space, we define a colour pattern matrix $\Delta(u, v)$ that represents the similarity of the image intensity patterns across all possible code values, based on [14]. The colour mapping transforms OC-LBCP into a colour-based texture representation, which reflects the differences in the intensity patterns.

Figure 1. Illustration of OC-LBCP.

The colour pattern matrix is computed based on the Earth Mover's Distance, following [14]. Multi-Dimensional Scaling (MDS) is then applied to seek a mapping of the codes into a low-dimensional metric space:

$$\Delta(u, v) = \left\lfloor \mathrm{MDS}[\Omega_u] + \mathrm{MDS}[\Omega_v] \right\rfloor \quad (3)$$

where $u$ and $v$ are the coordinates in the colour pattern matrix $\Delta(u, v)$ and $\lfloor \cdot \rfloor$ is the floor function.

3. Dual-stream convolutional neural network

The dual-stream CNN was originally designed to extract features from temporal and structural streams for action detection and recognition [11]. Our work is motivated by the dual-stream CNN, with the RGB data and the texture descriptor conceived as the first and second streams.
As shown in Figure 2, the network consists of eight pairs of shared conv layers and eight max-pooling (maxpool) layers. It is designed to learn the correspondence between the RGB data and OC-LBCP, and to discriminate between them, with the shared weights. Table 1 tabulates the proposed network architecture. Each shared conv layer is a pair of conv layers whose parameters are shared.

Figure 2. Architecture of our proposed network.

Table 1. Configuration of the proposed network. Note that f.m. denotes the output size of the conv layer and f. the filter size.

| Layer | Configuration |
|---|---|
| conv1_1, conv1_2 | f.m.: 64 × 80 × 80; f.: 2 × 2; maxpool: 2 × 2 |
| conv2_1, conv2_2 | f.m.: 128 × 40 × 40; f.: 2 × 2; maxpool: 2 × 2 |
| conv3_1, conv3_2 | f.m.: 256 × 20 × 20; f.: 2 × 2 |
| conv4_1, conv4_2 | f.m.: 256 × 20 × 20; f.: 2 × 2; maxpool: 2 × 2 |
| conv5_1, conv5_2 | f.m.: 512 × 10 × 10; f.: 2 × 2 |
| conv6_1, conv6_2 | f.m.: 512 × 10 × 10; f.: 2 × 2; maxpool: 2 × 2 |
| conv7_1, conv7_2 | f.m.: 512 × 5 × 5; f.: 2 × 2 |
| conv8_1, conv8_2 | f.m.: 512 × 5 × 5; f.: 2 × 2 |
| F1, F2 | 1 × 1 × 4,096 |
| Z_max, Z_sum | 1 × 1 × 4,096 |
| FC1, FC2 | 1 × 1 × 4,096 |
| FC3, FC4 | 1 × 1 × 4,096 |
| o_max, o_sum | 1 × 1 × C |

3.1. Late-fusion layers

To integrate the information from the dual inputs, we merge the flatten layers ($F_1$ and $F_2$ in Table 1) by creating late-fusion layers that strengthen the feature activations of the network. Two fusion layers, namely the max and sum layers, are introduced to fuse the features at the flatten layers. Specifically, the max layer $Z_{\max}$ takes the larger activation from $F_1$ or $F_2$ with $N$ nodes ($N = 12{,}800$). The sum fusion layer $Z_{\text{sum}}$, on the other hand, takes the sum of the activations of $F_1$ and $F_2$.

3.2. Training with total loss

For training, we use a total loss function composed of the sum of the cross entropies between the logit vectors of the max fusion and sum fusion branches and their respective one-hot encoded labels:

$$\text{total loss} = \mathcal{L}(y_{\max}) + \mathcal{L}(y_{\text{sum}}), \quad (4)$$

$$\mathcal{L}(y_*) = -\sum_{i}^{M} \sum_{j}^{C} l_{ij} \log\left(\operatorname{softmax}(y_*)_{ij}\right), \quad (5)$$

where $* \in \{\max, \text{sum}\}$, and $l$, $M$ and $C$ denote the class label, the number of training samples, and the number of classes, respectively. Since a periocular region can be either the left or the right ocular, we train each side with a separate dual-stream CNN (see Figure 2).

3.3. Testing with score fusion layer

Let $o_{\max} = \operatorname{softmax}(y_{\max}) \in \mathbb{R}^C$ and $o_{\text{sum}} = \operatorname{softmax}(y_{\text{sum}}) \in \mathbb{R}^C$ be the softmax vectors of the max (after $FC_3$) and sum (after $FC_4$) output layers, respectively, where $C$ is the number of classes. The two softmax vectors are aggregated to yield $o = o_{\max} + o_{\text{sum}} \in \mathbb{R}^C$. Since there are two dual-stream CNNs, one each for the left and right ocular, we denote the corresponding softmax vectors as $o_{\text{left}}$ and $o_{\text{right}}$, respectively.

To determine the identity of an unknown input based on the trained network, we follow the identification protocol in which the testing set, which does not overlap with the training set, is divided into gallery and probe sets. Each subject in the gallery set is represented by his/her left and right softmax vectors $o^G_i = \{o^G_{\text{left},i}, o^G_{\text{right},i}\}$, where $i = 1, \cdots, C$.

Figure 3. Sample images of the Ethnic-ocular dataset. Each row presents different images per ethnic group.
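The fusion operations introduced so far (the max and sum layers of Section 3.1, the total loss of Eqs. (4) and (5), and the aggregated softmax vector $o = o_{\max} + o_{\text{sum}}$) can be sketched in plain Python on small lists. This is a minimal sketch under stated assumptions, not the authors' TensorFlow implementation: all function names are hypothetical, labels are given as class indices rather than one-hot vectors, and the fc layers between the fusion layers and the logits are omitted.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def max_fusion(f1, f2):
    """Element-wise maximum of two flattened activation vectors."""
    return [max(a, b) for a, b in zip(f1, f2)]


def sum_fusion(f1, f2):
    """Element-wise sum of two flattened activation vectors."""
    return [a + b for a, b in zip(f1, f2)]


def cross_entropy(logits, label):
    """Cross entropy between softmax(logits) and a one-hot label.

    `label` is the index of the true class, which is equivalent to
    summing l_ij * log(softmax(y)_ij) over j for a one-hot l.
    """
    return -math.log(softmax(logits)[label])


def total_loss(y_max, y_sum, labels):
    """Sum of the max-branch and sum-branch losses over all samples."""
    return sum(
        cross_entropy(ym, label) + cross_entropy(ys, label)
        for ym, ys, label in zip(y_max, y_sum, labels)
    )


def aggregate_scores(y_max, y_sum):
    """Aggregated softmax vector o = o_max + o_sum for one sample."""
    return [a + b for a, b in zip(softmax(y_max), softmax(y_sum))]


print(max_fusion([1, 4], [3, 2]))  # [3, 4]
print(sum_fusion([1, 4], [3, 2]))  # [4, 6]
```

Because each softmax vector sums to one, the aggregated vector sums to two; it is used here as a score vector rather than as a probability distribution.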
For a given probe with its left and right ocular softmax vectors $o^P = \{o^P_{\text{left}}, o^P_{\text{right}}\}$, we compute the fused score with the sum rule as:

$$s_{\text{fuse}}(o^G_i, o^P) = s(o^G_{\text{left},i}, o^P_{\text{left}}) + s(o^G_{\text{right},i}, o^P_{\text{right}}) \quad (6)$$

where $s(o^G_{*,i}, o^P_*) = 1 - \cos(o^G_{*,i}, o^P_*)$, $* \in \{\text{left}, \text{right}\}$, and $\cos(\cdot, \cdot)$ is the cosine similarity distance. Finally, the identity $\delta$ of $o^P$ can be decided based on

$$\delta = \max_i \left[ s_{\text{fuse}}(o^G_i, o^P) \right]. \quad (7)$$

4. Ethnic-ocular dataset

To design our dataset, we follow the example of the FaceScrub [16] dataset collection. Our goal is to produce a large collection of periocular images based on different ethnic groups for recognising individuals. All the periocular images are therefore collected in the wild, with uncontrolled subject-camera distances, locations, poses, appearances with and without make-up, and levels of illumination.

4.1. Collection setup and information

We began with 250 subject names from the FaceScrub dataset and 784 subject names from BBC News [1], CNN News [3], and Naver News [5], and searched for images of these subjects with the Google image search engine. The top 300 images for each subject were downloaded. We first extracted the facial regions in these images by using the Viola-Jones face detector from Matlab [4]. The views of the facial region in these images are between −45° and 45°. The images were manually verified to ensure that they are correctly labelled with their subjects. The dataset contains 85,394 images (including left and right oculars) of 1,034 subjects.

To extract the periocular region from each image, we first aligned all the images by fixing the coordinates of the facial feature points based on the Viola-Jones face detector bounding box. The images were then cropped into left and right oculars by using the technique from [27], and the results were individually resized to 80 × 80 pixels, as shown in Figure 3.

4.2. Benchmark protocols

The dataset provides training and testing protocols: 623 subjects were randomly selected for training and the remaining subjects were used for testing. For testing, we divided the images such that the ratio between the gallery and probe sets is 50:50. This division process was repeated three times.

5. Experiments

We used the proposed Ethnic-ocular dataset and one public dataset, UBIPr [18], as the target datasets to evaluate the performance of the dual-stream CNN and of the benchmark approaches. The configurations of all approaches are described next.

5.1. Experimental setup

5.1.1 Proposed network

The dual-stream CNN is implemented with the TensorFlow [6] toolkit. We applied an annealed learning rate, which started from $1.0 \times 10^{-3}$ and was subsequently reduced by a factor of $10^{-1}$ every 10 epochs. The minimum learning rate was set to $1.0 \times 10^{-5}$. An Adam optimizer was applied, with the weight decay and momentum set to $5.0 \times 10^{-4}$ and 0.9, respectively. The batch size was set to 64 and the training was carried out over 200 epochs. The training used our dataset and was performed on an NVIDIA Titan Xp GPU.

5.1.2 Benchmark approaches

Seven deep networks were selected to evaluate the performance of periocular recognition, namely AlexNet [13], FaceNet [24], LCNN-29 [28], VGG-16 [20], DeepIrisNet-A [12], DeepIrisNet-B [12], and Multi-abstract fusion CNN [25]. Here we use the pre-trained models that were provided by the authors. All the networks are trained with left and right oculars, respectively. In the cases of DeepIrisNet-A, DeepIrisNet-B, and Multi-abstract fusion CNN, we made our best effort to implement these networks from scratch by following [12] and [25], respectively, as the networks are not publicly available.

5.2. Experimental results

This section presents the experimental results on the tasks of periocular recognition.
We conducted the experiments on periocular recognition in the wild and in controlled environments. We evaluated the performance using the Cumulative Matching Characteristic (CMC) curve with a 95% confidence interval (CI).

5.2.1 Performance evaluation on proposed network vs single-stream CNN

This section analyses the robustness and performance of our proposed network. Table 2 compares a single-stream CNN with each type of input feature, a dual-stream CNN without shared weights in the conv and fc layers, and our proposed network. Note that the dual-stream CNN without shared weights also implements the late fusion and score fusion. This experiment was conducted using the Ethnic-ocular dataset.

Table 2. Performance analysis on rank-1 recognition accuracy. The highest accuracy is written in bold.

| Approach | Accuracy (%) |
|---|---|
| CNN using RGB data | 80.8 ± 1.4 |
| CNN using OC-LBCP | 66.6 ± 2.2 |
| Dual-stream CNN (without shared weights) | 82.1 ± 1.6 |
| Proposed network | **85.0 ± 1.9** |

Table 2 shows that our proposed network achieved the highest rank-1 recognition accuracy, 85.0 ± 1.9%. In contrast, the single-stream CNN achieved only 80.8 ± 1.4% with RGB data and 66.6 ± 2.2% with OC-LBCP. Compared with the single-stream CNN, the results indicate that the late-fusion layers are important for correlating the RGB data and OC-LBCP in order to achieve better recognition performance. The analysis demonstrates that our proposed network provides more complementary information than the single-stream CNN.

The experimental results in Table 2 also show that our proposed network outperforms the dual-stream CNN without shared weights by 2.9 percentage points (85.0% against 82.1%). This is because our proposed network utilises the shared conv layers and the fusion fc layers to aggregate the RGB data and OC-LBCP.
As a result, the proposed network has successfully transformed the new knowledge representations in the network to perform better recognition.

5.2.2 Performance evaluation on proposed network vs benchmark approaches

UBIPr dataset: To verify the robustness of our proposed network, we also evaluated it on the UBIPr dataset. This dataset consists of 342 subjects with varying subject-camera distances, poses, illumination, and occlusion. This experiment evaluated the performance of all the approaches under varying pose and subject-camera distance. Six images from each subject were randomly selected as the gallery set; the remaining images were used as the probe set. The selection process was repeated three times.

Table 3 shows that the dual-stream CNN achieves the highest average rank-1 and rank-5 recognition accuracies, 91.28 ± 1.18% and 98.59 ± 0.44%, respectively. The second best is the multi-abstract fusion CNN, with 90.75 ± 1.01% and 97.44 ± 0.34% as rank-1 and rank-5 accuracies. Figure 4a shows that our network outperforms most of the benchmark approaches and achieves the highest recall rate of all approaches at every rank.

Ethnic-ocular dataset: We present the experimental results in Table 3, following the recognition protocol described in Section 4.2. To evaluate the performance of the proposed network, we compared our results with seven benchmark approaches (see Table 3). Our proposed network achieved 85.03 ± 1.88% and 94.23 ± 1.26% as rank-1 and rank-5 accuracies, respectively. Figure 4b illustrates the CMC curve of our proposed network, which outperformed the other benchmark approaches from rank-1 to rank-10 recognition accuracy.

Figure 4. Performances of CMC curve. (a) UBIPr dataset. (b) Ethnic-ocular dataset.
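To make the rank-1 and rank-5 figures concrete, the sketch below shows one way the fused score of Section 3.3 and the points of a CMC curve could be computed. It is an illustration under stated assumptions rather than the authors' evaluation code: it treats the fused score as a similarity, so that the largest value indicates the best match as the max in Eq. (7) suggests, and therefore uses plain cosine similarity for each side; it keeps a single (left, right) vector pair per gallery subject; and all function and variable names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def fused_score(gallery, probe):
    """Sum-rule fusion of the left and right scores.

    `gallery` and `probe` are (left_vector, right_vector) pairs.
    Assumption: a higher score means a better match.
    """
    return (cosine_similarity(gallery[0], probe[0])
            + cosine_similarity(gallery[1], probe[1]))


def rank_of_true_identity(gallery_set, probe, true_id):
    """1-based position of the true identity in the sorted score list."""
    scores = {gid: fused_score(vectors, probe)
              for gid, vectors in gallery_set.items()}
    ordered = sorted(scores, key=scores.get, reverse=True)
    return ordered.index(true_id) + 1


def cmc(gallery_set, probes, max_rank):
    """Rank-k identification accuracies for k = 1..max_rank.

    `probes` is a list of (true_id, (left_vector, right_vector)).
    """
    ranks = [rank_of_true_identity(gallery_set, vectors, true_id)
             for true_id, vectors in probes]
    return [sum(r <= k for r in ranks) / len(ranks)
            for k in range(1, max_rank + 1)]


# Toy data: two gallery subjects and two probes (made-up vectors).
gallery_set = {"a": ([1, 0], [1, 0]), "b": ([0, 1], [0, 1])}
probes = [("a", ([0.9, 0.1], [0.8, 0.2])),
          ("b", ([0.6, 0.4], [0.7, 0.3]))]
print(cmc(gallery_set, probes, max_rank=2))  # [0.5, 1.0]
```

In the toy data the second probe is closer to subject "a" than to its true identity "b", so it is only recovered at rank 2; the rank-1 accuracy is therefore 0.5 and the rank-2 accuracy 1.0.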
Moreover, the results show that our network can learn new features from the late-fusion layers for better recognition. The effectiveness of these fusion layers strongly supports our assumption that multi-feature learning achieves significantly better results than using raw data alone.

Table 3. Evaluation of recognition performance on the Ethnic-ocular dataset and the UBIPr dataset. The highest accuracy is written in bold.

| Approach | Ethnic-ocular Rank-1 (%) | Ethnic-ocular Rank-5 (%) | UBIPr Rank-1 (%) | UBIPr Rank-5 (%) |
|---|---|---|---|---|
| AlexNet | 64.72 ± 3.28 | 82.98 ± 2.52 | 84.88 ± 2.50 | 96.01 ± 1.77 |
| FaceNet | 78.71 ± 3.66 | 92.19 ± 1.59 | 90.24 ± 1.43 | 97.36 ± 0.44 |
| LCNN-29 | 79.35 ± 2.64 | 92.17 ± 1.80 | 90.28 ± 1.71 | 97.18 ± 0.67 |
| VGG-16 | 76.43 ± 2.16 | 91.29 ± 1.54 | 90.24 ± 1.38 | 97.09 ± 1.14 |
| DeepIrisNet-A | 79.54 ± 3.12 | 90.43 ± 2.44 | 90.30 ± 1.16 | 97.41 ± 1.07 |
| DeepIrisNet-B | 81.13 ± 3.08 | 92.37 ± 1.20 | 90.20 ± 1.66 | 97.43 ± 0.54 |
| Multi-abstract fusion CNN | 81.79 ± 3.54 | 93.03 ± 1.33 | 90.75 ± 1.01 | 97.44 ± 0.34 |
| Proposed network | **85.03 ± 1.88** | **94.23 ± 1.26** | **91.28 ± 1.18** | **98.59 ± 0.44** |

6. Conclusion

This paper outlined a plausible perspective on how machine interpretation of periocular images in the wild could benefit from RGB data and a colour-texture descriptor, known as OC-LBCP. In addition, the dual-stream CNN utilised late-fusion feature learning, which was shown to contribute to a more robust feature representation for recognition. We observed that access to dual inputs (RGB data and OC-LBCP) significantly outperformed the existing descriptors. We also introduced a new Ethnic-ocular dataset, which consists of a large collection of periocular images based on different ethnic groups for recognising individuals. The proposed network performed well in both controlled and in-the-wild periocular recognition. However, this work is limited in the case of individuals wearing sunglasses.
In future, we aim to explore generative adversarial networks to reconstruct new periocular images without sunglasses.

References

[1] BBC News. [Online]. Available: http://www.bbc.com/news.

[2] CASIA iris database. [Online]. Available: http://biometrics.idealtest.org.

[3] CNN News. [Online]. Available: https://edition.cnn.com/.

[4] Matlab object detector. [Online]. Available: https://uk.mathworks.com/help/vision/ref/vision.cascadeobjectdetector-system-object.html.

[5] Naver News. [Online]. Available: http://news.naver.com/.

[6] TensorFlow. [Online]. Available: https://tensorflow.org.

[7] R. M. Anwer, F. S. Khan, J. van de Weijer, M. Molinier, and J. Laaksonen. Binary patterns encoded convolutional neural networks for texture recognition and remote sensing scene classification. ISPRS J. Photogramm. Remote Sens., 138:74–85, 2018.

[8] E. Barroso, G. Santos, L. Cardoso, C. Padole, and H. Proença. Periocular recognition: How much facial expressions affect performance? Pattern Anal. Appl., 19(2):517–530, 2016.

[9] Z. Cao and N. A. Schmid. Fusion of operators for heterogeneous periocular recognition at varying ranges. Pattern Recognit. Lett., 82:170–180, 2016.

[10] K. Delac, M. Grgic, and T. Kos. Sub-image homomorphic filtering technique for improving facial identification under difficult illumination conditions. In Int. Conf. Syst., Signals Image Process., pages 95–98, 2006.

[11] C. Feichtenhofer, A. Pinz, and A. Zisserman. Convolutional two-stream network fusion for video action recognition. In CVPR, pages 1933–1941, 2016.

[12] A. Gangwar and A. Joshi. DeepIrisNet: Deep iris representation with applications in iris recognition and cross-sensor iris recognition. In ICIP, pages 2301–2305, 2016.

[13] A. Krizhevsky, I. Sutskever, and G. E. Hinton. ImageNet classification with deep convolutional neural networks. In Int. Conf. Neural Info. Process. Syst., pages 1097–1105, 2012.

[14] G. Levi and T. Hassner. Emotion recognition in the wild via convolutional neural networks and mapped binary patterns. In Int. Conf. Multimodal Interaction, pages 503–510, 2015.

[15] G. Mahalingam and K. Ricanek. LBP-based periocular recognition on challenging face datasets. EURASIP J. Image Video Process., 2013(36):1–13, 2013.

[16] H. W. Ng and S. Winkler. A data-driven approach to cleaning large face datasets. In ICIP, pages 343–347, 2014.

[17] T. Ojala, M. Pietikäinen, and T. Mäenpää. Multiresolution gray-scale and rotation invariant texture classification with local binary patterns. IEEE Trans. Pattern Anal. Mach. Intell., 24(7):971–987, 2002.

[18] C. N. Padole and H. Proença. Periocular recognition: Analysis of performance degradation factors. In ICB, pages 439–445, 2012.

[19] U. Park, R. R. Jillela, A. Ross, and A. K. Jain. Periocular biometrics in the visible spectrum: A feasibility study. In BTAS, pages 1–6, 2009.

[20] O. M. Parkhi, A. Vedaldi, and A. Zisserman. Deep face recognition. In British Mach. Vis. Conf., pages 1–12, 2015.

[21] H. Proença and J. C. Neves. Deep-PRWIS: Periocular recognition without the iris and sclera using deep learning frameworks. IEEE Trans. Inf. Forensics Secur., 13(4):888–896, 2018.

[22] R. Raghavendra and C. Busch. Learning deeply coupled autoencoders for smartphone based robust periocular verification. In ICIP, pages 325–329, 2016.

[23] S. C. Rhee, K. S. Woo, and B. Kwon. Biometric study of eyelid shape and dimensions of different races with references to beauty. Aesthetic Plast. Surg., 36(5):1236–1245, 2012.

[24] F. Schroff, D. Kalenichenko, and J. Philbin. FaceNet: A unified embedding for face recognition and clustering. In CVPR, pages 815–823, 2015.

[25] S. Soleymani, A. Dabouei, H. Kazemi, J. Dawson, and N. M. Nasrabadi. Multi-level feature abstraction from convolutional neural networks for multimodal biometric identification. In Int. Conf. Pattern Recognit. (ICPR), pages 1–8, 2018.

[26] X. Tan and B. Triggs. Enhanced local texture feature sets for face recognition under difficult lighting conditions. IEEE Trans. Image Process., 19(6):1635–1650, 2010.

[27] V. Štruc and N. Pavešić. The complete Gabor-Fisher classifier for robust face recognition. EURASIP J. Adv. Signal Process., 2010:1–26, 2010.

[28] X. Wu, R. He, Z. Sun, and T. Tan. A light CNN for deep face representation with noisy labels. IEEE Trans. Inf. Forensics Secur., 13(11):2884–2896, 2018.

[29] Z. Zhao and A. Kumar. Improving periocular recognition by explicit attention to critical regions in deep neural network. IEEE Trans. Inf. Forensics Secur., 13(12):2937–2952, 2018.
