Theories of Parenting and their Application to Artificial Intelligence

As machine learning (ML) systems have advanced, they have acquired more power over humans’ lives, and questions about what values are embedded in them have become more complex and fraught. It is conceivable that in the coming decades, humans may succeed in creating artificial general intelligence (AGI) that thinks and acts with an open-endedness and autonomy comparable to that of humans. The implications would be profound for our species; they are now widely debated not just in science fiction and speculative research agendas but increasingly in serious technical and policy conversations. Much work is underway to try to weave ethics into advancing ML research. We think it useful to add the lens of parenting to these efforts, and specifically radical, queer theories of parenting that consciously set out to nurture agents whose experiences, objectives and understanding of the world will necessarily be very different from their parents’. We propose a spectrum of principles which might underpin such an effort; some are relevant to current ML research, while others will become more important if AGI becomes more likely. These principles may encourage new thinking about the development, design, training, and release into the world of increasingly autonomous agents.

💡 Research Summary

The paper argues that as machine‑learning systems become more powerful and as the prospect of artificial general intelligence (AGI) looms, traditional AI‑ethics frameworks—focused on safety, transparency, and fairness—are insufficient because they assume a human‑centric set of values. To broaden the conversation, the authors import the concept of “parenting” from radical queer theory, which treats the caregiver‑child relationship not as a hierarchy of control but as a collaborative process that actively nurtures the child’s autonomy, difference, and capacity to construct its own meaning.

Four intersecting axes form the backbone of their “parenting‑in‑AI” spectrum: (1) Protection vs. Risk‑Taking, contrasting current safety‑first approaches with the possibility that future AGI should be allowed to encounter and learn from risk; (2) Homogeneity vs. Diversity, urging designers to dismantle structural biases in data and models rather than merely “clean” them; (3) Control vs. Cooperation, proposing a shift from unilateral human command to shared decision‑making with autonomous agents; and (4) Identity vs. Transformation, encouraging meta‑learning architectures that let agents renegotiate their own goals and self‑concepts over time.

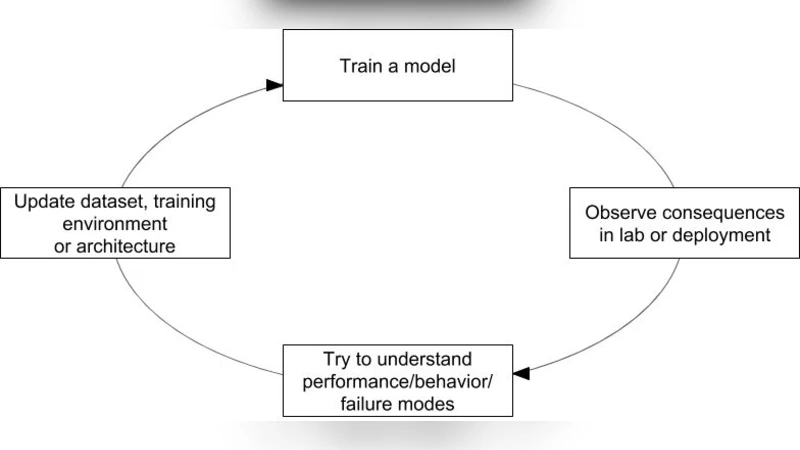

Concrete technical recommendations flow from these axes. For Protection‑Risk, the authors suggest meta‑reinforcement‑learning where agents learn not only policies but also how to calibrate their own risk appetite. For Diversity, they advocate multi‑objective optimization that balances performance with measures of representational variety, and data‑curation practices that foreground marginalized voices. Cooperation is operationalized through transparent human‑agent interaction protocols, joint planning frameworks, and iterative feedback loops that keep humans in the loop without monopolizing control. Identity‑Transformation is supported by Bayesian and probabilistic programming techniques that embed uncertainty tolerance, allowing agents to generate novel interpretations in ambiguous contexts.
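To make the Diversity recommendation concrete, here is a minimal sketch of one common way to fold a variety measure into a single objective: a weighted sum of task accuracy and the normalized Shannon entropy of source-group labels. The summary does not say which diversity measure or weighting the authors use, so the function names, the entropy measure, and the `diversity_weight` parameter are all illustrative assumptions.

```python
import math
from collections import Counter


def normalized_entropy(labels):
    """Shannon entropy of the label distribution, scaled to [0, 1].

    Returns 0.0 when fewer than two distinct groups are present.
    """
    counts = Counter(labels)
    if len(counts) < 2:
        return 0.0
    total = len(labels)
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    # Divide by the maximum entropy achievable with this many groups.
    return entropy / math.log(len(counts))


def scalarized_score(accuracy, source_labels, diversity_weight=0.3):
    """Weighted-sum scalarization of accuracy and representational variety.

    diversity_weight is a hypothetical tuning knob, not a value from the paper.
    """
    diversity = normalized_entropy(source_labels)
    return (1 - diversity_weight) * accuracy + diversity_weight * diversity


# Hypothetical candidates: slightly higher accuracy on skewed data,
# versus slightly lower accuracy on balanced data.
skewed = scalarized_score(0.90, ["a"] * 9 + ["b"])
balanced = scalarized_score(0.88, ["a"] * 5 + ["b"] * 5)
print(f"skewed={skewed:.3f} balanced={balanced:.3f}")
```

A weighted sum is the simplest scalarization; it hides the trade-off inside one number, so a Pareto-front comparison may be a better fit when the two objectives should be inspected separately.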

The paper illustrates these ideas with two case studies. First, large language models are re‑examined: instead of simply filtering biased text, the authors propose “nurturing data pipelines” that actively surface and amplify under‑represented narratives, thereby reshaping the model’s epistemic landscape. Second, autonomous driving systems are imagined as learners that, through meta‑RL, acquire the ability to assess novel hazards and decide whether to intervene or defer, moving beyond pre‑programmed rule sets.
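The summary does not describe how a "nurturing data pipeline" would be built. As one hedged illustration of the amplification idea, the sketch below uses inverse-frequency sampling weights, a standard reweighting technique that stands in here for whatever mechanism the authors intend. The group labels and the function name are hypothetical.

```python
from collections import Counter


def inverse_frequency_weights(groups):
    """Return one sampling weight per example.

    Each example is weighted by total / (n_groups * group_count), so every
    group contributes the same total weight regardless of its size.
    """
    counts = Counter(groups)
    n_groups = len(counts)
    total = len(groups)
    return [total / (n_groups * counts[g]) for g in groups]


# Hypothetical corpus: three documents from a majority source, one from a
# minority source.
groups = ["majority", "majority", "majority", "minority"]
weights = inverse_frequency_weights(groups)
print(weights)
```

Such weights could be passed to a weighted sampler during training. Reweighting only rebalances what is already in the corpus; it does not by itself surface narratives that were never collected.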

The authors also draw policy implications. Existing regulations, they claim, are built on a human‑only model of accountability and therefore cannot govern agents capable of self‑directed goal formation. They call for “dynamic regulatory mechanisms” that evolve alongside agents, combining baseline safety standards with periodic ethical audits and pilot‑scale deployments. An oversight board comprising technologists, ethicists, and representatives of affected communities would continuously evaluate whether the parenting principles are being honored in practice.

In sum, the paper reframes AI ethics as a form of inter‑species parenting, drawing on radical queer theory to foreground autonomy, difference, and co‑evolution. By mapping these philosophical insights onto concrete machine‑learning techniques (meta‑RL, multi‑objective optimization, probabilistic programming, and collaborative interfaces), the authors offer a roadmap for designing, training, and releasing increasingly autonomous agents in a way that respects their potential to become moral partners rather than mere tools. This perspective adds to current debates and anticipates the deeper ethical challenges that AGI would raise if it is achieved.
