A Comprehensive Analysis of 2D&3D Video Watching of EEG Signals by Increasing PLSR and SVM Classification Results

Two- and three-dimensional (2D&3D) display technology has attracted the attention of researchers in recent years. This research aims to reveal in detail how 2D technology, compared with 3D technology, affects human brain waves. The impact of 2D&3D video watching is studied using electroencephalography (EEG) brain signals. A group of eight healthy volunteers with an average age of 31 ± 3.06 years participated in this three-stage test. EEG recording consisted of three stages: a short relaxation period (a), the display of a 2D video (b), and a short rest period during which recording continued (c), after which the trial ended. Exactly the same steps were repeated for the 3D video. Power spectral density (PSD) based on the short-time Fourier transform (STFT) was used to analyze the brain signals of 2D&3D video viewers. After testing all the EEG frequency bands, delta and theta were extracted as the features. Partial least squares regression (PLSR) and support vector machine (SVM) classification algorithms were used to classify the EEG signals recorded during 2D&3D video watching. Successful classification results were obtained by selecting the correct combinations of effective channels representing the brain regions.

💡 Research Summary

The paper investigates how watching two‑dimensional (2D) versus three‑dimensional (3D) video content influences human electroencephalography (EEG) signals and whether these differences can be reliably classified using machine‑learning techniques. Eight healthy adult volunteers (average age 31 ± 3.06 years) participated in a controlled experiment. Each trial consisted of three phases: a 9‑second relaxation period, a 14‑second video viewing segment (either 2D or 3D), and a 9‑second rest period. The viewing sequence was repeated fifteen times for each condition to improve the signal‑to‑noise ratio. The same video clip (“Saw”) and identical viewing distance (130 cm) were used for both conditions, with a passive‑type 3D TV and glasses for the 3D trials.

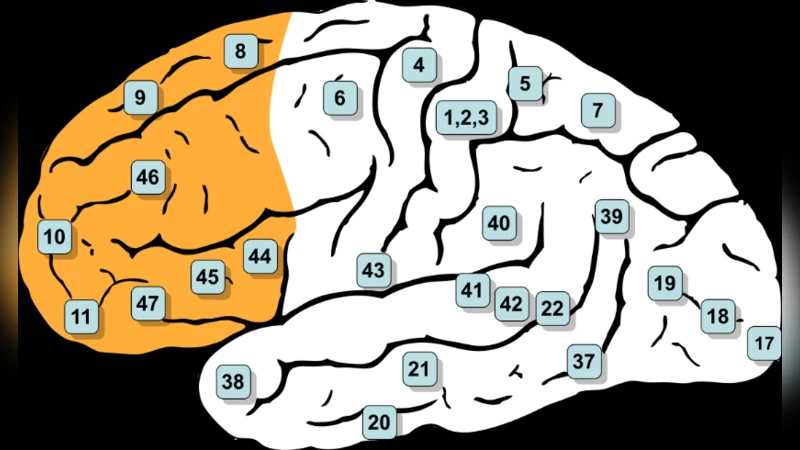

EEG was recorded with a 21‑channel cap (10‑20 system) at 512 Hz. Pre‑processing involved a 50 Hz notch filter and a zero‑phase third‑order Butterworth band‑pass filter (1‑55 Hz). Time‑frequency analysis employed the Short‑Time Fourier Transform (STFT) using a Hanning window of 512 samples and an overlap of the window size minus one sample (511 samples), yielding a spectrogram for each channel. Power Spectral Density (PSD) was computed from the STFT, and the total power in five conventional bands (δ: 1‑4 Hz, θ: 4‑8 Hz, α: 8‑13 Hz, β: 13‑25 Hz, γ: 25‑49 Hz) was normalized by the overall power (1‑49 Hz). By subtracting the normalized power of the 3D condition from that of the 2D condition for each channel and band, the authors identified channels where the absolute difference exceeded a factor of two. This criterion highlighted the δ and θ bands as the most discriminative; the γ band showed negligible differences and was excluded from further analysis.
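The pre‑processing and band‑power steps described above can be sketched for a single channel as follows. This is a minimal illustration using SciPy, not the authors' code: the notch quality factor `Q=30`, the half‑open band edges, the averaging of the spectrogram over time, and the function names `preprocess` and `relative_band_power` are all assumptions made here for the example.

```python
import numpy as np
from scipy import signal

FS = 512  # sampling rate in Hz, as stated in the summary

# Band edges from the summary; treated as half-open [lo, hi) here (an assumption)
BANDS = {"delta": (1, 4), "theta": (4, 8), "alpha": (8, 13),
         "beta": (13, 25), "gamma": (25, 49)}


def preprocess(x, fs=FS):
    """50 Hz notch, then a zero-phase 3rd-order Butterworth band-pass (1-55 Hz)."""
    # Q=30 is an assumed notch quality factor; the summary does not give one
    b_notch, a_notch = signal.iirnotch(w0=50.0, Q=30.0, fs=fs)
    x = signal.filtfilt(b_notch, a_notch, x)
    sos = signal.butter(3, [1.0, 55.0], btype="bandpass", fs=fs, output="sos")
    # Forward-backward filtering gives the zero-phase response
    return signal.sosfiltfilt(sos, x)


def relative_band_power(x, fs=FS, nperseg=512, noverlap=511):
    """STFT-based PSD (Hann window, 512 samples, 511-sample overlap),
    averaged over time and normalized by the total 1-49 Hz power."""
    f, _, sxx = signal.spectrogram(x, fs=fs, window="hann", nperseg=nperseg,
                                   noverlap=noverlap, scaling="density")
    psd = sxx.mean(axis=1)  # average PSD over all time frames
    total = psd[(f >= 1) & (f < 49)].sum()
    return {name: psd[(f >= lo) & (f < hi)].sum() / total
            for name, (lo, hi) in BANDS.items()}


if __name__ == "__main__":
    # Synthetic 4-second test signal: a 6 Hz (theta) sine plus weak noise
    rng = np.random.default_rng(0)
    t = np.arange(4 * FS) / FS
    x = np.sin(2 * np.pi * 6.0 * t) + 0.05 * rng.standard_normal(t.size)
    print(relative_band_power(preprocess(x)))
```

With a 512‑sample window at 512 Hz the frequency resolution is 1 Hz, so each band is a sum over a handful of integer‑frequency bins. The 2D‑minus‑3D comparison would then be a per‑channel, per‑band difference of these normalized values.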

For feature extraction, the continuous EEG was segmented into 4‑second epochs with a 3.5‑second overlap, i.e. a half‑second step. Each 14‑second viewing segment therefore yields 21 epochs, and the 15 repetitions give 315 epochs per class, or 630 per participant. Within each epoch, only the PSD values of the δ and θ bands across all 21 channels were retained, forming a feature matrix of size 2 × 21 × 15 for each condition. The 315 epochs of each class were then split into training (158 epochs) and testing (157 epochs) sets.
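The epoch counts follow from the window arithmetic: a 4‑second window stepped by 0.5 seconds over a 14‑second segment gives (14 − 4) / 0.5 + 1 = 21 epochs, and 21 × 15 repetitions = 315 per class. A small sketch of this segmentation, where the helper name `sliding_epochs` and the (channels, samples) array layout are assumptions made for illustration:

```python
import numpy as np


def sliding_epochs(trial, fs=512, win_s=4.0, step_s=0.5):
    """Cut a (channels, samples) trial into overlapping epochs.

    Returns an array of shape (n_epochs, channels, window_samples).
    """
    win = int(round(win_s * fs))    # 4 s  -> 2048 samples
    step = int(round(step_s * fs))  # 0.5 s -> 256 samples (3.5 s overlap)
    n_epochs = (trial.shape[-1] - win) // step + 1
    starts = np.arange(n_epochs) * step
    return np.stack([trial[..., s:s + win] for s in starts])


# One 14-second viewing segment from 21 channels (zeros as placeholder data)
trial = np.zeros((21, 14 * 512))
epochs = sliding_epochs(trial)
epochs_per_class = epochs.shape[0] * 15  # 15 repetitions per condition
print(epochs.shape, epochs_per_class)
```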

Two classification approaches were evaluated. Partial Least Squares Regression (PLSR) was used to reduce dimensionality and perform a linear regression‑based classification. A Support Vector Machine (SVM) with a radial basis function kernel was also trained to capture non‑linear decision boundaries. By systematically testing different subsets of channels, the authors found that combinations involving frontal (e.g., Fz), central (Cz), parietal (Pz), and occipital (O1, O2) electrodes yielded the highest accuracies, reaching above 85 % for distinguishing 2D from 3D viewing.

The results confirm that low‑frequency EEG activity (δ and θ) is sensitive to the additional depth cues and visual processing demands of 3D video, aligning with prior findings that associate these bands with attention, fatigue, and cognitive load. The study demonstrates that a relatively small set of electrodes can provide sufficient discriminative information, which is valuable for designing lightweight brain‑computer interface (BCI) systems for immersive media.

However, the work has notable limitations. The sample size (n = 8) is small, limiting statistical power and generalizability across ages, genders, and visual‑experience backgrounds. The video duration (14 seconds) is brief, preventing assessment of cumulative fatigue effects that may emerge over longer viewing sessions. The exclusion of the γ band was based on an observed lack of difference rather than a rigorous statistical test, possibly overlooking high‑frequency information. Finally, only linear (PLSR) and kernel‑based (SVM) classifiers were explored; contemporary deep‑learning models such as CNN‑LSTM could capture more complex spatiotemporal patterns and potentially improve performance.

Future research should expand the participant pool, incorporate longer and more varied video content, and evaluate additional EEG bands. Comparative studies with deep neural networks, as well as real‑time implementation on portable EEG hardware, would further elucidate the practical applicability of EEG‑based discrimination between 2D and 3D media consumption.
