What sets Verified Users apart? Insights, Analysis and Prediction of Verified Users on Twitter

Social network and publishing platforms, such as Twitter, support the concept of a secret proprietary verification process, for handles they deem worthy of platform-wide public interest. In line with significant prior work which suggests that possessing such a status symbolizes enhanced credibility in the eyes of the platform audience, a verified badge is clearly coveted among public figures and brands. What are less obvious are the inner workings of the verification process and what being verified represents. This lack of clarity, coupled with the flak that Twitter received by extending aforementioned status to political extremists in 2017, backed Twitter into publicly admitting that the process and what the status represented needed to be rethought. With this in mind, we seek to unravel the aspects of a user’s profile which likely engender or preclude verification. The aim of the paper is two-fold: First, we test if discerning the verification status of a handle from profile metadata and content features is feasible. Second, we unravel the features which have the greatest bearing on a handle’s verification status. We collected a dataset consisting of profile metadata of all 231,235 verified English-speaking users (as of July 2018), a control sample of 175,930 non-verified English-speaking users and all their 494 million tweets over a one year collection period. Our proposed models are able to reliably identify verification status (Area under curve AUC > 99%). We show that number of public list memberships, presence of neutral sentiment in tweets and an authoritative language style are the most pertinent predictors of verification status. To the best of our knowledge, this work represents the first attempt at discerning and classifying verification worthy users on Twitter.

💡 Research Summary

The paper “What sets Verified Users apart? Insights, Analysis and Prediction of Verified Users on Twitter” investigates whether the opaque Twitter verification process can be reverse‑engineered from publicly available data. The authors collected a comprehensive dataset comprising all English‑language verified accounts (231,235 users) as of July 2018 and a matched control group of non‑verified users (175,930) whose follower counts were within 2 % of a corresponding verified user. Over a one‑year period (June 2017 – May 2018) they harvested every tweet authored by these accounts, yielding roughly 494 million tweets.
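The follower-count matching described above can be sketched as follows. This is a minimal illustration, not the authors' procedure: the function name `match_controls`, the `(user_id, follower_count)` tuple layout, and the greedy first-fit strategy are all assumptions; only the 2 % tolerance comes from the summary.

```python
from bisect import bisect_left


def match_controls(verified, candidates, tolerance=0.02):
    """Greedily pair each verified user with an unused non-verified user
    whose follower count lies within +/- tolerance of the verified count.

    Both inputs are lists of (user_id, follower_count) tuples (hypothetical layout).
    Returns a dict mapping verified user_id -> matched control user_id.
    """
    pool = sorted(candidates, key=lambda c: c[1])   # sort controls by follower count
    counts = [c[1] for c in pool]
    used = [False] * len(pool)
    matches = {}
    for vid, vcount in verified:
        lo = vcount * (1 - tolerance)
        hi = vcount * (1 + tolerance)
        i = bisect_left(counts, lo)                 # first candidate with count >= lo
        while i < len(pool) and counts[i] <= hi:
            if not used[i]:                         # each control is used at most once
                used[i] = True
                matches[vid] = pool[i][0]
                break
            i += 1
    return matches
```

Verified users with no candidate inside the tolerance window are simply left out of the result; a real pipeline would have to decide whether to widen the window or drop them.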

From this massive corpus they extracted a rich feature set (>200 features) spanning four major dimensions: (1) profile metadata (account age, follower/friend counts, total tweets, number of public list memberships, bio length, etc.), (2) linguistic and stylistic content features (POS tag distributions, hashtag/mention/link frequencies, average words per sentence, character‑level entropy, proportion of long words), (3) sentiment scores (positive, negative, neutral, and compound VADER scores aggregated per user), and (4) temporal activity signals (daily averages and growth rates of followers, friends, and tweet volume, activity regularity, and sudden spikes). Additionally, they incorporated state‑of‑the‑art bot detection scores (Botometer) to control for automated behavior.
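A few of the stylistic features listed above can be computed with the standard library alone. The sketch below is illustrative only: the function name `style_features`, the regex-based tokenization, and the 7-character threshold for "long" words are assumptions, not details taken from the paper.

```python
import math
import re
from collections import Counter


def style_features(text, long_word_len=7):
    """Compute a handful of simple stylistic features for one piece of text."""
    # Naive sentence and word splitting; a real pipeline would use a proper tokenizer.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)

    # Shannon entropy (in bits) of the character distribution.
    char_counts = Counter(text)
    total = sum(char_counts.values())
    entropy = 0.0
    if total:
        entropy = -sum((n / total) * math.log2(n / total) for n in char_counts.values())

    return {
        "avg_words_per_sentence": len(words) / len(sentences) if sentences else 0.0,
        "long_word_ratio": (
            sum(len(w) >= long_word_len for w in words) / len(words) if words else 0.0
        ),
        "char_entropy": entropy,
        "hashtags": len(re.findall(r"#\w+", text)),
        "mentions": len(re.findall(r"@\w+", text)),
    }
```

Per-user features would then be obtained by aggregating these values (for example, averaging) over all of a user's tweets.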

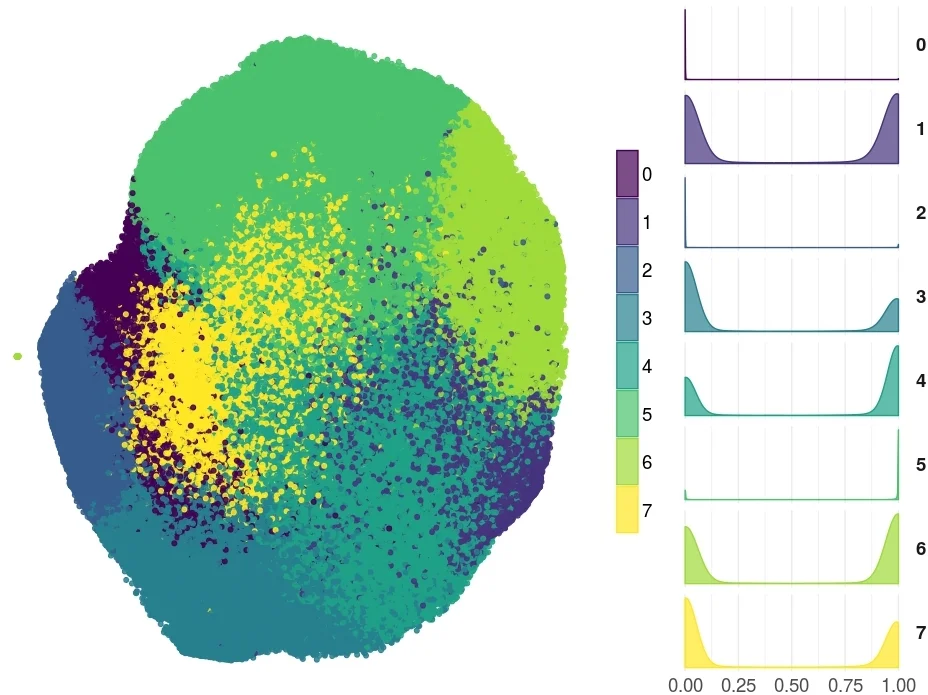

The central research questions are: (RQ1) Can verification status be predicted from these features, and which features are most discriminative? (RQ2) Do verified and non‑verified users differ in the topical breadth of their tweets? To answer RQ1 the authors trained several classifiers (logistic regression, random forest, XGBoost) and addressed class imbalance with SMOTE oversampling. Using 10‑fold cross‑validation and a held‑out test set, the XGBoost model achieved an AUC of 0.991, accuracy ≈ 96 %, recall ≈ 97 %, and precision ≈ 95 %. Feature importance analysis revealed that the number of public list memberships is the single strongest predictor, followed by the proportion of neutral sentiment, an “authoritative language style” (high usage of formal, domain‑specific vocabulary), and account age. Bot scores were consistently low for verified users, confirming that verification is rarely granted to automated accounts.
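Since AUC is the headline metric here, it may help to recall what it measures: the probability that a randomly chosen verified account receives a higher score than a randomly chosen non-verified one. The sketch below computes it from ranks (the Mann-Whitney formulation) in plain Python; it is a generic illustration, not the authors' evaluation code, and the name `roc_auc` is chosen here for convenience.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via the rank-sum (Mann-Whitney U) formulation.

    labels: iterable of 0/1 (1 = positive class, e.g. verified)
    scores: iterable of classifier scores, higher = more likely positive
    Tied scores receive their average rank, so a tie counts as half a win.
    """
    labels = list(labels)
    pairs = sorted(zip(scores, labels))
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUC needs at least one positive and one negative example")

    rank_sum = 0.0
    i = 0
    while i < len(pairs):
        j = i
        while j < len(pairs) and pairs[j][0] == pairs[i][0]:
            j += 1                                   # pairs[i:j] share the same score
        avg_rank = (i + 1 + j) / 2                   # mean of 1-based ranks i+1 .. j
        rank_sum += avg_rank * sum(label for _, label in pairs[i:j])
        i = j

    u_statistic = rank_sum - n_pos * (n_pos + 1) / 2
    return u_statistic / (n_pos * n_neg)
```

For instance, `roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])` gives 0.75, because three of the four positive-negative pairs are ordered correctly.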

For RQ2, the authors applied Latent Dirichlet Allocation (LDA) and clustering to the tweet text, finding that verified users concentrate on topics with high public interest—politics, journalism, sports, and major events—whereas non‑verified users tweet more about entertainment, hobbies, and niche interests. This supports the platform’s stated policy that verification is tied to “public interest” rather than arbitrary popularity.

The paper discusses several limitations. The dataset is limited to English‑language accounts and reflects the verification criteria as of 2018; subsequent policy changes are not captured. Matching on follower count controls for one dimension of public interest but may still leave confounding variables (e.g., brand sponsorships that inflate list memberships). Sentiment analysis relies on VADER, which may miss cultural nuances. Moreover, the high predictive performance could enable malicious actors to game the system, for example by artificially inflating list memberships or posting neutral‑tone content to appear verification‑worthy.

Ethically, the study uses only publicly available tweets, anonymizes personal identifiers, and promises to release the curated dataset, enhancing reproducibility. Nonetheless, the authors acknowledge the risk of “verification fraud” and suggest that platforms could use such predictive insights to increase transparency while adding safeguards against manipulation.

In summary, the work provides a data‑driven deconstruction of Twitter’s verification badge, demonstrating that a combination of profile visibility (list memberships), neutral emotional tone, and formal language style can predict verification status reliably (AUC above 0.99, accuracy around 96 %). It contributes both methodological advances (large‑scale feature engineering, robust classification) and practical insights for platform governance, while highlighting the need for continual monitoring of verification criteria to prevent exploitation.
