Dataspace: A Reconfigurable Hybrid Reality Environment for Collaborative Information Analysis

Immersive environments have gradually become standard for visualizing and analyzing large or complex datasets that would otherwise be cumbersome, if not impossible, to explore through smaller-scale computing devices. However, this type of workspace often has limitations in terms of interaction, flexibility, cost, and scalability. In this paper we introduce a novel immersive environment called Dataspace, which features a new combination of heterogeneous technologies and methods of interaction towards creating a better team workspace. Dataspace provides 15 high-resolution displays that can be dynamically reconfigured in space through robotic arms, a central table where information can be projected, and a unique integration with augmented reality (AR) and virtual reality (VR) headsets and other mobile devices. In particular, we contribute novel interaction methodologies to couple the physical environment with AR and VR technologies, enabling visualization of complex types of data and mitigating the scalability issues of existing immersive environments. We demonstrate through four use cases how this environment can be effectively used across different domains and reconfigured based on user requirements. Finally, we compare Dataspace with existing technologies, summarizing the trade-offs that should be considered when attempting to build better collaborative workspaces for the future.

💡 Research Summary

The paper introduces Dataspace, a reconfigurable hybrid‑reality environment designed for collaborative analysis of large and complex datasets. Dataspace combines fifteen 4K OLED displays mounted on seven‑degree‑of‑freedom KUKA robotic arms, a central ceramic table with dual 2K projectors, a spatial audio system, and a perception layer consisting of eight Kinect v2 depth sensors. The robotic arms allow each screen to be moved, rotated, and tilted in real time, supporting a variety of configurations such as vertical “Immersion,” circular “Context,” and grouped “Triptych” layouts. Touch interaction is emulated through torque sensors in the robot joints, achieving ±2 cm precision.
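To make the idea of a circular "Context" configuration concrete, the following is a minimal sketch of how target poses for evenly spaced screens could be computed. It is not taken from the paper: the `ScreenPose` type, the `circular_layout` function, the 3 m radius, and the flat 2-D coordinate frame centered on the room are all assumptions chosen for illustration.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ScreenPose:
    """Hypothetical pose of one screen: position in metres, yaw in degrees."""
    x: float
    y: float
    yaw_deg: float


def circular_layout(num_screens: int, radius_m: float) -> list[ScreenPose]:
    """Place screens evenly on a circle, each one facing the room centre."""
    if num_screens <= 0:
        raise ValueError("num_screens must be positive")
    poses = []
    for i in range(num_screens):
        angle = 2 * math.pi * i / num_screens
        x = radius_m * math.cos(angle)
        y = radius_m * math.sin(angle)
        # A screen facing the centre looks in the direction opposite
        # to its own position vector, hence the 180 degree offset.
        yaw = (math.degrees(angle) + 180.0) % 360.0
        poses.append(ScreenPose(x, y, yaw))
    return poses


# Assumed values: fifteen screens (as in the summary) on a 3 m circle.
poses = circular_layout(15, 3.0)
```

With fifteen screens the angular spacing is 24 degrees. A real controller would also have to account for arm reach, collision avoidance, and screen tilt, none of which this sketch models.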

AR headsets (e.g., Microsoft HoloLens) are tightly integrated, enabling users to overlay 3‑D visualizations onto the physical environment while still accessing high‑resolution 2‑D charts on the large screens. VR headsets provide a virtual extension of the room for remote participants, synchronizing visual and auditory cues with the local space. The central table serves as a high‑resolution interactive surface; gestures on its passive surface are captured by the Kinect array and processed by a perception subsystem.

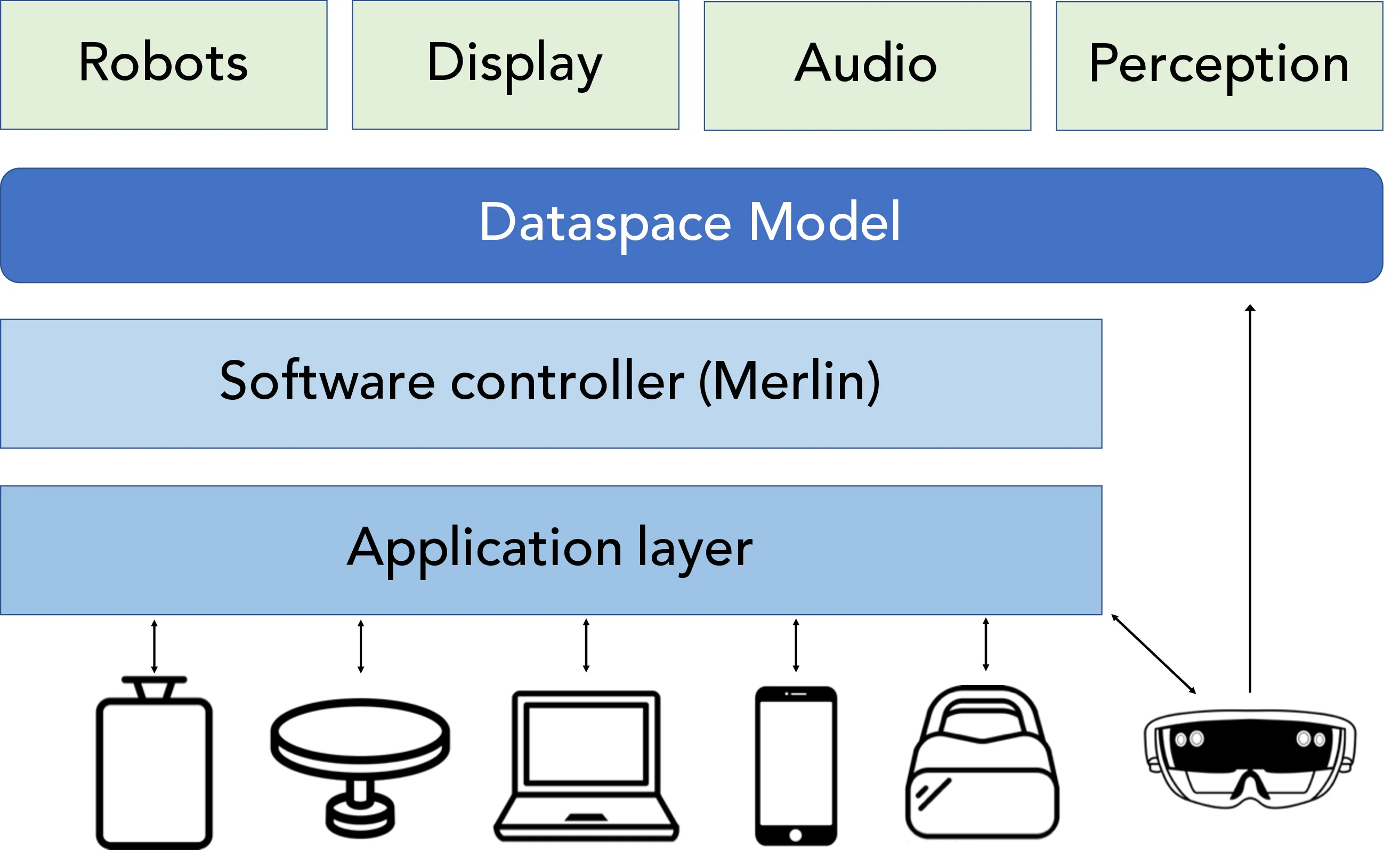

All hardware components are abstracted through a middleware layer called Merlin. Merlin exposes a “Dataspace model” that web‑based applications can query and manipulate via RESTful APIs and WebSockets. This architecture simplifies the addition of new devices, AI modules, or interaction techniques, ensuring extensibility and maintainability.
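As a rough illustration of what a shared model that applications query and manipulate might look like, the sketch below keeps per-screen state in memory and applies JSON update messages of the kind an application could send over a WebSocket. Everything here is hypothetical: the `Screen` and `DataspaceModel` classes, the `apply_update` method, and the message fields `screen_id`, `layout`, and `content_url` are invented for this example and are not Merlin's actual API. Network transport is deliberately left out.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Screen:
    """Hypothetical state of one display in the shared model."""
    screen_id: int
    layout: str = "Context"
    content_url: str = ""


@dataclass
class DataspaceModel:
    """Hypothetical in-memory stand-in for a shared room model."""
    screens: dict = field(default_factory=dict)

    def apply_update(self, message: str) -> dict:
        """Apply a JSON update message and return the screen's new state."""
        update = json.loads(message)
        screen_id = int(update["screen_id"])
        # Create the screen entry on first reference, then patch it.
        screen = self.screens.setdefault(screen_id, Screen(screen_id))
        for key in ("layout", "content_url"):
            if key in update:
                setattr(screen, key, update[key])
        return asdict(screen)


model = DataspaceModel()
state = model.apply_update(json.dumps({"screen_id": 3, "layout": "Triptych"}))
```

Keeping the model as plain data that is patched by small messages is one way to let heterogeneous clients (web applications, headsets, robot controllers) stay in sync without knowing about each other. Whether Merlin works this way is not stated in the summary.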

The authors define five design criteria that guided the system: (D1) Shared Data Exploration, encouraging multidisciplinary teams to work together; (D2) Egalitarian Access, achieved through a circular layout and omnidirectional audio so every participant has the same view regardless of position; (D3) Flexible Data Immersion, supporting the full spectrum from 2‑D statistical charts to immersive 3‑D AR visualizations; (D4) Multimodal Interaction, providing keyboard/mouse, touch (via robot sensors), spatial controllers, and voice; and (D5) Seamless Integration of Heterogeneous Devices, allowing plug‑and‑play addition of new hardware.

Four use cases demonstrate the platform’s versatility: (1) Data‑center management, where vertical screens display real‑time logs while AR shows rack locations; (2) Geospatial analysis, using a circular screen arrangement to present satellite imagery and GIS layers, with table gestures to select regions; (3) Biomedical visualization, combining AR‑based 3‑D molecular models with high‑resolution 2‑D experimental data for collaborative discussion; and (4) AI‑driven insight discovery, where machine‑learning results are rendered as heatmaps on the walls and as 3‑D point clouds in AR, with users adjusting model parameters via hand gestures.

A comparative evaluation against prior systems such as traditional power‑walls, CAVE2, Reality Deck, and pure HMD‑based analytics shows that Dataspace offers lower cost, easier installation, and superior flexibility. The robotic reconfiguration eliminates the need for physical redesign when switching tasks, a limitation of fixed‑layout walls. The circular design and spatial audio provide equal access for all participants, addressing the single‑viewpoint problem of many HMD solutions. Moreover, the multimodal interaction model supplies robust fallbacks in case of device failure.

In conclusion, Dataspace represents a next‑generation collaborative analytics environment that unifies high‑resolution displays, robotic reconfiguration, AR/VR, spatial audio, and multimodal interaction within a single, extensible framework. The paper suggests future work on automated layout optimization, adaptive interaction based on user behavior, high‑bandwidth secure streaming for remote collaboration, and deeper integration of AI assistants to further enhance collaborative insight generation.
