GAN Based Image Deblurring Using Dark Channel Prior

A conditional general adversarial network (GAN) is proposed for image deblurring problem. It is tailored for image deblurring instead of just applying GAN on the deblurring problem. Motivated by that, dark channel prior is carefully picked to be incorporated into the loss function for network training. To make it more compatible with neuron networks, its original indifferentiable form is discarded and L2 norm is adopted instead. On both synthetic datasets and noisy natural images, the proposed network shows improved deblurring performance and robustness to image noise qualitatively and quantitatively. Additionally, compared to the existing end-to-end deblurring networks, our network structure is light-weight, which ensures less training and testing time.

💡 Research Summary

The paper presents a conditional generative adversarial network (CGAN) specifically designed for single‑image blind deblurring, and it introduces a novel way to incorporate the dark channel prior (DCP) into the training loss. Traditional GAN‑based deblurring methods, such as DeblurGAN, rely on a combination of adversarial loss, pixel‑wise L1/L2 loss, and sometimes a perceptual loss computed from a pretrained VGG network. While these approaches achieve impressive PSNR and SSIM scores, visual inspection often reveals grid‑like artifacts caused by the generator’s tendency to produce repetitive patterns when trying to satisfy the adversarial objective.

To address this, the authors adopt the dark channel prior, originally proposed for haze removal, which measures the minimum intensity across the three color channels within a local patch. Prior work on deblurring showed that the dark channel of a sharp image is sparser than that of a blurred one, and some methods enforced this sparsity using an L0 norm on the dark channel map. However, the L0 norm is non‑differentiable and unsuitable for back‑propagation in deep networks. The authors therefore replace the L0 term with an L2 norm that directly penalizes the Euclidean distance between the dark channel maps of the generated image and the ground‑truth sharp image. This loss is fully differentiable, easy to compute, and still encourages the generator to produce images whose dark channel exhibits the desired sparsity, thereby suppressing ringing and grid artifacts.

The network architecture follows an encoder‑decoder paradigm for the generator, similar to the “image‑to‑image” framework of Isola et al. Each encoder block consists of a convolution with stride 2 and a 5×5 kernel, followed by batch normalization (except for the first layer) and a LeakyReLU activation. The decoder mirrors this structure with transposed convolutions, incorporates dropout to mitigate over‑fitting, and uses skip connections that concatenate feature maps from the encoder to the corresponding decoder layers, preserving fine‑grained details. The discriminator is a straightforward stack of convolutional layers (stride 2, 5×5 kernels) ending in a single scalar passed through a sigmoid, which evaluates the realism of the (blurred, restored) image pair.

Training loss for the generator is a weighted sum of three components:

- Adversarial loss (standard CGAN formulation).

- Content loss, an L1 distance between the generated image and the ground truth (λ₁ = 100).

- Dark‑channel loss, the L2 distance between the dark channel maps of the two images (λ₂ = 250).

The perceptual loss used in DeblurGAN is deliberately omitted after experiments showed it degraded performance, likely because the adversarial component already captures high‑level texture information.

For data, the authors use the widely adopted GOPRO dataset, which contains 2103 training and 1111 testing pairs of synthetically blurred and sharp frames captured from high‑frame‑rate video. To make the model robust to real‑world noise, they create a “GOPRO‑noise” variant by adding Gaussian noise (variance 0.001) to both training and testing images. They also incorporate an external synthetic dataset used by DeblurGAN, training on the combined set.

Training is performed on an NVIDIA GTX 1080 Ti GPU with Adam optimizer. Each iteration updates the discriminator once and the generator twice, preventing the discriminator from dominating the loss. The model converges after only 15 epochs (approximately two days), a stark contrast to DeblurGAN’s 200 epochs over six days, demonstrating the efficiency of the lightweight architecture.

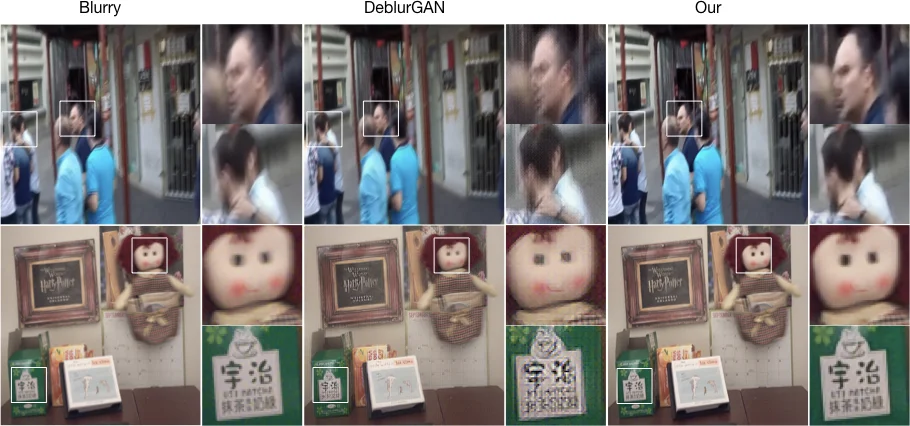

Quantitative results (Table 1) show that the proposed method achieves higher average PSNR (≈26.8 dB) and SSIM (≈0.88) on the clean GOPRO test set, and similarly improved scores on the noisy variant, outperforming DeblurGAN and ablations without the dark‑channel term. Qualitative comparisons (Figure 3) illustrate that the baseline GAN produces noticeable grid artifacts, especially on high‑frequency regions, whereas the dark‑channel‑enhanced generator yields sharper edges and cleaner textures without such artifacts.

In conclusion, the paper successfully integrates a classic image prior—dark channel—into a modern GAN framework by reformulating it as an L2 loss, thereby eliminating non‑differentiable components while preserving its regularizing effect. The resulting model is both lighter (fewer layers and parameters) and faster to train, yet delivers superior deblurring performance on synthetic and noisy real‑world images. This work exemplifies how domain‑specific priors can be harmoniously combined with deep learning to overcome limitations of purely data‑driven approaches.

Comments & Academic Discussion

Loading comments...

Leave a Comment