Attentive Statistics Pooling for Deep Speaker Embedding

This paper proposes attentive statistics pooling for deep speaker embedding in text-independent speaker verification. In conventional speaker embedding, frame-level features are averaged over all the frames of a single utterance to form an utterance-…

Authors: Koji Okabe, Takafumi Koshinaka, Koichi Shinoda

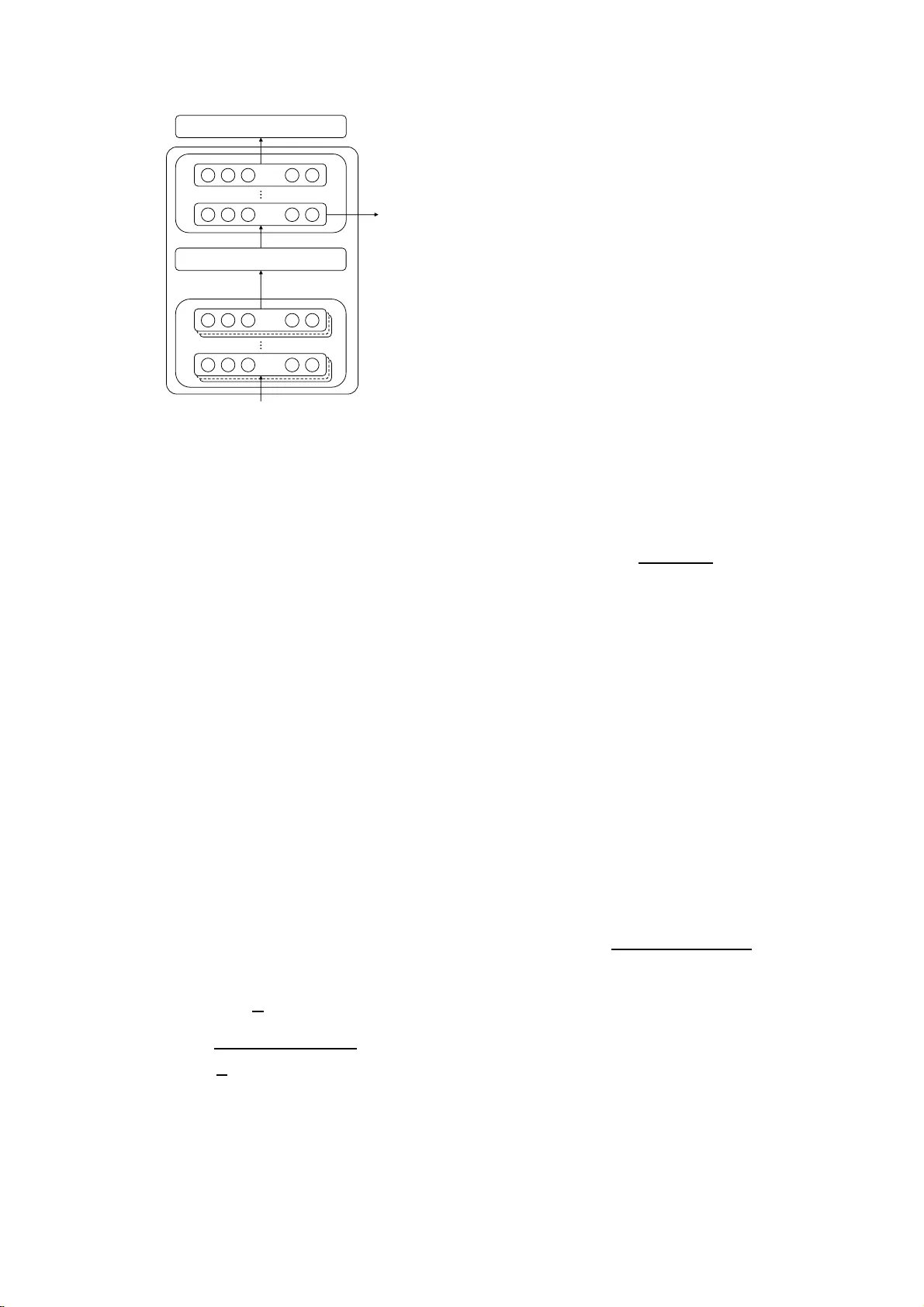

Attentiv e Statistics P ooling f or Deep Spe aker Embedding K oji Okabe 1 , T akafumi K oshin aka 1 , K oichi Shinoda 2 1 Data Science Research Laboratories, NEC Corporation, Japan 2 Department of Computer Science, T okyo Institute of T echnology , Japan k-okabe@bx.jp .nec.com, koshinak@ap.j p.nec.com, shinoda@c.ti tech.ac.jp Abstract This paper proposes attentiv e statistics pooling for deep speak er emb edding in te xt-independent speaker v erification. In con ventional speak er embedding, frame-lev el features are av- eraged ove r all the frames of a single utterance to form an utterance-le vel feature. Our metho d utilizes an attention mecha- nism to give different weights to different frames an d generates not only weighted means b ut also weighted standard dev iations. In this way , it can capture long - term v ariations in speak er char- acteristics more ef fectiv ely . An ev aluation on the NIST SRE 2012 and the V oxCeleb data sets sho ws t hat it reduces equal error rates (EE Rs) from the con ventional method by 7.5% and 8.1%, resp ectively . Index T erms : speak er recognition, deep neural networks, at- tention, statistics pooling 1. Intr oduction Speaker recognition has adv anced considerably in t he last decade with the i-vector paradigm [1], in which a spee ch ut- terance or a speaker is represented in the form of a fixed- low- dimensional fea ture v ector . W i t h the great success of deep learning over a wide range of ma chine learning tasks, including automatic speech recog ni- tion (ASR), an increasing number of research studies have intro- duced deep learnin g into feature extraction for speak er recogni- tion. In early studies [2, 3], deep neural networks (DNNs) de- riv ed from acoustic models for AS R have been employ ed as a uni versal background model (UBM) to provide phoneme pos- teriors as well as bottleneck features, which are used for , re- specti vely , zeroth- and first-order statistics in i-vector extrac- tion. Whil e they have shown better performance than con ven- tional UBMs ba sed o n Gaussian mixture models (GMMs), they hav e the drawback of lang uage dependency [4] and a lso require expen sive p honetic transcription s for trai ning [5]. Recently , DN N s hav e been sho wn to be useful for extract- ing speaker -discriminative feature vectors independently from the i-vector framew ork. W ith the help of large-scale training data, such approaches lead to better results, particularly under conditions of short-duration utterances. In fixe d-phrase te xt- dependen t speaker verification, an end-to-end neural network- based method has been proposed [6] in which Long Short-T erm Memory (LS T M) wi th a single output from the last frame is used to obtain utterance-lev el speaker features, and it has out- performed co nv entional i-vector extraction. In tex t-independent speak er verification, where inp ut utter- ances can hav e variable phrases and lengths, an average pool- ing layer has been i ntroduced to aggregate frame-le vel speak er feature vectors to obtain an utterance-le vel feature vector , i.e., speak er embedding, wi th a fixed number of dimensions. Most recent studies have sho wn that DNNs achie ve better accuracy than do i-vectors [7, 8]. Snyder et al. [9] employed an extension of a verage poo l ing, in which what they called statistics po oli ng calculated no t only the mean, b ut also the standard d e viation of frame-lev el features. T hey , howe ver , have not yet r eported the efficti veness of stand ard de viaion po oling to accuracy improve- ment. Other recent stud ies co nducted fro m a different perspectiv e [10, 11] hav e incorporated attention mechanisms [12]. It had pre viously produced significant improv ement in machine trans- lation. In the scenario of speaker recognition, an importance metric is computed by the small attention network that works as a part of the speaker embedding netwo rk. The importance is utilized for calculating the weighted mean of frame-lev el fea- ture v ectors. This mechanism enables sp eaker embedding to be focused on important frames and t o obtain long-term speaker representation with high er discriminati ve power . Such p rev ious work, ho w ever , has been e valuated only in such limited tasks as fixed-dura tion text-indepen dent [10] or text-depend ent speaker recognition [1 1]. In this paper , we propose a new pooling method, called attentiv e statistics pooling, that provides importance-weighted standard deviations as well as the weighted mean s of frame- lev el features, for which the importance is calculated by an at- tention mechanism. This enables speak er embedding to more accurately and efficiently capture speaker factors with respect to long-term va riations. T o the best of our kno wledge, this is the first attemp t reported in the literature to use attentiv e statis- tics pooling in t ext-indep endent and variable-du ration scenar- ios. W e have also ex perimentally shown, through comparisons of various pooling layers, the effecti veness of long-term speaker characteristics d erived fr om standard d e viations. The remainder of this paper is organized as follo ws: Section 2 describes a con ventional method f or extracting deep speaker embedding. Section 3 r e vie ws two extensions for the con ven- tional method, and then introduces the proposed speak er em- bedding method. The experimental setup and results are pre- sented in S ecti on 4. Section 5 summarizes our work and notes future p l ans. 2. Deep speak er embedding The con ventional DNN for extracting utterance-lev el speaker features consists of t hree blocks, as sho wn in F igure 1. The first blo ck is a frame-le vel feature e xtractor . The input to t his block is a sequence of acoustic features, e.g., MFCCs and filter-bank coefficients. After considering relativ ely short- term acoustic features, this block outputs frame-lev el features. Any type of neural network is applicable for the extractor , e.g., a T ime-Delay Neural Network (TDNN) [9], Con volutional Neu- ral Network (CNN) [7, 8], LST M [10, 11], or Gated Recurrent Unit (GR U) [ 8]. The second block is a pooling layer that con verts variable- length fr ame-level features into a fixed-dimensional vector . The Pooling layer Acoustic feature ଵ , ଶ , … , ் Frame-level feature ଵ , ଶ , … , ் Speaker IDs Utterance-level feature ⋯ ⋯ Frame-level feature extractor Utterance-level feature extractor ⋯ ⋯ Figure 1: DNNs for extra cting utteranc e-level speaker featur es most standard t ype of pooling layer obtains the average of all frame-lev el features (average pooling). The third block is an utterance-lev el feature extractor in which a number of fully-connected hidden layers are stacked. One of t hese hidden layers is often designed to have a smaller number of units (i.e., t o be a bottleneck layer), w hich forces the information brought from the preceding layer into a low- dimensional representation. The output is a softmax layer , and each of its output nodes corresponds to one speaker ID. For training, we employ back-prop agation with cross entropy loss. W e can then use bottleneck features in the third block as utterance-le vel features. S ome st udies refrain from using soft- max layers and achie ve end-to-end neural netw orks by using contrasti ve loss [7] or triplet loss [8 ]. Probabilistic linear dis- criminant analysis (PL D A) [13, 14 ] can also be used for mea- suring t he distance between two utterance-lev el features [9, 10]. 3. Higher -order pooling with attention The con ven tional speaker embedding described in the p rev ious section suggests the addition of two extensions of the pooling method: the use of higher -order statist i cs and the use of at- tention mechanisms. In this section we revie w both and then introduce our proposed pooling method, which we refer t o as attentiv e statistics pooling. 3.1. Statistics poo ling The statisti cs pooling layer [9] calculates t he mean vector µ as well as the second-order statisti cs as the st andard deviation vector σ over frame -lev el features h t ( t = 1 , · · · , T ). µ = 1 T T X t h t , (1) σ = v u u t 1 T T X t h t ⊙ h t − µ ⊙ µ , (2) where ⊙ represents the Hadamard product. The mean vector (1) which aggre gates frame-lev el features can be vie wed as the main body of utterance-le vel features. W e consider that the standard d ev iation (2) also plays an important role sinc e it con- tains other speaker characteristics in terms of t emporal variabil- ity ove r long contexts. LSTM is capable of taking relative ly long contexts into account, using its recurrent connections and gating functions. Howe ver , the scope of LSTM i s actually no more than a second ( ∼ 100 frames) due to the vanish ing gra- dient problem [15]. A standard deviation, which is potentially capable o f revealing any distance in a conte xt, can help speak er embedding cap t ure lo ng-term v ariability ov er an utterance. 3.2. Attention mechanism It is often the case that frame-le vel features of some frames are more u nique and imp ortant for discriminating spe akers than others i n a given utterance. Recent studies [10, 11] have applied attention mecha nisms to spe aker recog nition for the purpose of frame selection by automatically calculating the importance of each frame. An attention model works in conjunction with the original DNN and calculates a scalar score e t for each frame-level fea- ture e t = v T f ( W h t + b ) + k, (3) where f ( · ) is a non-linear acti vation f unction, such as a tanh o r ReLU function. The score i s normalized over all frames by a softmax function so a s to add up to the following unity: α t = exp( e t ) P T τ exp( e τ ) . (4) The normalized score α t is then used as the weight in the pooling lay er to calculate the weighted mean vector ˜ µ = T X t α t h t . (5) In this way , an utterance-lev el feature extracted from a weighted mean vector focuses on important frames and hence becomes m ore sp eaker discriminative. 3.3. Attentiv e statistics pooling The authors believ e that both higher -order stati st ics ( standard de viations as utterance-lev el features) and attention mecha- nisms are effecti ve for higher speaker discriminability . Hence, it would make sense to consider a ne w pooling method, atten- tiv e stat i stics pooling, wh ich produces b oth means and standard de viations with imp ortance weighting by means of attention, as illustrated in Figure 2. Here the weighted mean is gi ven b y (5), and the weighted stan dard de viation is defined as follows: ˜ σ = v u u t T X t α t h t ⊙ h t − ˜ µ ⊙ ˜ µ , (6) where t he w eight α t calculated by (4) is shared by both the weighted mean ˜ µ and weighted standard de viation ˜ σ . The weighted standard dev iation is thought to take the adv antage of both stati st ics pooling and attention, i .e., feature representa- tion in terms of long-term v ariations and frame selection in ac- cord with importance, bringing higher speaker discriminability to utterance-lev el features. Needless to say , as (6) is differen- tiable, DNNs with attenti ve statistics poo ling can be trained on the b asis of back-propagation . Frame-level features ࢎ ௧ ܶ frames W eights ߙ ௧ Attention model Attentive statistics pooling layer W eighte d mean ࣆ W eighte d standard deviation ࣌ Figure 2: Attentive statistics pooling 4. Experiments 4.1. Experimental setti ngs W e report here speaker verification accuracy w .r . t. the NIST SRE 2012 [ 16 ] Common Condition 2 (S RE12 CC2) and V ox- Celeb corpora [7]. Deep speake r embedding with our attentive statistics pooling is compared to that with con ventiona l statis- tics pooling and with attentive average pooling , as well as with traditional i-v ector extraction based on GMM-UBM. 4.1.1. i-vecto r system The baseline i-vector system uses 20-dimension al MFCCs for e very 10ms. Their d elta and delta-delta features were app ended to form 60-dimensiona l acoustic features. Sliding mean normal- ization with a 3-second windo w an d energy-based voice activity detection (V AD) were then applied, in that order . An i-vector of 400 dimensions was then extracted f rom the acoustic feature vectors, using a 2048-mixture UBM and a t otal v ariability ma- trix (TVM). Mean subtraction, whitening, and l ength normal- ization [1 7] were applied to the i-vector as pre-proc essing steps before sending it to the PLDA, and similarity was then ev aluated using a P LD A model with a speake r space o f 40 0 dimension s. 4.1.2. Dee p speake r embedding system W e used 20-dimensional MFCCs f or SRE12 ev aluation, and 40-dimensiona l MFCCs f or V oxCeleb ev aluation, for ev ery 10ms. S liding mean normalization wit h a 3-second windo w and energy -based V AD were then applied in the same way as was done with t he i -vector system. The network st ructure, except for its input dimen sions, w as exactly the same as the one shown in the recipe 1 published in Kaldi’ s official repository [18, 19]. A 5-layer TDNN with ReLU follo wed by batch no rmalization was used fo r extracting frame- lev el features. T he number of hidden nodes in each hidden layer was 512. The dimension of a frame-lev el feature f or pooling was 1500. Each frame-lev el feature was generated from a 15- frame co ntext of acoustic feature vectors. Pooling layer aggregates frame-le vel features, follo wed by 2 fully-connected l ayers with ReLU activ ation functions, batch normalization, and a softmax output layer . The 512- dimensional bottleneck features from the first fully-connected 1 egs/sre 16/v2 layer we re use d as speaker embe dding. W e tried four pooling techniques to ev aluate the effecti ve- ness of th e proposed method: (i) simple averag e pooling to pro- duce means only , (ii) statistics pooling to produce means and standard deviations, (ii i) attentive av erage pooling to produce weighted mean s, and (i v) our proposed attenti ve statistics poo l- ing. W e used ReLU followed by batch normalization f or acti - v ation function f ( · ) in (3 ) of the attention model. The number of h idden no des w as 64 . Mean subtraction, whitening, and l ength normalization were applied to the sp eaker embedding, as pre-processing steps before sending it to the PLDA, and similarit y was then ev aluated using a P LD A model with a speake r space o f 51 2 dimension s. 4.1.3. T raining and evaluation data In order to avoid condition mismatch, different tr aining data were used for each e valua tion task w .r .t. SRE12 CC2 and V ox- Celeb . For SRE12 e v aluation, t elephone recordings from SR E 04- 10, Swit chboard, a nd Fisher English were u sed as training data. W e also applied data augmentation to the trai ning set in the fol- lo wing w ays: (a) Additi ve n oise: each segment w as mix ed wi th one of the noise samples i n the PRISM corpus [20] (SN R : 8, 15, or 2 0dB), (b) Rev erberation: each se gment was conv olved with one of the room impulse responses in t he REVERB challenge data [21], (c) Speech encoding: each segment was encoded with AMR codec (6.7 or 4.75 kbps). The e valuation set we used was SRE12 Common Condition 2 (CC2), which is kno wn as a typi- cal su bset o f telepho ne con versations without added noise. For V oxCeleb e valuation, the de velopme nt and t est sets de- fined in [7] were respectiv ely used as training data and ev alua- tion data. The number of speakers in the training and e v aluation sets were 1,206 and 40, respectively . The number of segments in training and e valuation sets were 140,286 and 4 , 772, respec- tiv ely . Note that these numbers are slightly smaller than those reported in [7] due to a few dead links o n the o fficial downloa d server . W e also used the data augmentation (a) and (b) men- tioned above. W e report here results in terms of equal error rate (EER) and the minimum of the normalized detection cost function, for which we assume a prior target probability P tar of 0.01 (DCF10 -2 ) or 0.001 (DCF10 -3 ), and equal weights of 1.0 be- tween m isses C miss and false alarms C f a . 4.2. Results 4.2.1. NIST SRE 2012 T able 1 shows the performance on NIST SRE12 CC2. In the “Embedding” column, averag e [7, 8] denotes average pooling that used only means, attention [10, 11] used weighted means scaled by attention (attentiv e av erage pooling), stati stics [9] used both means and standard deviations (st atistics pooling), and attentive statistics is the proposed method (attentiv e statis- tics pooling), which used both weighted means and weighted standard d ev iations scaled by attention. In co mparison to av erage pooling, which u sed only mea ns, the add ition of attention was superior in terms of all e valua- tion measures. Surprisingly , the addition of standard devia- tions was even more effecti ve than that of attention. This i n- dicates t hat long-term information is quite important in text- independe nt speak er verification. Further, the proposed atten- tiv e statistics pooling r esulted in the best EER as well as minD- CFs. In terms of EER , it was 7.5% better than statistics pooling. T able 1: P erformance on NIST SRE 2012 Common Condition 2. Boldface denotes the best performance for each column. Embedding DCF10 -2 DCF10 -3 EER (%) i-vector 0.169 0.291 1.50 av erage [7, 8 ] 0.290 0.484 2.57 attention [ 10 , 11 ] 0.228 0.399 1.99 statistics [9 ] 0.183 0.331 1.58 attentiv e statistics 0.170 0.309 1.47 T able 2: EERs (%) for each duration on NIST SRE 2012 Com- mon Condition 2. Boldface denotes t he best performance for each column. Embedding 30s 100s 300s Pool i-vector 2.66 1.09 0.58 1.50 av erage [7, 8 ] 3.58 2.07 1.86 2.57 attention [ 10 , 11 ] 3.00 1.58 1.27 1.99 statistics [9 ] 2.49 1.25 0.82 1.58 attentiv e statistics 2.46 1.07 0.80 1.47 This reflects the effect of using both long-context and frame- importance. The traditional i-vector systems, ho wever , per- formed better than speaker embed ding-based systems, except for performance w .r .t. EER . This seems to hav e been because the SRE12 C C2 task consisted of long-utterance tr i als in which durations of t est utterances were from 30 seconds to 300 sec- onds and durations of multi- enrollment utterances were longer than 300 seconds. T able 2 shows comparisons of EERs for seve ral durations on NI S T SRE12 CC2. W e can see that deep sp eaker embedding of fered rob ustness in short-duration trials. Al though i -vector of fered the best performance under the long est-duration con- dition (300s), our att entiv e statistics pooling achiev ed the best under all other conditions, with better error rates than those of statistics pooling under all conditions, including Pool (ove rall av erage). Only att entiv e statistics pooling sho wed b etter perfor- mance than i- vectors on both 30-second trials and 100-second trials. 4.2.2. V oxCeleb T able 3 shows performance on the V oxCeleb test set. Here, also, the a ddition of both attention and of stan dard deviations helped improv e performance. As in the SRE12 CC2 case, standard de - viation addition had a l arger impact t han that of attention. The proposed attentiv e statistics pooling achie ved the best perfor- mance in all e valuation measures and w as 8.1% better in terms of EER than statist ics pooling. This may ha ve been because the durations were shorter t han those with SRE 12 CC2 (about 8 seconds on averag e in the ev aluation), and speaker embed- ding outperformed i-vectors, as well. It should be noted that compared to the ba seline performance shown in [7], whose be st EER was 7.8%, our experimental systems achie ved much better performance, ev en though w e used sl i ghtly smaller training an d e v aluation sets due to lack of certain videos. T able 3: P erformance on V oxCeleb . Boldface denotes the best performance for eac h column. Embedding DCF10 -2 DCF10 -3 EER (%) i-vector 0.479 0.595 5.39 av erage [7, 8 ] 0.464 0.550 4.70 attention [ 10 , 11 ] 0.443 0.598 4.52 statistics [9 ] 0.413 0.530 4.19 attentiv e statistics 0.406 0.513 3.85 5. Summary and Futur e W ork W e have proposed attentiv e statisti cs pooling for extracting deep speaker embedding. The proposed poo ling layer calcu- lates weighted means and weighted standard dev iations over frame-lev el features sca led by an attention model. This enables speak er embedding to focus only on important frames. More- ov er, long-term variations can be obtained a s speaker character- istics in the standard deviations. Such a combination of att en- tion and st andard deviations produces a synergetic effect to give deep speaker embedding higher discriminativ e power . T ext- independe nt speaker verification expe riments on NIST SRE 2012 and V oxCeleb ev aluation sets showed that it reduced EE Rs from a conv entional method by , respectiv ely , 7.5% and 8.1% for the two sets. W hile w e have achie ved considerable improve- ment under both short- and long-duration conditions, i-vectors are still comp etitiv e for long durations (e.g., 300s in SRE12 CC2). P ursuing ev en better accuracy under such conditions is an i ssue for our f uture w ork. 6. Refer ences [1] N. Dehak, P . J. K enny , R. Dehak, P . Dumouchel, and P . Ouellet, “Front-en d factor analy sis for speake r verificat ion, ” IEEE T rans- actions on Audio, Speech, and Langua ge Proc essing , vol. 19, no. 4, pp. 788–798, 2011. [2] Y . Lei, N. Sche ffer , L. Ferrer , and M. Mc L aren, “ A n ov el scheme for speaker recognition using a phonetical ly-aware dee p neu ral netw ork, ” in Proc. ICASSP , 2014, pp. 1695–1699. [3] M. McLaren, Y . L ei, and L . Ferrer , “ Adv ances in deep neural net- work approaches to speaker recognit ion, ” in Pro c. ICASSP , 2015, pp. 4814–4818. [4] H. Zheng, S. Zhang, and W . L iu, “Exploring robustness of DNN/RNN for ext racting speaker Baum-W elch statistics in m is- matched conditio ns, ” in Proc . Inter speec h , 2015, pp. 1161–1165. [5] Y . Tian , M. Cai, L. He, W .-Q. Zhang, and J. Liu, “Improving deep neural network s base d speaker verificatio n using unlabeled data . ” in P r oc. Interspeec h , 2016, pp. 1863–1867. [6] G. Heigold, I. Moreno, S. Bengio, and N. Shazeer , “End-to-en d tex t-depend ent speaker ver ificati on, ” in Pr oc. ICASSP , 2016, pp. 5115–5119. [7] A. Nagra ni, J. S. Ch ung, and A. Zisserman, “V oxCele b: A lar ge- scale speaker identi fication dataset, ” in P r oc. Interspee ch , 2017, pp. 2616–2620. [8] C. Li, X. Ma, B. Jiang, X. Li, X. Z hang, X. Liu, Y . Cao, A. Kan- nan, and Z. Zhu, “Deep speaker : an end-to-end neural s peaker embedding system, ” arX iv prep rint arXiv:1705.02304 , 2017. [9] D. Snyder , D. Garcia-Romero , D. Povey , and S. Khudanpur , “Deep neural network embeddings for text-inde pendent speaker veri fication , ” in P r oc. Interspee ch , 2017, pp. 999–1003. [10] G. Bhattachary a, J. Alam, and P . Kenn y , “Deep speaker em- beddings for short-durat ion speaker veri fication , ” in Proc . Inter- speec h , 2017, pp. 1 517–1521. [11] F . Chowdhury , Q. W ang, I. L. Moreno, and L. W an, “ Attention- based models for text-depe ndent speaker verificati on, ” arXiv pre print a rXiv:1710.10470 , 2017. [12] C. Raf fel and D. P . Ellis, “Feed-forw ard netw orks with at ten- tion can solve some long-term memory problems, ” arXiv pr eprint arXiv:1512.08756 , 2015. [13] S. Iof fe, “Proba bilisti c linear d iscrimina nt analysi s, ” in Europ ean Confer ence on Compu ter V ision . Springe r , 2006, pp. 531–542. [14] S. J. Prince and J. H. Elder , “Probabili s tic linear discriminant anal- ysis for inferenc es about identity , ” in P r oc. ICCV , 2007, pp. 1–8. [15] G. Bhattacha rya, J. Alam, T . Stafylakis, and P . Kenn y , “Deep neu- ral network based text -depende nt speaker recogniti on: Prelimi- nary results, ” in Proc. Ody s se y , 20 16, pp. 9–15. [16] C. S. Greenber g, V . M. Stanford, A. F . Martin, M. Y adagiri, G. R. Doddingto n, J. J . Godfre y , and J . Hernandez-C ordero, “The 2012 NIST speak er recognit ion e va luati on. ” in P r oc. Inter s peec h , 20 13, pp. 1971–1975. [17] D. Garcia-Romero and C. Y . Espy-W ilson, “ Analysis of i-vec tor length normalizat ion in speaker recognition systems, ” in Pr oc. In- terspe ech , 2011, pp. 249–252. [18] D. Po vey , A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P . Motlicek, Y . Qia n, P . Sch warz e t a l. , “The Kaldi speech recognition toolkit, ” in IE EE 2011 workshop on auto matic speec h recog nition and under standing (ASR U) , no. EPFL-CONF-192584. IEEE Signal Processing Society , 2011. [19] D. Sn yder , D. Garcia-Ro mero, G. Sell, D. Pove y , and S. Khudan- pur , “X-vector s: Robust DNN embeddings for speaker recogni- tion, ” in Proc . ICASSP , 2018. [20] L. Ferrer , H. Bratt, L. Burget, H. Cernock y , O. Glembek, M. Gra- ciaren a, A. Lawson, Y . Lei, P . Matejka, O. Plchot et al. , “Pro- moting robustness for speake r m odeling in the community: the PRISM ev aluation set, ” in Procee dings of NIST 2011 workshop , 2011. [21] K. Kinoshita, M. Delcroix, S. Gannot, E. A. Habets, R. Haeb- Umbach, W . Kell ermann, V . Leutnant , R. Maas, T . Naka tani, B. Raj et al. , “ A summary of the REVERB challenge: state-of- the-art and remaining challenges in reve rberant speech processing research , ” EURASIP Journal on A dvances in Signal Proc essing , vol. 2016, no. 1, p. 7, 2016.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment