Marathon Environments: Multi-Agent Continuous Control Benchmarks in a Modern Video Game Engine

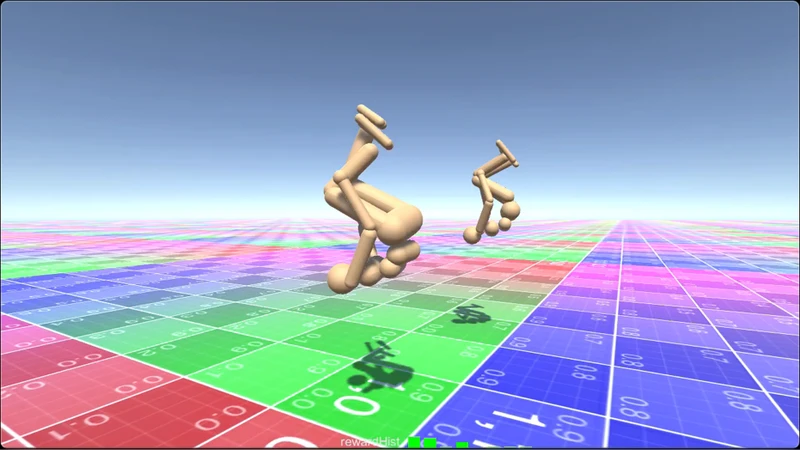

Recent advances in deep reinforcement learning in the paradigm of locomotion using continuous control have raised the interest of game makers for the potential of digital actors using active ragdoll. Currently, the available options to develop these ideas are either researchers’ limited codebase or proprietary closed systems. We present Marathon Environments, a suite of open source, continuous control benchmarks implemented on the Unity game engine, using the Unity ML-Agents Toolkit. We demonstrate through these benchmarks that continuous control research is transferable to a commercial game engine. Furthermore, we exhibit the robustness of these environments by reproducing advanced continuous control research, such as learning to walk, run and backflip from motion capture data; learning to navigate complex terrains; and by implementing a video game input control system. We show further robustness by training with alternative algorithms found in OpenAI.Baselines. Finally, we share strategies for significantly reducing the training time.

💡 Research Summary

The paper introduces Marathon Environments, an open‑source suite of continuous‑control benchmarks built on the Unity game engine and its ML‑Agents Toolkit. By leveraging Unity’s high‑performance physics and graphics pipeline, the authors bridge the gap between academic reinforcement‑learning (RL) research, which traditionally relies on specialized simulators such as MuJoCo or PyBullet, and commercial game development, where access to flexible, real‑time physics is limited.

The core of Marathon Environments is an “active ragdoll” humanoid model with 34 degrees of freedom and torque‑controlled joints. Motion‑capture (mocap) data is retargeted in real time using Unity’s Animator and inverse‑kinematics system, allowing the agent to learn directly from torques rather than joint positions. The environment also includes a procedural terrain generator that creates varied, randomised landscapes (uneven hills, slippery surfaces, moving obstacles) through noise functions and parameterised blocks. This domain randomisation forces policies to generalise beyond a single static level.
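To make the idea of noise-driven terrain concrete, the following is a minimal sketch of a seeded height profile built from layered 1-D value noise. It is not the suite's Unity implementation: the function names, the lattice size of 64, and the octave, frequency, and height parameters are all assumptions chosen for illustration.

```python
import math
import random


def value_noise_1d(x, lattice):
    """Interpolate smoothly between random lattice values (1-D value noise)."""
    cell = math.floor(x)
    left = lattice[cell % len(lattice)]
    right = lattice[(cell + 1) % len(lattice)]
    t = x - cell
    smooth = t * t * (3.0 - 2.0 * t)  # smoothstep easing between lattice points
    return left * (1.0 - smooth) + right * smooth


def generate_height_profile(num_samples, seed, max_height=1.0,
                            octaves=3, base_frequency=0.1):
    """Return a list of terrain heights in [-max_height, max_height].

    Hypothetical helper: each seed yields a different but reproducible
    terrain, which is the property domain randomisation relies on.
    """
    rng = random.Random(seed)
    lattice = [rng.uniform(-1.0, 1.0) for _ in range(64)]
    heights = []
    for n in range(num_samples):
        height, amplitude, frequency, total = 0.0, 1.0, base_frequency, 0.0
        for _ in range(octaves):
            height += amplitude * value_noise_1d(n * frequency, lattice)
            total += amplitude
            amplitude *= 0.5   # each octave contributes half as much
            frequency *= 2.0   # and varies twice as fast
        heights.append(max_height * height / total)
    return heights


if __name__ == "__main__":
    # A new seed per episode gives the agent a new landscape to cross.
    for episode_seed in (1, 2):
        profile = generate_height_profile(5, seed=episode_seed)
        print(episode_seed, [round(h, 3) for h in profile])
```

Drawing a fresh seed at every episode reset is one simple way to ensure the policy never trains on the same level twice.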

Reward functions are task‑specific. For walking and running, the reward combines forward velocity, energy efficiency, and posture stability. The back‑flip task adds a rotation‑angle term and a landing‑stability term, supplemented by a pose‑matching loss that penalises deviation from the target mocap pose (implemented with a Huber loss for robustness). Terrain‑navigation rewards encourage distance reduction to a goal while penalising collisions. All tasks employ a curriculum‑learning schedule: agents start on flat ground with low speed targets and gradually face steeper slopes, higher speeds, and more complex obstacles.
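The reward structure described above can be sketched as follows. This is an illustration under stated assumptions, not the paper's code: the weights, the use of squared torques as the energy term, the torso-tilt posture term, and the linear curriculum schedule are all hypothetical choices. Only the Huber loss itself follows its standard definition.

```python
def huber(error, delta=1.0):
    """Standard Huber loss: quadratic near zero, linear for large errors."""
    abs_err = abs(error)
    if abs_err <= delta:
        return 0.5 * abs_err * abs_err
    return delta * (abs_err - 0.5 * delta)


def pose_matching_loss(joint_angles, target_angles, delta=1.0):
    """Sum of per-joint Huber losses against a reference (mocap) pose."""
    return sum(huber(q - q_ref, delta)
               for q, q_ref in zip(joint_angles, target_angles))


def locomotion_reward(forward_velocity, torques, torso_tilt, pose_loss=0.0,
                      w_velocity=1.0, w_energy=0.01, w_posture=0.5, w_pose=0.1):
    """Weighted sum of velocity, energy, posture, and pose terms.

    All weights are placeholder assumptions, not values from the paper.
    """
    energy = sum(t * t for t in torques)  # assumed proxy for energy use
    return (w_velocity * forward_velocity
            - w_energy * energy
            - w_posture * abs(torso_tilt)
            - w_pose * pose_loss)


def curriculum_stage(progress, max_slope_deg=20.0, min_speed=0.5, max_speed=3.0):
    """Map training progress in [0, 1] to task difficulty (assumed linear)."""
    p = min(max(progress, 0.0), 1.0)
    return {
        "slope_deg": p * max_slope_deg,
        "target_speed": min_speed + p * (max_speed - min_speed),
    }


if __name__ == "__main__":
    loss = pose_matching_loss([0.5, 3.0], [0.0, 0.0])
    print("pose loss:", loss)
    print("reward:", locomotion_reward(1.2, [0.3, -0.4], 0.05, pose_loss=loss))
    print("start of training:", curriculum_stage(0.0))
    print("end of training:", curriculum_stage(1.0))
```

The Huber loss grows only linearly for large pose errors, so a single badly mismatched joint does not dominate the reward the way it would under a squared-error penalty.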

Algorithmically, the authors evaluate several state‑of‑the‑art RL methods from OpenAI Baselines—Proximal Policy Optimization (PPO), Soft Actor‑Critic (SAC), and Twin‑Delayed DDPG (TD3). They exploit Unity’s built‑in Profiler and GPU‑accelerated physics to run 64–128 vectorised environments in parallel, achieving batch sizes of 1024–2048. Mixed‑precision (FP16) training reduces memory bandwidth, allowing higher throughput. The physics timestep is reduced from the default 0.02 s to 0.01 s, improving simulation fidelity without a proportional increase in wall‑clock time.
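The relationship between the number of environments, the batch size, and the physics timestep can be illustrated with a toy example. The sketch below assumes a rollout horizon of 16 steps per environment, which is one way 64 and 128 environments would yield batches of 1024 and 2048; the summary does not state the horizon. `PointMassEnv` and `collect_batch` are hypothetical stand-ins, not part of Unity ML-Agents or OpenAI Baselines, and the loop steps environments sequentially rather than in parallel.

```python
class PointMassEnv:
    """Toy 1-D point mass driven by a force; stands in for a physics scene."""

    def __init__(self, dt):
        self.dt = dt
        self.position = 0.0
        self.velocity = 0.0

    def step(self, force):
        # Semi-implicit Euler integration with unit mass.
        self.velocity += force * self.dt
        self.position += self.velocity * self.dt
        reward = self.velocity  # reward forward motion
        return (self.position, self.velocity), reward


def collect_batch(num_envs, horizon, dt, policy):
    """Step every environment `horizon` times and return all transitions."""
    envs = [PointMassEnv(dt) for _ in range(num_envs)]
    observations = [(0.0, 0.0)] * num_envs
    batch = []
    for _ in range(horizon):
        for i, env in enumerate(envs):
            action = policy(observations[i])
            next_obs, reward = env.step(action)
            batch.append((observations[i], action, reward, next_obs))
            observations[i] = next_obs
    return batch


def physics_steps_per_second(dt):
    """Number of physics updates needed to simulate one second."""
    return round(1.0 / dt)


if __name__ == "__main__":
    constant_push = lambda obs: 1.0  # trivial placeholder policy
    for num_envs in (64, 128):
        batch = collect_batch(num_envs, horizon=16, dt=0.01, policy=constant_push)
        print(num_envs, "envs ->", len(batch), "transitions per batch")
    for dt in (0.02, 0.01):
        print("dt =", dt, "->", physics_steps_per_second(dt), "physics steps per second")
```

Halving the timestep from 0.02 s to 0.01 s doubles the physics updates needed per simulated second, from 50 to 100, so any wall-clock saving has to come from elsewhere, such as running more environments at once.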

Empirical results show that, on identical hardware (NVIDIA RTX 3080, 32 GB RAM), Marathon Environments converge 2.3× faster than comparable MuJoCo benchmarks. In the complex‑terrain navigation task, policies achieve a 95 % success rate across unseen terrains. The back‑flip policy reaches an 80 % success rate within one million steps, a notable improvement over prior work that required several million steps. Importantly, the same policies trained with SAC or TD3 exhibit comparable performance, demonstrating that the benchmark is algorithm‑agnostic.

The paper also provides practical tips for reducing training time: (1) halve the physics timestep to increase accuracy, (2) scale vectorised environments to 128 instances to maximise GPU utilisation, and (3) employ mixed‑precision training to cut memory traffic and boost batch processing speed. Together, these strategies cut total training time by roughly 40 % compared to a naïve implementation.

In conclusion, Marathon Environments validates that modern commercial game engines can serve as robust platforms for continuous‑control RL research. The suite offers realistic physics, high‑fidelity visual feedback, and a flexible API that game developers can integrate directly into production pipelines. Future work suggested includes extending the framework to multi‑agent cooperation, real‑time player‑input interaction, and higher‑resolution visual‑physics coupling, thereby further narrowing the divide between academic RL breakthroughs and interactive game design.
