Learning Topological Representation for Networks via Hierarchical Sampling

Topological information is essential for studying the relationships between nodes in a network. Recently, Network Representation Learning (NRL), which projects a network into a low-dimensional vector space, has shown its advantages in analyzing large-scale networks. However, most existing NRL methods are designed to preserve the local topology of a network and fail to capture its global topology. To tackle this issue, we propose a new NRL framework, named HSRL, to help existing NRL methods capture both the local and global topological information of a network. Specifically, HSRL recursively compresses an input network into a series of smaller networks using a community-aware compression strategy. Then, an existing NRL method is used to learn node embeddings for each compressed network. Finally, the node embeddings of the input network are obtained by concatenating the node embeddings from all compressed networks. Empirical studies for link prediction on five real-world datasets demonstrate the advantages of HSRL over state-of-the-art methods.

💡 Research Summary

The paper addresses a fundamental limitation of most contemporary network representation learning (NRL) methods: while they excel at preserving local topological information, they largely ignore the global structure of the graph. To bridge this gap, the authors propose a novel framework called HSRL (Hierarchical Sampling Representation Learning). The core idea is to compress the original graph into a hierarchy of smaller graphs that reflect the community structure at multiple scales, then learn embeddings on each compressed graph using any existing NRL algorithm (e.g., DeepWalk, node2vec, LINE), and finally concatenate the embeddings from all levels to obtain the final representation for each original node.

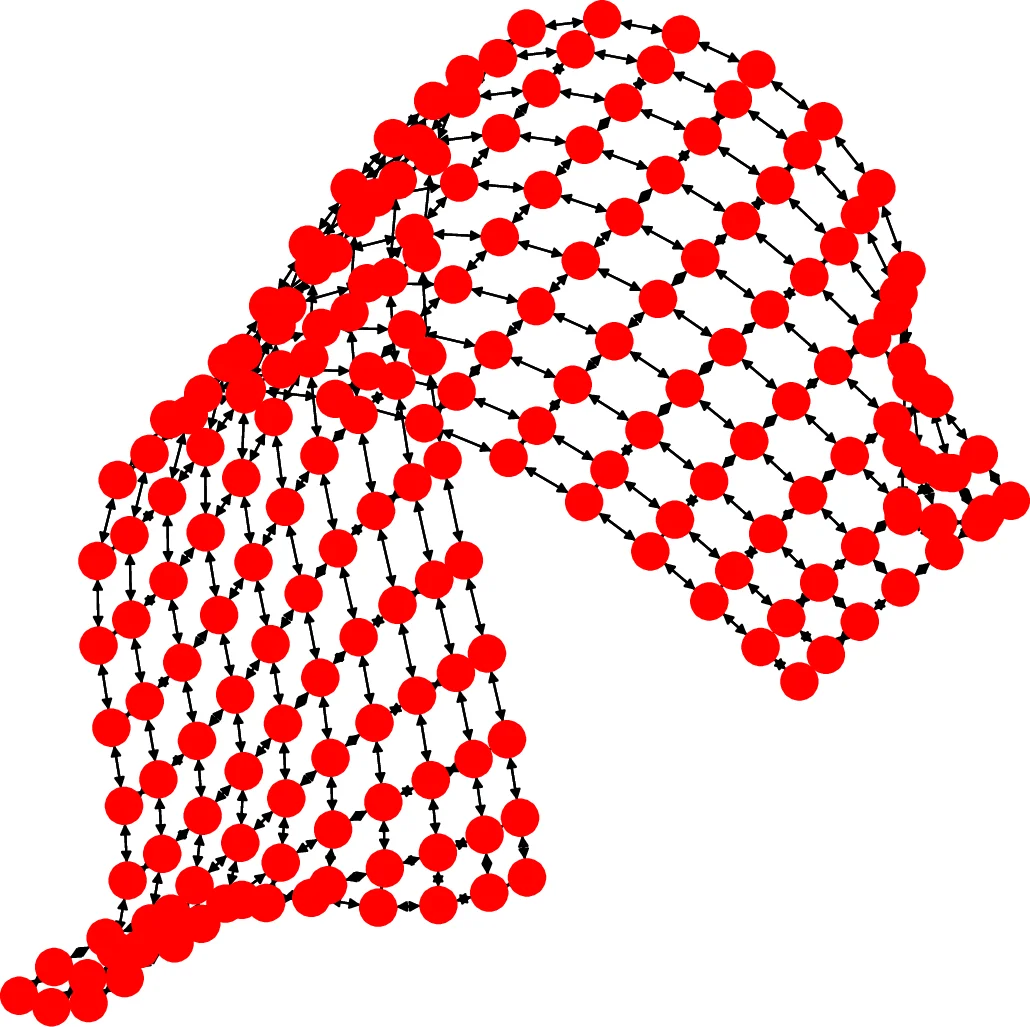

The hierarchical compression process, termed “hierarchical sampling,” is inspired by the Louvain method for modularity optimization. Starting from the original graph G₀, the algorithm repeatedly performs two phases: (1) Modularity Optimization – each node initially forms its own community; neighboring nodes are merged if the merge increases the overall modularity, and this is repeated until a local maximum is reached. (2) Node Aggregation – each discovered community becomes a super‑node in the next‑level graph G₁, and edge weights between super‑nodes are the sum of the weights of edges crossing the corresponding communities. By iterating these two phases K times, a sequence of graphs G₀, G₁, …, G_K is produced, each exposing a different resolution of the original network’s global topology. Unlike HARP, which merges arbitrary adjacent nodes and can blur community boundaries, HSRL’s community‑aware compression preserves the high‑level structure.
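The node aggregation phase and the modularity score it relies on can be sketched in plain Python. This is a minimal illustration, not the authors' implementation: the function names `modularity` and `aggregate`, the edge-dictionary format, and the choice to keep intra-community weight as a self-loop on the super-node are all assumptions made for the example. The local-move search of the modularity optimization phase is omitted; the partition is supplied by hand.

```python
from collections import defaultdict


def modularity(edges, community):
    """Newman modularity Q of an undirected weighted graph.

    edges: dict mapping (u, v) -> weight, each undirected edge listed once;
           self-loops (u, u) are allowed and count twice toward the degree.
    community: dict mapping node -> community label.
    """
    m = sum(edges.values())        # total edge weight
    internal = defaultdict(float)  # edge weight inside each community
    degree = defaultdict(float)    # summed weighted degree per community
    for (u, v), w in edges.items():
        degree[community[u]] += w
        degree[community[v]] += w
        if community[u] == community[v]:
            internal[community[u]] += w
    return sum(internal[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)


def aggregate(edges, community):
    """Collapse each community into a super-node (hypothetical helper).

    The weight between two super-nodes is the sum of the weights of the
    edges crossing the two communities. Intra-community weight is kept as
    a self-loop (an assumption of this sketch) so modularity is preserved.
    """
    coarse = defaultdict(float)
    for (u, v), w in edges.items():
        cu, cv = community[u], community[v]
        key = (cu, cv) if cu <= cv else (cv, cu)
        coarse[key] += w
    return dict(coarse)


# Toy graph: two triangles {0, 1, 2} and {3, 4, 5} joined by the edge (2, 3).
g0 = {(0, 1): 1.0, (1, 2): 1.0, (0, 2): 1.0,
      (3, 4): 1.0, (4, 5): 1.0, (3, 5): 1.0,
      (2, 3): 1.0}
partition = {0: 0, 1: 0, 2: 0, 3: 1, 4: 1, 5: 1}

g1 = aggregate(g0, partition)
q0 = modularity(g0, partition)
# Every super-node of g1 is its own community at the next level.
q1 = modularity(g1, {0: 0, 1: 1})
```

With the self-loop convention above, the coarse graph scored with one community per super-node has the same modularity as the original graph scored with the partition, so repeating the two phases on `g1` continues the search from where the previous level stopped.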

After obtaining the hierarchy, any NRL mapping function f can be applied independently to each G_k, yielding embeddings Z_k. For a node v_i in the original graph, its final embedding Z_i is formed by concatenating the embeddings of the communities to which v_i belongs at each level.
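The concatenation step can be sketched as follows. This is an illustrative example under stated assumptions: the function name `concat_hierarchical_embeddings`, the list-of-dicts data layout, and the toy embedding values are hypothetical, and the per-level embeddings are taken as given rather than learned by an actual NRL method.

```python
def concat_hierarchical_embeddings(level_embeddings, memberships):
    """Build final node vectors by concatenating across hierarchy levels.

    level_embeddings: list of dicts; level_embeddings[k][x] is the embedding
        (list of floats) of node x in the level-k graph.
    memberships: list of dicts; memberships[k][x] is the super-node in the
        level-(k+1) graph that contains node x of the level-k graph.
        Its length is one less than len(level_embeddings).
    Returns a dict mapping each original node to its concatenated vector.
    """
    final = {}
    for v in level_embeddings[0]:
        vec = []
        current = v  # the node (or super-node) representing v at level k
        for k, emb in enumerate(level_embeddings):
            vec.extend(emb[current])
            if k < len(memberships):
                current = memberships[k][current]  # climb one level
        final[v] = vec
    return final


# Toy hierarchy: three original nodes, two super-nodes at level 1.
z0 = {"a": [0.1, 0.2], "b": [0.3, 0.4], "c": [0.5, 0.6]}
z1 = {0: [1.0, 2.0], 1: [3.0, 4.0]}
member0 = {"a": 0, "b": 0, "c": 1}

z = concat_hierarchical_embeddings([z0, z1], [member0])
```

Nodes `a` and `b` share the level-1 part of their vectors because they fall in the same community, which is how the coarser levels contribute global structure to the final representation.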
