ACTRCE: Augmenting Experience via Teacher’s Advice For Multi-Goal Reinforcement Learning

Sparse reward is one of the most challenging problems in reinforcement learning (RL). Hindsight Experience Replay (HER) attempts to address this issue by converting a failed experience to a successful one by relabeling the goals. Despite its effectiveness, HER has limited applicability because it lacks a compact and universal goal representation. We present Augmenting experienCe via TeacheR’s adviCE (ACTRCE), an efficient reinforcement learning technique that extends the HER framework using natural language as the goal representation. We first analyze the differences among goal representations, and show that ACTRCE can efficiently solve difficult reinforcement learning problems in challenging 3D navigation tasks, whereas HER with a non-language goal representation fails to learn. We also show that with language goal representations, the agent can generalize to unseen instructions, and even generalize to instructions with unseen lexicons. We further demonstrate that it is crucial to use hindsight advice to solve challenging tasks, and that even a small amount of advice is sufficient for the agent to achieve good performance.

💡 Research Summary

This paper introduces ACTRCE (Augmenting experienCe via TeacheR’s adviCE), a novel reinforcement learning (RL) technique designed to address the pervasive challenge of sparse rewards. The method builds upon and significantly extends the Hindsight Experience Replay (HER) framework by employing natural language as a universal and compact goal representation.

The core problem in sparse-reward RL is that an agent receives feedback (a positive reward) only upon complete success, making learning extremely inefficient. HER mitigates this by relabeling failed trajectories with alternative goals that were achieved during the episode, effectively creating successful experiences from failures. However, HER’s applicability is limited by its reliance on a hand-designed goal representation, often a subset of the state space (e.g., object coordinates), which can be redundant and uninformative and which generalizes poorly.

ACTRCE overcomes this limitation by leveraging natural language. The algorithm operates as follows: After an agent completes an episode, a “teacher” module provides advice in natural language, describing a goal that was actually achieved in the final state (e.g., “reached the blue torch”). This advice serves as a new, alternative goal. The original trajectory is then relabeled with this linguistic goal and a corresponding positive reward, creating a new, successful experience that is stored in the replay buffer. This process augments the sparse reward signal with dense, language-grounded feedback.
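The relabeling step described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `Transition` record, the `toy_teacher` lookup table, and the choice to place the positive reward only on the final transition are all assumptions made for the example.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Optional, Tuple

State = Tuple[int, int]


@dataclass(frozen=True)
class Transition:
    """One step of experience, conditioned on a natural-language goal."""
    state: State
    action: str
    next_state: State
    goal: str
    reward: float


def toy_teacher(final_state: State) -> Optional[str]:
    """Hypothetical teacher: describes what was reached, or None if nothing was."""
    objects = {(2, 3): "reached the blue torch", (0, 4): "reached the red pillar"}
    return objects.get(final_state)


def relabel_with_advice(
    episode: List[Transition],
    teacher: Callable[[State], Optional[str]],
    success_reward: float = 1.0,
) -> List[Transition]:
    """Return a copy of the episode relabeled with the teacher's advice.

    Assumption: only the last transition receives the positive reward;
    earlier transitions get zero reward under the new goal.
    """
    if not episode:
        return []
    advice = teacher(episode[-1].next_state)
    if advice is None:
        return []  # the teacher has nothing to say about this episode
    last = len(episode) - 1
    return [
        replace(t, goal=advice, reward=success_reward if i == last else 0.0)
        for i, t in enumerate(episode)
    ]
```

In a training loop, both the original episode and the list returned by `relabel_with_advice` would be appended to the replay buffer, so a trajectory that failed under its original instruction still contributes one successful example under the advised goal.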

The authors conduct comprehensive experiments in two challenging environments: a 2D KrazyGrid World and a complex 3D navigation task in VizDoom. The results demonstrate several key advantages:

- Effectiveness of Language Representation: Compared to non-language baselines and simple one-hot encoding of language, ACTRCE with GRU or pre-trained word embeddings efficiently solves tasks where HER with standard goal representations fails completely.

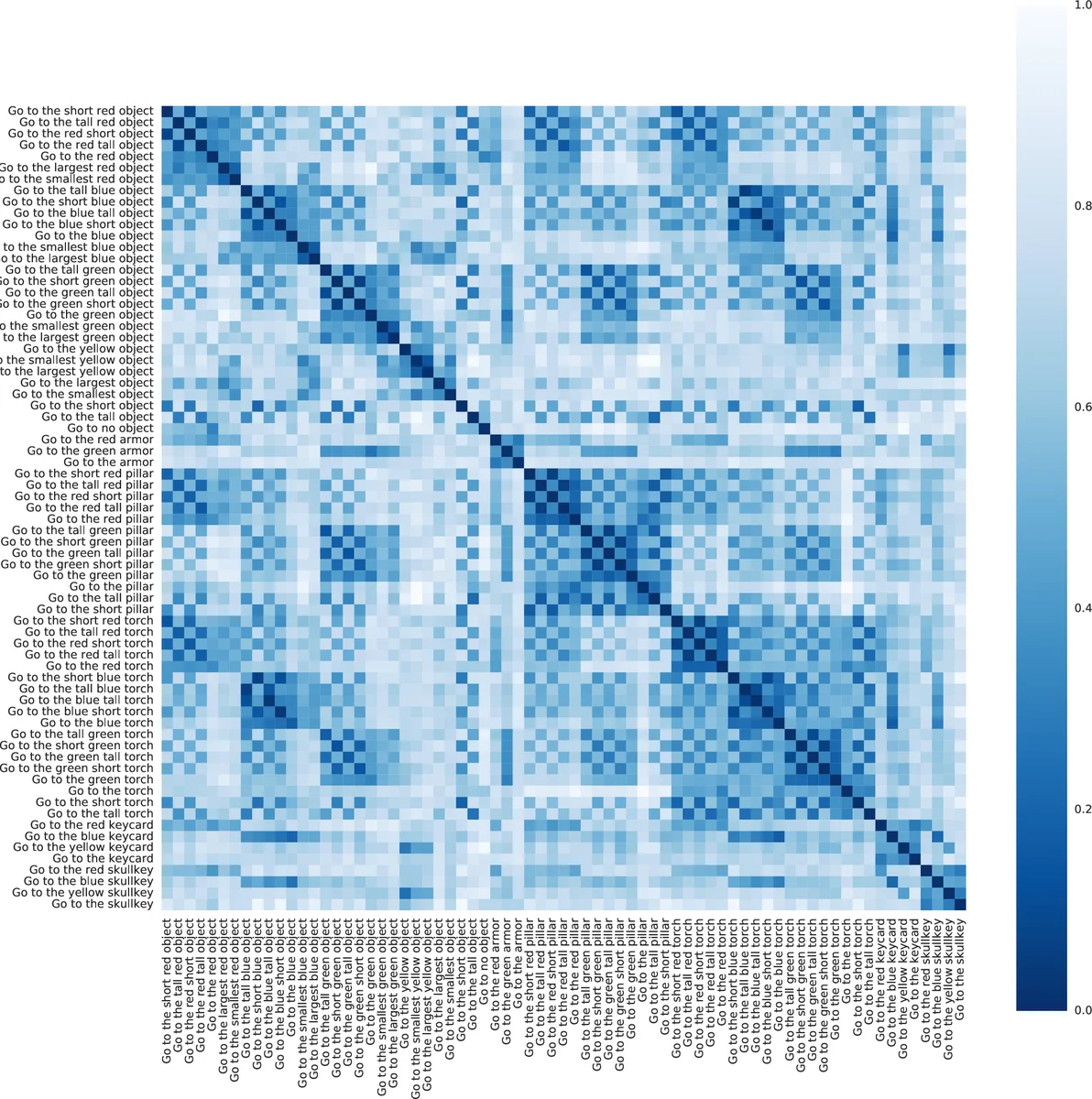

- Generalization to Unseen Instructions: The agent can successfully follow instructions that were not seen during training, such as novel combinations of attributes and objects. Remarkably, when using pre-trained word embeddings, the agent can even generalize to instructions containing entirely unseen vocabulary words (e.g., “magenta”) by leveraging semantic similarities from the embedding space.

- Crucial Role of Hindsight Advice: The use of language-based hindsight advice is shown to be critical for learning in difficult environments. In the most challenging VizDoom tasks, the agent without the teacher’s advice makes no progress, while ACTRCE learns effectively.

- Sample Efficiency of Advice: The performance improvement is substantial even when the teacher’s advice is provided for only a small fraction of the total experiences, highlighting the practical utility and low burden of the method.

The paper also details the neural network architecture, which integrates a language processing module (for goal embedding), a vision processing module (for state observation), and a gated attention mechanism to fuse goal and state information before computing action values.
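To make the fusion step concrete, here is a minimal pure-Python sketch of one common form of gated attention: the goal embedding is mapped to one sigmoid gate per visual feature channel, and each channel is scaled by its gate. The summary does not spell out the exact formulation, so the per-channel sigmoid gating, the function names, and the `[channel][row][col]` layout are assumptions for illustration only.

```python
import math
from typing import List

Vector = List[float]
FeatureMap = List[List[List[float]]]  # indexed as [channel][row][col]


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def gate_from_goal(goal_embedding: Vector, weights: List[Vector], bias: Vector) -> Vector:
    """Linear layer plus sigmoid: one gate in (0, 1) per feature channel."""
    return [
        sigmoid(sum(w * g for w, g in zip(row, goal_embedding)) + b)
        for row, b in zip(weights, bias)
    ]


def gated_attention(features: FeatureMap, gates: Vector) -> FeatureMap:
    """Scale every value in channel c by gates[c]."""
    if len(features) != len(gates):
        raise ValueError("need exactly one gate per feature channel")
    return [
        [[gate * value for value in row] for row in channel]
        for channel, gate in zip(features, gates)
    ]
```

The gated feature map would then be flattened and passed to the layers that compute action values. Because the gates depend only on the goal, the same visual features can be emphasized or suppressed differently for each instruction.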

In conclusion, ACTRCE presents a significant advancement by integrating the expressive and compositional power of natural language into the RL paradigm. It provides a scalable solution to the sparse reward problem, enables impressive generalization, and opens new avenues for research at the intersection of language grounding and reinforcement learning. The finding that minimal linguistic advice can dramatically boost learning efficiency is particularly promising for real-world applications where such feedback might be available from humans or automated systems.
