OEDIPUS: An Experiment Design Framework for Sparsity-Constrained MRI

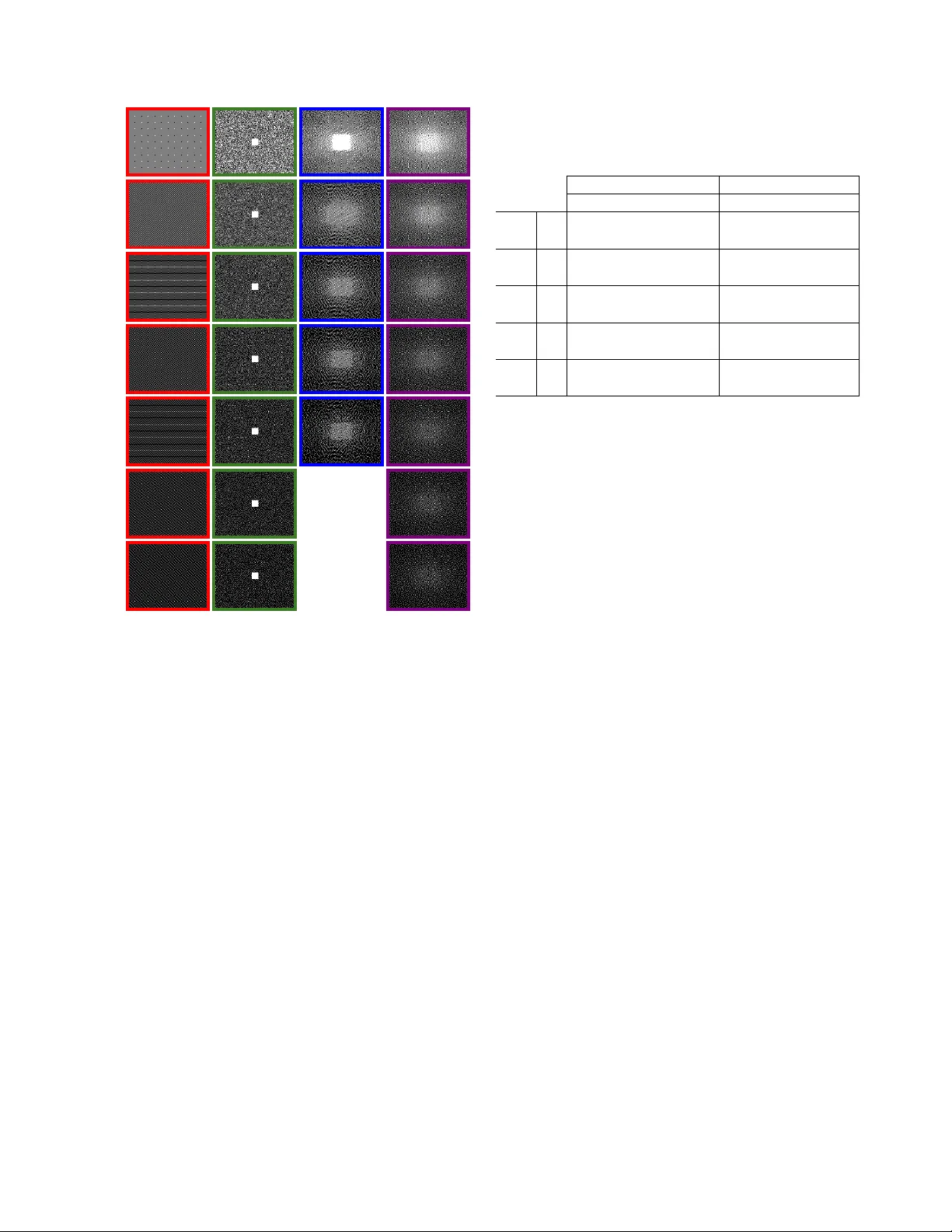

This paper introduces a new estimation-theoretic framework for experiment design in the context of MR image reconstruction under sparsity constraints. The new framework is called OEDIPUS (Oracle-based Experiment Design for Imaging Parsimoniously Unde…

Authors: Justin P. Haldar, Daeun Kim