Supervised Metric Learning with Generalization Guarantees

The crucial importance of metrics in machine learning algorithms has led to an increasing interest in optimizing distance and similarity functions, an area of research known as metric learning. When data consist of feature vectors, a large body of wo…

Authors: Aurelien Bellet

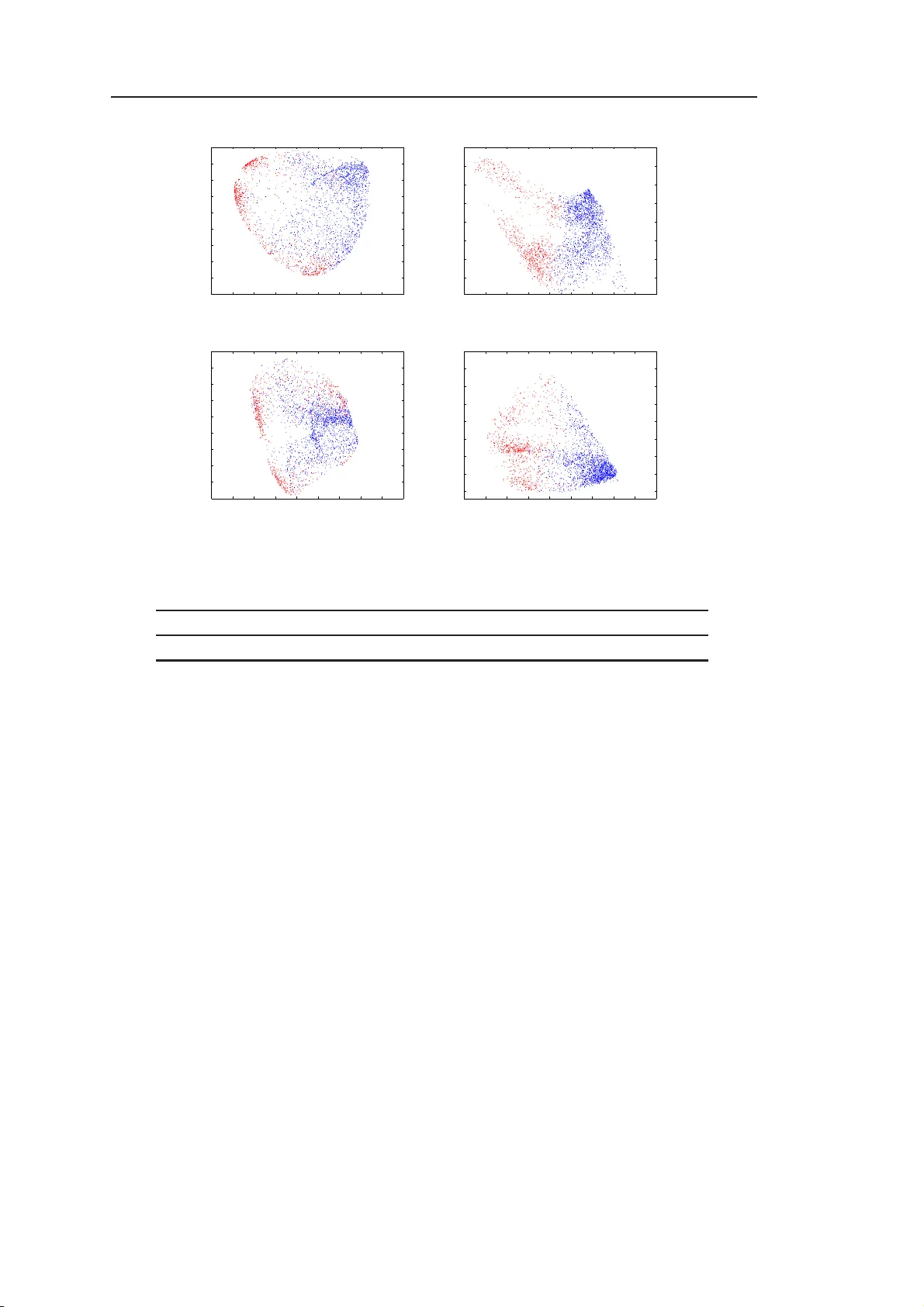

´ Ecole Do ctorale ED488 “Sciences, Ing ´ enierie, S ant ´ e” Sup ervised Metric Learning with Generalization Gu aran tees Th ` ese av ec lab el europ ´ een pr´ epar ´ ee par Aur ´ elien Bellet p our obtenir le grade de : Do cteur de l’Univ ersit ´ e Jean Monnet de Sain t- ´ Etienne Domaine : Informatique Lab orato ire Hub ert Curien, UMR CNRS 5516 F acult ´ e des Sciences et T ec hnique s Soutenance le 11 D ´ ece mbre 2012 au Lab oratoire Hub ert Cur ien dev an t le jury c omp os ´ e de : Pierre Dup on t Professeur, Univ ersit ´ e Catholique de Louv a in Rapp orteur R ´ emi Gilleron Professeur, Univ ersit ´ e de Lille Examinateur Amaury Habrard Professeur, Univ ersit ´ e d e Sain t- ´ Etienne Co-directeur Jose Oncina Professeur, Univ ersidad d e Alic ante Rapp orteur Liv a R alaivola Professeur, Aix-Marseille Univ ersit ´ e Examinateur Marc Sebban Professeur, Univ ersit ´ e de Sain t- ´ Etienne Directeur Remerciements Je tiens tout d’ab ord ` a remercier Pierre Dup on t, P rofesseur ` a l’Univ ersit´ e Catholique de Louv ain, et Jose O n cina, Professeur ` a l’Universit ´ e d’Alicant e, d ’a v oir accept ´ e d’ ˆ etre les rapp orteurs d e mon trav ail de th ` ese. Leurs r emarques p ertinente s m’on t p ermis d’am ´ eliorer la qualit ´ e de ce man uscrit. Plus g ´ en´ eralemen t, je remercie l’ensemble du jury , notammen t R ´ emi Gill eron, Professeur ` a l’Unive rsit´ e de Lille, et Liv a Ralaiv ol a, Professeur ` a Aix-Marseille Univ ersit ´ e, qui on t tout de suite a ccept ´ e d’ ˆ etre examinateurs. Je remercie c haleureusement mon directeur et mon co-directeur de th ` ese, Marc et Amaury , a v ec q u i j’ai d´ eve lopp´ e des liens pr ofessionn els et p e rsonn els qu i de toute ´ evidence dureront au-del` a de cette th ` ese. Je su is partic uli` erement reconnaissan t env ers Marc qui, malgr ´ e son attrait p ou r une certaine ´ equip e de f o ot ball, a su me conv aincre d e faire cette th ` ese et m’a fait confiance en acceptan t un arr angemen t extraordinaire (dans tous les sens du terme) p our qu e je p uisse passer ma premi` ere ann´ ee ` a ´ Edim b ourg. Je ve ux ´ egalemen t saluer les coll ` egues d u Lab oratoire Hub ert Curien et du d ´ epartement d’informatique de l’UJM. En premier lieu, mon voisin de bureau et ami JP , qui fut aussi un excellen t co- ´ equipier W arligh t. Je p ens e aus s i aux au tr es do ctoran ts (anciens et actuels) que son t Lauren t, Christophe, ´ Emilie, Da vid, F abien, T ung, C hahrazed et Mattias. Enfi n, je veux mentio nn er les p ersonnes r encon tr ´ ees dans le cadre des pro jets P ASCAL2 et LAMP AD A, notamment Emilie et Pierre du LIF de Marseill e a v ec qui j’esp ` ere av oir l’occasion d e trav ailler et de collab orer encore dans le futur. D’un p oint de vue p lu s p ersonnel, je salue ´ evidemment les amis, qui son t trop n om breux p our ˆ etre cit ´ es mais qui s e r econna ˆ ıtron t. L a pr´ esence d e certains ` a ma soutenance me fait ´ enorm ´ ement plaisir. Ces remerciements ne seraient p as complets sans un mot p our Marion, qui m’a b eaucoup soutenu et encourag ´ e p endan t ces (pr esqu e) trois ann ´ ees. Elle a mˆ eme essa y ´ e de s’in t´ eresser ` a la classification lin ´ eaire parcimonieuse, r ´ eu ssissan t ` a faire illusion lors d’une r ´ eception ` a ECML! Enfin , last but not le ast , je d´ edie tout s implemen t cette th ` ese ` a mes parent s, mes grands-parents et mon p etit fr ` ere. iii Contents List of Figures vii List of T ables ix 1 In tro duction 1 I Bac kground 7 2 Preliminaries 9 2.1 Sup ervised Learn in g . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9 2.2 Deriving Generalization Guarante es . . . . . . . . . . . . . . . . . . . . . 14 2.3 Metrics . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 19 2.4 Conclusion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 27 3 A Review of Sup ervised Metric Learning 29 3.1 In tro d uction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29 3.2 Metric Learning from F eature V ectors . . . . . . . . . . . . . . . . . . . . 30 3.3 Metric Learning from S tructured data . . . . . . . . . . . . . . . . . . . . 42 3.4 Conclusion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 45 I I Contributions in Metric Learning from Structured Data 49 4 A String Kernel Based on Learned E dit Similarities 51 4.1 In tro d uction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 51 4.2 A New Marginalized String E dit K ernel . . . . . . . . . . . . . . . . . . . 52 4.3 Computing the Edit Kernel . . . . . . . . . . . . . . . . . . . . . . . . . . 53 4.4 Exp erimental V alidation . . . . . . . . . . . . . . . . . . . . . . . . . . . . 59 4.5 Conclusion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 63 5 Learning Go o d Edit Similarities from Lo cal Constraints 65 5.1 In tro d uction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65 5.2 The Theory of ( ǫ, γ , τ )-Goo d Similarit y F unctions . . . . . . . . . . . . . . 67 5.3 Preliminary Exp erimen tal Study . . . . . . . . . . . . . . . . . . . . . . . 71 5.4 Learning ( ǫ, γ , τ )-Go o d Edit S imilarit y F unctions . . . . . . . . . . . . . . 77 5.5 Theoretical Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81 5.6 Exp erimental V alidation . . . . . . . . . . . . . . . . . . . . . . . . . . . . 89 5.7 Conclusion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 95 v vi CONTENTS I I I Con tributions in Metric Learnin g from F eature V ectors 99 6 Learning Go o d Bilinear Similarities from Global Constraints 101 6.1 In tro d uction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 101 6.2 Learning ( ǫ, γ , τ )-Go o d Bilinear S imilarit y F unctions . . . . . . . . . . . . 102 6.3 Theoretical Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 105 6.4 Exp erimental V alidation . . . . . . . . . . . . . . . . . . . . . . . . . . . . 109 6.5 Conclusion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 113 7 Robustness and Generalization for Metric Learning 117 7.1 In tro d uction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 117 7.2 Robustness and Generalizatio n for Metric Learning . . . . . . . . . . . . . 118 7.3 Necessit y of Robustness . . . . . . . . . . . . . . . . . . . . . . . . . . . . 124 7.4 Examples of R ob u st Metric Learning Algorithms . . . . . . . . . . . . . . 126 7.5 Conclusion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 129 8 Conclusion & P ersp ectives 131 List of Publications 135 A L earning Condit ional Edit Probabilities 137 B Pro ofs 141 B.1 Pro ofs of C h apter 5 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 141 B.2 Pro ofs of C h apter 7 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 147 Bibliograph y 153 List of Figures 1.1 The t w o-fold pr oblem of generalization in metric learning . . . . . . . . . 3 2.1 3D unit balls of th e L 1 , L 2 and L 2 , 1 norms . . . . . . . . . . . . . . . . . . 13 2.2 Geometric int erpr etation of L 2 and L 1 constrain ts . . . . . . . . . . . . . 13 2.3 Plot of s ev eral loss functions for binary classification . . . . . . . . . . . . 14 2.4 Mink o wski distances: unit circles for v a rious v alues of p . . . . . . . . . . 22 2.5 Strategies to delete a n o de within a tree . . . . . . . . . . . . . . . . . . . 25 3.1 In tuition b ehind metric learning . . . . . . . . . . . . . . . . . . . . . . . 30 4.1 An example of m emoryless cPFT . . . . . . . . . . . . . . . . . . . . . . . 55 4.2 Tw o cPFT T | a and T | ab mo d eling p e ( s | a ) and p e ( s | ab ) . . . . . . . . . . . 56 4.3 The cPFT T | a and T | ab r epresen ted in the form of automata . . . . . . . 56 4.4 Automaton mo deling the in tersection of the automata of Figure 4.3 . . . . 57 4.5 A handwritten digit and its strin g repr esen tation . . . . . . . . . . . . . . 60 4.6 Comparison of our edit ke rn el with ed it d istances . . . . . . . . . . . . . . 61 4.7 Comparison of our edit ke rn el with other s tring kernels . . . . . . . . . . . 63 4.8 Influence of th e parameter t of K L & J . . . . . . . . . . . . . . . . . . . . . 64 5.1 A graphical insigh t in to ( ǫ, γ , τ )-go o dness . . . . . . . . . . . . . . . . . . 68 5.2 Pro jectio n space implied by the to y example of Figure 5.1 . . . . . . . . . 69 5.3 Estimation of the goo dness of edit similarities . . . . . . . . . . . . . . . . 72 5.4 Classification accuracy and sparsit y (Digit dataset) . . . . . . . . . . . . . 74 5.5 Classification accuracy and sparsit y with resp ect to λ . . . . . . . . . . . 75 5.6 Classification accuracy and sparsit y with resp ect to t . . . . . . . . . . . . 75 5.7 Classification accuracy and sparsit y (W ord dataset) . . . . . . . . . . . . . 76 5.8 Learning the edit costs: rate of con v ergence (W ord d ataset) . . . . . . . . 90 5.9 Influence of th e pairing s tr ategie s (W ord dataset) . . . . . . . . . . . . . . 91 5.10 Learning the separator: accuracy and sparsity results (W ord dataset) . . . 92 5.11 Learning the edit costs: rate of con v ergence (Digit dataset) . . . . . . . . 94 5.12 Infl u ence of the pairing strategies (Digit dataset) . . . . . . . . . . . . . . 95 5.13 Example of a set of reasonable p oin ts (Digit dataset) . . . . . . . . . . . . 95 5.14 1-Nearest Neigh b or results (W ord dataset) . . . . . . . . . . . . . . . . . . 97 6.1 Accuracy of the metho ds with resp ect to KPC A d imension . . . . . . . . 112 6.2 F eature space induced b y the similarit y (Rings dataset) . . . . . . . . . . 113 6.3 F eature space induced b y the similarit y (Svmguide1 dataset) . . . . . . . 114 7.1 Illustration of robustness in th e classic and metric learning settings . . . . 120 vii List of T ables 1.1 Summary of notation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 6 2.1 Common regularizers on v ectors . . . . . . . . . . . . . . . . . . . . . . . . 12 2.2 Common regularizers on matrices . . . . . . . . . . . . . . . . . . . . . . . 12 2.3 Example of an edit cost matrix . . . . . . . . . . . . . . . . . . . . . . . . 24 3.1 Metric learning from feature v ectors: main features of the metho ds . . . . 46 3.2 Metric learning from structured data: main features of th e metho ds . . . 46 4.1 Statistical comparison of our edit kernel with edit distances . . . . . . . . 61 4.2 Statistical comparison of our edit kernel with other strin g k ernels . . . . . 63 5.1 Example of a set of reasonable p o ints (W ord dataset) . . . . . . . . . . . . 92 5.2 Discriminativ e p atterns extracted from the reasonable p oints of T able 5.1 93 5.3 Summary of the main f eatures of GESL . . . . . . . . . . . . . . . . . . . 96 5.4 1-Nearest Neigh b or results on the Digit dataset . . . . . . . . . . . . . . . 97 6.1 Prop erties of the datasets used in the exp erim ental s tudy . . . . . . . . . 110 6.2 Accuracy of the linear classifiers bu ilt from the stud ied similarities . . . . 111 6.3 Accuracy of 3-NN classifiers us in g the stud ied similarities . . . . . . . . . 111 6.4 Runt ime of the studied metric learning metho ds . . . . . . . . . . . . . . 113 6.5 Summary of the main f eatures of SL LC . . . . . . . . . . . . . . . . . . . 114 ix “There is nothing more pr actical than a go o d theory .” — J ames C. Maxwel l “There is a theory whic h states that if eve r an yo ne disco v ers exactly what the Univ erse is for and why it is here, it w ill instant ly disapp ear and b e replaced by something even more bizarre and in exp licable. There is another theory which states that this has already h app ened.” — Douglas Ad ams xi CHAPTER 1 Intro duction The go al of mac hine learning is to automatica lly figure out ho w to perf orm ta sks by generalizing fr om examples. A mac hine learning algorithm tak es a d ata sample as inpu t and infers a mod el that captures the un derlying mec hanism (usu ally assumed to b e some unkn o wn probabilit y distribution) which generated the data. Data can consist of features v ectors (e.g., the age, b od y mass index, blo o d pressu re, ... of a patien t) or can b e s tr uctured, such as strings (e.g., text do c uments) or trees (e.g., XML do c uments). A classic setting is sup ervise d le arning , wher e the algorithm has access to a set of tr aining examples along with their lab els and m ust learn a mo del that is able to accurately predict the lab el of f uture (u nseen) exa mples. Sup ervised learning encompasses classification problems, where the lab el set is finite (for in stance, predicting the lab el of a c haracter in a handwriting recognitio n system) a nd regression pr oblems, where the lab el set is con tin uous (for example, the temp erature in we ather forecasting). On the other hand, an unsup ervise d le arning algo rithm has no access to the lab e ls of the training data. A classic example is clustering, w here w e aim at assigning d ata in to similar group s . The generalizat ion abilit y of the learned mo del (i.e., its p erformance on un seen examples) can sometimes b e guaran teed using arguments from statistical learning theory . Relying on the sa ying “bir d s of a feather fl o c k together”, many sup ervised and un su- p ervised mac h ine learning algorithms are based on a notion of metric (similarity or distance function) b et wee n examples, su c h as k -n earest neighbors or su pp o rt v ector ma- c hines in the sup ervised s etting and K -Mea ns clus terin g in unsu p ervised learning. The p erforman ce of these algorithms criticall y dep e nd s on the relev ance of the metric to the problem at hand — for instance, w e h op e that it identifies as similar the examples th at share the same und erlying lab el and as dissimilar those of d ifferen t lab els. Unfortu- nately , stand ard metrics (suc h as the Eu clidean distance b etw een feature v ectors or th e edit distance b et wee n strin gs) are often not appropriate b ec ause th ey fail to capture the sp ecific nature of the pr oblem of interest. F or this reaso n, a lot of effort has gone into metric le arning , the r esearc h topic devot ed to automatica lly learning metrics fr om data. In this thesis, w e fo cus on sup ervised metric learning, where w e try to adapt the metric to the problem at hand using the informatio n brought by a sample of lab eled examples. Man y of these metho ds aim to fi nd the parameters of a metric so that it b est satisfies a set of lo cal constrain ts o v er the training 1 2 Chapter 1. Introd uction sample, r equiring for instance that pairs of examples of the same class sh ould b e similar and that those of different class s h ould b e dissimilar according to th e learned metric. A large b ody of w ork has b een dev oted to sup ervised m etric learning from feature ve ctors, in particular Mahalanobis distance learning, w h ic h essentia lly learns a linear pro jectio n of the d ata into a new sp ace where the local constraints are b ett er sat isfied. While early metho d s were costly and could n ot b e applied to m edium-sized problems, recen t metho ds offer b etter scalabilit y and in teresting features such as sparsit y . Sup ervised metric learning from stru ctured data has receiv ed less attent ion b ecause it requires more complex pro cedures. Most of th e w ork has fo cused on learning metrics based on the edit distance. Roughly sp eaking, the edit distance b e t wee n t wo ob jects corresp onds to the c heap est sequence of edit op erations (ins ertion, d eletion and substitution of subparts) turning one ob ject in to the other, w here op er ations are assigned sp ecific costs gathered in a m atrix. Edit distance learning consists in optimizing the cost matrix and u sually relies on maximizing the lik eliho o d of pairs of similar examples in a probabilistic m o del. Ov erall, w e id en tify t wo main limitatio ns of the curren t su p ervised metric learning meth- o ds. First, metrics are optimized b ased o n lo c al constraints and used in lo c al algorithms, in particular k -n earest neigh b ors. Ho we ve r, it is u nclear whether the s ame pro cedur es can b e used to obtain go o d metrics for u se in glob al algorithms such as linear separa- tors, whic h are simple y et p ow erful cla ssifiers that ofte n require less memory and pr o vide greater p rediction sp ee d than k -nea rest neigh b ors. In this con text, one ma y wan t to op- timize the metrics according to a glob al crite rion but, to the b est of our kno wledge, this has neve r b een addressed. Second, and p erh ap s more imp ortant ly , there is a substan- tial lac k o f theoret ical understanding of generalization in metric learning. It is w orth noting that in this con text, th e question of generalizati on is t wo-fold, as illustrated in Figure 1.1 . First, one ma y b e in terested in the generalization abilit y of the metric itself, i.e., its co nsistency not only on the training sample bu t also on unseen data co ming from the same distrib ution. V ery little wo rk has b een done on this matter, and existing framew orks lac k generali t y . Second, one ma y also b e in terested in the generalizat ion abilit y of the learning algorithm that uses the learned metric, i.e., can w e derive gener- alizati on guaran tees for the learned mo del in terms of the qualit y of the learned m etric? In practice, the learned metric is plugged into a learning algorithm and one can only hop e that it yields go o d results. Although some ap p roac hes optimize th e metric based on the decisio n rule of classification algorithms su ch as k - nearest neigh b ors, this question has nev er b een in ve stigated in a formal wa y . As w e will see later in th is do cument, the recen tly-prop osed theory of ( ǫ, γ , τ )-goo d similarit y function ( Balcan et al. , 2008a , b ) has b een th e first attempt to bridge the gap b etw een the prop er ties of a sim ilarity fun ction and its p e rform an ce in linear classification, b u t h as n ot b een used so far in the con text of metric learning. This theory pla ys a central r ole in tw o of our con tributions. The limitations describ ed a b o v e constitute the main motiv ation for this thesis, and our co ntributions address them in sev eral wa ys. First, w e in tro du ce a string ke rn el Chapter 1. Introd uction 3 Metric learning algorithm Metric−based learning algorithm Learned metric Sample of examples Underlying unknown distribution Generalization guarantees for the learned model using the metric? Consistency guarantees for the learned metric? Learned model Sample of examples Figure 1.1: The t wo-fold problem o f gene r alization in metric lear ning. W e are in- terested in the g e ne r alization ability of the learned metr ic itself: can we s ay a nythin g ab out its cons is tency on unseen da ta drawn from the same distribution? F ur ther more, we are interested in the generaliza tio n ability of the learned mo del using that metric: can we relate its p e rformance on unse e n da ta to the qua lity of the learne d metr ic? that allo w s the u se of learned edit distances in k ernel-based methods su c h as supp ort v ector mac h ines. This pro vides a wa y to use these learned metrics in global classifiers. Second, w e prop ose t wo m etric learning ap p roac hes based on ( ǫ, γ , τ )-go o dness, for whic h generalizat ion guaran tees can b e deriv ed b oth for the learned metric itself and for a linear classifier built from that metric. In the first approac h (whic h deals with stru ctur ed data), the metric is optimized with resp ect to lo cal pairs to ensure the optimalit y of the solution. In th e second app roac h , dealing with f eature v ectors allo ws us to optimize a global criterion th at is m ore appropriate to linear classification. Lastly , we introd uce a general framew ork th at c an be used to derive generalization guarantees for man y existing metric learning metho ds b ased on local constraint s. Con text of this w ork This th esis w as carried out in the mac hin e learning team of Lab oratoire Hub ert Cu r ien UMR CNRS 55 16, part of Univ ersit y of Sain t- ´ Etienne and Univ ersity of L y on. Th e con tribu tions presented in this thesis w ere dev elop e d in the context of the ANR pro ject Lampada 1 (ANR-09-EM ER-007), whic h deals w ith scaling learning algorithms to hand le large sets of s tr uctured data, with fo cuses on metric learning and sp arse learning, and P ASCAL2 2 , a Eur op ean Net work of Excellence supp orting researc h in mac hin e learnin g, statistics and optimization. Outline of the thesis This dissertation is organized as follo w s. P art I reviews the bac kground w ork relev ant to this thesis: • Ch apter 2 formally introdu ces the scien tifi c conte xt: sup ervised learning, analytical framew orks for deriving generalizatio n guarante es, and v arious typ es of metrics. 1 http://lam pada.gforge.in ria.fr/ 2 http://pas callin2.ecs.so ton.ac.uk/ 4 Chapter 1. Introd uction • Ch apter 3 is a large survey of sup ervised metric learning from feature v ectors and structured data, with a fo cu s on th e relativ e merits and limitat ions of the metho ds of the literature. P art I I gathers our contributions on metric learning fr om structur ed d ata: • Ch apter 4 int ro d uces a n ew string k ernel based on learned edit pr obabilities. Unlik e other str ing edit k ernels, it is parameter-free an d guarante ed to b e v alid. Its n aiv e form requ ires the computation of an in finite su m o v er all finite strings that can b e bu ilt from the alphab e t. W e show ho w to g et roun d this problem b y using in tersection of pr obabilistic automata and algebraic manipulation. Exp eriments highligh t the p erform ance of our k ernel ag ainst state-of-t he-art string k ernels of the literature. • Ch apter 5 builds up on t he theory of ( ǫ, γ , τ )-go o d similarity function. W e fir st sho w that w e can us e edit similarities directly in this framew ork and ac hieve competitiv e p erforman ce. The main con tribution of this c hapter is a n o v el m etho d for learning string and tree edit similarities called GESL (for Go o d Edit Similarit y Learning) that relies on a relaxed v ersion of ( ǫ, γ , τ )-goo d ness. The prop osed approac h, whic h is more flexible than previous m etho ds, learn an edit simila rity from lo c al pairs and is then u sed to build a glob al linear classifier. Using uniform stabilit y argument s, w e are able to derive generaliza tion g uarantee s for th e learned similarit y that actually give an upp er b ound on t he ge neralization error of th e linear classifier. W e conduct extensiv e exp erimen ts that show the usefu lness of ou r app roac h and the p erform an ce and sp arsit y of the resulting linear classifiers. P art I I I gathers our con tributions on metric learning from feature vec tors: • Ch apter 6 presents a new b ilinear similarit y learning metho d for linear classifica- tion, calle d SLLC (for Similarit y L earn ing for Linear C lassification). Unlik e GESL, SLLC directly optimize s the empirical ( ǫ, γ , τ )-go o dness criterion, whic h m akes the approac h en tirely glob al : the similarit y is optimized with r esp ect to a global cri- terion (instea d of lo cal pairs) a nd plugged in a global linear classifier. S LLC is form ulated as a con ve x minimization problem that can b e efficien tly sol ve d in a batc h or online wa y . W e also k ernelize our approac h, thus learning a linear s im i- larit y in a nonlin ear feature sp ace induced by a k ernel. Using similar arguments as f or GESL, we deriv e generalization guaran tees for SLLC h ighligh ting that our metho d actually min im izes a tight er b ound on the generalizatio n error of the clas- sifier than GESL. Exper im ents on sev eral stand ard datasets sho w that SLLC leads to comp etit ive classifiers that ha v e the additional adv an tage of b eing v ery s parse, th us sp e eding up prediction. Chapter 1. Introd uction 5 • Ch apter 7 ad d resses the lack of general framew ork for establishing generalization guaran tees for metric learning. It is based on a sim p le adaptation of algorithmic robustness to the case where training data is made of pairs of examples. W e sho w that a r obust metric learning algorithm has generalization guaran tees, and furthermore that a wea k n otion of r obustness is actually necessary and sufficien t for a metric learning algorithm to generalize. W e illustrate the usefulness of our approac h b y sho wing that a large class of m etric learning algorithms are robu st. In particular, w e a re a ble to deal with sparsity-i nd ucing regularizers, whic h w as not p ossible with previous framew orks. Notation Throughout this do cument, N denotes th e s et of natural n umb er s while R and R + resp ectiv ely denote th e sets of real num b ers a nd nonnegativ e real num b e rs. Arbitrary sets are denoted b y calligraphic letters suc h as S , a nd |S | stand s for the n umb er of elemen ts in S . A set of m elemen ts f rom S is denoted by S m . W e denote v ectors b y b old lo w er case letters. F or a v ector x ∈ R d and i ∈ [ d ] = { 1 , . . . , d } , x i denotes the i th comp onent of x . The inner p r o duct b et w een tw o v ectors is denoted b y h· , ·i . W e denote matrices by b old u pp e r case letters. F or a c × d real-v alued matrix M ∈ R c × d and a p air of integ ers ( i, j ) ∈ [ c ] × [ d ], M i,j denotes the en try at ro w i and column j of the matrix M . The identit y m atrix is denoted b y I and the cone of symmetric p ositi ve semi-definite (PSD) d × d real-v alued matrices b y S d + . k · k denotes an arbitrary (v ector or m atrix) norm and k · k p the L p norm. Strings are d enoted b y sans serif letters suc h as x . W e u s e | x | to denote the length of x and x i to r efer to its i th sym b o l. In the conte xt of learning problems, w e use X a nd Y to denote th e inp ut space ( or instance space) and the output space (or label space) resp ectiv ely . W e use Z = X × Y to denote th e join t space, and an arb itrary lab eled instance is denoted by z = ( x, y ) ∈ Z . The hinge function [ · ] + : R → R + is defined as [ c ] + = max(0 , c ). Pr[ A ] denotes the probabilit y of the even t A , E [ X ] the exp ectation of the random v ariable X and x ∼ P indicates that x is dr a wn according to the probability distribu tion P . A su mmary of the n otations is giv en in T able 1.1 . 6 Chapter 1. Introd uction Notation Description R Set of real n umbers R + Set of nonnegativ e real num b ers R d Set of d -dimensional real-v alued vectors R c × d Set of c × d real-v alued matrices N Set of natural n umbers, i.e., { 0 , 1 , . . . } S d + Cone of symmetric PSD d × d real-v alued matrices [ k ] The set { 1 , 2 , . . . , k } S An arbitrary set |S | Number of elemen ts in S S m A set of m elements from S X Input space Y Output space z = ( x, y ) ∈ X × Y An arbitrary labeled instance x An arbitrary v ector x j , x i,j The j th compon ent of x and x i h· , ·i Inner product b etw een vectors [ · ] + Hinge function M An arbitrary matrix I The iden tity matrix M i,j Entry at row i and column j of matrix M k · k An arbitrary norm k · k p L p norm x An arbitrary string | x | Length of string x x i , x i , j j th symbol of x and x i x ∼ P x is dra wn i.i.d. from probability d istribu tion P Pr[ · ] Probabilit y of even t E [ · ] Exp ectation of random v ariable T able 1. 1: Summary of notation. P AR T I Background 7 CHAPTER 2 Prelimi na r ies Chapter abstract In this c ha pter, we intro duce the sc ie nt ific context of this th esis a s well as r elev ant bac kgr ound work. W e first intro duce forma lly the sup ervised learning setting and describ e the main idea s of statistica l learning theory , with a focus on bi- nary class ification. W e then presen t three analytica l frameworks (uniform con vergence, uniform stability and a lgorithmic robustness) for establishing that a learning algo rithm has g eneralizatio n guar antees. Lastly , we recall the definitio n o f several t y pes of metrics and give examples of such functions fo r feature vectors and structured data. 2.1 Sup ervised Learning The goal of sup erv ised learning 1 is to automaticall y infer a mo del (h yp o thesis) from a set of labeled examples t hat is a ble to mak e predictions give n n ew un lab eled data . In the follo wing, w e review basic notions of statistical learning theory , a v ery p opular framew ork p ioneered b y V apnik & Chervo nenkis ( 1971 ). Th e in terested r eader can refer to V apnik ( 1998 ) and Bousquet et al. ( 2003 ) for a m ore thorough d escription. 2.1.1 T ypical Se tt ing In su p ervised learning, we learn a h yp othesis from a s et of labeled examples. T his notion of training samp le is formalized b elo w. Definition 2.1 (T rainin g sample) . A training sample of size n is a set T = { z i = ( x i , y i ) } n i =1 of n observ ations indep e nd en tly and iden tically distributed (i.i.d.) according to an u nknown j oin t distribution P ov er the sp ace Z = X × Y , where X is the input space an d Y the output sp ace. F or a give n observ ation z i , x i ∈ X is the instance (or example) and y i ∈ Y its lab e l. When Y is discrete, w e are dealing with a classification task, and y i is called the class of x i . When Y is con tinuous, this is a regression task. In this t hesis, w e mainly fo c us on binary cla ssification tasks, where we assume Y = {− 1 , 1 } . 1 Note that there exist other learning paradigms , such as unsup ervised lea rning ( Ghahramani , 20 03 ), semi-sup ervised learning ( Chapelle et al. , 2006 ), transfer learning ( Pan & Y ang , 2010 ), reinforcemen t learning ( Sutton & Barto , 1998 ), etc. 9 10 Chapter 2. Preliminaries W e will mostly deal with feature vec tors and strings. F or feature v ectors, we generally assume that X ⊆ R d . F or strings, w e need the follo wing definition. Definition 2.2 (Alphab et and string) . An alphab et Σ is a finite nonempty set of sym- b ols. A string x is a fi nite sequ ence of symb ols from Σ. The empty string/sym b ol is denoted b y $ and Σ ∗ is the set of all finite strings (including $) that can b e generated from Σ. Finally , the length of a string x is denoted b y | x | . W e can n ow form ally defin e wh at we mean by sup ervised learnin g. Definition 2.3 (Sup ervised learning) . Sup ervised learning is the ta sk of inferr ing a function (often r eferred to as a h yp o thesis or a mo d el) h T : X → L b elonging to some h yp ot hesis class H f rom a training sample T , whic h “best” predicts y from x for an y ( x, y ) d r a wn from P . No te that the decision space L m a y or ma y not b e equal to Y . In ord er to c ho ose h T , w e need a criterion to assess the qualit y of an arbitrary hyp othesis h . Giv en a nonnegativ e loss function ℓ : H × Z → R + measuring the degree of a greemen t b et wee n h ( x ) and y , we d efine the notion of true risk. Definition 2 .4 (T rue risk) . The true risk (also called generalizatio n error) R ℓ ( h ) of a h yp ot hesis h with r esp ect to a loss fun ction ℓ is th e exp ected loss suffered by h o v er the distribution P : R ℓ ( h ) = E z ∼ P [ ℓ ( h, z )] . The most natural loss function for bin ary classification is the 0/1 loss (also called clas- sification error): ℓ 0 / 1 ( h, z ) = ( 1 if y h ( x ) < 0 0 otherwise . R ℓ 0 / 1 ( h ) then co rresp onds to the prop ortion of time h ( x ) and y agree in sign, and in particular to the pr op ortion of correct p redictions w h en L = Y . The goal of sup ervised learning is then to fin d a hyp othesis that ac hiev es the smallest true risk. Unfortunately , in general we cannot compute the true risk of a h y p othesis since the distribu tion P is un kno wn. W e can only measure it empirically on the training sample. This is called th e emp irical r isk. Definition 2.5 (Empirical risk ) . Let T = { z i = ( x i , y i ) } n i =1 b e a training sample. The empirical risk (also called emp irical error) R ℓ T ( h ) of a h yp othesis h o ve r T with resp ect to a loss fu nction ℓ is the a v erage loss su ffered by h on the instances in T : R ℓ T ( h ) = 1 n n X i =1 ℓ ( h, z i ) . Chapter 2. Preliminaries 11 Under some restrictions, u sing the emp ir ical risk to select the b est hyp othesis is a go o d strategy , as d iscussed in the next section. 2.1.2 Finding a Goo d Hyp othesis This section fo cuses on classic strategies for fin ding a go o d hyp othesis in the true risk sense. The d eriv ation of guarantee s on the true r isk of the selected hyp othesis will b e studied in Section 2.2 . Simply minimizing the empirical risk o ver all p ossible hyp otheses wo uld ob viously b e a go o d strategy if infinitely many training instances were a v ailable. Unfortunately , in real istic scenarios, trainin g data is limited and there alw ays e xists a h yp othesis h , ho w ev er complex, that p erfectly p r edicts the training samp le, i.e., R ℓ T ( h ) = 0 , but generalizes p o orly , i.e., h has a n onzero (p otent ially large) true risk. This situation wh ere the true r isk of a hypothesis is m uch larger than its empirical risk is called overfitting . The intuitiv e idea behin d it is that learnin g the training sample “b y h eart” do e s not pro vide go o d generalization to unseen data. There is therefore a trade-off b et w een min im izing the empirical risk and the complexit y of the consider ed h yp ot heses, kno wn as the bias-v ariance trade-off. Th ere essentially exist t w o wa ys to deal w ith it and a v oid ov erfitting: (i) restrict the h yp othesis space, and (ii) fa v or simple hyp otheses o ve r complex ones. In the follo win g, w e briefly presen t three classic str ategies for fi n ding a hyp othesis with small true risk. Empirical Risk Minimization The idea of the Empirical Risk Minimization (ERM) principle is to pic k a restricted hypothesis space H ⊂ L X (for in stance, linear classifiers, decision trees, etc.) and select a hyp othesis h T ∈ H that minimizes the empir ical risk: h T = arg min h ∈H R ℓ T ( h ) . This m a y w ork well in p ractice b ut dep ends on the choice of h yp othesis space. E s - sen tially , we w ant H large enough to include h yp otheses with small risk, but H small enough to a void o verfitting. Without bac kground knowledge on the t ask, pic king an appropriate H is difficult. Structural Risk Minimization In Structural Risk Minimization (SRM), w e u se an infinite sequence of hyp othesis classes H 1 ⊂ H 2 ⊂ . . . of increasing size an d s elect the h yp ot hesis that minim izes a p enalized v ersion of th e empir ical risk that fav ors “simple” classes: h T = arg min h ∈H c ,c ∈ N R ℓ T ( h ) + pen ( H c ) . 12 Chapter 2. Preliminaries Name F ormula Pros Cons L 0 norm k x k 0 Number of nonzero components SP N CO, NSM L 1 norm k x k 1 P | x i | CO, SP NSM (Squared) L 2 norm k x k 2 2 P x 2 i CO, SM L 2 , 1 norm k x k 2 , 1 Sum of L 2 norms of grouped v ariables CO, GSP NSM T able 2.1: Commo n r egularizer s on vectors. CO/NCO sta nd for conv ex /nonconv ex, SM/NSM for smo o th/nonsmo oth and SP/ GSP for s pa rsity/group sparsity . Name F ormula Pros Cons L 0 norm k M k 0 Number of nonzero components SP N CO, NSM L 1 norm k M k 1 P | M i,j | CO, SP N SM (Squared) F roben ius norm k M k 2 F P M 2 i,j CO, SM L 2 , 1 norm k M k 2 , 1 Sum of L 2 norms of ro ws/columns CO, GSP NSM T race (nuclear) norm k M k ∗ Sum of singular v alues CO, LO NSM T able 2.2: Common regula rizers on matrice s . Abbreviations are the same as in T able 2.1 , with LO standing for low-rank. This imp lemen ts the Occam’s r azor p rinciple according to wh ich one should choose th e simplest explanation consisten t with the training data. Regularized Risk Minimization Regularize d Risk Minimiza tion (RRM) also builds up on the Occam’s razor principle bu t is easier to implement: one p icks a single, large h yp ot hesis space H and a regularizer (usually some norm k h k ) and selects a hyp othesis that ac h iev es the b est trade-off b et wee n empirical risk minimization and r egularizatio n: h T = arg m in h ∈H R ℓ T ( h ) + λ k h k , (2.1) where λ is the trade-off parameter (in practice , it is set using v alidation data). The role of r egularization is to p enalize “complex” hypotheses. Note that it also pro vides a built-in w a y to br eak the tie b et wee n hyp otheses th at h a v e the same empirical risk. The c hoice of regularizer is im p ortan t and dep ends on the considered task and the desired effect. Common regularizers for v ector and matrix m o dels are giv en in T able 2.1 and T able 2 .2 resp ectiv ely . Some regularizers are easy to optimize b eca use they are con v ex and smo oth (for instance, the squ ared L 2 norm) while others do not ha ve these con v enien t p rop erties and are thus harder to deal with (see Figure 2.1 for a graphical insigh t into some of these r egularizers). Ho wev er, the latter ma y bring some p ote ntia lly in teresting effects suc h as sparsity: they tend to set some p arameters of the hyp othesis to zero. Figure 2.2 illustrates this on L 2 and L 1 constrain ts — this also holds for regularization. 2 2 In fact, regularized and constrained problems are equiv alent in the se nse that for any v alue of the parameter β of a feasible co nstrained problem, there exists a v alue of the parameter λ of t he Chapter 2. Preliminaries 13 Figure 2.1: 3D unit balls of the L 1 , L 2 and L 2 , 1 norms (ta ken from Grandv ale t , 2011 ). The L 2 norm is conv ex, smo oth and do es not induce sparsity . The L 1 norm is co nvex, nonsmo oth and induces sparsity at the co o rdinate level. The L 2 , 1 norm is conv ex, nonsmo o th a nd induces spa rsity at the gr o up level (simultaneous spa rsity of co ordinates b elo nging to the sa me predefined group). L 1 constrain t L 2 constrain t Figure 2 .2: Geometric interpretation of L 2 and L 1 constraints in 2D. Suppo se that we a re lo oking f or a hypothesis h ∈ R 2 with a constraint k h k ≤ β (represe nt ed in dark blue) tha t minimizes the empirical r isk (represented by the lig ht gre e n cont our line). U nlike the L 2 norm, the L 1 norm tends to zero out co ordinates, th us reducing dimensionality . Regularizatio n is used in many successful learning metho ds and, as w e will see in Sec- tion 2.2 , ma y help deriving generaliza tion guaran tees. 2.1.3 Surrogate Loss F unctions The metho d s d escrib ed ab o ve all rely on m inimizing the emp irical risk . Ho we ve r, due to the n on conv exit y of the 0/1 loss, minimizing (or app ro ximately min imizing) R ℓ 0 / 1 is kno wn to b e NP-hard ev en for simple hypothesis classes ( Ben-Da vid et al. , 2003 ). F or this r eason, surrogate conv ex loss fun ctions (that can b e m ore efficien tly h andled) are often used. The most p rominent choic es in the conte xt of b inary classification are: • the hinge loss: ℓ hing e ( h, z ) = [1 − y h ( x )] + = max(0 , 1 − y h ( x )), used for instance in supp o rt vecto r mac h ines ( Cortes & V apn ik , 1995 ). • the exp onen tial loss: ℓ exp ( h, z ) = e − y h ( x ) , used in Adab oost ( F reund & Schapire , 1995 ) . correspondin g regularized problem such that b oth p roblems have th e same set of solutions, and vice versa . In practice, regulari zed problems are more con venien t to use because they are alw a ys feasible. 14 Chapter 2. Preliminaries -0.5 0 0.5 1 1.5 2 2.5 3 3.5 -2 -1.5 -1 -0.5 0 0.5 1 1.5 2 Classification error (0/1 loss) Hinge loss Logistic loss Exp onential loss y h ( x ) ℓ ( h, z ) Figure 2.3: Plot of several loss functions for binary c lassification. • the logistic loss: ℓ log ( h, z ) = log(1 + ǫ − y h ( x ) ), used in Logitb o ost ( F riedman et al. , 2000 ) . These loss f unctions are plotted in Figure 2.3 along with the n oncon v ex 0/1 loss. Cho osing an appropriate loss fun ction is not an easy task and strongly dep ends on the problem, but there exist general results on the r elativ e merits of different loss fu nctions. F or in stance, Rosasco et al. ( 2004 ) studied statistical prop erties of several conv ex loss functions in a general classification setti ng and concluded that the hinge loss h as a better con v ergence r ate than other loss f u nctions. Ben-Da vid et al. ( 2012 ) ha ve fu rther sho wn that in the con text of linear classification, the hin ge loss offers the b e st guaran tees in terms of classification err or. In the follo wing section, we present analytical fr amew orks that allo w the deriv ation of generalizat ion guaran tees, i.e., relating the empirical risk of h T to its tr u e r isk. 2.2 Deriving Generalization Guaran tees In the previous sec tion, w e describ ed a few generic metho ds for learning a h yp othesis h T from a training sample T based on minimizing the (p enaliz ed) emp irical risk. Ho wev er, learning a hyp othesis with small true risk is wh at we are r eally int erested in. Typically , the empirical risk can b e seen as an optimisticall y biased esti mation of the true risk (esp ecially when the training sample is small), and a considerable amount of researc h has gone into deriving generaliza tion guaran tees for learning algorithms, i.e., b ound ing the deviation of the true r isk of the learned hyp othesis from its empirical measurement. These b ound s are often referred to as P A C (Pr obably Appro ximately Correct) b oun ds Chapter 2. Preliminaries 15 ( V aliant , 1984 ) and ha v e the follo wing form: Pr[ | R ℓ ( h ) − R ℓ T ( h ) | > ǫ ] ≤ δ , where ǫ ≥ 0 and δ ∈ [0 , 1]. In other w ords, it b ound s the probabilit y to observ e a large gap b et we en the true risk and the empirical risk of an hyp othesis. The k ey instruments for deriving P A C b ounds are concen tration inequ alities. They es- sen tially assess the deviation of s ome fu nctions of ind ep endent r andom v ariables from their exp ectation. Different concen tration inequ alities tac kle differen t fu n ctions of the v ariables. The most commonly u sed in m ac h ine learning are C heb yshev (only one v ari- able is considered), Ho effding (sums of v ariables) and McDiarmid (that can accommo- date any sufficiently regular fu nction of the v ariables). F or more d etails ab out concen- tration inequalities, see f or in s tance th e su rve y of Bouc h eron et al. ( 2004 ). In this section, w e presen t three theoretical framewo rks for establishing generalizatio n b ound s: uniform conv ergence, u niform s tability and algorithmic robustn ess (for a more general o verview, please r efer to the tu torial by Langford , 2005 ). Note that our con tr i- butions in Chapter 5 , Chapter 6 and Chapter 7 m ak e use of th ese framew orks. 2.2.1 Uniform Conv ergence The theory of uniform con ve rgence of empirical qu an tities to their mean ( V apnik & Chervo nenkis , 1971 ; V apnik , 1982 ) is one of the most pr ominen t to ols for deriving gen- eralizatio n b ounds. It p ro vides guaran tees that hold f or any hyp othesis h ∈ H (including h T ) and essentially b ounds (with some probabilit y 1 − δ ) the true risk of h by its em- pirical risk p lus a p enal ty term that dep end s on the num b er of training examples n , the size (or complexity) of the hyp othesis space H and the v alue of δ . Intuitiv ely , large n brings high confidence (sin ce as n → ∞ the empirical r isk conv erges to the tru e risk by the la w o f large n umb ers), complex H brings low confi d ence (since o v erfitting is more lik ely), and δ accoun ts for the probabilit y of dra wing an “unluc ky” training sample (i.e., not represent ativ e of the und erlying distribu tion P ). When the h yp othesis space is finite, w e get the follo wing P AC b ound in O (1 / √ n ). Theorem 2.6 (Uniform conv ergence b ound for the fin ite case) . L et T b e a tr aining sample of size n dr awn i.i.d. fr om some distribution P , H a finite hyp othesis sp ac e and δ > 0 . F or any h ∈ H , with pr ob ability 1 − δ over the r andom sample T , we have: R ℓ ( h ) ≤ R ℓ T ( h ) + r ln |H | + ln(1 /δ ) 2 n . When H is co ntin uous (for instance, if H is th e space of linear classifiers), w e n eed a measure of the complexit y of H such as the V C dimension ( V apnik & Ch erv onenkis , 16 Chapter 2. Preliminaries 1971 ) , the fat-shattering dimension ( Alon et al. , 1997 ) or th e Rademac her c omplexit y ( Koltc h in skii , 200 1 ; Bartlett & Me nd elson , 2002 ). F or instance, using the VC dimension, w e get the follo wing b ound . Theorem 2.7 (Uniform con v ergence b ound with V C d imension) . L et T b e a tr aining sample of size n dr awn i.i.d. fr om some distribution P , H a c ontinuous hyp othesis sp ac e with VC dimension V C ( H ) and δ > 0 . F or any h ∈ H , with pr ob ability 1 − δ over the r andom sample T , we have: R ℓ ( h ) ≤ R ℓ T ( h ) + v u u t V C ( H ) ln 2 n V C ( H ) + 1 + ln(4 /δ ) n . A dra wb ack of un iform con v ergence analysis is that it is only based on the size of the training sample and the complexit y of the h yp othesis space, and completely ignores the learnin g alg orithm, i.e., ho w the h yp othesis h T is s elected. 3 In the follo wing, w e present tw o an alytical frameworks that explicitly tak e into accoun t the algorithm and can b e used to deriv e generalization guarantee s f or h T sp ecifically , in particular in the regularized risk minimization setting ( 2.1 ). 2.2.2 Uniform Stability Building on pr evious w ork on algorithmic stabilit y , Bousquet & Elissee ff ( 20 01 , 200 2 ) in tro du ced new defi n itions that allo w the deriv ation of generalization b oun ds for a large class o f algorithms. In tuitiv ely , an algorithm is said stable if it is robu st to small c hanges in its in p ut (in our case, the trainin g samp le), i.e., the v ariation in its outpu t is small. F ormally , w e fo cus on un iform stabilit y , a ve rsion of stabilit y that allo ws the deriv ation of rather tigh t b ounds. Definition 2.8 (Uniform stabilit y) . An al gorithm A has u niform stabilit y κ/n with resp ect to a loss fu nction ℓ if the follo w ing holds: ∀T , |T | = n , ∀ i ∈ [ n ] : sup z | ℓ ( h T , z ) − ℓ ( h T i , z ) | ≤ κ n , where κ is a p o sitiv e constan t, T i is obtained from the training sample T by r eplacing the i th example z i ∈ T b y another example z ′ i dra wn i.i.d. from P , h T and h T i are the h yp ot heses learned by A from T and T i resp ectiv ely . 4 3 In fact, the Rademacher complexit y can sometimes implicitly take into account t h e regularization term of the al gorithm. 4 Definition 2.8 corresp onds to t he case where th e training sample is altered through the replacement of an instance by another. Bousquet & Elisseeff ( 2001 , 2002 ) also give a definition of uniform stabilit y based on th e remo v al of an instance from the training sample, which implies Defin ition 2.8 . W e will use Definition 2.8 throughout this thesis: w e find it more con venien t to deal with since replacemen t preserves th e size of t he training sample. Chapter 2. Preliminaries 17 Bousquet & Elisseeff ( 2001 , 2002 ) ha v e sho wn that a large class of regularized r isk minimization algorithms sat isfies this definition. Th e constan t κ t yp ically dep ends on the form o f the loss function, the regularizer an d the regularization p arameter λ . Making a go o d us e of Mc Diarmid’s inequalit y , they show that wh en Defin ition 2.8 is fulfi lled, the follo win g b ound in O (1 / √ n ) h olds. Theorem 2.9 (Uniform stabilit y b o un d) . L et T b e a tr aining sample of size n dr awn i.i.d. fr om some distribution P and δ > 0 . F or any algorithm A with uniform stability κ/n with r e sp e ct to a loss function ℓ upp er-b ounde d by some c onstant B , 5 with pr ob ability 1 − δ over the r andom sample T , we have: R ℓ ( h T ) ≤ R ℓ T ( h T ) + κ n + (2 κ + B ) r ln(1 /δ ) 2 n , wher e h T is the hyp othesis le arne d by A fr om T . The main d ifference b et w een un iform con ve rgence and u niform stabilit y is that the lat- ter incorp orates r egularizatio n (through κ and h T ) and do es not r equire an y hyp othesis space complexit y argument. In particular, uniform stabilit y can b e u s ed to d eriv e gen- eralizatio n guaran tees for hypothesis classes that are difficult to analyze with classic complexit y argumen ts, s uc h as k -nearest neigh b ors or supp ort ve ctor machines that ha v e infinite VC dimension. I t can also b e adapted to n on-i.i.d. settings ( Mohri & Rostamizadeh , 2007 , 2010 ). W e will u se uniform stabilit y in the con tributions presented in Chapter 5 and Chap ter 6 . On the other hand, Xu et al. ( 20 12a ) ha v e shown that a lgorithms with sp ars it y -in d ucing regularization are not stable. 6 Algorithmic robustness, presented in the next section, is able to d eal w ith su c h algorithms. W e will mak e use of this framew ork in Chapter 7 . 2.2.3 Algorithmic R obustness Algorithmic robustness ( Xu & Mannor , 2010 , 2012 ) is the abilit y of an algorithm to p erform “similarly” on a tr aining example and on a test example that are “close”. It relies on a p artitioning of the space Z to charact erize closeness: t w o examples are close to eac h other if they lie in th e same partition of the sp ace. The partition itself is based on th e n otion of co v ering num b er ( Kolmogoro v & Tikh omiro v , 1961 ). Definition 2.10 (Co v ering n umb er ) . F or a metric s p ace ( S , ρ ) and V ⊂ S , we say that ˆ V ⊂ V is a γ -co v er of V if ∀ t ∈ V , ∃ ˆ t ∈ ˆ V suc h th at ρ ( t, ˆ t ) ≤ γ . The γ -co v ering num b er of V is N ( γ , V , ρ ) = min n | ˆ V | : ˆ V is a γ -co ver of V o . 5 Note that many loss functions are unb ounded if their domain is assumed to b e unboun ded ( see Figure 2.3 ), but in practice t h ey hav e b ound ed domain due for example to the common assumption that the norm of an y instance is boun ded. 6 Sparsity is seen here as the abilit y to identify redundan t features. 18 Chapter 2. Preliminaries In particular, wh en X is compact, N ( γ , X , ρ ) is fin ite, leading to a finite co ver. Then, Z can b e partitioned in to |Y |N ( γ , X , ρ ) subsets s u c h that if tw o examples z = ( x, y ) and z ′ = ( x ′ , y ′ ) b elong to the same subset, then y = y ′ and ρ ( x, x ′ ) ≤ γ . W e can n ow form ally defin e the n otion of robustness. Definition 2.11 (Algorithmic r ob u stness) . Algorithm A is ( K , ǫ ( · ))-robust, for K ∈ N and ǫ ( · ) : Z n → R , if Z can b e partitioned in to K disjoin t s ets, denoted b y { C i } K i =1 , suc h that the follo w in g holds for all T ∈ Z n : ∀ z ∈ T , ∀ z ′ ∈ Z , ∀ i ∈ [ K ] : if z , z ′ ∈ C i , then | ℓ ( h T , z ) − ℓ ( h T , z ′ ) | ≤ ǫ ( T ) , where h T is the hyp othesis learned by A from T . Briefly s p eaking, an algorithm is robust if for an y example z ′ falling in the same sub set as a training example z , then the gap b etw een the losses asso ciated with z and z ′ is b ound ed (b y a qu an tit y that ma y dep end on the trainin g sample T ). T he existence of the partition itself is guaran teed by the definition of co vering n umb er . No te that b o th uniform s tability and algorithmic robus tness prop erties in v olv e a b ound on deviations b et wee n losses. The key d ifference is th at uniform stabilit y studies the v ariation of the loss associated with a ny example z u n der sm all c hanges in the training sample (implying that the le arned h y p othesis itself do es not v ary muc h ), while algorithmic robustness considers the deviation b e tw een the losses asso ciated with t w o examples that are close (implying that the learned h yp othesis is lo cally consisten t). Xu & Mannor ( 2010 , 2012 ) hav e sho wn that a robust algorithm has generalization guar- an tees. Th is is formalized by the follo w in g theorem. Theorem 2 .12 (Robustness b ound) . L e t ℓ b e a loss function upp er-b ounde d by some c onstant B , and δ > 0 . If an algorithm A is ( K , ǫ ( · )) -r obust, then with pr ob ability 1 − δ , we have: R ℓ ( h T ) ≤ R ℓ T ( h T ) + ǫ ( T ) + B r 2 K ln 2 + 2 ln(1 /δ ) n , wher e h T is the hyp othesis le arne d by A fr om T . Note that ther e is a tradeoff b et w een the size K of the partition and ǫ ( T ): the latter can essen tially b e made as small as p ossible by us in g a fin er-grained cov er. P A C b ounds based on robustness are generally n ot tig ht since they r ely on uns p ec- ified (p ote ntia lly large) co vering n umb ers. On the o ther hand, a great adv anta ge of robustness is that it can deal with a larger class of regularizers than stabilit y (in p artic- ular, sparsit y -in d ucing norms can b e consid er ed ), and its geometric in terpretation mak es adaptations to non -stand ard settings (su c h as non-i.i.d. data) p ossib le. Our contribu- tion in Chapter 7 adapts robustness to the case of metric learning, when trainin g data consist of non -i.i.d. pairs of examples. Finally , note that Xu & Mannor ( 2010 , 2 012 ) Chapter 2. Preliminaries 19 established that a we ak notion of robustness is n ecessary and su fficien t f or an algorithm to generalize asymptotically , making robustness a key prop erty for th e generalizatio n of learning algorithms. After ha ving presente d the su p ervised learning setting and analytica l f ramew orks for deriving generaliza tion guaran tees, w e no w turn to th e topic of m etrics, whic h has a great place in this thesis. 2.3 Metrics The notion of metric (us ed here as a generic term for distance, similarit y or dissim- ilarit y function) pla ys an imp ortant role in man y mac hine learning problems suc h as classification, regression, clustering, or ranking. Successful examples include: • k -Nearest Neigh b ors ( k -NN) classificat ion ( Co v er & Hart , 196 7 ), where the p re- dicted cla ss of an instance x corresp onds to the ma jorit y class among the k -nearest neigh b ors of x in the training samp le, according to some distance or similarit y . • Kern el metho ds ( Sc h¨ olko pf & Smola , 200 1 ), where a sp ecific type of similarit y function called k ernel (see Definition 2.15 ) is used to im p licitly pro ject data in to a new high-dimen s ional feature space. T h e most prominent example is Supp ort V ector Ma c hines (SVM) classification ( Cortes & V ap n ik , 1 995 ), where a large- margin linear classifier is learned in that space. • K -Me ans ( Llo yd , 1982 ), a clustering algorithm which aims at fi nding the K clusters that minim ize th e within-cluster d istance on th e training sample a ccording to some metric. • In formation retriev al, where a similarit y function is ofte n u s ed to retriev e docu- men ts (w ebpages, images, etc.) that are similar to a qu ery or to another do cument ( Salton et al. , 1975 ; Baeza -Y ates & Rib eiro-Neto , 1999 ; Sivic & Z isserman , 2009 ). • Data visu alizatio n, where v isu alizatio n of in teresting patterns in high-dimensional data is sometimes a c hieved b y means of a metric ( V enna et al. , 20 10 ; Bertini et al. , 2011 ) . It should b e noted that metrics are esp ecially imp ortan t when dealing with stru ctured data (suc h as strings, tr ees, or graphs) b eca use they are often a con venien t pr o xy to ma- nipulate these complex ob jec ts: if a met ric is a v ailable, then any met ric-based algorithm (suc h as those presen ted in the ab o v e list) can b e used. In this section, w e first giv e the definitions of distance, similarit y and k ernel f u nctions ( 2.3.1 ), and then giv e some examples (b y no means an exhaustive list) of su ch metrics b et wee n f eature vect ors ( 2.3.2 ) and b et we en stru ctured data ( 2.3.3 ). 20 Chapter 2. Preliminaries 2.3.1 Definitions W e start by introd ucing the defin ition of a distance fun ction. Definition 2.13 (Dista nce fun ction) . A distance o v er a set X is a pairwise function d : X × X → R wh ic h satisfies the follo wing p rop erties ∀ x, x ′ , x ′′ ∈ X : 1. d ( x, x ′ ) ≥ 0 (nonn egativit y), 2. d ( x, x ′ ) = 0 if and only if x = x ′ (iden tit y of indiscernib les), 3. d ( x, x ′ ) = d ( x ′ , x ) (symmetry), 4. d ( x, x ′′ ) ≤ d ( x, x ′ ) + d ( x ′ , x ′′ ) (triangle inequ alit y). A pseudo-distanc e satisfies the prop e rties of a m etric, except th at instead of p rop erty 2, only d ( x, x ) = 0 is requir ed. No te that the pr op ert y of triangle inequalit y can b e used to sp eedup learning algorithms su c h as k -NN (e.g., Mic´ o et al. , 1994 ; Lai et al. , 2007 ; W an g , 2011 ) or K -Mea ns ( Elk an , 2003 ). While a distance function is a well-defined mathematical concept, there is n o general agreemen t on the defin ition of a (dis)similarit y function, which can essent ially b e an y pairwise function. Thr oughout this thesis, w e will use the follo wing definition. Definition 2.14 (S imilarit y fun ction) . A (d is)similarit y fun ction is a p airwise function K : X × X → [ − 1 , 1]. W e s a y that K is a symmetric similarit y function if ∀ x, x ′ ∈ X , K ( x, x ′ ) = K ( x ′ , x ). A similarity fun ction should return a high score for similar inp uts and a lo w sco re for dis- similar ones (the other wa y arou n d for a dissimilarit y function). Note that (normalized) distance functions are dissimilarit y functions. Finally , a kernel is a sp ecial t yp e of similarit y fun ction, as formalized by th e follo wing definition. Definition 2.15 (Kernel function) . A symmetric similarity function K is a ke rn el if there exists a (p ossibly implicit) mapping fun ction φ : X → H fr om the instance space X to a Hilb ert sp ace H such that K can b e written as an in n er pro du ct in H : K ( x, x ′ ) = φ ( x ) , φ ( x ′ ) . Equiv alen tly , K is a k ernel if it is p ositiv e semi-definite (PSD), i.e., n X i =1 n X j =1 c i c j K ( x i , x j ) ≥ 0 for all fin ite sequences of x 1 , . . . , x n ∈ X and c 1 , . . . , c n ∈ R . Chapter 2. Preliminaries 21 Kernel functions are a key comp o nent of k ern el m etho ds such as SVM, b eca use they can implicitly allo w chea p inner pro d uct compu tations in v ery high-dimensional spaces (this is kn own as the “k ernel tric k”) and b ring an elegan t theory b ased on Repro du cing Kernel Hil b ert Spaces (RKHS). Note that th ese adv an tages disapp ear w h en u s ing an arbitrary non-PSD simila rity function instead of a kernel, and the conv ergence of the k ernel-based algorithm ma y not ev en b e guaran teed in this case. 7 2.3.2 Some Metr ic s betw een F eature V ectors Mink o wski distances Mi nko wski d istances are a family of distances induced b y L p norms. F or p ≥ 1, d p ( x , x ′ ) = k x − x ′ k p = d X i =1 | x i − x ′ i | p ! 1 /p . (2.2) F rom ( 2.2 ) w e can reco ver three widely used d istances: • When p = 1, we get the Manhattan distance: d man ( x , x ′ ) = k x − x ′ k 1 = d X i =1 | x i − x ′ i | . • When p = 2, we get the “ordinary” Euclidean distance: d euc ( x , x ′ ) = k x − x ′ k 2 = d X i =1 | x i − x ′ i | 2 ! 1 / 2 = q ( x − x ′ ) T ( x − x ′ ) . • When p → ∞ , we get the Chebyshev distance: d che ( x , x ′ ) = k x − x ′ k ∞ = max i | x i − x ′ i | . Note that when 0 < p < 1, d p is n ot a p rop er d istance (it vio lates the triangle inequalit y) and the corresp onding (p seudo) norm is n oncon v ex. Figure 2.4 sh o ws the corresp onding unit circles for sev eral v alues of p . Mahalanobis dista nces The M ahalanobis d istance, whic h incorp orates knowledge ab out the correlation b et wee n features, is defin ed by d Σ − 1 ( x , x ′ ) = q ( x − x ′ ) T Σ − 1 ( x − x ′ ) , 7 Some researc h h as gone into training SVM with indefinite kernels, mostly based on building a PSD kernel from the indefinite one while learning the SVM classifier. The interested reader may refer to the work of Ong et al. ( 2004 ); Luss & d’Aspremont ( 2007 ); Chen & Y e ( 2008 ); Chen et al. ( 2009 ) and references therein. 22 Chapter 2. Preliminaries p → 0 p = 0 . 3 p = 0 . 5 p = 1 p = 1 . 5 p = 2 p → ∞ Figure 2.4: Minko wski distances: unit circ le s for v ar ious v alues of p . where x and x ′ are random vec tors from the same distribution with co v ariance matrix Σ . The term Mahalanobis distance is also used t o refer to the follo win g generaliza tion of the original d efinition, sometimes referred to as generalized quadratic distances ( Nielsen & Nock , 2009 ): d M ( x , x ′ ) = q ( x − x ′ ) T M ( x − x ′ ) , where M ∈ S d + . S d + denotes the cone of symmetric PS D d × d real-v alued matrices. M ∈ S d + ensures that d M is a pseud o-distance. When M is the iden tit y matrix, w e reco ver the Eu clidean d istance. Otherwise, using Ch olesky decomp osition, one can rewrite M as L T L , w h ere L ∈ R k × d , where k is th e rank of M . Hence: d M ( x , x ′ ) = q ( x − x ′ ) T M ( x − x ′ ) = q ( x − x ′ ) T L T L ( x − x ′ ) = q ( Lx − Lx ′ ) T ( Lx − Lx ′ ) . Th us, a Mahalanobis distance implicitly co rr esp onds to compu ting the Eu clidean dis- tance after the linear pro jection of the data defined by L . Note that if M is lo w-rank, i.e., r ank( M ) = r < d , then it indu ces a linear pro j ection of the data into a space of lo wer dimension r . It th us all o ws a more compact represen tation of the data and cheaper distance computations, esp ecia lly when the original feature space is high-dimensional. Because of these n ice p rop erties, learning Mahalanobis distance has attracted a lot of in terest and is a ma jor comp onent of metric learning (see S ection 3.2.1 ). Cosine similarity The co sine similarit y m easur es th e cosine of the a ngle b e t wee n tw o instances, and can b e computed as K cos ( x , x ′ ) = x T x ′ k x k 2 k x ′ k 2 . The cosine similarit y is widely us ed in data mining, in p articular in text r etriev al ( Baeza- Y ates & Rib eiro-Neto , 1999 ) and more recentl y in image retriev al (see for in s tance S ivic & Zisserman , 2009 ) when data are represente d as term v ectors ( Salton et al. , 1975 ). Chapter 2. Preliminaries 23 Bilinear similarity The bilinear similarit y is r elated to the cosine similarit y bu t does not includ e n ormalizatio n by the norms of the inputs and is parameterized b y a matrix M : K M ( x , x ′ ) = x T Mx ′ , where M ∈ R d × d is not requir ed to b e PSD nor symmetric. The b ilinear similarit y has b een used f or instance in image retriev al ( Deng et al. , 2011 ). When M is th e iden tit y matrix, K M amoun ts to an unnormalized cosine similarity . The bilinear s im ilarity has t w o adv anta ges. F irst, it is efficien tly computable for sparse in puts: if x and x ′ ha v e k 1 and k 2 nonzero features, K M ( x , x ′ ) can b e compu ted in O ( k 1 k 2 ) time. Seco nd , unlik e Mink o wski distance, Mahalanobis d istances a nd the cosine similarit y , it can be ea sily used as a simila rity measure b et we en instance s o f differen t dimension (for example, a do cument and a query) by choosing a nonsquare matrix M . A ma jor con tr ib ution of this th esis is to pr op ose a n o v el metho d for learning a bilinear similarit y (Chapter 6 ). Linear kernel The linear k ernel is simply the in ner pro du ct in the original sp ace X : K lin ( x , x ′ ) = x , x ′ = x T x ′ . In other wo rds, the corresp onding φ is an identit y map: ∀ x ∈ X , φ ( x ) = x . Note that K lin corresp onds to the bilinear similarit y with M = I . P olynomial kernels Pol ynomial kernels are defin ed as: K deg ( x , x ′ ) = ( x , x ′ + 1) deg , where deg ∈ N . It can b e sho wn that K deg implicitly pr o ject s an instance i nto the nonlinear space H of all monomials of degree up to deg . Gaussian k ernel The Gaussian ke rnel, also known as the RBF k ernel, i s a widely used k ernel defin ed b y K g aus ( x , x ′ ) = exp − k x − x ′ k 2 2 2 σ 2 , where σ 2 > 0 is a wid th parameter. F or this k ernel, it can b e sh o wn th at the c orre- sp ond ing implicit nonlinear pro jection space H is infin ite-dimensional. 2.3.3 Some Metr ic s betw een Structured Data Hamming distance The Hamming distance is a d istance b et wee n strings of ident ical length and is equal to th e num b er of p osit ions at which the symbols differ. It h as b een 24 Chapter 2. Preliminaries C $ a b $ 0 2 10 a 2 0 4 b 10 4 0 T able 2.3: Example of edit cost matrix C . Here, Σ = { a , b } . used mostly for b inary strin gs and is defi ned by d ham ( x , x ′ ) = |{ i : x i 6 = x ′ i }| . String edit distance The strin g ed it distance ( Lev ensht ein , 1966 ) is a d istance b e- t w een strin gs of p ossib ly differen t length built from an alphab et Σ. I t is b ased on three elemen tary edit op er ations: inser tion, delet ion a nd sub stitution of a symbol. I n the more general version, eac h op eration has a sp ec ific cost, gathered in a nonnegativ e ( | Σ | + 1) × ( | Σ | + 1) matrix C (the additional ro w and column accoun t for insertion and d eletion costs r esp ectiv ely). A sequence of op erations transforming a strin g x into a string x ′ is called an edit scr ip t. The edit distance b et we en x and x ′ is defin ed as the cost of the c h eap est edit script that turn s x in to x ′ and can b e computed in O ( | x | · | x ′ | ) time b y dynamic p rogramming. 8 The cl assic edit distance , known as the Lev enshtein distance, uses a unit cost matrix and th us corresp onds to the minim um num b er of op erations turning one string in to another. F or ins tance, the Lev ensht ein distance b et we en abb and aa is equal to 2, since turning abb int o aa requires at le ast 2 op erati ons (e.g., su bstitution of b with a and d eletion of b ). On the other hand , usin g the cost matrix given in T able 2.3 , the edit distance b et wee n ab b and a a is equal to 10 (deletion of a and t w o substitutions of b with a is the c heap est edit script). Using task-sp ecific costs is a k ey ingredien t to the su ccess of the edit distance in many applications. F or some p roblems suc h as handwr itten c haracter recognition ( Mic´ o & Oncina , 1998 ) or protein alignmen t ( Da yhoff et al. , 1978 ; Henik off & Heniko ff , 1992 ), relev an t cost matrices ma y b e a v ailable. But a m ore general solution consists in auto- matically learning the cost matrix from data, as w e shall see in Section 3.3 .1 . On e of the con tributions of this thesis is to prop ose a new edit cost learning metho d (Chapter 5 ). Sequence alignmen t Sequence alignmen t is a wa y of compu ting th e similarit y b e- t w een t w o strings, mostl y used in b ioinformatics to iden tify r egions of similarit y in DNA or p rotein sequen ces ( Moun t , 2004 ). It corresp onds to th e score of the b est align- men t. T h e s core of a n alignmen t is based on t he same elemen tary op eratio ns as the 8 Note that in the case of strings of equal length, the ed it d istance is u pp er b ounded by th e Hamming distance. Chapter 2. Preliminaries 25 (a) (b) (c) Figure 2 .5: Strategies to delete a node within a tre e: (a) original tree, (b) after deletion of the no de as defined b y Zhang & Shasha, and (c) after deletion of the

- no de as defined by Selko w. edit distance and on a score matrix f or su bstitutions, b ut u ses a (linear or affin e) gap p enalt y function instead of insertion and deletion costs. Th e most p rominent sequence alignmen t measur es are the Needleman-W un sc h score ( Needleman & W unsch , 1970 ) for global alignments and the S mith-W aterman score ( Smith & W aterman , 1981 ) for lo cal alignmen ts. T hey can b e compu ted by dynamic p rogramming. T ree edit distance Because of the g rowing in terest in applications that naturally in v olv e tree-structured data (suc h as the secondary structure of RNA in b iology , XML do cuments on the web or parse tree s in natural language pro cessing), s everal w orks ha v e extended th e string edit distance to trees, r esorting to the same elementa ry edit op erations (see Bi lle , 2005 , for a su rv ey on the matter). There exist t w o main v arian ts of the tree edit distance that differ in the wa y the deletion of a no de is handled. In Zhang & Shasha ( 1989 ), when a no de is delet ed all its c hildren are connected to its father. The b est algorithms for computing this distance hav e an O ( n 3 ) worst-case complexit y , where n is the num b er of no d es of the largest tree (see P a wlik & Augsten , 2011 , for an empirical ev aluation of several algorithms). Another v arian t is due to Selk o w ( 1977 ), where insertions and deletions are restricted to the lea v es of the tree. Suc h a distance is relev an t to sp ecific applications. F or instance, d eleting a

- tag (i.e., a n on leaf no de) of an un ordered list in an HTML do cument would r equire the iterativ e deletion of the

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment