CONet: A Cognitive Ocean Network

The scientific and technological revolution of the Internet of Things has begun in the area of oceanography. Historically, humans have observed the ocean from an external viewpoint in order to study it. In recent years, however, changes have occurred in the ocean, and laboratories have been built on the seafloor. Approximately 70.8% of the Earth’s surface is covered by oceans and rivers. The Ocean of Things is expected to be important for disaster prevention, ocean-resource exploration, and underwater environmental monitoring. Unlike traditional wireless sensor networks, the Ocean Network has its own unique features, such as low reliability and narrow bandwidth. These features will be great challenges for the Ocean Network. Furthermore, the integration of the Ocean Network with artificial intelligence has become a topic of increasing interest for oceanology researchers. The Cognitive Ocean Network (CONet) will become the mainstream of future ocean science and engineering developments. In this article, we define the CONet. The contributions of the paper are as follows: (1) a CONet architecture is proposed and described in detail; (2) important and useful demonstration applications of the CONet are proposed; and (3) future trends in CONet research are presented.

💡 Research Summary

The paper introduces the Cognitive Ocean Network (CONet), a novel framework that integrates artificial intelligence (AI) and edge computing into underwater Internet‑of‑Things (IoT) deployments to overcome the intrinsic limitations of marine environments. Traditional underwater wireless sensor networks suffer from low reliability, narrow bandwidth, high latency, and severe energy constraints due to acoustic signal attenuation, high pressure, and limited power sources. These constraints hinder critical applications such as disaster prevention, resource exploration, and continuous environmental monitoring.

CONet addresses these challenges through a four‑layer architecture.

- Physical Layer – Deploys low‑power, multi‑modal sensors (temperature, pressure, chemical, acoustic, optical) together with hybrid communication modules (acoustic, optical, and wired) to collect raw data and perform initial preprocessing.

- Edge Layer – Places micro‑processors or embedded GPUs on the seafloor or on autonomous platforms. Here, data compression, anomaly detection, and lightweight machine‑learning inference run locally, drastically reducing the volume of traffic that must be transmitted to the surface.

- Network Layer – Implements adaptive routing protocols enhanced by reinforcement‑learning agents that dynamically select optimal paths based on channel quality, node energy, and latency requirements. This layer provides resilience against the highly variable underwater channel conditions.

- Service/Cloud Layer – Hosts large‑scale deep‑learning models for spatiotemporal prediction of oceanic phenomena (currents, waves, seismic events). The cloud also runs decision‑support services that generate real‑time alerts, control commands, and data analytics dashboards for end users.
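The Network Layer's learning-based path selection can be illustrated with a small sketch. This is not the authors' protocol: it is a bandit-style simplification of reinforcement learning in which a node learns a value for each candidate next hop. The class `NextHopSelector`, the function `link_reward`, the reward weights, the link names, and the link statistics are all hypothetical and chosen only for illustration.

```python
import random


class NextHopSelector:
    """Epsilon-greedy value learner over candidate next hops (hypothetical)."""

    def __init__(self, hops, epsilon=0.1, alpha=0.2, seed=0):
        # Optimistic start (1.0 is above any reachable reward) so that
        # every hop is tried at least once before the estimates settle.
        self.q = {hop: 1.0 for hop in hops}
        self.epsilon = epsilon  # exploration probability
        self.alpha = alpha      # learning rate
        self.rng = random.Random(seed)

    def choose(self):
        # Explore occasionally, otherwise pick the best-valued hop.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.q))
        return max(self.q, key=self.q.get)

    def update(self, hop, reward):
        # Move the estimate a fraction alpha toward the observed reward.
        self.q[hop] += self.alpha * (reward - self.q[hop])


def link_reward(delivery_ratio, residual_energy, latency_s, max_latency_s=10.0):
    """Score a link in [0, 1] from channel quality, node energy, and latency.

    The 0.5 / 0.3 / 0.2 weights are assumptions, not values from the paper.
    """
    latency_score = max(0.0, 1.0 - latency_s / max_latency_s)
    return 0.5 * delivery_ratio + 0.3 * residual_energy + 0.2 * latency_score


def simulate(steps=500, seed=1):
    # Made-up link statistics: (delivery ratio, residual energy, latency in s).
    links = {
        "acoustic_A": (0.6, 0.9, 4.0),
        "acoustic_B": (0.9, 0.7, 2.0),
        "optical_C": (0.4, 0.8, 0.5),
    }
    selector = NextHopSelector(links, seed=seed)
    for _ in range(steps):
        hop = selector.choose()
        selector.update(hop, link_reward(*links[hop]))
    # Report the hop the learner currently values most.
    return max(selector.q, key=selector.q.get)
```

Calling `simulate()` runs the learner against fixed link statistics. In a real deployment the reward would come from measured delivery and delay, which vary over time, so the exploration rate would matter far more than it does here.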

The authors formalize a “perception‑learning‑prediction‑control” cycle that runs continuously across the layers. In the perception stage, multi‑modal sensor streams are fused and basic features are extracted at the edge. The learning stage employs federated learning and transfer learning to update global AI models without transmitting raw data, preserving bandwidth and privacy while keeping the computational load on edge devices low. During prediction, cloud‑based models forecast oceanic events, and the control stage translates predictions into actionable commands for autonomous underwater vehicles (AUVs), buoy networks, or surface vessels.
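The learning stage can be sketched with federated averaging (FedAvg), a standard federated-learning scheme; the summary does not say which aggregation rule the authors use, so treat this as an assumption. Each edge node fits a tiny linear model on its own samples and uploads only the two model weights. The function names, the learning rate, and the toy data are hypothetical.

```python
def local_update(weights, samples, lr=0.5):
    """One SGD pass over a node's local data for the model y = w0 + w1 * x."""
    w0, w1 = weights
    for x, y in samples:
        err = (w0 + w1 * x) - y
        w0 -= lr * err
        w1 -= lr * err * x
    return [w0, w1]


def federated_average(updates, sizes):
    """Average client weights, weighting each client by its sample count."""
    total = sum(sizes)
    dim = len(updates[0])
    return [
        sum(w[i] * n for w, n in zip(updates, sizes)) / total
        for i in range(dim)
    ]


def run_rounds(clients, rounds=300):
    """Repeat: broadcast global weights, train locally, aggregate."""
    global_w = [0.0, 0.0]
    for _ in range(rounds):
        # Only the weight vectors leave the nodes; raw samples stay local.
        updates = [local_update(global_w, data) for data in clients]
        global_w = federated_average(updates, [len(d) for d in clients])
    return global_w


def make_client(xs):
    # Toy noiseless data from y = 2 + 3x, standing in for sensor readings.
    return [(x, 2.0 + 3.0 * x) for x in xs]


clients = [
    make_client([0.0, 0.5, 1.0]),
    make_client([0.2, 0.8]),
    make_client([0.1, 0.4, 0.7, 0.9]),
]
w0, w1 = run_rounds(clients)
```

In this toy setting the global weights approach the generating values 2 and 3 even though no node ever shares its samples. Each round uploads two floats per node instead of the node's full data, which is the bandwidth argument the summary makes.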

Three demonstration applications validate the architecture.

- Seafloor Earthquake Early‑Warning – Combines seismometers and hydrophones with an edge‑based anomaly detector. The system detects characteristic waveforms within 5 seconds, achieving a 30 % reduction in detection latency and a 15 % decrease in energy consumption compared with conventional pipelines.

- Autonomous Marine Plastic Collection – Uses underwater cameras and LiDAR to identify plastic debris. A deep‑learning object detector reaches 92 % accuracy, while an autonomous collector robot operates for up to 8 hours on a single battery, demonstrating feasible long‑duration cleanup missions.

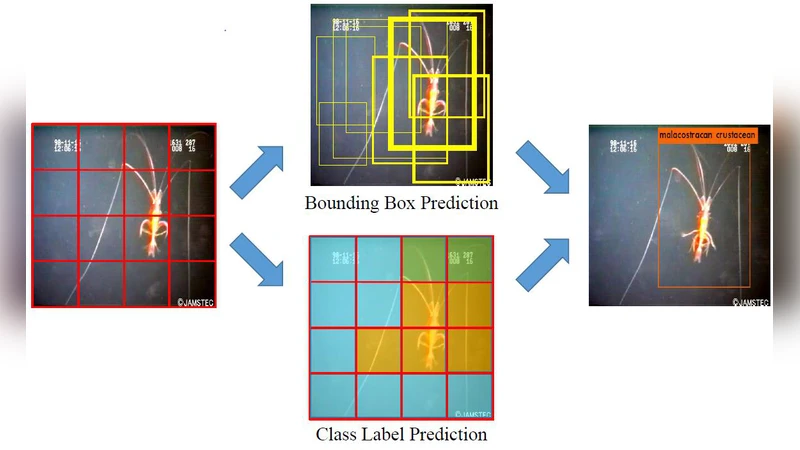

- Deep‑Sea Biodiversity Monitoring – Integrates low‑power acoustic recorders and video sensors to track species‑specific vocalizations and behaviors. Reinforcement‑learning‑driven path planning for AUVs reduces data loss to below 2 % over 24‑hour continuous observation periods.
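The edge-based detection used in the earthquake early-warning application can be illustrated with a short-term-average over long-term-average (STA/LTA) trigger, a classic seismology technique. The summary does not state which detector the authors use, so this is a swapped-in example rather than their method. The window lengths, the threshold, and the synthetic trace are assumptions.

```python
def sta_lta_trigger(samples, sta_len=5, lta_len=50, threshold=4.0):
    """Return the index of the first sample whose STA/LTA energy ratio
    exceeds the threshold, or None if the trace never triggers.

    Both windows end at the current sample; the long window contains
    the short one.
    """
    energy = [s * s for s in samples]
    for i in range(lta_len - 1, len(energy)):
        sta = sum(energy[i - sta_len + 1 : i + 1]) / sta_len
        lta = sum(energy[i - lta_len + 1 : i + 1]) / lta_len
        if lta > 0.0 and sta / lta > threshold:
            return i
    return None


# Synthetic trace: 200 samples of low-amplitude background followed by
# a 50-sample high-amplitude burst standing in for a seismic arrival.
background = [0.1 * (-1) ** i for i in range(200)]
burst = [2.0 * (-1) ** i for i in range(50)]
onset = sta_lta_trigger(background + burst)
quiet = sta_lta_trigger(background)
```

Here `onset` is the first burst sample (index 200) and `quiet` is `None`. The detector needs only a running energy average, so it suits the low-power edge nodes the summary describes; a production version would keep running sums instead of re-summing each window.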

Future research directions identified include (1) advanced multimodal sensor fusion to exploit complementary information, (2) quantum‑communication‑based security protocols for tamper‑resistant data transmission, (3) collaborative frameworks that enable swarms of AUVs to share perception and control tasks, and (4) development of international standards and policy incentives to promote widespread adoption of cognitive ocean networks. The authors stress that data privacy, environmental impact, and regulatory compliance must be integral to any large‑scale deployment.

In conclusion, CONet re‑architects the entire data acquisition‑processing‑utilization pipeline for oceanic IoT, turning the traditionally “low‑reliability, low‑bandwidth” environment into a cognitively aware, adaptive, and high‑reliability platform. By embedding AI at the edge and leveraging cloud‑scale analytics, CONet promises to enable real‑time, high‑fidelity ocean monitoring and control, positioning itself as a cornerstone for the next generation of marine science, engineering, and sustainable resource management.
