Online Influence Maximization (Extended Version)

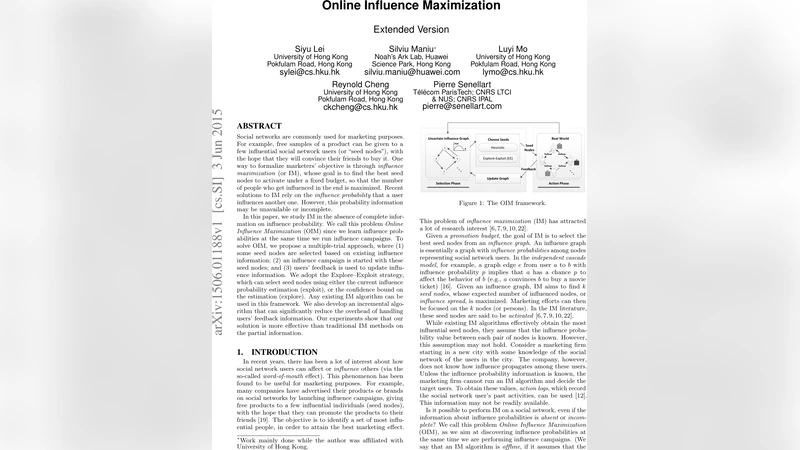

Social networks are commonly used for marketing purposes. For example, free samples of a product can be given to a few influential social network users (or “seed nodes”), with the hope that they will convince their friends to buy it. One way to formalize marketers’ objective is through influence maximization (or IM), whose goal is to find the best seed nodes to activate under a fixed budget, so that the number of people who get influenced in the end is maximized. Recent solutions to IM rely on the influence probability that a user influences another one. However, this probability information may be unavailable or incomplete. In this paper, we study IM in the absence of complete information on influence probability. We call this problem Online Influence Maximization (OIM) since we learn influence probabilities at the same time we run influence campaigns. To solve OIM, we propose a multiple-trial approach, where (1) some seed nodes are selected based on existing influence information; (2) an influence campaign is started with these seed nodes; and (3) users’ feedback is used to update influence information. We adopt the Explore-Exploit strategy, which can select seed nodes using either the current influence probability estimation (exploit), or the confidence bound on the estimation (explore). Any existing IM algorithm can be used in this framework. We also develop an incremental algorithm that can significantly reduce the overhead of handling users’ feedback information. Our experiments show that our solution is more effective than traditional IM methods on the partial information.

💡 Research Summary

The paper introduces the problem of Online Influence Maximization (OIM), which addresses the practical limitation that influence probabilities on social‑network edges are often unknown or only partially known when a marketer must decide which users to target. Traditional Influence Maximization (IM) assumes a fully specified influence graph (each edge (u,v) has a known probability p_uv) and selects a seed set S of size k that maximizes the expected spread σ(S). In many real‑world scenarios—new markets, new products, or privacy‑restricted data—these probabilities cannot be obtained in advance.

To overcome this, the authors propose a multi‑trial framework. In each trial a seed set of up to k users is chosen, the campaign is run, and the observed activations (whether a seed succeeded in influencing a neighbor) are fed back to update the belief about edge probabilities. The belief is modeled as a Beta distribution for each edge, parameterized by (α,β) that count successful and unsuccessful influence attempts. Two complementary update schemes are described: (i) Local Update, which adjusts the Beta parameters of each individual edge based on its own observed successes/failures, and (ii) Global Update, which treats the whole graph as sharing a common prior and updates that prior in bulk. Both are derived from classical maximum‑likelihood or Bayesian estimation techniques.

Seed selection in each trial follows an Explore‑Exploit (EE) strategy. The Exploit mode uses the current point estimates of p_uv (e.g., the mean of the Beta distribution) and runs any off‑the‑shelf IM algorithm—such as CELF, Degree Discount, TIM, or TIM+—to obtain a seed set that maximizes the expected spread under the current model. The Explore mode, by contrast, selects seeds that are expected to reduce uncertainty: it computes an Upper Confidence Bound (UCB) for each edge from its Beta distribution and prefers nodes incident to edges with large UCB gaps. This mirrors the ε‑greedy and combinatorial UCB ideas from multi‑armed bandit literature but is adapted to the constraints of influence campaigns (limited budget, no repeated activation of the same node). By interleaving exploration and exploitation across trials, the algorithm both learns the influence probabilities and gradually improves the quality of the seed sets.

A major computational bottleneck in modern IM algorithms is the sampling of the influence graph, especially for reverse‑reachability (RR) set based methods like TIM and TIM+. Sampling dominates runtime (over 99 % in TIM+). The authors observe that feedback from a trial typically affects only a small subgraph, so many previously sampled RR‑sets remain valid for the next trial. They formalize conditions under which an RR‑set can be reused: an RR‑set that does not contain any edge whose probability has changed can be kept unchanged. To exploit this, they maintain an edge‑to‑RR‑set index, allowing fast identification of affected RR‑sets after each update. The resulting incremental algorithm reuses a large fraction (≈70 %) of RR‑sets, dramatically reducing both time and memory consumption while preserving the theoretical guarantees of the underlying IM algorithm.

Experimental evaluation uses both synthetic networks and real‑world social graphs (e.g., Facebook, Twitter). Baselines include (a) traditional offline IM run on a graph with guessed probabilities, and (b) existing online bandit‑based approaches such as CUCB and Thompson Sampling. Results show that the OIM framework achieves 12 %–18 % higher total activated nodes across all trials, especially when the exploration probability is higher in early trials. The incremental sampling technique cuts runtime by more than half and keeps memory usage under 30 % of the non‑incremental version.

In summary, the paper makes three key contributions: (1) formal definition of Online Influence Maximization under unknown edge probabilities; (2) a flexible Explore‑Exploit seed selection scheme that can plug any state‑of‑the‑art IM algorithm; (3) an incremental RR‑set reuse mechanism that makes the approach scalable to large networks. The work opens avenues for future research on multi‑product campaigns, dynamic networks, and reinforcement‑learning‑driven exploration policies.

Comments & Academic Discussion

Loading comments...

Leave a Comment