Practical Implementation of a Deep Random Generator

Thibault de Valroger (*)

(*) See contact and information about the author at last page

Abstract

We introduced in former work [5] the concept of Deep Randomness and its interest for the design of Unconditionally Secure communication protocols. In particular, we gave an example of such a protocol and outlined how to design a Deep Random Generator associated with that protocol. Deep Randomness is a form of randomness in which, at each draw of a random variable, not only is the result unpredictable but the distribution is also unknown to any observer. In this article, we recall the formal definition of Deep Randomness, and we present two practical algorithmic methods to implement a Deep Random Generator within a classical computing resource. We also discuss their performance and their parameters.

Key words. Deep Random, Random Generator, Perfect Secrecy, Unconditional Security, Prior Probabilities, Information Theoretic Security

I. Introduction

Prior probabilities theory

Before presenting the Deep Random assumption, it is necessary to introduce Prior probability theory.

The art of prior probabilities consists in assigning probabilities to a random event in a context of partial or complete uncertainty regarding the probability distribution governing that random event. The first author to have rigorously considered this question is Laplace [10], who proposed the famous principle of insufficient reason, by which an observer who does not know the prior probability of occurrence of 2 events should consider them as equally likely. In other words, if a random variable $V$ can take several values $v_1, \ldots, v_n$, and if no information regarding the prior probabilities $P(V = v_i)$ is available to the observer, he should assign them $P(V = v_i) = 1/n$ in any attempt to produce inference from an experiment of $V$.

Several authors observed that this principle can lead to conclusions that contradict common sense in certain cases where some knowledge is available to the observer but not reflected in the assignment principle.

If we denote by $\mathfrak{I}_V$ the set of all prior information available to the observer regarding the probability distribution of a certain random variable $V$ ('prior' meaning before having observed any experiment of that variable), and by $\mathfrak{I}_{<}$ any public information available regarding an experiment of $V$, it is then possible to define the set of possible distributions that are compatible with the information $\mathfrak{I} = \mathfrak{I}_V \cup \mathfrak{I}_{<}$ regarding an experiment of $V$; we denote this set of possible distributions as:

$$\mathfrak{D}_{\mathfrak{I}}$$

The goal of Prior probability theory is to provide tools that enable rigorous inference reasoning in a context of partial knowledge of probability distributions. A key idea for that purpose is to consider groups of transformations, applicable to the sample space of a random variable $V$, that do not change the global perception of the observer. In other words, for any transformation $\tau$ of such a group, the observer has no information enabling him to privilege $P(V = v \mid \mathfrak{I})$ rather than $P(V = \tau(v) \mid \mathfrak{I})$ as the actual conditional distribution, where $\mathfrak{I}$ is the information available to him. This idea was developed by Jaynes [7] in order to avoid the incoherence brought in some cases by an imprudent application of the Laplace principle.

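We will consider only finite groups of transformations, because one manipulates only discrete and bounded objects in digital communications. We define the acceptable groups $G$ as the ones fulfilling the 2 conditions below:

Stability - For any distribution $\varphi$ in the set $\mathfrak{D}_{\mathfrak{I}}$ of possible distributions, and for any transformation $\tau \in G$, then $\varphi \circ \tau \in \mathfrak{D}_{\mathfrak{I}}$

Convexity - Any distribution that is invariant by action of $G$ does belong to $\mathfrak{D}_{\mathfrak{I}}$

It can be noted that the set of distributions that are invariant by action of $G$ is exactly:

$$\left\{ \frac{1}{|G|} \sum_{\tau \in G} \varphi \circ \tau \;\middle|\; \varphi \in \mathfrak{D}_{\mathfrak{I}} \right\}$$

The Convexity condition is justified by the fact that, in the absence of information enabling the observer to privilege $\varphi$ from $\varphi \circ \tau$, he should choose equivalently one or the other distribution, but then of course the average distribution

$$\frac{1}{|G|} \sum_{\tau \in G} \varphi \circ \tau$$

should still belong to the set of possible distributions knowing $\mathfrak{I}$.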

The set of acceptable groups as defined above is denoted: $\Gamma_{\mathfrak{I}}$
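The averaging construction used to justify the Convexity condition can be checked numerically. The sketch below is only an illustration under stated assumptions: the sample space {0, 1, 2, 3}, the cyclic shifts, the distribution `phi` and all function names are hypothetical and do not come from the paper. It averages a distribution over a finite group of transformations and verifies that the result is invariant by action of that group.

```python
from fractions import Fraction

def compose(phi, tau):
    """Return the distribution phi∘tau, i.e. v -> phi[tau[v]]."""
    return tuple(phi[tau[v]] for v in range(len(phi)))

def group_average(phi, group):
    """Average phi∘tau over every transformation tau of a finite group."""
    return tuple(
        sum(phi[tau[v]] for tau in group) / len(group)
        for v in range(len(phi))
    )

def is_invariant(phi, group):
    """True if phi∘tau == phi for every tau in the group."""
    return all(compose(phi, tau) == phi for tau in group)

def shift(k, n=4):
    """Hypothetical transformation: cyclic shift by k on {0, ..., n-1}."""
    return tuple((v + k) % n for v in range(n))

cyclic_group = [shift(k) for k in range(4)]  # all four shifts
sub_group = [shift(0), shift(2)]             # identity and shift by 2

# Arbitrary (assumed) distribution on the four values; exact arithmetic.
phi = (Fraction(1, 2), Fraction(1, 4), Fraction(1, 8), Fraction(1, 8))

avg_cyclic = group_average(phi, cyclic_group)
avg_sub = group_average(phi, sub_group)

print(avg_cyclic)
print(avg_sub)
print(is_invariant(phi, sub_group), is_invariant(avg_sub, sub_group))
```

With these assumed inputs, averaging over the full cyclic group gives the uniform distribution (1/4 on each value), while averaging over the two-element subgroup gives (5/16, 3/16, 5/16, 3/16), which is invariant but not uniform. The smaller the group, the larger the set of invariant distributions.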

Let’s consider some examples.

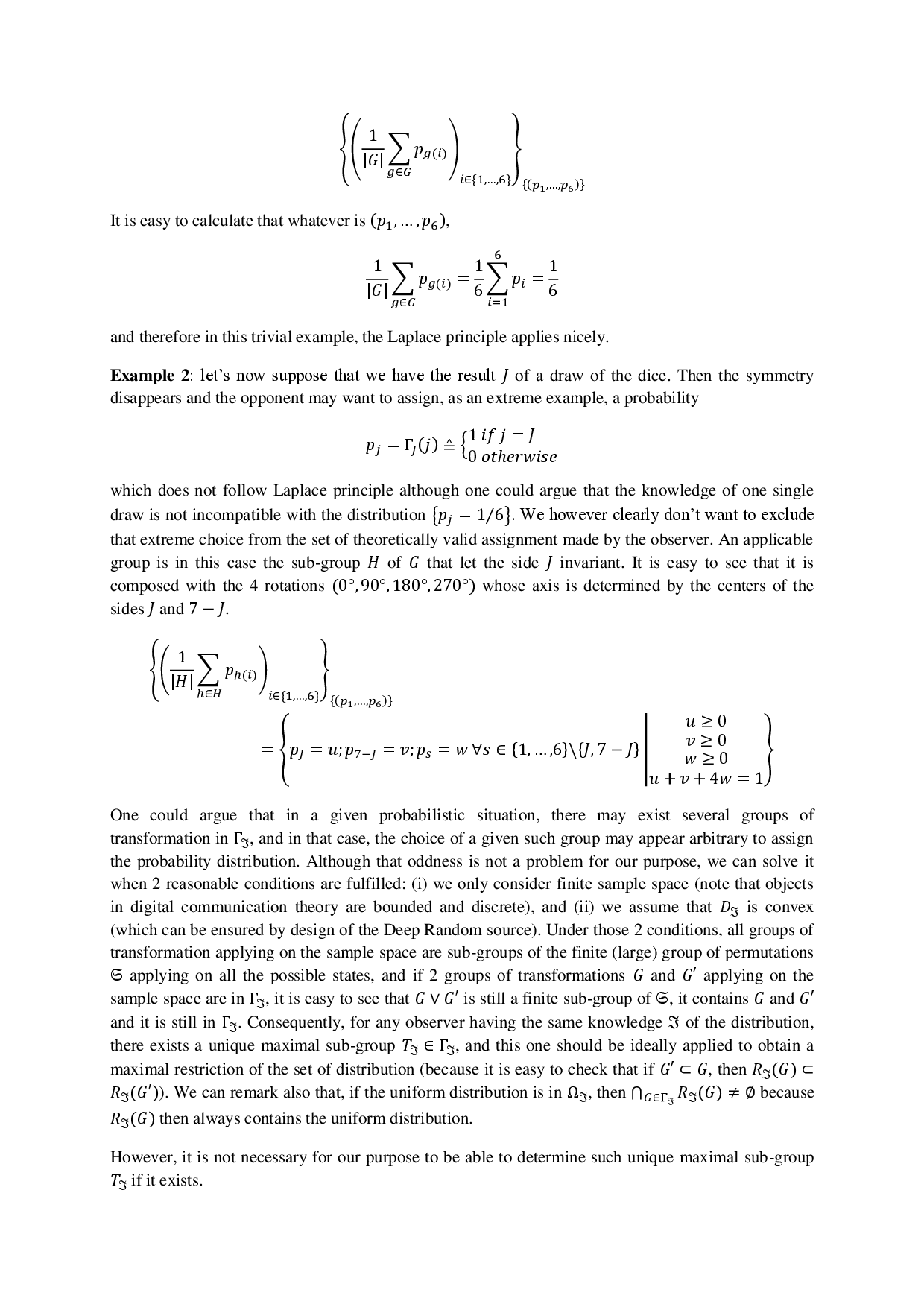

Example 1: we consider a six-sided die. We are informed that the distribution governing the probability of drawing a given side is altered, but we have no information about what that distribution actually is, and we have no available information regarding an experiment. We nevertheless want to assign an a priori probability distribution to the draw of the die. In this very simple case, it seems quite reasonable to assign an a priori probability of $1/6$ to each side. A more rigorous argument to justify this decision, based on the above, is the following: let's consider the finite group of transformations that leave the die unchanged. This group is well known: it is generated by the 90° rotations about the 3 axes, and has 24 elements. It is clear he

…(Full text truncated)…
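The group mentioned in Example 1 can be enumerated directly. The following sketch is an illustration only, since the remainder of the argument is truncated in this text: it builds the rotations of a cube as permutations of its 6 faces, starting from the three 90° axis rotations, and then averages an arbitrary biased distribution over the group. The face encoding, the function names and the `biased` distribution are assumptions made for the example.

```python
from fractions import Fraction

# Each face is identified by its outward unit normal vector (assumed encoding).
FACES = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]

def rot_x(p):
    """90° rotation about the x axis."""
    x, y, z = p
    return (x, -z, y)

def rot_y(p):
    """90° rotation about the y axis."""
    x, y, z = p
    return (z, y, -x)

def rot_z(p):
    """90° rotation about the z axis."""
    x, y, z = p
    return (-y, x, z)

def face_permutation(rotation):
    """Permutation of face indices induced by a rotation."""
    return tuple(FACES.index(rotation(f)) for f in FACES)

GENERATORS = [face_permutation(r) for r in (rot_x, rot_y, rot_z)]

def generate_group(generators):
    """Closure of the generators under composition (finite group)."""
    identity = tuple(range(len(generators[0])))
    group = {identity}
    frontier = [identity]
    while frontier:
        g = frontier.pop()
        for s in generators:
            h = tuple(s[g[i]] for i in range(len(g)))  # apply g, then s
            if h not in group:
                group.add(h)
                frontier.append(h)
    return group

rotations = generate_group(GENERATORS)

# Arbitrary (assumed) altered die: face 0 is heavily favoured.
biased = (Fraction(1, 2),) + (Fraction(1, 10),) * 5

# Average of biased∘tau over all rotations tau.
averaged = tuple(
    sum(biased[tau[v]] for tau in rotations) / len(rotations)
    for v in range(6)
)

print(len(rotations))
print(averaged)
```

Under these assumptions the closure contains 24 permutations, matching the count stated in Example 1, and the averaged distribution assigns 1/6 to every face regardless of the starting bias, because the rotations can carry any face onto any other.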
