Probery: A Probability-based Incomplete Query Optimization for Big Data

Query optimization has drawn considerable attention in big data management, especially for NoSQL databases. Approximate queries boost query performance at the cost of accuracy; for example, sampling approaches trade query completeness for efficiency. In contrast, we propose an uncertainty of query completeness, called Probability of query Completeness (PC for short). PC refers to the probability that the query results contain all records satisfying the query. For example, PC = 0.95 guarantees that no more than 5 out of 100 queries are incomplete, but it does not guarantee how incomplete they are. We trade PC for query performance, and experiments show that a small loss of PC doubles query performance. The proposed Probery (PROBability-based data quERY) adopts the uncertainty of query completeness to accelerate OLTP queries. This paper presents the data and probability models, the probability-based data placement and query processing, and the Apache Drill-based implementation of Probery. In the experiments, we first verify that the percentage of complete queries is larger than the given PC confidence in various cases, namely that the PC guarantee is valid. Then Probery is compared with Drill, Impala and Hive in terms of query performance. The results indicate that Drill-based Probery performs as fast as Drill with complete queries, while being on average 1.8x, 1.3x and 1.6x faster than Drill, Impala and Hive, respectively, with possibly complete queries.

💡 Research Summary

The paper introduces Probery, a probability-based framework that deliberately relaxes query completeness in order to accelerate big-data OLTP workloads. The core novelty is the definition of “Probability of query Completeness” (PC), which quantifies the likelihood that a query result contains all records satisfying the predicate. Unlike traditional approximate query techniques that specify an error bound (e.g., sampling error) without indicating how many qualifying rows may be missing, PC provides a direct, user-facing guarantee: a PC of 0.95 means that, over many queries, at least 95 % of them will be fully complete while at most 5 % may be incomplete, though the extent of incompleteness in those queries is not bounded.
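The PC guarantee can be read as a bound on the expected number of incomplete queries. As a sketch, assume each of $N$ queries is complete with probability at least $\mathrm{PC}$, and let $X$ be the number of incomplete queries (the symbols $N$ and $X$ are introduced here for illustration and are not taken from the paper). By linearity of expectation:

$$
\mathbb{E}[X] \;=\; \sum_{i=1}^{N} \Pr[\text{query } i \text{ is incomplete}] \;\le\; N\,(1 - \mathrm{PC})
$$

For $N = 100$ and $\mathrm{PC} = 0.95$ this gives $\mathbb{E}[X] \le 100 \times 0.05 = 5$. The bound concerns only how many queries are incomplete; it says nothing about how many records an incomplete query misses.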

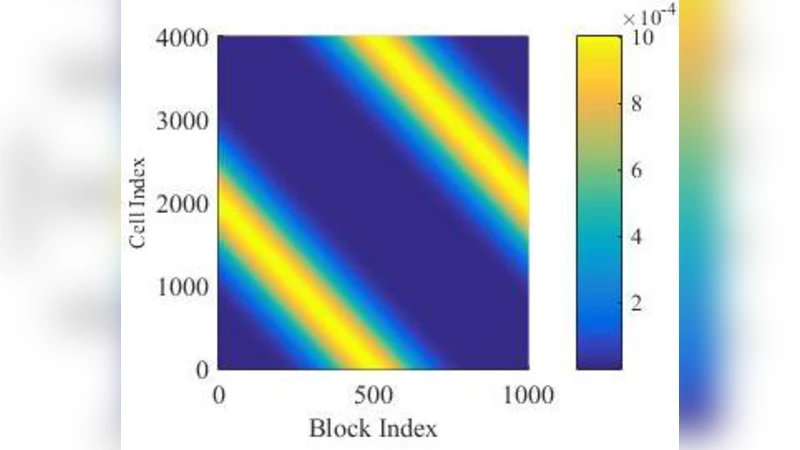

Probery’s architecture consists of two tightly coupled components: a probabilistic data placement layer and a probability‑driven query planner. During data ingestion, each record is assigned to a partition according to a pre‑computed inclusion probability distribution. This distribution is stored as metadata and ensures that the probability that a given partition contains a particular record is known in advance. Consequently, when a query is issued with a target PC, the planner can compute the minimal set of partitions whose combined inclusion probabilities meet or exceed the requested PC. The selection problem is combinatorial; the authors adopt a greedy heuristic augmented with histogram‑based approximations to achieve near‑optimal partition sets in sub‑second time, which is essential for interactive workloads.
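The partition selection step can be illustrated with a minimal sketch. It rests on simplifying assumptions that are not confirmed by the paper: the queried record lives in exactly one partition, the per-partition inclusion probabilities sum to 1, and the completeness probability of a scan is the sum of the probabilities of the scanned partitions. The function name `select_partitions` and the partition names are hypothetical, and the histogram-based approximations mentioned above are omitted.

```python
from typing import Dict, List, Tuple


def select_partitions(
    inclusion_prob: Dict[str, float], target_pc: float
) -> Tuple[List[str], float]:
    """Greedily pick partitions until their summed probability reaches target_pc.

    Assumption (illustrative only): the record is in exactly one partition,
    so the probabilities of distinct partitions simply add up.
    """
    if not 0.0 < target_pc <= 1.0:
        raise ValueError("target_pc must be in (0, 1]")

    eps = 1e-12  # tolerance for floating-point accumulation
    chosen: List[str] = []
    covered = 0.0

    # Visit partitions from most to least likely to hold the record.
    ranked = sorted(inclusion_prob.items(), key=lambda kv: kv[1], reverse=True)
    for name, prob in ranked:
        if covered >= target_pc - eps:
            break
        chosen.append(name)
        covered += prob

    if covered < target_pc - eps:
        raise ValueError("target_pc cannot be reached with the given partitions")
    return chosen, covered


if __name__ == "__main__":
    probs = {"p0": 0.50, "p1": 0.30, "p2": 0.15, "p3": 0.05}
    chosen, covered = select_partitions(probs, target_pc=0.90)
    print(chosen, round(covered, 2))
```

Under these assumptions, taking partitions in descending probability order also minimizes the number of partitions scanned. A richer model (overlapping partitions, multi-record predicates, correlated placement) would need a different combination rule and loses that property.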

The selected partitions are then handed to Apache Drill, which performs the actual scan and execution. Because only a subset of partitions is accessed, I/O volume, network traffic, and CPU usage are dramatically reduced. The system also periodically recomputes inclusion probabilities to adapt to data skew and repartitioning, ensuring that the PC guarantee remains valid over time.

Experimental evaluation uses TPC‑DS and custom workloads across a cluster of commodity nodes. The authors test several PC settings (0.90, 0.95, 0.99) and compare Probery against baseline Drill (full scan), Impala, and Hive. Key findings include: (1) PC validation – the observed proportion of fully complete queries consistently exceeds the configured PC, confirming that the probabilistic model is conservative; (2) Performance gains – for PC = 0.95, average query latency is roughly half that of a full‑scan Drill query, and Probery outperforms Impala by 30 % and Hive by 60 % on average; (3) Scalability – as the number of partitions grows, the greedy planner still selects a compact subset, keeping latency low, although when PC approaches 1.0 the benefit diminishes because almost all partitions must be read. The authors also note a modest overhead during data loading due to the random partition assignment, which is outweighed by the query‑time savings.
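The PC validation finding can be mimicked with a small Monte Carlo sketch. It does not reproduce the authors' experiments: it assumes point queries whose single matching record is placed in one partition drawn from a known distribution, and it uses a simple greedy selection by descending probability. All names and the example distribution are hypothetical.

```python
import random
from typing import List


def greedy_select(probs: List[float], target_pc: float) -> List[int]:
    """Return partition indices, most likely first, until target_pc is covered."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen: List[int] = []
    covered = 0.0
    for i in order:
        if covered >= target_pc - 1e-12:
            break
        chosen.append(i)
        covered += probs[i]
    return chosen


def observed_completeness(
    probs: List[float], target_pc: float, n_queries: int, seed: int = 0
) -> float:
    """Simulate point queries and return the fraction that were complete."""
    rng = random.Random(seed)
    scanned = set(greedy_select(probs, target_pc))
    partitions = list(range(len(probs)))
    complete = 0
    for _ in range(n_queries):
        # Assumed placement model: the record lands in one partition,
        # drawn according to the inclusion probabilities.
        location = rng.choices(partitions, weights=probs, k=1)[0]
        if location in scanned:
            complete += 1
    return complete / n_queries


if __name__ == "__main__":
    probs = [0.50, 0.30, 0.15, 0.05]
    rate = observed_completeness(probs, target_pc=0.90, n_queries=10_000)
    print(f"observed fraction of complete queries: {rate:.3f}")
```

In this toy setup the scanned partitions carry a total probability of 0.95, so the observed completeness rate sits above the requested 0.90. That mirrors the conservative behavior reported above: partition probabilities are discrete, so the selected set usually overshoots the target.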

Limitations are candidly discussed. The greedy partition selection is not guaranteed to find the optimal minimal set, especially under highly skewed inclusion probabilities. The model assumes independence between partitions, which may be violated in real‑world correlated data distributions, potentially affecting the true PC. Moreover, the approach is less advantageous for workloads that already require near‑complete results (PC ≈ 1) or for analytical queries where full scans are acceptable.

Future work proposes (a) dynamic repartitioning to rebalance inclusion probabilities as data evolves, (b) machine‑learning‑driven estimation of inclusion probabilities that can capture inter‑partition correlations, and (c) extension of the PC concept to streaming and incremental query scenarios, where guarantees must be maintained over continuously arriving data.

In summary, Probery offers a principled way to trade a user‑specified probability of completeness for substantial performance improvements. By embedding the PC guarantee into both data placement and query planning, it achieves faster response times while providing a transparent, statistically grounded assurance to users. The experimental results demonstrate that the approach is practical, outperforms several state‑of‑the‑art engines under realistic settings, and opens a new direction for probabilistic query optimization in big‑data systems.
