Hierarchical Generative Modeling for Controllable Speech Synthesis

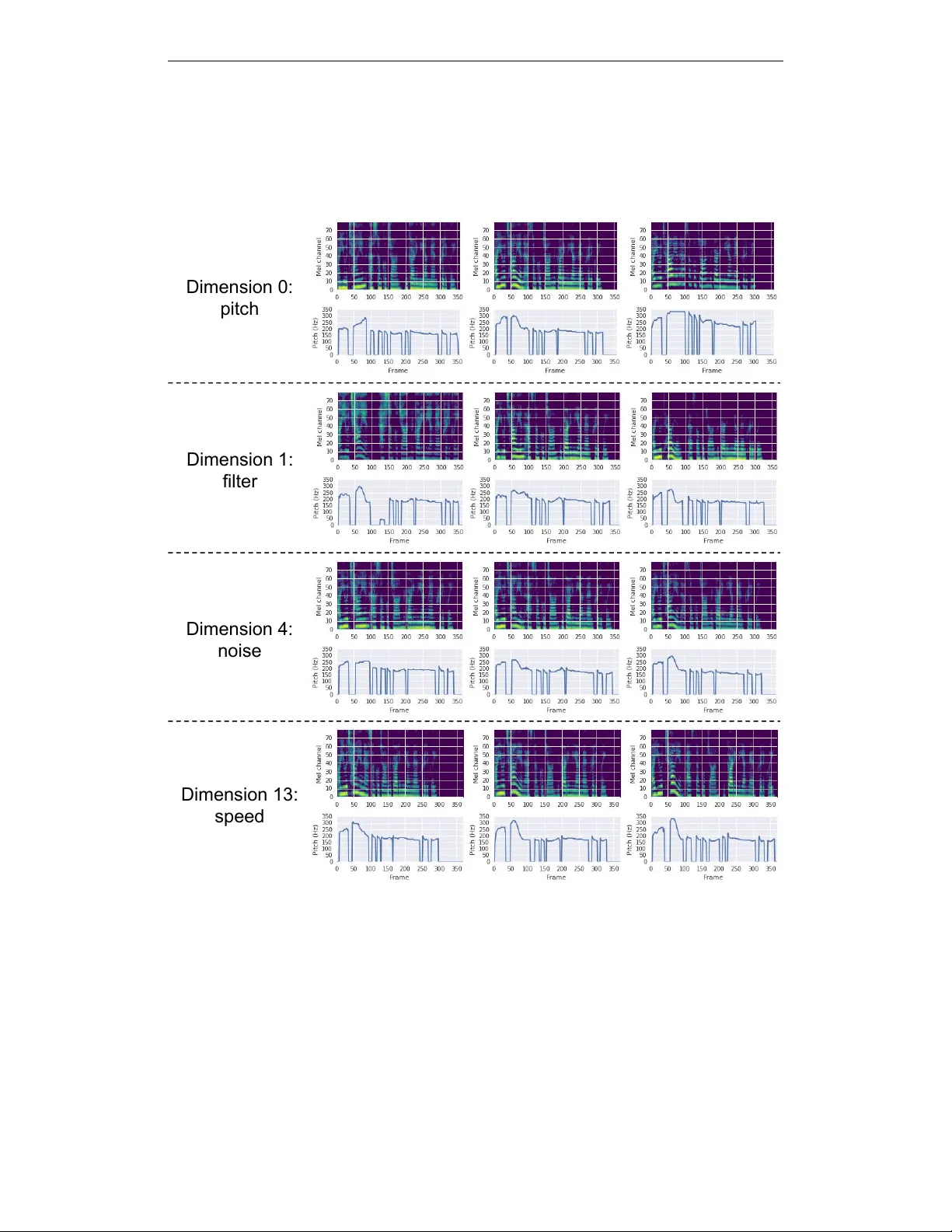

This paper proposes a neural sequence-to-sequence text-to-speech (TTS) model which can control latent attributes in the generated speech that are rarely annotated in the training data, such as speaking style, accent, background noise, and recording c…

Authors: Wei-Ning Hsu, Yu Zhang, Ron J. Weiss