Machine learning and AI research for Patient Benefit: 20 Critical Questions on Transparency, Replicability, Ethics and Effectiveness

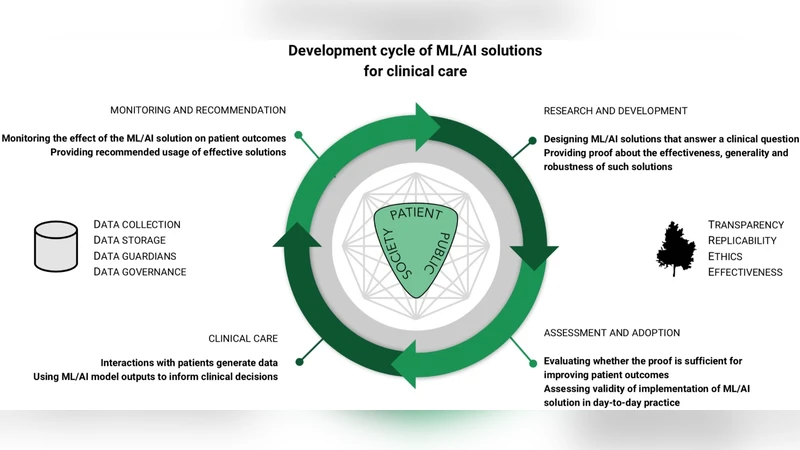

Machine learning (ML), artificial intelligence (AI) and other modern statistical methods are providing new opportunities to operationalize previously untapped and rapidly growing sources of data for patient benefit. Whilst there is a lot of promising research currently being undertaken, the literature as a whole lacks: transparency; clear reporting to facilitate replicability; exploration of potential ethical concerns; and clear demonstrations of effectiveness. There are many reasons why these issues exist, but one of the most important, for which we provide a preliminary solution here, is the current lack of ML/AI-specific best practice guidance. Although there is no consensus on what best practice looks like in this field, we believe that interdisciplinary groups pursuing research and impact projects in the ML/AI for health domain would benefit from answering a series of questions based on the important issues that exist when undertaking work of this nature. Here we present 20 questions that span the entire project life cycle, from inception, data analysis, and model evaluation, to implementation, as a means to facilitate project planning and post-hoc (structured) independent evaluation. By beginning to answer these questions in different settings, we can start to understand what constitutes a good answer, and we expect that the resulting discussion will be central to developing an international consensus framework for transparent, replicable, ethical and effective research in artificial intelligence (AI-TREE) for health.

💡 Research Summary

The paper addresses a critical gap in the rapidly expanding field of machine learning and artificial intelligence (ML/AI) applied to health care: the lack of systematic guidance that ensures research is transparent, reproducible, ethically sound, and demonstrably effective for patients. The authors argue that without such guidance, the literature suffers from opaque reporting, insufficient detail for replication, unexamined ethical risks, and weak evidence of clinical benefit. To confront these challenges, they propose a pragmatic, question‑driven framework called AI‑TREE (Artificial Intelligence‑Transparency, Replicability, Ethics, Effectiveness).

The core contribution is a set of twenty carefully crafted questions that span the entire lifecycle of an AI‑health project—from initial problem definition through data acquisition, model development, validation, deployment, and post‑implementation monitoring. Each question is designed to elicit concrete information that can be documented, shared, and independently audited. For example, early questions require explicit articulation of clinical need, stakeholder involvement, and patient engagement. Subsequent questions demand detailed provenance of data sources, quality control procedures, privacy safeguards, and labeling protocols. In the modeling phase, the framework insists on justification of algorithm choice, full disclosure of hyper‑parameter settings, and open sharing of code and trained weights.

Evaluation questions focus on both internal validation (cross‑validation, bootstrapping) and external validation (multi‑site datasets), insisting on reporting of performance metrics, confidence intervals, and statistical significance. They also require bias and fairness analyses to surface potential inequities before clinical use. Ethical and regulatory considerations are woven throughout, with mandatory documentation of informed consent processes, risk‑benefit assessments, and compliance with local and international data protection laws. Finally, the deployment and monitoring stage calls for real‑world effectiveness studies, continuous performance tracking, mechanisms for model updates, and systematic adverse event reporting.
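As an illustration of reporting a performance metric together with a confidence interval, the following is a minimal sketch of a percentile bootstrap for classification accuracy. It is not taken from the paper, which does not prescribe a particular method; the function names `accuracy` and `bootstrap_ci`, the toy labels, and the choice of 2000 resamples are all assumptions made for this example.

```python
import random


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the reference labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def bootstrap_ci(y_true, y_pred, n_resamples=2000, alpha=0.05, seed=0):
    """Point estimate and percentile bootstrap interval for accuracy.

    Hypothetical helper: resamples (label, prediction) pairs with
    replacement and takes empirical quantiles of the resampled accuracies.
    """
    rng = random.Random(seed)  # fixed seed so the result is repeatable
    n = len(y_true)
    stats = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        stats.append(accuracy([y_true[i] for i in idx],
                              [y_pred[i] for i in idx]))
    stats.sort()
    lower = stats[int((alpha / 2) * n_resamples)]
    upper = stats[int((1 - alpha / 2) * n_resamples) - 1]
    return accuracy(y_true, y_pred), lower, upper


# Toy data (illustrative only): 20 cases, 16 of them classified correctly.
y_true = [1] * 10 + [0] * 10
y_pred = [1] * 8 + [0] * 2 + [0] * 8 + [1] * 2

point, lower, upper = bootstrap_ci(y_true, y_pred)
print(f"accuracy = {point:.2f}, 95% CI = [{lower:.2f}, {upper:.2f}]")
```

Fixing the random seed and stating the number of resamples alongside the result is one way to make the reported interval reproducible by an independent evaluator. For small samples or for metrics such as AUC, other interval methods may be more appropriate.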

By answering these questions prospectively or retrospectively, research teams can generate a structured evidence base that facilitates peer review, regulatory assessment, and meta‑analysis across studies. The authors envision that accumulating such structured answers will enable the community to converge on what constitutes a “good” answer, thereby informing an international consensus on best practices. In essence, the paper offers a concrete, actionable roadmap that can elevate the rigor of AI‑driven health research, ensuring that technological advances translate into genuine patient benefit while upholding the highest standards of scientific integrity and ethical responsibility.
